Leaderboard

Popular Content

Showing content with the highest reputation on 10/19/21 in all areas

-

In this episode, hear an update on Windows 11 support for VMs on Unraid, the process for updating, and potential pitfalls. In addition, @jonp goes deep on VM gaming and how anti-cheat developers are wrongfully targeting VM users for bans.

Helpful Links:
- Expanding a vdisk
- Expanding Windows VM vdisk partitions
- Converting from seabios to OVMF

4 points

-

Please disregard this thread. I've found the zip with the USB backup, and its name couldn't be more descriptive... Regards

2 points

-

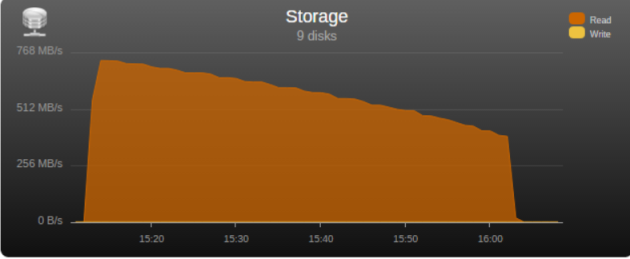

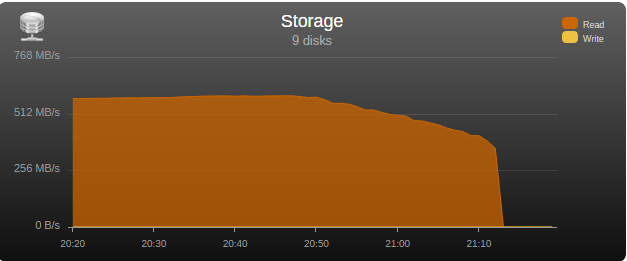

I had the opportunity to test the “real world” bandwidth of some controllers commonly used in the community, so I’m posting my results in the hope that they help some users choose a controller and others understand what may be limiting their parity check/sync speed. Note that these tests are only relevant for those operations; normal reads/writes to the array are usually limited by hard disk or network speed.

Next to each controller is its maximum theoretical throughput, followed by my results for each number of disks connected. Each result is the observed parity/read check speed using a fast SSD-only array with Unraid V6. Wattage values (e.g., 2w) are the measured controller power consumption with all ports in use.

2 Port Controllers

SIL 3132 PCIe gen1 x1 (250MB/s)
1 x 125MB/s
2 x 80MB/s

Asmedia ASM1061 PCIe gen2 x1 (500MB/s) - e.g., SYBA SY-PEX40039 and other similar cards
1 x 375MB/s
2 x 206MB/s

JMicron JMB582 PCIe gen3 x1 (985MB/s) - e.g., SYBA SI-PEX40148 and other similar cards
1 x 570MB/s
2 x 450MB/s

4 Port Controllers

SIL 3114 PCI (133MB/s)
1 x 105MB/s
2 x 63.5MB/s
3 x 42.5MB/s
4 x 32MB/s

Adaptec AAR-1430SA PCIe gen1 x4 (1000MB/s)
4 x 210MB/s

Marvell 9215 PCIe gen2 x1 (500MB/s) - 2w - e.g., SYBA SI-PEX40064 and other similar cards (possible issues with virtualization)
2 x 200MB/s
3 x 140MB/s
4 x 100MB/s

Marvell 9230 PCIe gen2 x2 (1000MB/s) - 2w - e.g., SYBA SI-PEX40057 and other similar cards (possible issues with virtualization)
2 x 375MB/s
3 x 255MB/s
4 x 204MB/s

IBM H1110 PCIe gen2 x4 (2000MB/s) - LSI 2004 chipset, results should be the same as for an LSI 9211-4i and other similar controllers
2 x 570MB/s
3 x 500MB/s
4 x 375MB/s

Asmedia ASM1064 PCIe gen3 x1 (985MB/s) - e.g., SYBA SI-PEX40156 and other similar cards
2 x 450MB/s
3 x 300MB/s
4 x 225MB/s

Asmedia ASM1164 PCIe gen3 x2 (1970MB/s) - NOTE - not actually tested, performance inferred from the ASM1166 with up to 4 devices
2 x 565MB/s
3 x 565MB/s
4 x 445MB/s

5 and 6 Port Controllers

JMicron JMB585 PCIe gen3 x2 (1970MB/s) - 2w - e.g., SYBA SI-PEX40139 and other similar cards
2 x 570MB/s
3 x 565MB/s
4 x 440MB/s
5 x 350MB/s

Asmedia ASM1166 PCIe gen3 x2 (1970MB/s) - 2w
2 x 565MB/s
3 x 565MB/s
4 x 445MB/s
5 x 355MB/s
6 x 300MB/s

8 Port Controllers

Supermicro AOC-SAT2-MV8 PCI-X (1067MB/s)
4 x 220MB/s (167MB/s*)
5 x 177.5MB/s (135MB/s*)
6 x 147.5MB/s (115MB/s*)
7 x 127MB/s (97MB/s*)
8 x 112MB/s (84MB/s*)
* PCI-X 100MHz slot (800MB/s)

Supermicro AOC-SASLP-MV8 PCIe gen1 x4 (1000MB/s) - 6w
4 x 140MB/s
5 x 117MB/s
6 x 105MB/s
7 x 90MB/s
8 x 80MB/s

Supermicro AOC-SAS2LP-MV8 PCIe gen2 x8 (4000MB/s) - 6w
4 x 340MB/s
6 x 345MB/s
8 x 320MB/s (205MB/s*, 200MB/s**)
* PCIe gen2 x4 (2000MB/s)
** PCIe gen1 x8 (2000MB/s)

LSI 9211-8i PCIe gen2 x8 (4000MB/s) - 6w - LSI 2008 chipset
4 x 565MB/s
6 x 465MB/s
8 x 330MB/s (190MB/s*, 185MB/s**)
* PCIe gen2 x4 (2000MB/s)
** PCIe gen1 x8 (2000MB/s)

LSI 9207-8i PCIe gen3 x8 (4800MB/s) - 9w - LSI 2308 chipset
8 x 565MB/s

LSI 9300-8i PCIe gen3 x8 (4800MB/s with the SATA3 devices used for this test) - LSI 3008 chipset
8 x 565MB/s (425MB/s*, 380MB/s**)
* PCIe gen3 x4 (3940MB/s)
** PCIe gen2 x8 (4000MB/s)

SAS Expanders

HP 6Gb (3Gb SATA) SAS Expander - 11w

Single Link with LSI 9211-8i (1200MB/s*)
8 x 137.5MB/s
12 x 92.5MB/s
16 x 70MB/s
20 x 55MB/s
24 x 47.5MB/s

Dual Link with LSI 9211-8i (2400MB/s*)
12 x 182.5MB/s
16 x 140MB/s
20 x 110MB/s
24 x 95MB/s
* Half the 6Gb bandwidth, because it only links at 3Gb with SATA disks

Intel® SAS2 Expander RES2SV240 - 10w

Single Link with LSI 9211-8i (2400MB/s)
8 x 275MB/s
12 x 185MB/s
16 x 140MB/s (112MB/s*)
20 x 110MB/s (92MB/s*)
* Avoid using disks with a slower link speed on expanders, as they bring the total speed down; in this example 4 of the SSDs were SATA2, instead of all SATA3.

Dual Link with LSI 9211-8i (4000MB/s)
12 x 235MB/s
16 x 185MB/s

Dual Link with LSI 9207-8i (4800MB/s)
16 x 275MB/s

LSI SAS3 expander (included on a Supermicro BPN-SAS3-826EL1 backplane)

Single Link with LSI 9300-8i (tested with SATA3 devices, max usable bandwidth would be 2200MB/s, but with LSI's Databolt technology we can get almost SAS3 speeds)
8 x 500MB/s
12 x 340MB/s

Dual Link with LSI 9300-8i (*)
10 x 510MB/s
12 x 460MB/s
* Tested with SATA3 devices; max usable bandwidth would be 4400MB/s, but with LSI's Databolt technology we can get closer to SAS3 speeds. With SAS3 devices the limit here would be the PCIe link, which should be around 6600-7000MB/s usable.

HP 12G SAS3 EXPANDER (761879-001)

Single Link with LSI 9300-8i (2400MB/s*)
8 x 270MB/s
12 x 180MB/s
16 x 135MB/s
20 x 110MB/s
24 x 90MB/s

Dual Link with LSI 9300-8i (4800MB/s*)
10 x 420MB/s
12 x 360MB/s
16 x 270MB/s
20 x 220MB/s
24 x 180MB/s
* Tested with SATA3 devices; no Databolt or equivalent technology, at least not with an LSI HBA. With SAS3 devices the limit here would be around 4400MB/s with single link, and the PCIe slot with dual link, which should be around 6600-7000MB/s usable.

Intel® SAS3 Expander RES3TV360

Single Link with LSI 9308-8i (*)
8 x 490MB/s
12 x 330MB/s
16 x 245MB/s
20 x 170MB/s
24 x 130MB/s
28 x 105MB/s

Dual Link with LSI 9308-8i (*)
12 x 505MB/s
16 x 380MB/s
20 x 300MB/s
24 x 230MB/s
28 x 195MB/s
* Tested with SATA3 devices; the PMC expander chip includes similar functionality to LSI's Databolt. With SAS3 devices the limit here would be around 4400MB/s with single link, and the PCIe slot with dual link, which should be around 6600-7000MB/s usable.

Note: these results were obtained after updating the expander firmware to the latest available at this time (B057); it was noticeably slower with the older firmware that came with it.
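One rough way to read the numbers above: dividing a link's theoretical bandwidth by the number of disks gives an upper bound on per-disk speed, and the measured results land somewhat below it. The sketch below is only illustrative arithmetic; the function names are made up for this example, and the sample figures are the Marvell 9215 results quoted above (500MB/s link, 4 disks at 100MB/s each).

```python
def per_disk_ceiling(link_mb_s, disks):
    """Upper bound on per-disk speed if the link were shared perfectly."""
    if disks < 1:
        raise ValueError("need at least one disk")
    return link_mb_s / disks


def link_utilisation(observed_mb_s, link_mb_s, disks):
    """Fraction of the theoretical link bandwidth actually used."""
    return observed_mb_s * disks / link_mb_s


# Marvell 9215, PCIe gen2 x1 (500MB/s), 4 disks measured at 100MB/s each
ceiling = per_disk_ceiling(500, 4)
used = link_utilisation(100, 500, 4)
print(f"ceiling per disk: {ceiling} MB/s, link utilisation: {used:.0%}")
```

If a measured per-disk speed sits close to the ceiling for your disk count, the controller link is the likely bottleneck rather than the disks.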
Sata 2 vs Sata 3

I often see users on the forum asking whether changing to Sata 3 controllers or disks would improve their speed. Sata 2 has enough bandwidth (between 265 and 275MB/s according to my tests) for the fastest disks currently on the market. If buying a new board or controller you should buy Sata 3 for the future, but except for SSD use there’s no gain in changing your Sata 2 setup to Sata 3.

Single vs. Dual Channel RAM

In arrays with many disks, and especially with low “horsepower” CPUs, memory bandwidth can also have a big effect on parity check speed. Obviously this will only make a difference if you’re not hitting a controller bottleneck. Two examples with 24 drive arrays:

Asus A88X-M PLUS with AMD A4-6300 dual core @ 3.7GHz
Single Channel - 99.1MB/s
Dual Channel - 132.9MB/s

Supermicro X9SCL-F with Intel G1620 dual core @ 2.7GHz
Single Channel - 131.8MB/s
Dual Channel - 184.0MB/s

DMI

There is another bus that can be a bottleneck for Intel based boards, much more so than Sata 2: the DMI that connects the south bridge or PCH to the CPU. Socket 775, 1156 and 1366 use DMI 1.0; socket 1155, 1150 and 2011 use DMI 2.0; socket 1151 uses DMI 3.0.

DMI 1.0 (1000MB/s)
4 x 180MB/s
5 x 140MB/s
6 x 120MB/s
8 x 100MB/s
10 x 85MB/s

DMI 2.0 (2000MB/s)
4 x 270MB/s (Sata2 limit)
6 x 240MB/s
8 x 195MB/s
9 x 170MB/s
10 x 145MB/s
12 x 115MB/s
14 x 110MB/s

DMI 3.0 (3940MB/s)
6 x 330MB/s (Onboard SATA only*)
10 x 297.5MB/s
12 x 250MB/s
16 x 185MB/s
* Despite being DMI 3.0**, Skylake, Kaby Lake, Coffee Lake, Comet Lake and Alder Lake chipsets have a max combined bandwidth of approximately 2GB/s for the onboard SATA ports.
** Except the low end H110 and H310 chipsets, which are only DMI 2.0. Z690 is DMI 4.0 and not yet tested by me, but expect the same result as the other Alder Lake chipsets.
DMI 1.0 can be a bottleneck using only the onboard Sata ports. DMI 2.0 can limit users with all onboard ports in use plus an additional controller, either onboard or in a PCIe slot that shares the DMI bus. On most home market boards only the graphics slot connects directly to the CPU and all other slots go through the DMI (more top of the line boards, usually with SLI support, have at least 2 such slots); server boards usually have 2 or 3 slots connected directly to the CPU, and you should always use these slots first.

You can see below the diagram for my X9SCL-F test server board; for the DMI 2.0 tests I used the 6 onboard ports plus one Adaptec 1430SA on PCIe slot 4.

UMI (2000MB/s) - Used on most AMD APUs, equivalent to Intel DMI 2.0
6 x 203MB/s
7 x 173MB/s
8 x 152MB/s

Ryzen link - PCIe 3.0 x4 (3940MB/s)
6 x 467MB/s (Onboard SATA only)

I think there are no big surprises; most results make sense and are in line with what I expected. The exception may be the SASLP, which should have the same bandwidth as the Adaptec 1430SA but is clearly slower and can limit a parity check with only 4 disks.

I expect some variation in results from other users due to different hardware and/or tunable settings, but I would be surprised if there are big differences. Reply here if you can get a significantly better speed with a specific controller.

How to check and improve your parity check speed

System Stats from Dynamix V6 Plugins is usually an easy way to find out if a parity check is bus limited. After the check finishes, look at the storage graph: on an unlimited system it should start at a higher speed and gradually slow down as it reaches the disks' slower inner tracks; on a limited system the graph will be flat at the beginning, or totally flat in a worst-case scenario. See screenshots below for examples (arrays with mixed disk sizes will have speed jumps at the end of each one, but the principle is the same).
If you are not bus limited but still find your speed low, there are a couple of things worth trying:

Diskspeed - your parity check speed can’t be faster than your slowest disk. A big advantage of Unraid is the possibility to mix different size disks, but this can leave you with an assortment of disk models and sizes. Use this to find your slowest disks and, when it’s time to upgrade, replace these first.

Tunables Tester - on some systems it can increase the average speed by 10 to 20MB/s or more; on others it makes little or no difference.

That’s all I can think of, all suggestions welcome.

1 point

-

I wanted to show my current setup here. I built my server into a 10-inch rack myself:

The HDDs and motherboard are mounted on simple rack shelves:

Hardware
MB: Gigabyte C246N-WU2
CPU: Xeon E-2146G with the boxed cooler from the i3-9100 (which was installed before)
RAM: 64GB ECC
PSU: Corsair SF450 Platinum
LAN: 10G QNAP card
HDD: 126TB consisting of 1x 18TB Ultrastar (parity) and 7x 18TB WD Elements (Ultrastar white label)
Cache: 1TB WD 750N NVMe M.2 SSD
UPS: AEG Protect NAS, placed sideways on rubber feet

I chose "Thoth" as the server name, since he was the Egyptian god of wisdom. That sometimes tempts me to call it "Thot". ^^

With all 8 HDDs spun down, idle power consumption is 23W:

Disk overview:

When uploading directly to the disks I get over 90 MB/s, which I owe to the fast HDDs:

Writing to the cache at 1 GB/s is of course no problem:

Thanks to the 50% RAM cache, though, the first 30GB written to the HDDs also go at 1 GB/s:

Only Unraid offers this combination of performance and low power consumption 😍

I also run an Unraid backup server at an external location. It uses an Asrock J5005 and is built to be as compact and cheap as possible; I fitted an additional HDD cage into a Bitfenix ITX case so that I could install 9 HDDs:

1 point

-

Here is a list of the servers that community users have presented (please link only projects here):

1 point

-

@guy.davis thanks for your reply. In the meantime I checked our last conversation in this thread from months ago; cleaning machinaris.db did the trick last time as well. I've already done that, and the phantom records are gone. Many thanks, and as always KEEP UP THE GOOD WORK. I'm now adding the additional coin containers one by one. Working like a charm.

1 point

-

Hi, apologies for the shock. This is a known issue around default string values in the Chiadog version I enhanced to support the various forks. I'll get it fixed.

1 point

-

Yes, Flora support is less than 1 day old and only supported on Machinaris v0.6.1+ (aka development branch). You'll need to upgrade all to ":develop" images. And expect breakages like this. I'll fix them though.

1 point

-

Please read the notes on updating from 0.5.x to 0.6.0 for unraid docker. https://github.com/guydavis/machinaris/wiki/Unraid#how-do-i-update-from-v05x-to-v060-with-fork-support

1 point

-

I've started the check with correction; results in a few hours...

1 point

-

There is no rule that all vdisks must go into the "domains" share. So feel free to create as many shares as you like and set their caching rules as you prefer. For example, add the share "vmarray" and set its caching to "no". Now move the vdisk file into this new share (of course only while the VM isn't running), edit the VM to update the vdisk path and you're done.

PS: you don't need to use /mnt/diskX/sharename as the path in your VM config. You can use /mnt/user/sharename. Unraid automatically replaces /mnt/user with /mnt/diskX in the background before starting the VM.

Another solution could be to add multiple HDDs to a second pool. It's not as fast as the SSD cache pool, but would be a lot faster than your array. Some unraid users even create ZFS pools (through the command line) and use those paths for their VMs (or even for all data).

1 point
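To illustrate the "update the vdisk path" step: a libvirt domain definition stores the vdisk location in the `file` attribute of a `<source>` element, so moving a vdisk to another share comes down to swapping the path prefix. The sketch below is a minimal illustration under assumptions, not how Unraid does it internally; the function name, the sample XML and the VM folder name "Windows 10" are made up for the example, and in practice you would normally just edit the path in the VM settings form.

```python
import xml.etree.ElementTree as ET


def retarget_vdisk(domain_xml, old_prefix, new_prefix):
    """Return the domain XML with vdisk paths moved from old_prefix to new_prefix."""
    root = ET.fromstring(domain_xml)
    for source in root.iter("source"):
        path = source.get("file")
        # Only touch file-backed disks that live under the old share
        if path and path.startswith(old_prefix):
            source.set("file", new_prefix + path[len(old_prefix):])
    return ET.tostring(root, encoding="unicode")


# Hypothetical, heavily trimmed domain definition
sample = (
    "<domain type='kvm'><devices><disk type='file' device='disk'>"
    "<source file='/mnt/user/domains/Windows 10/vdisk1.img'/>"
    "</disk></devices></domain>"
)

updated = retarget_vdisk(sample, "/mnt/user/domains/", "/mnt/user/vmarray/")
print(updated)
```

Using the /mnt/user/... form on both sides keeps the path independent of which physical disk ends up holding the file.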

-

I just migrated to a UDM-Pro over the weekend, but before that I was using 6.4.54 via Unraid. I had no problems with the container itself, just issues with the actual Unifi software and its new dashboard. One thing to keep in mind is that I still had DPI turned off, as that was the cause of my Unifi container ballooning in memory on previous releases and I never turned it back on. Otherwise the 6.4.54 container itself ran with no issues in my previous setup, in case anyone else was looking for a few more positive reports before upgrading. Also, the NGinx Proxy container worked great for hiding my Unifi Controller behind a sub-domain of mine before I migrated to the UDM-Pro, in case anyone is looking for a way to get some form of HTTPS connection while not at home; alternatively, use Wireguard to access your network and then the container from there.

1 point

-

Hello again. Well, it must have been the motherboard's integrated network adapter... It's now set up in another PC and rebuilding the array (a HD died along the way and I have to restore the data from a backup). I'll wait for the process to finish, but I've already seen that I have a problem with the Docker service: it doesn't start... I'll open another thread. Thanks and regards.

1 point

-

That's great. That's 5 unraid users, who took the time to post, verified on linuxserver/unifi-controller:version-6.4.54 without any issues.

1 point

-

I'm seeing this error.

2021-10-19 10:49:19 [920] [ERROR] Exception on / [GET]
Traceback (most recent call last):
  File "/chia-blockchain/venv/lib/python3.9/site-packages/flask/app.py", line 2073, in wsgi_app
    response = self.full_dispatch_request()
  File "/chia-blockchain/venv/lib/python3.9/site-packages/flask/app.py", line 1518, in full_dispatch_request
    rv = self.handle_user_exception(e)
  File "/chia-blockchain/venv/lib/python3.9/site-packages/flask/app.py", line 1516, in full_dispatch_request
    rv = self.dispatch_request()
  File "/chia-blockchain/venv/lib/python3.9/site-packages/flask/app.py", line 1502, in dispatch_request
    return self.ensure_sync(self.view_functions[rule.endpoint])(**req.view_args)
  File "/machinaris/web/routes.py", line 19, in landing
    gc = globals.load()
  File "/machinaris/common/config/globals.py", line 82, in load
    cfg['blockchain_version'] = load_blockchain_version(enabled_blockchains()[0])
IndexError: list index out of range

edit: And I *think* I followed the upgrade doc...

edit: FIXED. I missed the original blockchain variable and added a second. It didn't like that.

1 point
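The last frame of the traceback shows `enabled_blockchains()[0]` being indexed while the list is empty. The sketch below is not Machinaris code; it only illustrates the general pattern of validating such a setting before indexing, so that a misconfiguration produces a readable message. Both function names and the assumption that the setting arrives as a comma-separated string are hypothetical.

```python
def parse_blockchains(raw):
    """Split a comma-separated setting into clean, lower-case names."""
    return [name.strip().lower() for name in raw.split(",") if name.strip()]


def first_blockchain(raw):
    """Return the first configured blockchain, or fail with a clear message."""
    chains = parse_blockchains(raw)
    if not chains:
        # Assumed wording; the point is to avoid a bare IndexError
        raise ValueError("no blockchain configured; check the container variables")
    return chains[0]


print(first_blockchain("flax"))
```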

-

I haven't used NFS in a while, since I no longer have NFS clients local to the Unraid server, but I think it should look like this:

10.0.0.0/24(sec=sys,rw,async,insecure,no_subtree_check,crossmnt)

There are a few things that depend on your setup.

What is your admin user in Unraid, i.e. the user that's allowed write access to the shares? For that you'd add something like anonuid=99,anongid=100,all_squash. This will "squash" all access to uid = 99, which is the nobody user in Unraid, and group = 100, which is the users group, so you can change the uid to match the "admin" user.

Will the clients be accessing the files as root? Then add "no_root_squash" to allow root to continue accessing as root.

1 point
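The export rule above is just a client specification followed by a comma-separated option list in parentheses. As a small sketch of how the variants discussed fit together (the helper name is made up; the options are the ones from the post), the rule strings can be assembled like this:

```python
def build_export_rule(client, options):
    """Format an NFS export rule as client(opt1,opt2,...)."""
    return f"{client}({','.join(options)})"


base = ["sec=sys", "rw", "async", "insecure", "no_subtree_check", "crossmnt"]

# Plain rule from the post
print(build_export_rule("10.0.0.0/24", base))

# Variant mapping every client user to nobody (uid 99) / users (gid 100)
squashed = base + ["anonuid=99", "anongid=100", "all_squash"]
print(build_export_rule("10.0.0.0/24", squashed))

# Variant for clients that need to keep root access
as_root = base + ["no_root_squash"]
print(build_export_rule("10.0.0.0/24", as_root))
```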

-

Exactly as @alturismo already said, this is coming with RC2, so just wait a few more days. It did work that way with the Developer Previews, because the "old" builds did not yet require TPM and Secure Boot, both of which are integrated in RC2. If, for example, you want to upgrade from Windows 10, that will work too, but it may require reactivating Windows. So if you have a Windows 10 VM, link it to your Microsoft account right now to prevent problems. Your current Windows 10 installation must also use the BIOS type "OVMF"; you can then change it to "OVMF-TPM" afterwards and upgrade to Windows 11. More info here:

1 point

-

The next best option, besides running a dedicated, non-virtual pfsense box, is to have all NICs physically passed through to the pfsense VM. pfsense is for routing and firewalling, so it should have at least two NICs passed through (maybe a third for a DMZ): one for WAN to your ISP (modem) and one for LAN. The NIC dedicated to LAN in pfsense should go into a physical switch. When adding another NIC (for WAN), the one for LAN should be your 1Gbps on-board NIC. There is no need for a LAN NIC faster than 1Gbps in pfsense unless either your WAN (ISP) connection is faster than 1Gbps or you want to route internally, e.g. inter-(V)LAN routing between clients on different networks. You then connect unraid with its dedicated NIC to the physical switch as well. Should you wish to use the 2.5G NIC for unraid, your switch should support that.

As a hybrid solution, with only your two onboard NICs, you could go with:
- use the 1Gbps NIC for WAN, physically passed through to the pfsense VM
- use the 2.5G NIC for LAN/unraid; configure this as a bridge (even enable VLANs, if you wish) AND connect it to the physical switch. This is the only option to provide 2.5G for clients in your network
- connect the LAN port of the pfsense VM via virtio to the unraid bridge, and use this IP as the default gateway

1 point

-

Just a small thing I noticed. In my alerts the Flax farmer claims to have won 1.75 XCH. I nearly spilled my cup of tea!!! I wish it was true LOL (XFX)

==========================================================
"SERVER1 flax WALLET Cha-ching! Just received 1.75 XCH ☘️"
==========================================================

1 point
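The alert above shows a Flax reward labelled with Chia's XCH symbol. As a purely illustrative sketch (not Chiadog's or Machinaris's actual code; the table and function name are hypothetical), looking the symbol up per blockchain and refusing to fall back to a default avoids this kind of mislabelling:

```python
# Symbols taken from the post: Chia uses XCH, Flax uses XFX
SYMBOLS = {"chia": "XCH", "flax": "XFX"}


def format_reward(blockchain, amount):
    """Build the alert text with the symbol that belongs to the blockchain."""
    symbol = SYMBOLS.get(blockchain.lower())
    if symbol is None:
        # No silent default: an unknown fork should be noticed, not mislabelled
        raise KeyError(f"no currency symbol known for {blockchain!r}")
    return f"Just received {amount} {symbol}"


print(format_reward("flax", 1.75))
```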

-

AFAIK most models of the P400 are even x16 cards. I don't know whether the slots in the Dell T340 are open-ended, so that it can fit.

1 point

-

I did all this when we first discussed /mnt/cache, and after some time you really get used to having all the appdata and VMs on your fast cache drive. My 2TB NVMe drive is reaching the 100G limit (now defined on the cache drive and not in the Global Share Settings). If money wasn't an issue I would upgrade to a 4TB NVMe, but they are incredibly expensive (my IRQ doesn't allow for a second NVMe). So my option is to split the VMs, some on the cache drive and others on the array. How would this best be done in practice? I guess it isn't enough to remove the /mnt/cache/ path when the "domains" folder is set to: Prefer. Would the best option for keeping performance be to create a new domains share on the array and just split them (fast & slow)?

@mgutt I am also still using many of your great scripts (also in this thread), like the one you did for the "Plex appdata backup". Having everything on cache for speed requires good backups 🙂 I was wondering if you have a similar solution for VM backups? (I found the solution in the app store to be buggy, causing too many problems.) Again, thanks for all your help and especially all the great posts that you share with all of us in the forum!

1 point

-

You have to mount it through Unassigned Devices first, then you have a mount point, for example '/mnt/disks/NAMEOFYOURCARDREADER'

Sent from my C64

1 point

-

OK, looks nice...glad you solved it...nevertheless, running WAN via a vNIC in your main firewall is not a good thing to do.

1 point

-

Oct 16 09:39:13 Chunker kernel: ------------[ cut here ]------------
Oct 16 09:39:13 Chunker kernel: WARNING: CPU: 8 PID: 0 at net/netfilter/nf_conntrack_core.c:1120 __nf_conntrack_confirm+0x9b/0x1e6 [nf_conntrack]
Oct 16 09:39:13 Chunker kernel: Modules linked in: tun xt_mark nvidia_uvm(PO) macvlan veth xt_nat xt_tcpudp xt_conntrack xt_MASQUERADE nf_conntrack_netlink nfnetlink xt_addrtype iptable_nat nf_nat nf_conntrack nf_defrag_ipv6 nf_defrag_ipv4 br_netfilter iptable_mangle xfs md_mod nvidia_drm(PO) nvidia_modeset(PO) drm_kms_helper syscopyarea sysfillrect sysimgblt fb_sys_fops nvidia(PO) drm backlight agpgart ip6table_filter ip6_tables iptable_filter ip_tables x_tables bonding mlx4_en mlx4_core igb i2c_algo_bit edac_mce_amd kvm crct10dif_pclmul crc32_pclmul crc32c_intel ghash_clmulni_intel mxm_wmi wmi_bmof aesni_intel crypto_simd cryptd glue_helper mpt3sas i2c_piix4 i2c_core rapl k10temp raid_class ccp nvme scsi_transport_sas ahci nvme_core libahci wmi button acpi_cpufreq [last unloaded: mlx4_core]
Oct 16 09:39:13 Chunker kernel: CPU: 8 PID: 0 Comm: swapper/8 Tainted: P O 5.10.28-Unraid #1
Oct 16 09:39:13 Chunker kernel: Hardware name: System manufacturer System Product Name/ROG STRIX B450-F GAMING, BIOS 4402 06/28/2021
Oct 16 09:39:13 Chunker kernel: RIP: 0010:__nf_conntrack_confirm+0x9b/0x1e6 [nf_conntrack]
Oct 16 09:39:13 Chunker kernel: Code: e8 dc f8 ff ff 44 89 fa 89 c6 41 89 c4 48 c1 eb 20 89 df 41 89 de e8 36 f6 ff ff 84 c0 75 bb 48 8b 85 80 00 00 00 a8 08 74 18 <0f> 0b 89 df 44 89 e6 31 db e8 6d f3 ff ff e8 35 f5 ff ff e9 22 01
Oct 16 09:39:13 Chunker kernel: RSP: 0018:ffffc90000394938 EFLAGS: 00010202
Oct 16 09:39:13 Chunker kernel: RAX: 0000000000000188 RBX: 000000000000c0bf RCX: 00000000b0198cd7
Oct 16 09:39:13 Chunker kernel: RDX: 0000000000000000 RSI: 0000000000000000 RDI: ffffffffa0238f10
Oct 16 09:39:13 Chunker kernel: RBP: ffff8881113c2bc0 R08: 00000000fb009142 R09: 0000000000000000
Oct 16 09:39:13 Chunker kernel: R10: 0000000000000158 R11: ffff8880962fc200 R12: 0000000000005b44
Oct 16 09:39:13 Chunker kernel: R13: ffffffff8210b440 R14: 000000000000c0bf R15: 0000000000000000
Oct 16 09:39:13 Chunker kernel: FS: 0000000000000000(0000) GS:ffff88881ea00000(0000) knlGS:0000000000000000
Oct 16 09:39:13 Chunker kernel: CS: 0010 DS: 0000 ES: 0000 CR0: 0000000080050033
Oct 16 09:39:13 Chunker kernel: CR2: 0000000000fdd120 CR3: 00000001ae75e000 CR4: 0000000000350ee0
Oct 16 09:39:13 Chunker kernel: Call Trace:
Oct 16 09:39:13 Chunker kernel: <IRQ>
Oct 16 09:39:13 Chunker kernel: nf_conntrack_confirm+0x2f/0x36 [nf_conntrack]
Oct 16 09:39:13 Chunker kernel: nf_hook_slow+0x39/0x8e
Oct 16 09:39:13 Chunker kernel: nf_hook.constprop.0+0xb1/0xd8
Oct 16 09:39:13 Chunker kernel: ? ip_protocol_deliver_rcu+0xfe/0xfe
Oct 16 09:39:13 Chunker kernel: ip_local_deliver+0x49/0x75
Oct 16 09:39:13 Chunker kernel: ip_sabotage_in+0x43/0x4d [br_netfilter]
Oct 16 09:39:13 Chunker kernel: nf_hook_slow+0x39/0x8e
Oct 16 09:39:13 Chunker kernel: nf_hook.constprop.0+0xb1/0xd8
Oct 16 09:39:13 Chunker kernel: ? l3mdev_l3_rcv.constprop.0+0x50/0x50
Oct 16 09:39:13 Chunker kernel: ip_rcv+0x41/0x61
Oct 16 09:39:13 Chunker kernel: __netif_receive_skb_one_core+0x74/0x95
Oct 16 09:39:13 Chunker kernel: netif_receive_skb+0x79/0xa1
Oct 16 09:39:13 Chunker kernel: br_handle_frame_finish+0x30d/0x351
Oct 16 09:39:13 Chunker kernel: ? skb_copy_bits+0xe8/0x197
Oct 16 09:39:13 Chunker kernel: ? ipt_do_table+0x570/0x5c0 [ip_tables]
Oct 16 09:39:13 Chunker kernel: ? br_pass_frame_up+0xda/0xda
Oct 16 09:39:13 Chunker kernel: br_nf_hook_thresh+0xa3/0xc3 [br_netfilter]
Oct 16 09:39:13 Chunker kernel: ? br_pass_frame_up+0xda/0xda
Oct 16 09:39:13 Chunker kernel: br_nf_pre_routing_finish+0x23d/0x264 [br_netfilter]
Oct 16 09:39:13 Chunker kernel: ? br_pass_frame_up+0xda/0xda
Oct 16 09:39:13 Chunker kernel: ? br_handle_frame_finish+0x351/0x351
Oct 16 09:39:13 Chunker kernel: ? nf_nat_ipv4_pre_routing+0x1e/0x4a [nf_nat]
Oct 16 09:39:13 Chunker kernel: ? br_nf_forward_finish+0xd0/0xd0 [br_netfilter]
Oct 16 09:39:13 Chunker kernel: ? br_handle_frame_finish+0x351/0x351
Oct 16 09:39:13 Chunker kernel: NF_HOOK+0xd7/0xf7 [br_netfilter]
Oct 16 09:39:13 Chunker kernel: ? br_nf_forward_finish+0xd0/0xd0 [br_netfilter]
Oct 16 09:39:13 Chunker kernel: br_nf_pre_routing+0x229/0x239 [br_netfilter]
Oct 16 09:39:13 Chunker kernel: ? br_nf_forward_finish+0xd0/0xd0 [br_netfilter]
Oct 16 09:39:13 Chunker kernel: br_handle_frame+0x25e/0x2a6
Oct 16 09:39:13 Chunker kernel: ? br_pass_frame_up+0xda/0xda
Oct 16 09:39:13 Chunker kernel: __netif_receive_skb_core+0x335/0x4e7
Oct 16 09:39:13 Chunker kernel: ? dev_gro_receive+0x55d/0x578
Oct 16 09:39:13 Chunker kernel: __netif_receive_skb_list_core+0x78/0x104
Oct 16 09:39:13 Chunker kernel: netif_receive_skb_list_internal+0x1bf/0x1f2
Oct 16 09:39:13 Chunker kernel: gro_normal_list+0x1d/0x39
Oct 16 09:39:13 Chunker kernel: napi_complete_done+0x79/0x104
Oct 16 09:39:13 Chunker kernel: mlx4_en_poll_rx_cq+0xa8/0xc7 [mlx4_en]
Oct 16 09:39:13 Chunker kernel: net_rx_action+0xf4/0x29d
Oct 16 09:39:13 Chunker kernel: __do_softirq+0xc4/0x1c2
Oct 16 09:39:13 Chunker kernel: asm_call_irq_on_stack+0x12/0x20
Oct 16 09:39:13 Chunker kernel: </IRQ>
Oct 16 09:39:13 Chunker kernel: do_softirq_own_stack+0x2c/0x39
Oct 16 09:39:13 Chunker kernel: __irq_exit_rcu+0x45/0x80
Oct 16 09:39:13 Chunker kernel: common_interrupt+0x119/0x12e
Oct 16 09:39:13 Chunker kernel: asm_common_interrupt+0x1e/0x40
Oct 16 09:39:13 Chunker kernel: RIP: 0010:native_safe_halt+0x7/0x8
Oct 16 09:39:13 Chunker kernel: Code: 60 02 df f0 83 44 24 fc 00 48 8b 00 a8 08 74 0b 65 81 25 a1 a9 95 7e ff ff ff 7f c3 e8 95 4d 98 ff f4 c3 e8 8e 4d 98 ff fb f4 <c3> 53 e8 04 ef 9d ff e8 04 76 9b ff 65 48 8b 1c 25 c0 7b 01 00 48
Oct 16 09:39:13 Chunker kernel: RSP: 0018:ffffc9000016fe78 EFLAGS: 00000246
Oct 16 09:39:13 Chunker kernel: RAX: 0000000000004000 RBX: 0000000000000001 RCX: 000000000000001f
Oct 16 09:39:13 Chunker kernel: RDX: ffff88881ea00000 RSI: ffffffff820c8c40 RDI: ffff88810207d064
Oct 16 09:39:13 Chunker kernel: RBP: ffff8881056c8000 R08: ffff88810207d000 R09: 00000000000000b8
Oct 16 09:39:13 Chunker kernel: R10: 00000000000000cb R11: 071c71c71c71c71c R12: 0000000000000001
Oct 16 09:39:13 Chunker kernel: R13: ffff88810207d064 R14: ffffffff820c8ca8 R15: 0000000000000000
Oct 16 09:39:13 Chunker kernel: ? native_safe_halt+0x5/0x8
Oct 16 09:39:13 Chunker kernel: arch_safe_halt+0x5/0x8
Oct 16 09:39:13 Chunker kernel: acpi_idle_do_entry+0x25/0x37
Oct 16 09:39:13 Chunker kernel: acpi_idle_enter+0x9a/0xa9
Oct 16 09:39:13 Chunker kernel: cpuidle_enter_state+0xba/0x1c4
Oct 16 09:39:13 Chunker kernel: cpuidle_enter+0x25/0x31
Oct 16 09:39:13 Chunker kernel: do_idle+0x1a6/0x214
Oct 16 09:39:13 Chunker kernel: cpu_startup_entry+0x18/0x1a
Oct 16 09:39:13 Chunker kernel: secondary_startup_64_no_verify+0xb0/0xbb
Oct 16 09:39:13 Chunker kernel: ---[ end trace 906f7f9f734c7e09 ]---

This is not my area of expertise; hopefully someone can point you in the right direction.

1 point

-

@ itimpi: Gotcha. So instead I turned parity on. It completed this evening: Duration: 8 hours, 3 minutes, 54 seconds. Average speed: 344.5 MB/sec - for a 10TB WDC Gold. Still 3 Days for the copies to complete. Once that is done, I will test shares on my Network. Then I want to install Windows 10 as a VM on the same machine - to eventually replace the original Windows 10 installation. Once that is all working then I will start migrating one drive at a time, the other 8TB drives. I'll probably be back here to continue my education... 🙂

1 point

-

I really wish you hadn't removed the Docker Hub integration. I realize the templates were pretty bare-bones but it at least filled out the name, description, repository etc. making it a lot faster than going to the Docker page, manually adding a container and starting with a completely blank template.

1 point

-

Ok - I found a MUCH easier way.....

After making the changes to goaccess.conf to be:

time-format %T
date-format %d/%b/%Y
log-format [%d:%t %^] %^ %^ %s - %m %^ %v "%U" [Client %h] [Length %b] [Gzip %^] [Sent-to %^] "%u" "%R"
log-file /opt/log/proxy_logs.log

Simply add the following line to the "Advanced" tab of each proxy host in NGINX Proxy Manager - Official:

access_log /data/logs/proxy_logs.log proxy;

like so: (if you already have advanced stuff here, add the line to the VERY top)

Now they all log to the same file in the same format. Simply add the line to all proxy hosts and remember to add it to any new ones.

1 point
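To make the format string above easier to read, here is a rough regular-expression equivalent that pulls out the same fields GoAccess is told to capture (%d date, %t time, %s status, %m method, %v virtual host, %U path, %h client, %b size, %u user agent, %R referrer); the %^ specifiers are fields it skips. This is only a sketch: the sample log line is invented for illustration and real proxy log lines may differ in detail.

```python
import re

PROXY_LINE = re.compile(
    r'\[(?P<date>[^:]+):(?P<time>\S+) [^\]]*\] '   # [%d:%t %^]
    r'\S+ \S+ (?P<status>\d{3}) - '                # %^ %^ %s -
    r'(?P<method>\S+) \S+ (?P<vhost>\S+) '         # %m %^ %v
    r'"(?P<uri>[^"]*)" '                           # "%U"
    r'\[Client (?P<client>[^\]]+)\] '              # [Client %h]
    r'\[Length (?P<length>\d+)\] '                 # [Length %b]
    r'\[Gzip [^\]]*\] \[Sent-to [^\]]*\] '         # [Gzip %^] [Sent-to %^]
    r'"(?P<agent>[^"]*)" "(?P<referrer>[^"]*)"'    # "%u" "%R"
)


def parse_proxy_line(line):
    """Return the captured fields as a dict, or None if the line does not match."""
    match = PROXY_LINE.match(line)
    return match.groupdict() if match else None


# Invented sample line in the shape the format string expects
sample = ('[19/Oct/2021:10:49:19 +0000] - 200 200 - GET https example.com '
          '"/index.html" [Client 10.0.0.5] [Length 1234] [Gzip -] '
          '[Sent-to 10.0.0.2] "Mozilla/5.0" "-"')

fields = parse_proxy_line(sample)
print(fields)
```

A line that does not parse here is a hint that a proxy host is still logging in a different format, for example because the access_log line has not been added to it yet.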

-

Thank you very much for the extensive answer, it works like a charm.

1 point

-

It's even "better". It doesn't get as warm as the 3.0 one, and for the small amount of data written to and read from it, 2.0 is enough.

1 point

-

..go to the source, not Amazon reviews, when checking compatibility. Use the latest pfsense iso or try opnsense as well.

1 point

-

I've tested that exact combination and can't replicate. An update will be available to CA tonight that will help me diagnose where it's failing.

1 point

-

The other one IS in use (I assume the ethernet at 5:00.0 is eth1). Any reason you are trying to pass through the ethernet? Could you consider using the virtual bridged network br1 (the xml snippet does so)?

So either pass through the ethernet by attaching it to vfio, or use a bridge. The difference is that in the first case the VM will see the real ethernet card, while in the other case it will create a virtual controller that uses the bridge connectivity.

Passthrough case: Correct, but starting from unraid 6.9, the setup in the System Devices page will bind the device on both vendor/device IDs and domain:bus:slot.function, so in your case it will be set up like:

BIND=0000:05:00.0|8086:10d3

The issue here is that the ethernet will always be grabbed by unraid even if it's inactive, and apparently you cannot isolate it from the GUI. If I were you I would:

1. back up the unraid usb, so you can restore if something goes wrong
2. open config/vfio-pci.cfg on the unraid usb stick with a text editor
3. add BIND=0000:05:00.0|8086:10d3

If you have multiple devices isolated just append them, for example:

BIND=0000:05:00.0|8086:10d3 0000:04:00.0|10de:100c 0000:04:00.1|10de:0e1a

Save and reboot, and your eth1 should now be isolated, without losing eth0 connectivity for unraid.

1 point
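The BIND line described above is a space-separated list of `domain:bus:slot.function|vendor:device` entries. The sketch below (the helper name and the validation patterns are this example's own assumptions, not an Unraid tool) shows how such a line can be assembled and sanity-checked before pasting it into config/vfio-pci.cfg:

```python
import re

# Assumed shapes: 0000:05:00.0 for the PCI address, 8086:10d3 for the IDs
PCI_ADDRESS = re.compile(r"^[0-9a-f]{4}:[0-9a-f]{2}:[0-9a-f]{2}\.[0-7]$")
VENDOR_DEVICE = re.compile(r"^[0-9a-f]{4}:[0-9a-f]{4}$")


def build_bind_line(devices):
    """Build a BIND= line from (pci_address, vendor:device) pairs."""
    entries = []
    for address, ids in devices:
        if not PCI_ADDRESS.match(address) or not VENDOR_DEVICE.match(ids):
            raise ValueError(f"unexpected device entry: {address}|{ids}")
        entries.append(f"{address}|{ids}")
    return "BIND=" + " ".join(entries)


# The devices used as examples in the post
line = build_bind_line([
    ("0000:05:00.0", "8086:10d3"),
    ("0000:04:00.0", "10de:100c"),
    ("0000:04:00.1", "10de:0e1a"),
])
print(line)
```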
