
Posts posted by Kacper

-


Hi,

Thank you for the detailed instructions. I will do that if everything else fails, but for now I think MariaDB is not working for some reason. The problem is not ownCloud-related.

I think that inside the container, /etc/systemd/system/mysqld.service must be changed to:

User=abc

Group=users

By default the value is mysql. Changing it resolved my issue. After reinstalling the container it went back to mysql, but somehow the database is still running fine, as user abc (inside the container), which is correct.
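The "Errcode: 13" error means the database server cannot write into /config/database. A quick check is to list everything in that directory that is not owned by the user MariaDB runs as (abc in this container, per the post). The helper below is a hypothetical sketch, not part of the container; if it lists files, the usual fix would be something like chown -R abc:users /config/database run inside the container.

```bash
#!/usr/bin/env bash
# Hypothetical helper: list files under DIR that are NOT owned by USER.
# Any file listed here can trigger "Errcode: 13 Permission denied"
# when the database server runs as USER.
wrong_owner_files() {
  local dir=$1 user=$2      # USER may be a name or a numeric UID
  find "$dir" ! -user "$user" -print
}
```

Example: wrong_owner_files /config/database abc prints nothing when ownership is consistent.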

I thought ownCloud was updated together with the container. Do I need to run the ownCloud update manually?

After MariaDB came up, I updated to the newest ownCloud version with PHP 7.4. Thanks for the advice. My rule is: I don't touch what is working.

I see that the Docker approach always forces updates.


-

Hi,

Yes, I am running the newest container.

In the messages log I found:

mariadb: Error: Databases failed to upgrade - check 'DB_PASS' password.

I didn't change any password, so something went wrong with the automatic update.

-

Hi,

After a power outage my Docker container was restarted. Now I am getting:

220322 15:48:56 mysqld_safe Logging to '/config/database/e85a96cd166a.err'.
220322 15:48:56 mysqld_safe Starting mysqld daemon with databases from /config/database
/usr/bin/mysqld_safe_helper: Can't create/write to file '/config/database/e85a96cd166a.err' (Errcode: 13 "Permission denied")
220322 15:48:57 mysqld_safe Logging to '/config/database/e85a96cd166

I am using PHP 7.2 and I didn't change anything. After the reboot the container did some package updates, which probably crashed the whole thing. I updated the ownCloud container via the Unraid interface, but the error stays the same. Any clues?

-

Thanks, ich777, for compiling these modules for all of us.

-


I am almost sure this message means that the module was not compiled for the kernel used by Unraid 6.9.2. Note that I compiled the modules only for Unraid 6.8.3 and 6.9.0. I am still using Unraid 6.9.0, as I don't want to spend time upgrading my Unraid server; it works fine right now. So you need to use the Docker container called unraid-kernel-helper (if it is already available for 6.9.2) and compile the ac and battery modules yourself. The script stays the same; it will work as long as the modules load correctly.
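If in doubt, you can compare the kernel a module was built for with the one that is running. The first field of a module's vermagic string is the kernel release it was built against; if it differs from uname -r, the kernel will normally refuse to load the module. The helpers below are a hypothetical sketch: modinfo -F vermagic is a standard kmod option, but reading the .ko.xz files directly assumes your kmod was built with xz support.

```bash
#!/usr/bin/env bash
# Hypothetical helpers: does a kernel module match the running kernel?

# vermagic_matches VERMAGIC RELEASE
# Succeeds when the first field of VERMAGIC (the kernel release the module
# was built against) equals RELEASE.
vermagic_matches() {
  local vermagic=$1 release=$2
  local built_for=${vermagic%% *}   # drop everything after the first space
  [ "$built_for" = "$release" ]
}

# module_fits_running_kernel MODULE_FILE
# Reads the module's vermagic with modinfo and compares it with uname -r.
# Returns 2 if modinfo cannot read the file.
module_fits_running_kernel() {
  local vm
  vm=$(modinfo -F vermagic "$1") || return 2
  vermagic_matches "$vm" "$(uname -r)"
}
```

For example, module_fits_running_kernel battery.ko.xz && echo "built for this kernel" run in the script folder.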

-

Hi, nice to know you like it. I used the Docker container called:

unraid-kernel-helper

Then I switched it to manual compile mode (or something like that), ran my own make menuconfig as described in the manual for this container, and compiled with the make commands. At the end I copied the battery modules over SSH.

-

I am trying to find the disk temperature with snmpwalk, but I can't. Which OID is it supposed to be, or what string should I search for to find the disk temperature?

-

Hi guys,

I want to announce my simple NTP server, which serves the Docker host's local time over the NTP protocol.

What does it do?

This simple NTP server delivers time over NTP to dumb devices, such as IP cameras and cheap routers, that cannot handle time zone changes and daylight saving time correctly. With this container you can set your device to UTC with no daylight saving and then point it to synchronize its time over NTP. The container handles the time zone correctly and delivers local time (LTC) to the device instead of UTC, which is what a regular NTP server delivers.

Install under Unraid.

Go to the Docker tab in your Unraid panel and click Add Container at the bottom. Then configure it as in the image below. Replace the IP with an address on your own network, and make sure the selected IP is not taken by another device.

After a successful start you will see:

Then point your dumb device to sync its time over NTP.

This server's local time can differ from the Docker host's; the local time zone is set by the TZ variable.
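As a rough illustration of the idea (not the container's actual code): an NTP timestamp counts seconds since 1900-01-01, which is Unix time plus 2208988800. A regular server sends that value as UTC; an LTC server additionally adds the UTC offset of the zone in TZ. The bash sketch below computes only the whole-second part under that assumption; the function names are hypothetical, and it assumes a date command that accepts -d @EPOCH (GNU coreutils or BusyBox).

```bash
#!/usr/bin/env bash
# Sketch only: the "local time" NTP seconds an LTC server would send.
NTP_UNIX_DELTA=2208988800   # seconds from 1900-01-01 to 1970-01-01

# tz_offset_seconds TZ EPOCH -> UTC offset of zone TZ at EPOCH, in seconds
tz_offset_seconds() {
  local z sign hh mm secs
  z=$(TZ="$1" date -d "@$2" +%z)        # e.g. +0100 or -0500
  sign=${z:0:1}; hh=${z:1:2}; mm=${z:3:2}
  secs=$(( 10#$hh * 3600 + 10#$mm * 60 ))
  [ "$sign" = "-" ] && secs=$(( -secs ))
  echo "$secs"
}

# ntp_local_seconds TZ EPOCH -> NTP seconds shifted to local time
ntp_local_seconds() {
  echo $(( $2 + NTP_UNIX_DELTA + $(tz_offset_seconds "$1" "$2") ))
}
```

For example, ntp_local_seconds Europe/Warsaw "$(date +%s)" (the zone data must exist in the container). Asking date for the offset at the given instant, rather than hardcoding it, leaves daylight saving transitions to the OS time zone database, which is the point of the container.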

Link to Docker Hub: https://hub.docker.com/repository/docker/k4cus/ntpserverltc

This is my first Docker image, so the implementation may not be very clean.

-

Hi,

I have written an NTP server that serves local time (LTC) instead of UTC. It is useful for dumb devices that cannot handle time zones, daylight saving time and so on. I am using it with IP cameras that always messed up their clocks, even when synchronized with an NTP server.

In this case you can set the device to the UTC zone with no daylight saving and connect it to my NTP server. My NTP server takes the local time from the OS, so it is always handled correctly by the OS.

I would like to share this Docker image; I have it running on my Unraid server. I have read about building Docker images and I have a Docker Hub account, but it is unclear to me how to make a proper Unraid image.

It is even less clear how to make it easy to install on Unraid via the Community Applications plugin, so that paths and configuration options appear in Unraid automatically.

Can you point me to a good tutorial? There are many tutorials, but very few are reliable.

-

This container has a little bug: the /root/.ssh/ directory should be mounted and persistent, because after a container reinstall known_hosts and the private keys are lost. As a workaround I am using:

ssh -i /config/id_rsa -o UserKnownHostsFile=/config/known_hosts -p 222 [email protected]

and my settings in rsnapshot.conf:

ssh_args	-i /config/id_rsa -o UserKnownHostsFile=/config/known_hosts -p 222
rsync_short_args	-az
# My mysql dump on my debian machine:
backup_exec	ssh -i /config/id_rsa -o UserKnownHostsFile=/config/known_hosts -p 222 [email protected] "mysqldump --defaults-file=/etc/mysql/debian.cnf --all-databases | bzip2 > /root/mysqldump.sql.bz2"
# Then backup whole /root folder
backup	[email protected]:/root	your.server.ip/
# and the etc, www and vmail folders - it is an ISPConfig machine
backup	[email protected]:/etc/	your.server.ip/
backup	[email protected]:/var/www/clients	your.server.ip/
backup	[email protected]:/var/vmail	your.server.ip/

Of course, to set up private-key SSH login, do:

# Only once: generate the key in /config so it survives a container reinstall
ssh-keygen -t rsa -f /config/id_rsa
# Do this for each server where you want to install your key
ssh-copy-id -i /config/id_rsa -p 222 [email protected]

To make this post complete:

- the crontab must be modified:

root@nas:/mnt/user/appdata/rsnapshot-backup/crontabs# cat root
# do daily/weekly/monthly maintenance
# min	hour	day	month	weekday	command
*/15	*	*	*	*	run-parts /etc/periodic/15min
0	*	*	*	*	run-parts /etc/periodic/hourly
0	2	*	*	*	run-parts /etc/periodic/daily
0	3	*	*	6	run-parts /etc/periodic/weekly
0	5	1	*	*	run-parts /etc/periodic/monthly
# rsnapshot examples
0	0	*	*	*	rsnapshot daily
0	1	*	*	1	rsnapshot weekly
0	2	1	*	*	rsnapshot monthly

- and in rsnapshot.conf:

#########################################
# BACKUP LEVELS / INTERVALS             #
# Must be unique and in ascending order #
# e.g. alpha, beta, gamma, etc.         #
#########################################
retain	daily	7
retain	weekly	4
retain	monthly	3
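One pitfall when copying settings like these: rsnapshot.conf requires tabs, not spaces, between fields, and forum posts often turn tabs into spaces. The hypothetical bash helpers below write a line with tab separators and flag lines whose keyword is followed by a space; after editing, rsnapshot configtest (a standard rsnapshot command) checks the whole file.

```bash
#!/usr/bin/env bash
# Hypothetical helpers for rsnapshot.conf, which needs TABs between fields.

# rsnap_line FIELD... -> prints the fields joined by single tabs
rsnap_line() {
  local IFS=$'\t'          # "$*" joins arguments with the first IFS char
  printf '%s\n' "$*"
}

# find_space_separated_lines FILE -> prints "lineno:line" for every line
# whose leading keyword is followed by a space instead of a tab
find_space_separated_lines() {
  grep -nE '^[a-z_]+ ' "$1"
}
```

For example, rsnap_line retain daily 7 >> rsnapshot.conf appends a correctly separated line; comment lines starting with # are never flagged.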


-

On 3/5/2021 at 3:38 PM, sota said:

I'd appreciate any updates on this if you ever move forward. I'm going to want to bring online several more cameras (8+ total), and activating motion detection on all of them for shinobi would be almost required to keep data storage to a manageable level. knowing what GPU would be needed to handle that kind of load would be beneficial.

Hi guys,

After 5 days of struggling with Shinobi, I must say this container is the best one I have found if you want to use an NVIDIA GPU. Let me explain quickly.

This container is based on Ubuntu 18.04, which has CUDA 10.2, the last CUDA version supporting cards with compute capability 3.0.

Here is a list where you can check your GPU: https://developer.nvidia.com/cuda-gpus

This container has the GPU working out of the box: ffmpeg + YOLO!

My recommendations for setting up the cameras are:

The advantage is performance. I can see ffmpeg working on the GPU. There is no CPU usage.

Sorry, I made a mistake when measuring CPU usage. Even if ffmpeg shows it is running on the GPU, it still uses a lot of CPU. Be aware that when you decode on the GPU, you can't use the GPU in the recording section; conversely, when you set recording to use a GPU codec, you have to set the input section to use the CPU.

In conclusion, when I added all 5 cameras, 2 of them kept disconnecting. Also, the CPU load is no lower than with ZoneMinder. Only the YOLO plugin seems to benefit a lot from the GPU. For these reasons I will keep using ZoneMinder, as it is more reliable for me; all cameras work fine.

Regarding updates: open the console and type sh UPDATE.sh

Then your Shinobi will be updated. Of course, if you change anything in the container settings, it will revert to the original version from the image. That is how Docker is superior to LXC or OpenVZ.

One of the use cases for the code I posted today:

-

On 7/2/2015 at 3:03 AM, bmfrosty said:

LXC - Linux Containers is an alternative to docker that relies on the same underlying infrastructure as docker. The big difference is that it's built around system containers instead of application containers.

This would be great for things that have more more complex setups like torrent clients + vpn that are difficult under Docker. Lighter weight than KVM for larger system containers that one might want for supporting tools like ffmpeg and other file maintenance utilities.

And I fully agree with this guy.

On 7/3/2015 at 4:49 PM, jonp said:

Correct, lxc isn't something I think we'd ever consider. Seems like there would be a very niche fit for this and not worth the implementation effort.

And I fully disagree, I am even disgusted :D:D:D

-

You're right; maybe it is only me who finds things 10 times harder to get working in Docker than on a regular Linux machine.

ZoneMinder is not the only such application. Any complicated application that depends heavily on particular versions of libraries or data will fail on Docker, or will not be worth the effort of porting to Docker.


One hint I can give: use Ubuntu 18.04 as the base. CUDA 10.2 supports compute capability 3.0, which matters for users with older but still efficient GPUs. NVIDIA dropped compute capability 3.0 in CUDA 11, and later releases raised the minimum further, which makes them useless for many people. We don't want to buy a 1000-dollar GPU just to run ffmpeg and maybe TensorFlow on it.

Decoding one camera with ffmpeg on my Quadro K2000M takes 1%, versus 15% on the CPU (Intel i7), so it is worth the struggle.

-

Hi,

I would like to share my new modification with the community. Maybe Limetech will include this option in Unraid.

To the point. When a complicated application with many system dependencies has to be deployed or developed, it is convenient to use a virtual machine. However, a virtual machine has extra overhead, and dedicated RAM or a GPU has to be attached to that VM only. With Docker, one GPU can be used by several containers, and RAM is shared dynamically. This is nothing new, as OpenVZ does it very well, but Unraid lacks OpenVZ.

On the other hand, the microservices approach of the Docker environment assumes that only data is persistent, while the operating system libraries and application libraries are all included in the image and can be erased and restored from the image at any time, in any version. This works well only when the application designer is aware of it.

However, there are use cases where the Docker approach fails:

- In development, when using an application that depends heavily on libraries being at one particular version, e.g. Shinobi with YOLO, TensorFlow + CUDA.

- Very often the effort or cost of adapting an application to the Docker philosophy is too high.

- When migrating a physical or virtual machine.

- When one GPU should accelerate several applications.

For this reason I have created a small feature for Unraid that makes a container persistent. Unraid follows the Docker philosophy, so on any parameter change in Unraid's GUI, or when an update is released, it erases the whole container and recreates it. My code detaches the container from its upstream repository and keeps it persistent across parameter edits.

To make it work, the User Scripts plugin is required. Copy my script to the folder “/boot/config/plugins/user.scripts/scripts/persistentDockerContainers” and schedule it to run once, at array start.

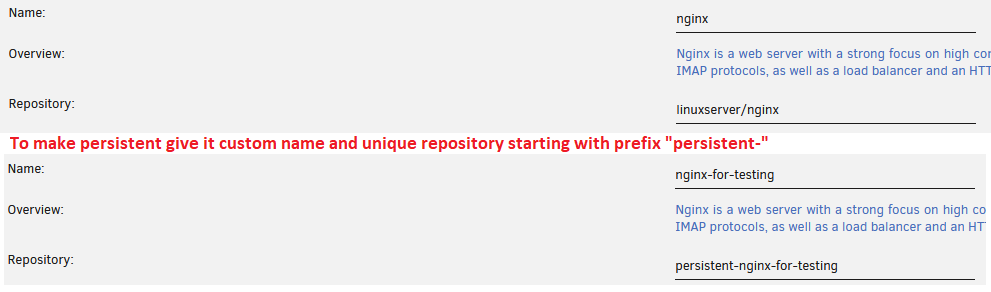

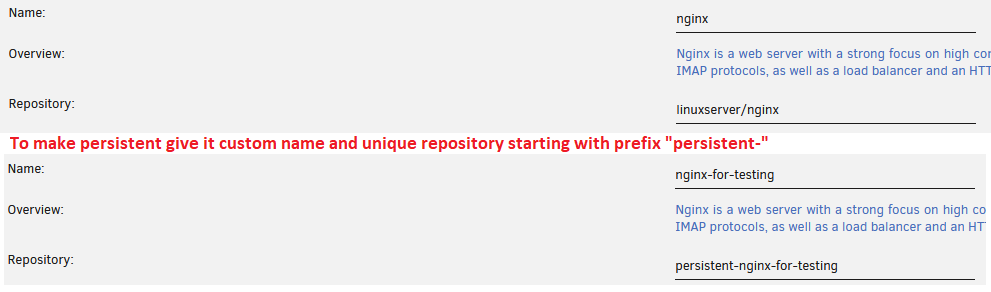

How to use my code?

You need to edit the Repository field by adding the “persistent-” prefix. Each persistent container must have a unique Repository field! It is also advisable to give the container a unique name, because Unraid has an undesirable behavior: when another container is installed via Community Applications and a container with the same name exists, the existing one is deleted without any warning.

In short, to make container persistent:

1. Change name to something unique.

2. Set a unique repository name starting with the prefix “persistent-”.
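The core of the patch is the Docker Engine /commit call: before Unraid recreates the container, its current filesystem is committed to an image named after the persistent- repository, so the new container starts from that snapshot instead of the upstream image. A rough docker CLI equivalent, as a hypothetical bash sketch: note that the PHP patch uses strpos(...) !== false, which matches persistent- anywhere in the name, whereas this sketch checks for the prefix as the steps describe. Also, docker commit does not include data stored in mounted volumes, which is fine because those already persist.

```bash
#!/usr/bin/env bash
# Sketch of the mechanism with the docker CLI (not the actual PHP patch).
# DOCKER can be overridden, e.g. DOCKER=echo, for a dry run.
DOCKER=${DOCKER:-docker}

# is_persistent_repo REPO -> success if REPO starts with "persistent-"
is_persistent_repo() {
  case $1 in
    persistent-*) return 0 ;;
    *) return 1 ;;
  esac
}

# commit_persistent CONTAINER REPO -> stop CONTAINER and commit it as REPO
commit_persistent() {
  local container=$1 repo=$2
  is_persistent_repo "$repo" || return 0   # normal containers: do nothing
  "$DOCKER" stop "$container" >/dev/null   # graceful stop first, like the patch
  "$DOCKER" commit "$container" "$repo"    # snapshot the container as REPO
}
```

For example, commit_persistent shinobi persistent-shinobi before editing the container in the GUI; with DOCKER=echo it only prints the commit command.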

That is all, folks. Please give me some feedback if you find it useful. I don't know whether I should continue posting my solutions, as it takes a lot of time; I will do it only if it is useful for the community.

Best Regards,

Kacper

P.S.

For people who are sensibly reluctant to run someone's code without checking it:

root@nas:~# diff CreateDocker.php CreateDocker.php.org
108,126d107
<
< // check what is name of the container to image, important when changing container name
< if ($existing && $DockerClient->doesContainerExist($existing)) {$ContainerNameToImage = $existing; }
< else if ($DockerClient->doesContainerExist($Name)){ $ContainerNameToImage = $Name; }
< else{ $ContainerNameToImage = "";
< }
<
< // do the image
< if ($DockerClient->doesContainerExist($ContainerNameToImage) && (strpos($Repository, "persistent-") !== false)){
< // repository name must by unique for presistent containers - this not guaranteed if user creates several containers from one repository and makes all containers persistent
< $oldContainerInfo = $DockerClient->getContainerDetails($ContainerNameToImage);
< if (!empty($oldContainerInfo) && !empty($oldContainerInfo['State']) && !empty($oldContainerInfo['State']['Running'])) {
< // attempt graceful stop of container first
< $startContainer = true;
< stopContainer($ContainerNameToImage);
< }
< $DockerClient->commitContainer($ContainerNameToImage, $Repository); // commit persistent container
< }
<
root@nas:~# diff DockerClient.php DockerClient.php.org
772,777d771
< public function commitContainer($id, $repo) {
< $this->getDockerJSON("/commit?container=$id&repo=$repo", 'POST', $code);
< $this->flushCache($this::$containersCache);
< return $code;
< }
<

-

I agree it would be great to have system containers available. I find Docker extremely complicated; it takes a lot of effort to do really simple things. I don't understand the idea behind Docker, and when I see how painful ZoneMinder integration with ES is in Docker, I am surprised people push others to use it.

OpenVZ was really cool, and LXC now does the same. It would be great to have it in Unraid.

It is much easier to install everything from source in a system container than to find or create a Docker image.

-

Hi guys,

How do I configure Shinobi so that when I download a video, the date and time are overlaid on it? I want it to behave like a regular DVR. Video is quite useless for the police if there is no timestamp on it.

-

Hi,

I would like to ask a possibly off-topic question. Unfortunately I can't register on the ZoneMinder forum; I am marked as a spammer, even though it was my first visit there in my life.

My question is:

When I download a video via the Export or Export Video option, the MP4 has no date and time on it. How can I make ZoneMinder add the date and time to the video?

Thanks,

Kacper

-

Thanks for your reply. The backup option is cool; I didn't know about it.

-


OK thank you.

-


And it will wait for Docker to shut down and the array to stop gracefully?

-

Hi,

I have created a mini how-to with a script that actually does the job for me. Look here:

I am sure it can be done in a better way, e.g. as a plugin.

I have read that the kernel has another battery module (sbs) that works with other, maybe newer, laptops. My solution uses the ac and battery modules and works with ThinkPads and probably many more laptops.

Making a universal plugin might require community testing on different machines, or reading the docs for the sbs kernel power module.

Anyway, I have accomplished what I wanted and shared my results.

Thanks for help.

I have added kernel modules for Unraid 6.9.0. In this post I attach the sbs module; if anyone has a PC that uses sbs, maybe they can share some knowledge.

Best regards,

Kacper

-


Hi,

I am using a ThinkPad W530 laptop as my Unraid server. Today, after a fruitful discussion on this forum, I made a user script that shuts down Unraid when the laptop battery is below 30% and AC is off.

The User Scripts plugin is required to use my solution.

All you need to do is extract the attached archive to the folder: /boot/config/plugins/user.scripts/scripts/shutdownWhenBatteryDepleted

root@nas:/boot/config/plugins/user.scripts/scripts/shutdownWhenBatteryDepleted# ls -l
total 64
-rw------- 1 root root 2716 Mar 6 09:41 ac.ko.xz
-rw------- 1 root root 6700 Mar 6 09:41 battery.ko.xz
-rw------- 1 root root  177 Mar 6 11:20 description
-rw------- 1 root root 1018 Mar 6 12:54 script
root@nas:/boot/config/plugins/user.scripts/scripts/shutdownWhenBatteryDepleted#

It is important not to change the script name, as the path to the *.ko.xz modules is hardcoded in the script file.

When this is done, test the script by running it manually. Once you are sure it works on your system, you can enable the scheduler. The script writes a log entry with the logger command when it issues a shutdown.

On its first run the script loads the ac and battery kernel modules.

In the attached figure I run the script every minute, but every 2 minutes would probably be sufficient: enter */2 * * * * as a custom schedule. Use https://crontab.guru to find your preferred schedule.

Use at your own risk! For testing purposes, I suggest commenting out (with #) the /sbin/poweroff command in my script.

I am still unsure whether /sbin/poweroff is the proper way to shut down VMs and Docker gracefully. I am waiting for support to answer my question, as I was unable to find the answer on the Internet.
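The decision the script makes can be sketched in bash as follows. This is not the attached script: it assumes the loaded ac and battery modules expose /sys/class/power_supply/AC/online and /sys/class/power_supply/BAT0/capacity (these names vary between laptops, so check ls /sys/class/power_supply first), and the variable and function names are hypothetical. Setting POWEROFF_CMD=true gives a dry run in the same spirit as commenting out /sbin/poweroff.

```bash
#!/usr/bin/env bash
# Sketch of the shutdown decision only (not the attached script).
# AC and BAT0 are assumed power_supply names; adjust for your laptop.
PS_DIR=${PS_DIR:-/sys/class/power_supply}
THRESHOLD=${THRESHOLD:-30}                  # percent, as in the post
POWEROFF_CMD=${POWEROFF_CMD:-/sbin/poweroff}

# should_shutdown -> success when AC is offline and battery < THRESHOLD
should_shutdown() {
  local ac cap
  ac=$(cat "$PS_DIR/AC/online") || return 1        # 1 = on AC, 0 = not
  cap=$(cat "$PS_DIR/BAT0/capacity") || return 1   # charge in percent
  [ "$ac" -eq 0 ] && [ "$cap" -lt "$THRESHOLD" ]
}

# check_battery -> log and power off when should_shutdown succeeds
check_battery() {
  if should_shutdown; then
    logger "Battery below ${THRESHOLD}% and AC off, shutting down"
    "$POWEROFF_CMD"
  fi
}
```

A scheduled script would end with a single call to check_battery.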

Best Regards,

Kacper

shutdownWhenBatteryDepleted_unraid6_8_3.zip shutdownWhenBatteryDepleted_unraid6_9_0.zip

-


[support] dlandon - ownCloud

in Docker Containers


One more question: can you post your output of:

root@nas:/mnt/disk1/owncloud/config/www/owncloud# ls -l
total 540
-rw-r--r-- 1 root users 8859 Jan 12 15:29 AUTHORS
-rw-r--r-- 1 root users 411639 Jan 12 15:29 CHANGELOG.md
-rw-r--r-- 1 root users 34520 Jan 12 15:29 COPYING
-rw-r--r-- 1 root users 2425 Jan 12 15:29 README.md
drwxrwxrwx 51 nobody users 4096 Mar 23 15:13 apps
drwxr-xr-x 2 nobody users 6 Dec 10 2020 apps-external
drwxrwxrwx 2 nobody users 79 Mar 23 15:10 config
-rw-r--r-- 1 root users 4618 Jan 12 15:29 console.php
drwxr-xr-x 16 root users 335 Jan 12 15:30 core
-rw-r--r-- 1 root users 1717 Jan 12 15:29 cron.php
-rw-r--r-- 1 root users 31204 Jan 12 15:29 db_structure.xml
-rw-r--r-- 1 root users 179 Jan 12 15:29 index.html
-rw-r--r-- 1 root users 3518 Jan 12 15:29 index.php
drwxr-xr-x 6 root users 79 Jan 12 15:29 lib
-rwxr-xr-x 1 root users 283 Jan 12 15:29 occ
drwxr-xr-x 2 root users 23 Jan 12 15:29 ocm-provider
drwxr-xr-x 2 root users 55 Jan 12 15:29 ocs
drwxr-xr-x 2 root users 23 Jan 12 15:29 ocs-provider
-rw-r--r-- 1 root users 3135 Jan 12 15:29 public.php
-rw-r--r-- 1 root users 5618 Jan 12 15:29 remote.php
drwxr-xr-x 4 root users 39 Jan 12 15:29 resources
-rw-r--r-- 1 root users 26 Jan 12 15:29 robots.txt
drwxr-xr-x 12 root users 209 Jan 12 15:29 settings
-rw-r--r-- 1 root users 2231 Jan 12 15:29 status.php
drwxr-xr-x 6 nobody users 150 Nov 14 2019 updater
-rw-r--r-- 1 root users 280 Jan 12 15:29 version.php