Posts posted by Drider

-

1 hour ago, bryce2113 said:

Sounds like it was added as a request back in 2017 but no word on implementation.

I've been looking for this for a long, long ... long time.

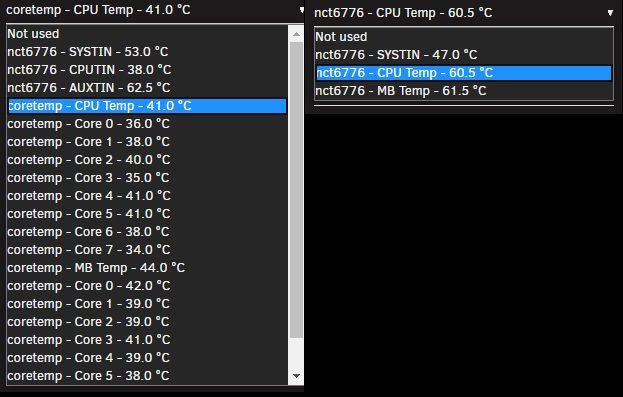

Side note: I made the mistake of re-detecting my driver tonight and lost all the temperature readings I was previously showing for my 32 cores. Now I can only select one of three options: CPU/MB/SYSTIN.

Currently running the latest update. I've attached a screenshot to show how it was compared to now.

-

Wow, haven't bought RAM in a couple years since I picked up 2 Kits of Dominator Platinum SE.

Prices are no joke right now...

Thanks for the link.

-

This will all be High end, top of the line components in the system.

I'm a long time Dominator Platinum user. How's the stability there?

-

Thinking of doing a TRI/TRII (1950x/2950x) for a client.

Mainly going to be Data Storage / Backup Archiving and a VM host for a Domain Controller w/ Active Directory, & Client Workstations. Likely some light docker apps like Crashplan, and Cloud sharing, (Need to look into that one too).

I'd like to read up on the issues and workarounds associated with the TR platform and unRAID.

Would any of you fine folks be able to provide some links to Threads here in the forums that I might be able to start wrapping my head around?

-

23 hours ago, Taddeusz said:

I’m using ASUSWRT Merlin so I’m not sure this will work for you, but this is what I use.

https://gist.github.com/dd-han/09853f07efdf67f0f4af3f7531ac7abf

I actually have a Merlin flashed ASUS router on the network, so this should work.

Thanks!

-

On 5/16/2018 at 10:31 AM, Taddeusz said:

Not sure about ddclient but I figured out how to add a script to my router to do the same thing, and it uses their v4 API.

Just found that my DDClient is failing to update Cloudflare today.

You wouldn't happen to be using DD-WRT, would you? If so, could you provide some guidance on the script you're using?

-

On 5/18/2018 at 4:36 AM, tr0910 said:

My use is purely business too. I have Win10 N (stripped of the media junk) running in 12gb of disk space, but it definitely grows with updates. A major update needs another 10gb minimum during the updating process so I usually go with 30gb for lightweight VM's but I have some that run in 20gb. Being able to easily grow the VM disk space would be nice.

I take back my previous statement, I was padding my estimate.

With 8.1 Enterprise, after all current updates, and a disk clean up, the VM's run at 23GB total disk size.

This is with Office 2013 Professional Plus, and a few other applications including all of their updates.

-

1 hour ago, tr0910 said:

So unRaid is not the bare metal hypervisor? unRaid is a vm itself inside Hyper-V? Would you consider using unRaid as the bare metal hypervisor?

Nope, and to be clear I run Windows Hyper-V core 2016. I prefer the light weight nature for the Hyper-V system, and having the disk, and resources distributed through Hyper-V. It's really a preference, and I couldn't tell you if there's really any discernible difference in Hyper-V vs unRAID VM management, at least nothing in my use case. I will say if I were to build a box that was to be used as a hub for gaming, much like LTT's examples, THEN I would be running unRAID VM Manager for sure. Again, my use is purely business, so the need is just not there.

1 hour ago, tr0910 said:

Win 8 was a virus that never got loaded on my machines (LOL). I transitioned from Win7 which I loved to Win10. I don't have any horror stories so far, but I have several dual 2670 rigs, on Intel 2600cp2 mb, and one of them is getting more duty as a bare metal hypervisor of Win10 RDP clients under unRaid. So far so good, but I only have less than 6 so far. With 128gb RAM, there is really no limit for me to continue this process. I have never let Win10 start with low RAM and then allow it to grow exponentially like you are. I usually just set them up with 8gb and forget about it. Maybe I should play around with your dynamic memory model???

Windows 8.1 (not 8) was a highly underrated OS. It mainly got a bad rap because of the Metro theme and Start button. Well, simply add Classic Shell, and magically it works just like Windows 7. I prefer 8.1 over 7, especially the Enterprise versions, as the patches and advancements are quite apparent in daily use. 8.1 really was a lightweight and efficient OS. My total installation package with current updates sits around 27GB after a disk cleanup when updates are completed. Not to mention it's far more stable in daily use than Windows 10 right now. If you plan to run quite a few VMs in one system, and you can get your hands on Enterprise versions of Windows, it's definitely the path you want to go. Not only is the memory assigned dynamically, but the HDD space can be as well. So if your VM starts to run out of disk space in a year, you can expand it right there in the Hyper-V manager.

1 hour ago, tr0910 said:

My main complaint with unRaid as a bare metal hypervisor is requiring VM's to be shut down before you can stop the array. That is a pain and requires scheduling to make sure it doesn't affect other people...

Well, you pretty much can't escape this in any VM host. I mean you CAN shut down the Hyper-V and just terminate all VM's in that instant, but it's not recommended as it's pretty much the same as pulling the plug on a system. At least unRAID has a built in safety forcing you to perform a shut down of the VM's before it destroys them. In most other VM managers, you get an "are you sure?" warning, but that's it.

-

17 hours ago, tr0910 said:

Interesting use. Why 8.1 instead of Win10? All accessed via RDP? What are you using for RDP clients? How much memory for each VM?

And finally, what problems did you have to resolve to make this stable enough for your business.

I'm not a fan of Windows 10 in the business environment, at least not yet.

I see proof every day that Windows 10 is still in its infancy: uncontrollable updates wreak havoc in a business environment, and there are still a lot of compatibility issues with different software.

All are accessed via RDP, with a mixture of windows based PC's, nComputing N300 thin clients, and Dell Wyse Terminals.

The RAM depends on the designated use of the system, and with Enterprise edition of windows 8.1 it's dynamic based on the load of each individual VM.

So for example, I have 5 VM's that startup with 512MB of RAM, but have the ability to request up to 12GB based on load demands. In reciprocation, when the demand lowers it releases the memory back to the system.

I've been working with Hyper-V for going on 5 years now, so I'm pretty familiar with it.

The only unknown really was getting unRAID setup in a VM, but really it wasn't a problem.

-

On 5/10/2018 at 12:04 AM, squark said:

It think you'll find its pretty hard to pass the USB through to the VM.

I'm not exactly sure what prompted your comment, but it was never my intent to pass USB through to any VMs. This is a headless system for business use, running 14 Windows 8.1 Enterprise VMs for my business and employees, with an unRAID VM used for the local storage/shares.

-

4 hours ago, CHBMB said:

I'd also keep using the command line too, rather than the plugin.

No question there, always used the script, always will.

-

I am a long-time user of preclear, since the late unRAID v4 days.

Personally I've always used the script, as when I started to first use the plugin, I never was able to see the output of disks being processed, and the reports when finished never provided the detail I was used to from the original script, (Not to mention they were blank.)

I've always just stuck to the scripts, and used the notification options built in.

I am, however, in the same boat, in that I use preclear to fully stress test new disks whether they will end up in the array or not. I also run an I.T. consultation business, where I again utilize the script on new PC HDDs before installing them in client machines. 3 passes of preclear with an extended SMART test sure does give the required peace of mind, and has helped us put fires out before there was even a sign of smoke. (All done while family and friends enjoy streaming PLEX on the same server.)

My Question(s):

Since I just built the newest server, it's been a while since I've updated the script I use, (Current Server on unRAID v6.1.9, New Server on v6.5.0) , and of course I want to bring the script I've grown to depend on.

- What version of the script is recommended if I plan to only use the script?

- I would assume the one that is being maintained, @gfjardim's, however I don't see anywhere I can get just the script?

(Forgive me if I missed it, I only picked up on this thread about 15 pages back, note: I do have my settings to show the maximum post per page, and it was quite a lot of posts!)

- Should I even be worried about using the @gfjardim script? Does the script itself have revisions/updates for later versions of unRAID, or is it merely updated for plugin purposes?

- Lastly: If it is recommended to get one of the base @Joe L., or @SSD scripts, With or without the notification update, is the patch Detailed in these posts required?

I do want to thank @Joe L., @SSD, @gfjardim, @dlandon, @Squid, @Frank1940, and @CHBMB. I've used this script for years now, both in and out of unRAID, on systems destined to become workstations, servers, security/surveillance systems, laptops, and personal computers for both personal and professional business clientele. Anything with a hard drive, really. This script has become one of, if not the most, valuable tools in our arsenal for new setup, system reload, and HDD reliability diagnostics. There may be better tools, but I've yet to be failed by this. Again, my hat off, head bowed, and knee bent, ... Thank you.

-

1 hour ago, GilbN said:

For ombi you need version 3.0.2165 or later. Are you sure the ombi api key is correct?

And did you try and add the url base if you use that?

You did add it to custom html and not css right?

For Organizr support I highly recommend joining the discord. https://organizr.us/discord much easier to support live ?

I'm sorry I should've been more clear:

I'm not sure if the repo is due for an update. I found a bunch of posts saying they have the same error, and the replies (from the devs?) are that it's an Ombi issue and a fix is in progress.

I am running v3.0.3185, and the API key is verified correct.

No URL base, as I use subdomains, and for now I'm just testing locally ... (haven't even gotten to remote testing).

I might have added to css ... yup .. looking at it, definitely added to css. I may need to slow down just a little.., or less coffee at +2:30AM

I believe I will be taking up your discord offer some point this weekend. I love to learn, and grasp on my own, gives a great feeling of accomplishment, but this is just taking too damn long..

-

1 hour ago, saarg said:

You have to wait until I add the template to our repository. For some reason I forgot to add it.

Ah I see, I'll keep an eye out. Thanks!

-

1 hour ago, aptalca said:

To update ip on cloudflare, you need to use a separate app, script or device. I believe you said you had ddwrt on your router. That probably handles that. If not, we have a ddclient docker that will do it.

I do have DD-WRT, but as much as I wish I knew the script to place in the router, it looks like I'll go with DDClient, as I'm sure it will be more my pace of understanding to configure.

Unless of course you could point me in the right direction ... ?

Thanks!

-

13 hours ago, GilbN said:

Domain.com/ombi was just an example. Ombi.domain.com works just fine.

Adding .htpasswd will make users have to log in twice yes. Using organizr they will only log in once with their plex credentials.

I've been going through your blogs, and I must say thank you. You have a TON of good information in there. It looks like I'll be following 90% of what you've posted, as your setup is pretty much what I desire. I've installed Organizr, and I've been playing with it a bit. Looks like I'll be jumping on the bandwagon.

I only have a couple problems with organizr:

- I get this error at the top of my homepage if I have Ombi request turned on, I'm not sure if it's because Ombi needs an update.

- (It's the only answer I've found as of end of March 2018 from support posts on GitHub)

Notice: Undefined offset: 0 in /config/www/Dashboard/functions.php on line 5067 Notice: Undefined offset: 0 in /config/www/Dashboard/functions.php on line 5067

- I'm trying to use unBlurr vBeta as a Theme, and all work except when I try to add:

For Plex Users who want the chat button to go to the chat tab instead of the chat sidebar

It places a bar over the entire homepage blocking the top of the page and specifically the save button. I have to use adblocker to kill the item in order to regain control.

Sorry for the off topic questions, I know they should be placed elsewhere.

It looks like I have a lot more reading and trial and error to go through, but at least I have a good reference point.

Don't you take down that blog anytime soon!

I'm still a bit puzzled on how to get DDNS to update directly to cloudflare, if you could be so kind as to answer:

- Is there a docker or plugin that I need to install specifically for this, or will I need to go the custom script route?

- Should I be using a service like DNS-O-Matic?

Maybe I'm just missing it within the LetsEncrypt container..
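For anyone wondering what the "custom script route" boils down to, here is a minimal Python sketch, assuming the Cloudflare v4 API mentioned elsewhere in this thread and an API token with DNS edit rights. The function name `build_dns_update` and the placeholder zone ID, record ID, token and IP are hypothetical; the sketch only builds the request and does not send anything.

```python
import json
import urllib.request

API_BASE = "https://api.cloudflare.com/client/v4"


def build_dns_update(zone_id, record_id, name, ip, api_token):
    """Build (but do not send) a request that points an A record at `ip`.

    Hypothetical helper for illustration; the IDs and token are looked up
    in the Cloudflare dashboard and are placeholders here.
    """
    payload = {
        "type": "A",
        "name": name,
        "content": ip,
        "ttl": 1,          # 1 means "automatic" TTL on Cloudflare
        "proxied": False,  # assumption: set True to keep the record proxied
    }
    return urllib.request.Request(
        url=f"{API_BASE}/zones/{zone_id}/dns_records/{record_id}",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_token}",
            "Content-Type": "application/json",
        },
        method="PUT",
    )


# Placeholder values only; 203.0.113.10 is a documentation address.
req = build_dns_update("ZONE_ID", "RECORD_ID", "mattflix.mydomain.com",
                       "203.0.113.10", "API_TOKEN")
print(req.get_method(), req.full_url)
# To actually send it: urllib.request.urlopen(req)
```

A router script or the ddclient docker would do the equivalent of this call whenever the external IP changes.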

-

13 hours ago, GilbN said:

For Ombi you can setup .htpasswd and have fail2ban ban the ip after x amount of failed logins. Fail2ban is already setup to do that with [nginx-http-auth]. I would add ignoreip = x.x.x.x/24 so you don't ban yourself. Like this.

I already have my jail.local configured, as shown in my original post. It's just a matter of turning it on.

- Would .htpasswd be recommended on top of Ombi using Plex account sign in?

- Would turning on .htpasswd with Plex user authentication in Ombi cause my users to have to sign in twice? If so, would .htpasswd be recommended over Ombi Plex user sign-on?

13 hours ago, GilbN said:

Or you could setup Organizr and use server authentication so that only users that are logged in to organizr can access domain.com/ombi. And setup fail2ban on the organizr login page.

With Organizr users that log in will automatically be logged into ombi/plex using SSO. https://imgur.com/a/rcwq6rg

You can also setup geoblocking, that will block any country of your choosing.

Not sure about organizr, just started looking into it, and it's intriguing, but not quite ready to undertake that project yet..

Referenced in my original post I've setup as subdomain. Not sure how that would play out with your suggestion as domain.com/ombi, and prefer not to use my base business domain.

geoblocking is definitely a must, and thank you for referencing this. I had no idea it existed, and will get implemented asap.

-

Thank you for your reply, it's very informative, and definitely gives a better understanding of what I'm trying to accomplish with DNS/DDNS.

2 hours ago, aptalca said:

There are a lot of questions here so I'll take a stab and try to answer a few. But before I start I should let you know, it seems you took a lot of these configs from guides or other people. They are all so heavily modified that it is difficult for me to help troubleshoot. Nginx is a highly capable and thus complicated piece of software. For specific nginx config questions, I would recommend asking the folks who wrote the specific configs you're using.

You're right, I did go through what felt like 5000 forum posts and hundreds of configs, splicing together the files I currently use. I believe I have a good base, providing some security (again, I'm not sure how much more I need) and not mucking things up with too much that's unneeded. I have posted the same security-related questions on the forums outside of Lime where I found information.

2 hours ago, aptalca said:

First of all, I don't quite understand why you came up with such a confusing domain name forwarding structure. To me it seems all you needed was to use your main domain for business, and set A records for certain subdomains that point to your home server. If you're already using cloudflare for managing those dns records, I'm sure your ddwrt router can update those subdomains on cloudflare with ip changes. You shouldn't need to use the freedns ddns as an intermediate

I was not aware I could cut out the intermediary DDNS by using CloudFlare. I've always been used to using DDNS with some kind of update client, I didn't know CloudFlare could do this automatically.

- I'll have to do some digging to understand how this is properly configured, as I'm a little foggy what host my subdomain A record should point to, I assume my current dynamically assigned IP?

- I'm not entirely sure how I'd get a client updating the record, as the router I use has a field for a custom DDNS service, but I'm not sure where to even begin with that. I guess some more searching may shed some light.

2 hours ago, aptalca said:

In letsencrypt, you can do one of two things, 1) set the url as mydomain.com, set the subdomains as matt,matt2,matt3 and set only_subdomains to true. That way it won't try to validate mydomain.com but you can add as many subdomains as you want, or 2) set the url to matt.mydomain.com and set subdomains to 1,2,3 and you'll get a cert that covers 1.matt.mydomain.com etc.

I believe I understand what you're saying here, and it sounds like all I need to do is set only_subdomains to true. This should accomplish what I'm looking for: only allowing mattflix.ouritservice.com while refusing connections to the other subdomains pointed here (given my default and subdomain site-confs are correct), as well as allowing me to use separate site-conf files.
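To make the two options concrete, here is a minimal Python sketch of which hostnames would end up on the certificate in each case. The function `cert_domains` and its parameters are a hypothetical model of the url / subdomains / only_subdomains settings as described in the quote, not the container's actual code.

```python
def cert_domains(url, subdomains, only_subdomains=False):
    """Return the hostnames a certificate request would cover.

    Hypothetical model of the url / subdomains / only_subdomains settings
    described in the quote; not the container's real implementation.
    """
    # Split the comma-separated list and drop empty entries.
    subs = [s.strip() for s in subdomains.split(",") if s.strip()]
    names = [f"{sub}.{url}" for sub in subs]
    # Unless only_subdomains is set, the base url is validated as well.
    if not only_subdomains:
        names.insert(0, url)
    return names


# Option 1: the base domain is skipped, only the listed subdomains are validated.
print(cert_domains("mydomain.com", "matt,matt2,matt3", only_subdomains=True))

# Option 2: the subdomain itself is the url, with further names beneath it.
print(cert_domains("matt.mydomain.com", "1,2,3"))
```

Note that this only models which names get validated for the certificate. Whether nginx answers or refuses a given hostname is still decided by the server blocks in the site-confs.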

I think I've had the lightbulb "ahh" moment. .. at least I hope.

Now, if anyone could provide some insight into the security side of this using Ombi and Plex user logins.

Thanks for your response!

-

I've gotten everything working so far for use with Ombi on unRAID 6.1.9, (I'll eventually be moving everything over to the sister server v6.5.0)

My questions now are really focused on security.

I have this server on my home network, with a few business servers running on the same network, and some business data even in the same unRAID server.

I'm hoping I may list my setup configurations here, and someone may be able to answer a few questions.

(All sensitive information fields have been redacted)

DDNS

DDNS is handled through freedns.afraid.org, where mysub.strangled.net resolves to my home's dynamically assigned IP provided by my ISP.

The DDNS update is maintained by the DDNS updater in my DD-WRT flashed router.

DNS

The domain I use is actually a split domain, as the base domain mydomain.com points to an external business mail server. I set up a separate sub-domain for use, we'll call it: mattflix.mydomain.com. This is a CNAME using cloudflare DNS, that resolves to my DDNS domain provided by freedns. This setup looks like so:

- mattflix.mydomain.com --> mysub.strangled.net --> external IP at home.

Questions:

I assume because I am using a sub-domain of mydomain.com and not the base domain, this is what would be limiting me to only being able to use one letsencrypt/nginx/site-conf file (default) instead of what I've seen in this thread about using multiple files one for each subdomain?

- I've tried every possible way I could find, and think of, to make this work: with the default, without, with the main server block in the default, and separate in each site-conf. But every time I have more than one site-conf file it kills the page and gives a connection refused. (It does the same if I try to list more than one sub-domain /location in the default file.)

I'm just confirming a suspicion here, and I know I can switch to mydomain.com/service over subdomains, or just buy a dedicated base domain.com. Just want to confirm that is the solution or I'm doing something wrong.

Also: I currently have a couple of subdomain.mydomain.com names that resolve to the same DDNS destination. The problem is they all translate to mattflix.mydomain.com.

- I'd like, if possible, to allow only mattflix.mydomain.com to resolve, and have any other valid subdomain.mydomain.com either time out or error. I tried but just couldn't get it. I played around with the server listen block, and I suspect I'm just missing something in there?

Port Forwarding / LetsEncrypt Docker Container Setup

Firewall port forwarding is standard: External --> Internal 80 ---> 81 | 443 --> 444

/letsencrypt/nginx/site-confs/default

    ## Source: https://github.com/1activegeek/nginx-config-collection/blob/master/apps/ombi/ombi.md
    server {
        listen 80;
        server_name mattflix.mydomain.com;
        return 301 https://$host$request_uri;
    }

    server {
        listen 443 ssl;
        server_name mattflix.mydomain.com;

        ## Set root directory & index
        root /config/www;
        index index.html index.htm index.php;

        ## Turn off client checking of client request body size
        client_max_body_size 0;

        ## Custom error pages
        error_page 400 401 402 403 404 /error.php?error=$status;

        #SSL settings
        include /config/nginx/strong-ssl.conf;

        location / {
            ## Default <port> is 5000, adjust if necessary
            proxy_pass http://myipaddress:38084;

            ## Using a single include file for commonly used settings
            include /config/nginx/proxy.conf;

            proxy_cache_bypass $http_upgrade;
            proxy_set_header Connection keep-alive;
            proxy_set_header Upgrade $http_upgrade;
            proxy_set_header X-Forwarded-Host $server_name;
            proxy_set_header X-Forwarded-Ssl on;
        }

        ## Required for Ombi 3.0.2517+
        if ($http_referer ~* /) {
            rewrite ^/dist/([0-9\d*]).js /dist/$1.js last;
        }
    }

/letsencrypt/nginx/strong-ssl.conf

    ## Source: https://github.com/1activegeek/nginx-config-collection
    ## READ THE COMMENT ON add_header X-Frame-Options AND add_header Content-Security-Policy IF YOU USE THIS ON A SUBDOMAIN YOU WANT TO IFRAME!

    ## Certificates from LE container placement
    ssl_certificate /config/keys/letsencrypt/fullchain.pem;
    ssl_certificate_key /config/keys/letsencrypt/privkey.pem;

    ## Strong Security recommended settings per cipherli.st
    ssl_dhparam /config/nginx/dhparams.pem; # Bit value: 4096
    ssl_ciphers ECDHE-RSA-AES256-GCM-SHA512:DHE-RSA-AES256-GCM-SHA512:ECDHE-RSA-AES256-GCM-SHA384:DHE-RSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-SHA384;
    ssl_ecdh_curve secp384r1; # Requires nginx >= 1.1.0
    ssl_session_timeout 10m;

    ## Settings to add strong security profile (A+ on securityheaders.io/ssllabs.com)
    add_header Strict-Transport-Security "max-age=63072000; includeSubDomains; preload";
    add_header X-Content-Type-Options nosniff;
    add_header X-XSS-Protection "1; mode=block";
    #SET THIS TO none IF YOU DONT WANT GOOGLE TO INDEX YOU SITE!
    add_header X-Robots-Tag none;
    ## Use *.domain.com, not *.sub.domain.com when using this on a sub-domain that you want to iframe!
    add_header Content-Security-Policy "frame-ancestors https://*.$server_name https://$server_name";
    ## Use *.domain.com, not *.sub.domain.com when using this on a sub-domain that you want to iframe!
    add_header X-Frame-Options "ALLOW-FROM https://*.$server_name" always;
    add_header Referrer-Policy "strict-origin-when-cross-origin";
    proxy_cookie_path / "/; HTTPOnly; Secure";
    more_set_headers "Server: Classified";
    more_clear_headers 'X-Powered-By';
    #ONLY FOR TESTING!!! READ THIS!: https://scotthelme.co.uk/a-new-security-header-expect-ct/
    add_header Expect-CT max-age=0,report-uri="https://domain.report-uri.com/r/d/ct/reportOnly";

/letsencrypt/nginx/proxy.conf

    client_max_body_size 10m;
    client_body_buffer_size 128k;

    #Timeout if the real server is dead
    proxy_next_upstream error timeout invalid_header http_500 http_502 http_503;

    # Advanced Proxy Config
    send_timeout 5m;
    proxy_read_timeout 240;
    proxy_send_timeout 240;
    proxy_connect_timeout 240;

    # Basic Proxy Config
    proxy_set_header Host $host:$server_port;
    proxy_set_header X-Real-IP $remote_addr;
    proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
    proxy_set_header X-Forwarded-Proto https;
    proxy_redirect http:// $scheme://;
    proxy_http_version 1.1;
    proxy_set_header Connection "";
    proxy_cache_bypass $cookie_session;
    proxy_no_cache $cookie_session;
    proxy_buffers 32 4k;

This all gets me an A+ on securityheaders.io which is great and all, but what does that actually do for me?

I'm concerned about brute force, DDOS, and some thug trying to muscle into my server.

I've been trying to read up on fail2ban and its implementation. However, from what I've found, because I'm using this for Ombi, and it authenticates off of the user's Plex account, this bypasses fail2ban?

I've tried monitoring the fail2ban status however I get this error when I try to check the status:

    docker exec -it LetsEncrypt bash
    root@d3fc185ce9d5:/$ fail2ban-client -i
    Fail2Ban v0.10.1 reads log file that contains password failure report and bans the corresponding IP addresses using firewall rules.

    fail2ban> status nginx-http-auth
    Failed to access socket path: /var/run/fail2ban/fail2ban.sock. Is fail2ban running?
    fail2ban>

I know the first time I ran the command, everything reported, though it reported no activity.

/letsencrypt/fail2ban/jail.local

    # This is the custom version of the jail.conf for fail2ban
    # Feel free to modify this and add additional filters
    # Then you can drop the new filter conf files into the fail2ban-filters
    # folder and restart the container

    [DEFAULT]
    #
    ##"bantime" is the number of seconds that a host is banned.
    bantime = 259200
    #
    ## A host is banned if it has generated "maxretry" during the last "findtime" seconds.
    findtime = 600
    #
    ## "maxretry" is the number of failures before a host get banned.
    maxretry = 3

    [ssh]
    enabled = false

    [nginx-http-auth]
    enabled = true
    filter = nginx-http-auth
    port = http,https
    logpath = /config/log/nginx/error.log
    # ignoreip = myipaddress.0/24

    [nginx-badbots]
    enabled = true
    port = http,https
    filter = nginx-badbots
    logpath = /config/log/nginx/access.log
    maxretry = 2

    [nginx-botsearch]
    enabled = true
    port = http,https
    filter = nginx-botsearch
    logpath = /config/log/nginx/access.log

    ## Unbanning
    #
    ## SSH into the container with:
    # docker exec -it LetsEncrypt bash
    #
    ## Enter fail2ban interactive mode:
    # fail2ban-client -i
    #
    ## Check the status of the jail:
    # status nginx-http-auth
    #
    ## Unban with:
    # set nginx-http-auth unbanip 77.16.40.104
    #
    ## If you already know the IP you want to unban you can just type this:
    # docker exec -it letsencrypt fail2ban-client set nginx-http-auth unbanip 77.16.40.104

I know there's no such thing as perfectly 100% secure, but with ports 80 & 443 being open, and only relying on Ombi/Plex password security, I just feel like my ass is hanging in the wind.

Any guidance would be most appreciated.
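For reference, the [DEFAULT] values in the jail.local above work out to a 3-day ban after 3 failures inside a 10-minute window. Below is a minimal Python sketch of that counting rule. It is a simplified model for illustration only: the class name `BanTracker` is hypothetical, and it is not how fail2ban is actually implemented. It also only counts failures that make it into the nginx logs, so logins handled by Ombi/Plex themselves would never reach it.

```python
from collections import defaultdict

BANTIME = 259200   # seconds a host stays banned (3 days)
FINDTIME = 600     # look-back window in seconds (10 minutes)
MAXRETRY = 3       # failures inside the window that trigger a ban


class BanTracker:
    """Simplified sliding-window model; not fail2ban's real implementation."""

    def __init__(self, bantime=BANTIME, findtime=FINDTIME, maxretry=MAXRETRY):
        self.bantime = bantime
        self.findtime = findtime
        self.maxretry = maxretry
        self.failures = defaultdict(list)  # ip -> timestamps of recent failures
        self.banned_until = {}             # ip -> time the ban expires

    def record_failure(self, ip, now):
        """Register a failed login at time `now`; return True if ip is banned."""
        # Keep only failures that are still inside the findtime window.
        recent = [t for t in self.failures[ip] if now - t < self.findtime]
        recent.append(now)
        self.failures[ip] = recent
        if len(recent) >= self.maxretry:
            self.banned_until[ip] = now + self.bantime
        return self.is_banned(ip, now)

    def is_banned(self, ip, now):
        """True while the ban for `ip` has not yet expired."""
        return now < self.banned_until.get(ip, 0)


tracker = BanTracker()
for t in (0, 200, 400):  # three failures within ten minutes
    banned = tracker.record_failure("198.51.100.7", t)
print(banned)                                             # True
print(tracker.is_banned("198.51.100.7", 400 + BANTIME))   # False, ban expired
```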

-

4 hours ago, NotYetRated said:

Do those support TRIM for SSD's? I've got 3 SSD's in the system currently...

Hmm.. I'm not sure.

I'm finalizing setup of my newer machine now, so I can test it out.

I planned to run Microsoft Hyper-V Core, with unRAID as a VM inside, so I'm not sure if that will affect the results or not...

I should know more this weekend.

-

7 hours ago, jonathanm said:

Not using only one container. But, because of the way docker works, you could spin up a second delugevpn container, name it differently, change the host ports to something unique, point it to a different endpoint, and use that privoxy port. Dockers share resources, so it would take practically no resources to operate. You could ignore the second instance of deluge if you wanted, or use it to split your torrent load to the different endpoints also.

Intriguing: Is it possible to make Sonarr split its requests to multiple DelugeVPN containers?

Apologies if this has been asked and answered here, I skipped right to the end today.

-

14 hours ago, GilbN said:

I have some guides on https://technicalramblings.com

https://technicalramblings.com/blog/how-to-setup-organizr-with-letsencrypt-on-unraid/

It's for Organizr but has lots of sub directory examples.

If you want live support I recommend checking out the Organizr discord. https://organizr.us/discord

We help people from scratch getting all set up with a domain and reverse proxy everything everyday.

Thank you so much for your offer, it's a delightful change from the normal response I find on the infrequent posts for help I place here in the forums.

I forced my way through 16 hours of reading posts (10 invested before my original post) here in the forums, plus trial and error, after the initial response to my inquiry basically repeated the same information I had asked about in my post. It's always frustrating learning new things with unRAID. Spending countless hours scouring threads that are hundreds of pages long, to finally piece together an understanding of a site-conf file (and change a Cloudflare SSL setting I've still not seen mentioned), is just ... nerve-wracking. Especially looking at it now in a completed, working form, and seeing it's literally a 10-minute job. If only a quick reference of working files were stickied at the top of a thread, instead of needing to be pieced together from 1800 posts. (Many examples I found were conflicting, and it took a lot of time to find the correct syntax.) And I know I've looked before, but am I not seeing where a search-thread or discussion option is? I don't even find it in the advanced search. Searching the entire forum for a specific item is ... futile.

Anyway, I was able to get to the point of a 502 error, and from there I backtracked to one of these posts I'd read having the same issue, and resolving. (setting proxy_pass to http and not https, again conflicting posts in this thread mostly showing https)

I own a business-to-business consulting firm, and I really would love to start offering the benefits of unRAID to our clientele, but the support system is just infuriating. I just can't risk the time that could potentially be lost hunting for troubleshooting answers in the bottomless abyss of these forums.

Disclaimer to those that might think I'm being too harsh: No, I'm not a Linux expert. Yes, I know what the search button does, and I typically don't even post until I've worn the thing out. Yes, I HAVE learned many things from this forum. Yes, I understand every setup is different, and with different variables.

Though I'm not an expert in all things I.T. I have enough natural talent in the field that I mostly piece things together by deciphering working examples.

I'm sorry for the rant. I guess I'm just very analytical, and wish there was a better "learn on your own" support system for unRAID, or at least a more organized way of finding key information. Time is quite valuable.

Thanks again for your offer of assistance.

(It's late, and been a long day, I'm sure there's a few typos in this post, my apologies.)

-

Between my two servers I have 6 HBA controllers:

In each system I have one Intel RS2WC80 and two Dell PERC H310s. All are LSI 9211-8i controllers flashed to IT mode, and working great at full 6Gb/s transfer speeds.

The Dells are a little more of a pain to flash to IT, but still relatively straightforward. (It's dealing with the UEFI BIOS that takes the most time.)

The H310's can be picked up on eBay fairly cheap now ~$50.00 or less..

EDIT: Just re-read your post, and realized you're looking for PCI. Yeah ... do they even list those as antiques anymore?

-

On 3/12/2018 at 12:54 PM, NotYetRated said:

Anyone here using a ASrock EP2C602? I am having some difficulty getting full speed out of my SSD's on any of the 14 ports. They are topping out at around 225MB/s. If I throw them on my LSI 9220-8i, I get the full ~550MB/s, however lose TRIM support.

I assume you're using the ASRock - EP2C602-4L/D16, if so I own two systems utilizing this board.

Of the 14 SATA ports onboard, only 2 are full SATA III, (at least in unRAID, as the Marvell controlled ports have an issue in unRAID, I can't remember specifically what it was).

I run 3 LSI 9211-8i's in IT mode, so all but my 2 cache SSD's are on the controllers.

-

-

Dynamix - V6 Plugins

in Plugin Support

Posted

I did try this, no fix. However interestingly enough, two reboots later, and it's back to what it was.