
Posts: 224


Posts posted by bobokun

-


I got two CRC errors on the new drive I was preclearing (/dev/sdd), and then the preclear failed on Post-Read. I'm not sure what I should do. Should I try reseating the SATA cables and preclearing the drive again? Here are the preclear log and new diagnostics:

preclear_disk_JEHBR8AN_21792.txt

unnas-diagnostics-20190924-2118.zip

-

I added a new hard drive and was preclearing it (I'm planning on replacing my parity drive).

I wanted to move all the contents of disk4 to disk5 because disk4 was showing very slow read/write speeds (based on the DiskSpeed docker).

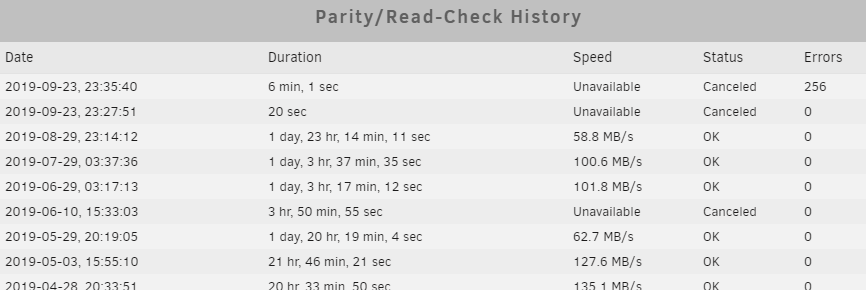

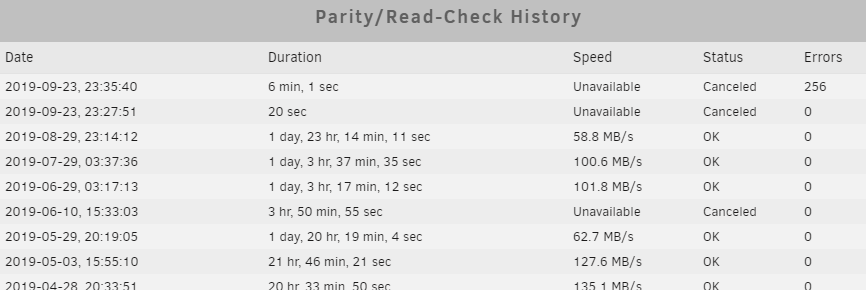

I was using the unBALANCE plugin to move everything from disk4 to disk5, and it was moving at only about 1-3 MB/s. After waiting for 30 minutes, I got a bunch of notifications saying that the array has errors ("Array has 6 disks with read errors") and that disk5 had an error ("Disk dsbl" alert). My Unraid GUI became unresponsive and I couldn't SSH into the server either, so I force-rebooted it, since that was my only option. When it came back up, disk5 had an X on it indicating an error. I tried to rebuild parity, but the server froze again, and right before it froze I saw a bunch of messages saying "xfs metadata i/o error xfs_trans_read_buf_map unraid". I rebooted, and now the X is back on disk5 and my last parity check shows errors. I'm performing a Read-Check now. I'm scared to do anything else, including preclearing my new drive, and I'm not sure what is going on.

Can you please look over my logs? Thank you.

-

19 minutes ago, jonathanm said:

Yeah, don't do that. The whole point of this plugin is to keep tabs on seldom used archival type files, files that should never change. Active files should not even be monitored, it's a waste of resources.

I understand, and that's why I've added them to my excluded folders (as shown in the screenshot), but they're still being monitored. I must be configuring something wrong, but I'm not sure how to fix it.

-

I've been trying to set up the File Integrity plugin, but I'm not sure whether I'm doing it correctly. I keep getting BLAKE2 hash key mismatches on my libvirt.img and on a lot of my Nextcloud files. For example:

BLAKE2 hash key mismatch (updated), /mnt/disk1/nextcloud/.htaccess was modified

BLAKE2 hash key mismatch (updated), /mnt/disk1/nextcloud/appdata_ocdoy1vwt49l/appstore/apps.json was modified

BLAKE2 hash key mismatch (updated), /mnt/disk1/nextcloud/appdata_ocdoy1vwt49l/appstore/categories.json was modified

BLAKE2 hash key mismatch (updated), /mnt/disk1/nextcloud/appdata_ocdoy1vwt49l/appstore/future-apps.json was modified

BLAKE2 hash key mismatch (updated), /mnt/disk5/vm backup/libvirt.img was modified

Are these true errors? I have these files excluded and I keep clearing them, but every time the integrity check runs it finds them mismatched.
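For context, a hash-based integrity check can only compare a file's current digest with the one it stored earlier, so any file an application keeps rewriting (Nextcloud's appstore JSON caches, a VM's libvirt.img) will report a mismatch on every run. A minimal sketch of that comparison, using Python's `hashlib.blake2b` as a stand-in for whatever tool the plugin uses internally; the temporary file and its contents are hypothetical:

```python
import hashlib
import os
import tempfile


def blake2_file(path, chunk_size=1 << 20):
    """Return the hex BLAKE2b digest of a file, reading it in chunks."""
    h = hashlib.blake2b()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def demo():
    """Hash a file, let a (hypothetical) app rewrite it, then hash it again."""
    fd, path = tempfile.mkstemp()
    os.close(fd)
    try:
        with open(path, "wb") as f:
            f.write(b'{"apps": []}')
        stored = blake2_file(path)            # digest recorded at "build" time
        with open(path, "wb") as f:
            f.write(b'{"apps": ["viewer"]}')  # the app updates its cache file
        current = blake2_file(path)           # digest at "verify" time
        return stored, current
    finally:
        os.remove(path)


if __name__ == "__main__":
    stored, current = demo()
    print("mismatch" if stored != current else "match")
```

A mismatch on a file like this means it changed, not that it was corrupted, which is why such files are better left out of the monitored set entirely.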

Here are my settings:

-

I've been using Unraid for about 2 years now, and I love how easy it makes setting up Docker containers and getting everything running! Happy Birthday, Unraid!!

-

5 minutes ago, bastl said:

Deleting "/config/www/nextcloud/apps/viewer/cypress" and "/config/www/nextcloud/apps/viewer/cypress.json" as mentioned on github fixed it for me.

https://github.com/nextcloud/server/issues/16229

Made a backup of the folder and the json file, just in case.

Deleting those files/folders worked for me as well. Thanks!

-


5 minutes ago, bobbintb said:

My guess would be you are using the Python 2 version of xmlrpclib and rutorrent is using version 3 of xmlrpclib. I could be wrong. I just stick to Python 2.7, so I am not familiar with cross-version issues.

How did you get it to run on Python 2.7 in the Docker container? As we saw, the container only has Python 3; Python 2 isn't installed. Did you install it manually inside the container to get it working? Since we're both running the same container, I'd assume it should work for me too if you managed to get it working.
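For reference, the module in question is named differently on each major version: Python 2's `xmlrpclib` became `xmlrpc.client` in Python 3. A minimal sketch of an import that works under either interpreter, assuming the script only relies on names both versions share, such as `ServerProxy` and `Fault`; `describe` is a hypothetical helper that just reports which one was loaded:

```python
import sys

# Python 2 ships the XML-RPC client as `xmlrpclib`; Python 3 renamed it to
# `xmlrpc.client`. Try the old name first, then fall back to the new one.
try:
    import xmlrpclib  # Python 2.x
except ImportError:
    import xmlrpc.client as xmlrpclib  # Python 3.x


def describe():
    """Report the running interpreter version and the module actually loaded."""
    return "Python %d.%d using %s" % (
        sys.version_info[0], sys.version_info[1], xmlrpclib.__name__)


if __name__ == "__main__":
    print(describe())
```

This only papers over the module name; other Python 2 vs 3 differences in a script (such as `print` statements) would still need fixing separately.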

-

18 hours ago, bobbintb said:

No, you should save the script outside the docker container. There really is no reason for the average user to be messing with the internals of the container. That's what the shared folder that saves config files is for. The docker container is meant to be immutable so that it can be easily deployed to other environments or wiped out and recreated.

Looking a little closer at the script, a couple of things come to mind. Does the rutorrent container even have Python installed? I'm away from my server so I can't check. I'm fairly certain it does but I'm not 100% sure. If it does, it needs to be the same version, or at least from the 2.x family. Python 2 and 3 are vastly different. I'm fairly certain the container has Python 2.7 installed, but I'm not able to check right now. If Python isn't installed in the Docker container, installing it with NerdTools won't do anything, because NerdTools has nothing to do with the Docker container.

The other thing is the permissions on the script. It looks like it is importing xmlrpclib, and if it doesn't have permission to do so, it may cause issues.

Lastly, how did you copy the script? One common issue when dealing with scripts is creating the script in Windows and copying it to Linux. Windows handles line endings differently than Linux. Linux uses what is called a "line feed" for new lines in text files, and Windows uses a "carriage return" and "line feed". So the script may look exactly the same to the naked eye but break because of a line ending it chokes on. If you copied it in Windows, pasted it into Notepad, and saved it to your server, you should make sure to remove the carriage returns. This may be information you already know, but I'm not sure of your skill level so I thought I'd mention it. If that's the case, you can Google how to remove the carriage returns (notepad remove carriage return). You'll probably need to download another text editor, because I don't think Windows Notepad can do this. That would actually be the first thing I would check.
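As an alternative to switching editors, a minimal Python 3 sketch of the carriage-return fix described above: it rewrites a file in place, turning each CRLF into a plain LF. One visible symptom worth knowing is that a shebang saved with CRLF becomes `#!/usr/bin/python\r`, so the system looks for an interpreter literally named `python\r`. The function names here are hypothetical:

```python
import sys


def strip_carriage_returns(data):
    """Convert Windows CRLF (\\r\\n) line endings to Unix LF (\\n)."""
    return data.replace(b"\r\n", b"\n")


def fix_file(path):
    """Rewrite `path` in place with LF endings; return True if anything changed."""
    with open(path, "rb") as f:
        original = f.read()
    fixed = strip_carriage_returns(original)
    if fixed != original:
        with open(path, "wb") as f:
            f.write(fixed)
    return fixed != original


if __name__ == "__main__":
    # Usage: python3 fix_line_endings.py /path/to/script [more paths...]
    for p in sys.argv[1:]:
        print(p, "fixed" if fix_file(p) else "already LF")
```

It works on raw bytes so the file's text encoding doesn't matter, and it only touches the file when a change is actually needed.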

I do have the script saved outside the container. I also checked, and the Python installed in the Docker container is 3.6.8, not 2.7. I have the same Docker container installed; I think the traceback shows Python 2.7 because I'm running the script from Unraid rather than inside the container. On Unraid itself I have Python 2.7 installed, and the script connects to ruTorrent using the server_url variable. It could be a permissions issue, but I'm not sure how to give the script the permissions it needs. When I copied the script, I pasted it directly into Unraid using the User Scripts plugin, where I can edit it directly.

When I run the script in a terminal window on Unraid (not in the Docker container), I see the full error message, whereas previously it was truncated:

Traceback (most recent call last):

File "./script", line 18, in <module>

torrent_name = rtorrent.d.get_name(torrent)

File "/usr/lib64/python2.7/xmlrpclib.py", line 1243, in __call__

return self.__send(self.__name, args)

File "/usr/lib64/python2.7/xmlrpclib.py", line 1602, in __request

verbose=self.__verbose

File "/usr/lib64/python2.7/xmlrpclib.py", line 1283, in request

return self.single_request(host, handler, request_body, verbose)

File "/usr/lib64/python2.7/xmlrpclib.py", line 1316, in single_request

return self.parse_response(response)

File "/usr/lib64/python2.7/xmlrpclib.py", line 1493, in parse_response

return u.close()

File "/usr/lib64/python2.7/xmlrpclib.py", line 800, in close

raise Fault(**self._stack[0])

xmlrpclib.Fault: <Fault -506: "Method 'd.get_name' not defined">

-

Just now, bobbintb said:

What I mean is maybe it somehow got corrupted or is incomplete. Since it's a docker, it would have nothing to do with nerdtools or any of the packages it installs. You shouldn't have to do anything outside of docker. Really, the whole point of docker is to be self contained and independent and just work out of the box. Have you tried reinstalling the docker image? Maybe something went wrong when it pulled the docker image.

Oh, should I be saving this script inside the Docker container? I have the script saved outside, and I was using the User Scripts plugin to run it. Maybe that's why I'm seeing the issue? If you saved the script in the Docker container, how did you schedule a cron job inside the container to run it?

-

On 6/10/2019 at 7:36 PM, jbartlett said:

It's detecting an error of some kind. The number breaks down to "Avg Speed|Min Read Speed|Max Read Speed", so this particular scenario should not be possible.

Please click the "Create Debug File" link at the bottom of the page and then click on "Create Debug File".

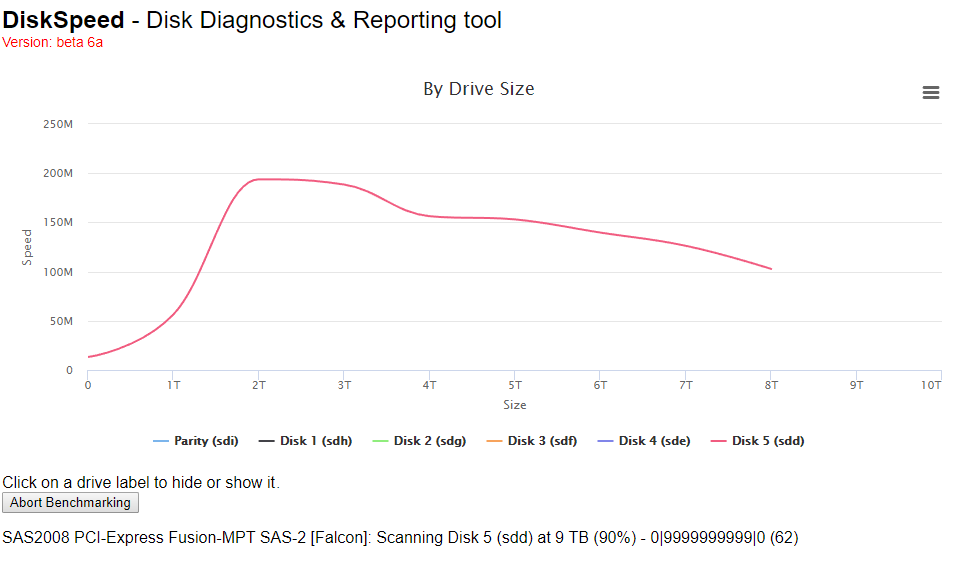

I realized what I did wrong: I forgot to rescan the controllers after moving the SATA cable from the SAS2008 controller to my motherboard, which is why I was getting those errors when benchmarking.

-


Are these all Windows tools? Is there a way to run them using Docker containers or plugins, or should I run them in a Windows VM?

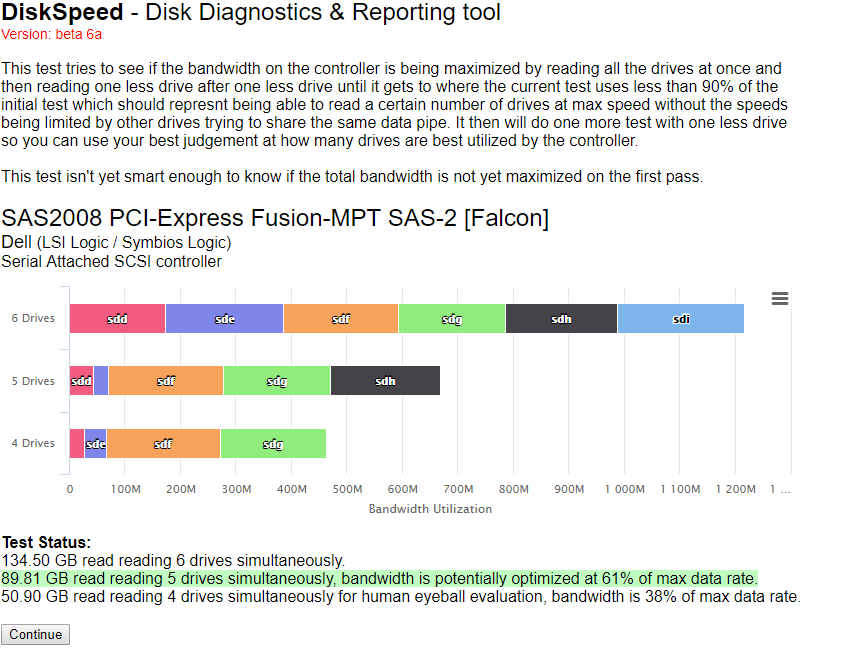

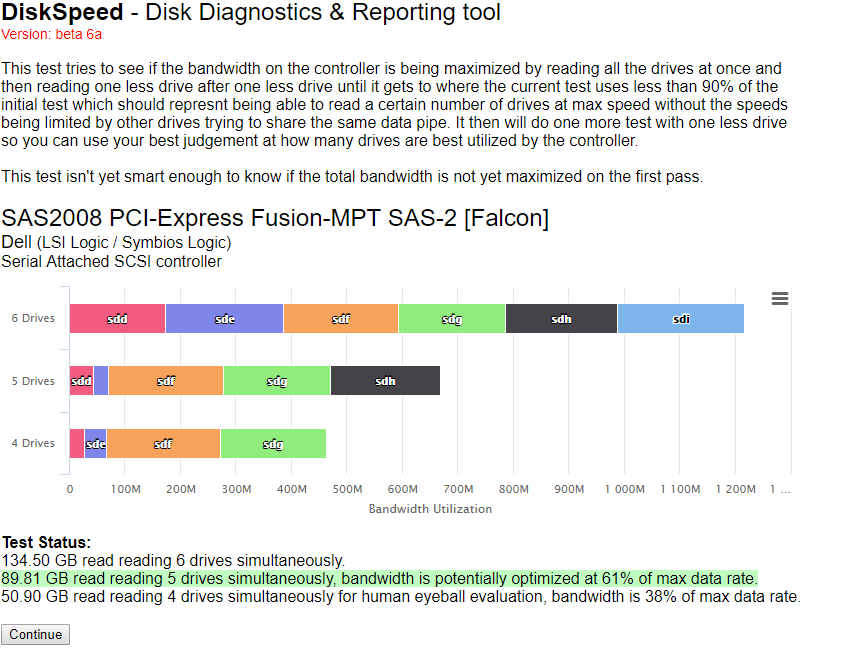

I've been using disk4 since before I installed my Dell H310, and even with the H310 installed it was running fast and parity checks were fine. The issues started when I added two new drives (10TB x 2). I'm wondering if there is an issue with my H310 controller, since it is running 6 drives (2 SAS to SATA cables: 4 SATA connections on one and 2 on the other).

-

36 minutes ago, jbartlett said:

It's detecting an error of some kind. The number breaks down to "Avg Speed|Min Read Speed|Max Read Speed", so this particular scenario should not be possible.

Please click the "Create Debug File" link at the bottom of the page and then click on "Create Debug File".

Sent you a PM with the debug file. Thanks!

-

3 hours ago, jbartlett said:

That tells me that an external process wasn't causing the issue. Try replacing the cables and trying different ports. If it keeps happening, your only option is to replace the drives.

I wouldn't use shucked drives for anything critical. They're not the highest quality in my opinion.

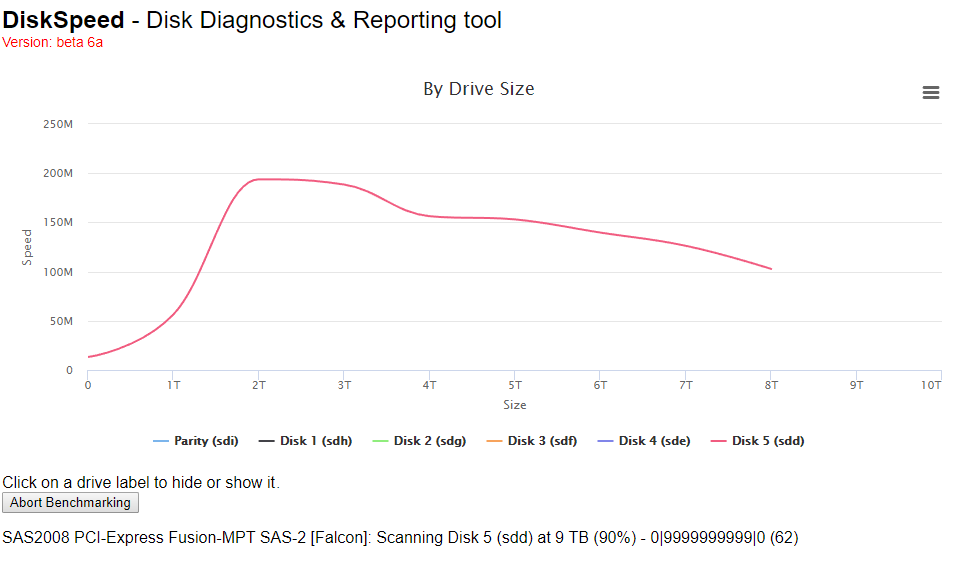

Disk4 isn't a shucked drive. I tried replacing the SATA cables for disk4 and parity and connecting them directly to the motherboard instead of the Dell H310 controller, but now when I run DiskSpeed it hangs on disk5 with the message below. Now I'm wondering whether the issue is my H310 card or my SAS to SATA cables.

SAS2008 PCI-Express Fusion-MPT SAS-2 [Falcon]: Scanning Disk 5 (sdd) at 9 TB (90%) - 0|9999999999|0 (64)

Not sure if this helps?
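Going by the explanation quoted earlier, the value in that status line is `Avg Speed|Min Read Speed|Max Read Speed`. A small sketch that splits it and flags combinations real reads can't produce (a minimum above the maximum, or an average outside the min/max range). The function names are hypothetical, the trailing `(64)` is left alone because its meaning isn't given, and this is not how DiskSpeed itself validates results:

```python
def parse_speed_triplet(value):
    """Split an 'avg|min|max' string into three integers."""
    avg, lowest, highest = (int(part) for part in value.split("|"))
    return avg, lowest, highest


def is_plausible(avg, lowest, highest):
    """Real read results must satisfy 0 < min <= avg <= max."""
    return 0 < lowest <= avg <= highest


if __name__ == "__main__":
    # Value copied from the DiskSpeed status line above.
    avg, lowest, highest = parse_speed_triplet("0|9999999999|0")
    print(avg, lowest, highest, is_plausible(avg, lowest, highest))
```

Here the minimum (9999999999) is far above both the average and the maximum (0), which matches the "should not be possible" description: the scan never recorded a usable reading for that position.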

-

On 5/31/2019 at 12:03 PM, bobbintb said:

I think it's more likely the fault at the bottom. Maybe xmlrpclib didn't import correctly.

How do I fix xmlrpclib? Is it something that needs to be downloaded outside the Docker container? I have the NerdTools plugin installed with python-2.7.15-x86_64-3.txz. Is there anything else I need to install?

-

Here are new diagnostics taken during a parity check. I captured them when it slowed down to 2.7 MB/s.
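For scale, some rough arithmetic: a parity check has to read the entire 10 TB parity drive, so a check running at 2.7 MB/s throughout would take weeks. A sketch assuming a constant speed and decimal units (1 TB = 10^12 bytes, 1 MB = 10^6 bytes). Real checks speed up and slow down across the platters, and the 150 MB/s comparison figure is a hypothetical healthy speed, not a measurement from these drives:

```python
SECONDS_PER_DAY = 86400


def read_time_days(capacity_tb, speed_mb_s):
    """Days needed to read `capacity_tb` decimal terabytes at a constant
    `speed_mb_s` decimal megabytes per second."""
    seconds = capacity_tb * 1e12 / (speed_mb_s * 1e6)
    return seconds / SECONDS_PER_DAY


if __name__ == "__main__":
    print("at 2.7 MB/s: %.1f days" % read_time_days(10, 2.7))
    print("at 150 MB/s (hypothetical): %.1f hours" % (read_time_days(10, 150) * 24))
```

That works out to roughly 42.9 days at 2.7 MB/s versus about 18.5 hours at 150 MB/s, so even short stretches at the slow speed add noticeably to the total time.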

-

On 5/30/2019 at 4:35 PM, jbartlett said:

Have you run more than one benchmark, and are the drops repeatable?

Yes, I tried running it multiple times and the outcome is the same.

-

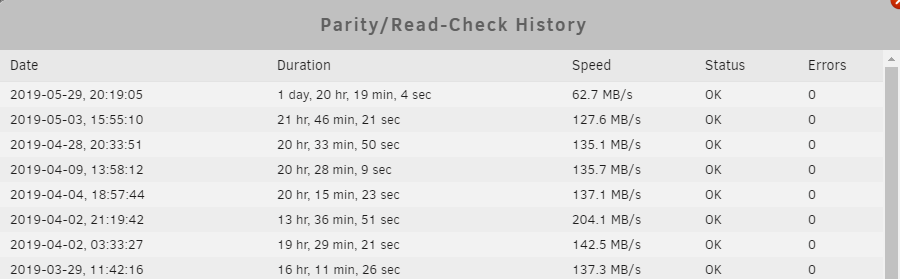

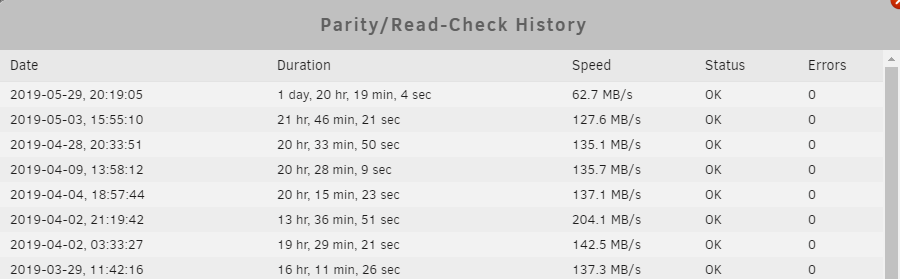

Hi, I recently had a parity check that took a lot longer than usual, almost double the normal time. I ran a disk benchmark and found that the parity drive and disk4 are really slow, but their SMART reports seem fine. I'm not sure what is causing the slow parity check. Please see the attached diagnostics.

-

4 minutes ago, johnnie.black said:

Post SMART reports for parity and disk4.

Please see attached:

HGST_HDN728080ALE604_R6GS94LY-20190530-1805 disk4 (sde).txt

WDC_WD100EMAZ-00WJTA0_JEGW7S5N-20190530-1805 parity (sdi).txt

-

I recently ran a benchmark after seeing my parity check take a lot longer than normal. All my drives have been precleared and are less than a year old. My parity drive in particular is less than 2 months old, and it's shucked, so I can't simply RMA it. Is there something I can do? I'm not sure why the speeds suddenly drop so much.

-

On 5/24/2019 at 1:54 PM, bobbintb said:

I haven't used that script but I've used curl in a bash script to send those same commands to it and it deletes it and removes it. What is the error you are getting?

Traceback (most recent call last):

File "/tmp/user.scripts/tmpScripts/rtorrent Cleanup/script", line 18, in <module>

torrent_tracker_status = rtorrent.d.get_message(torrent)

File "/usr/lib64/python2.7/xmlrpclib.py", line 1243, in __call__

return self.__send(self.__name, args)

File "/usr/lib64/python2.7/xmlrpclib.py", line 1602, in __request

verbose=self.__verbose

File "/usr/lib64/python2.7/xmlrpclib.py", line 1283, in request

return self.single_request(host, handler, request_body, verbose)

File "/usr/lib64/python2.7/xmlrpclib.py", line 1316, in single_request

return self.parse_response(response)

File "/usr/lib64/python2.7/xmlrpclib.py", line 1493, in parse_response

return u.close()

File "/usr/lib64/python2.7/xmlrpclib.py", line 800, in close

raise Fault(**self._stack[0])

xmlrpclib.Fault:

This is the error I'm getting. I think the problem is specifically the line "torrent_tracker_status = rtorrent.d.get_message(torrent)".
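The fault text is cut off in this paste, but the full traceback in the post above ends in `Fault -506: "Method 'd.get_name' not defined"`, meaning rTorrent doesn't recognize the command name at all. Newer rTorrent releases have dropped many of the old `get_`-prefixed aliases, so `d.get_name` and `d.get_message` are exposed as `d.name` and `d.message` there. A minimal sketch, assuming the container runs such a release, that tries the new name first and falls back to the legacy one. `call_with_fallback` and `FakeRTorrent` are hypothetical helpers for illustration (the fake lets the example run without a server) and are not part of the original script:

```python
try:
    import xmlrpclib  # Python 2.7, as used in the traceback above
except ImportError:
    import xmlrpc.client as xmlrpclib  # Python 3

METHOD_NOT_DEFINED = -506  # fault code reported in the traceback


def call_with_fallback(proxy, new_name, old_name, *args):
    """Call `new_name` on an XML-RPC proxy; if the server reports it as
    undefined (fault -506), retry with the legacy `old_name`."""
    for name in (new_name, old_name):
        try:
            return getattr(proxy, name)(*args)
        except xmlrpclib.Fault as fault:
            if fault.faultCode != METHOD_NOT_DEFINED:
                raise  # a different error: don't hide it
    raise xmlrpclib.Fault(METHOD_NOT_DEFINED,
                          "neither %r nor %r is defined" % (new_name, old_name))


class FakeRTorrent(object):
    """Offline stand-in for a newer rTorrent: knows d.message, not d.get_message."""

    def __getattr__(self, name):
        def method(*args):
            if name == "d.message":
                return "example status"  # placeholder, not real tracker output
            raise xmlrpclib.Fault(METHOD_NOT_DEFINED,
                                  "Method '%s' not defined" % name)
        return method


if __name__ == "__main__":
    # With a real server this would be xmlrpclib.ServerProxy(server_url),
    # using the server_url variable the script already defines.
    proxy = FakeRTorrent()
    print(call_with_fallback(proxy, "d.message", "d.get_message", "HASH"))
```

`getattr(proxy, "d.message")` works on a real `ServerProxy` as well, because the proxy turns any attribute name into a remote method call. Keeping the legacy name as a fallback lets the same script run against older and newer rTorrent builds.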

-

15 hours ago, bobbintb said:

I used the same port as the webui, 90 in my case.

I tried that and I'm getting errors. Are you using the User Scripts plugin to execute the script? At the beginning of the script, did you put #!/usr/bin/python? I'm wondering if there is anything else I missed.

What version of python are you using?

-

Disk 5 Disabled After Unclean Shutdown

in General Support


It was plugged into a mini-SAS to 4x SATA breakout cable. Should I replace the whole mini-SAS to 4-port cable?