Posts posted by Andiroo2

-

-

I’ve uninstalled a few plugins and stopped my VM…things have stabilized for now. I will see if I can get this through the night and if so, will look at the VM config. I wonder if there is some VFIO call or conflict that is causing issues.

More to come…

-

Latest Unraid version installed, moved from a stable system on 10th gen Intel to a new 13th gen Intel. New CPU/MB/RAM, Memtest86 passed without issues.

Having issues keeping the system online for more than a few hours. Everything works when it's up, but then it just dies. I have syslog server activated with logs attached, and diagnostics as well. Looking for where to start...thanks!

192.168.1.10 syslog.txt

-

Love my R5. AMA.

-

OK I increased my ARC to 1/4 of RAM instead of the default 1/8. Will see what happens.
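For anyone wanting to try the same change, here is a minimal sketch of the arithmetic. The helper names `mem_total_kib` and `arc_bytes_for_fraction` are made up for illustration. The sketch assumes OpenZFS on Linux, where the ARC ceiling is exposed as the `zfs_arc_max` module parameter (in bytes); how Unraid persists that value across reboots is not covered here.

```shell
# Hypothetical helpers, not part of Unraid or OpenZFS.

# Print MemTotal in KiB from a /proc/meminfo-style file (default: /proc/meminfo).
mem_total_kib() {
    awk '/^MemTotal:/ { print $2 }' "${1:-/proc/meminfo}"
}

# Print the number of bytes in 1/$2 of $1 KiB of RAM.
# $1 = total RAM in KiB, $2 = denominator (4 for a quarter, 8 for an eighth).
arc_bytes_for_fraction() {
    echo $(( $1 * 1024 / $2 ))
}

# Example, not executed here: cap the ARC at a quarter of RAM until the next reboot.
# Assumes the zfs module is loaded and the shell is running as root.
# arc_bytes_for_fraction "$(mem_total_kib)" 4 > /sys/module/zfs/parameters/zfs_arc_max
```

On a 48GB machine this works out to roughly 12GB for a quarter of RAM, versus roughly 6GB for an eighth.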

-

-

Background: I have 5x 2TB NVMe SSDs in a RaidZ1 pool (non encrypted, some datasets have compression and some do not), used as my cache. I'm sitting between 80% and 90% utilization at any given time (8TB usable, ~1TB free).

Moving large files within the pool is very slow compared to when I was running the same drives in a BTRFS Raid1 pool (5TB usable). Moving a 5GB file from folder A to folder B within the cache gives me consistently less than 500MB/s transfer speed (usually much less), while I was seeing around 1.5GB/s on BTRFS.

Not sure what I can check here. Could this be due to the higher pool utilization? I have a 10th gen i7 and 48GB of RAM, and the system isn't taxed. The PCIe bus is 3.0 and all NVMe's have DRAM.

Thanks for your suggestions!

-

Odd issue today...trying to move files off my ZFS cache and...nothing is happening. I've had Mover Tuning for years without issue. I have tried changing a LOT of these settings to see if anything changes and nothing has worked so far. I haven't tried disabling Mover Tuning yet because I don't want to clear the cache pool out at this point.

My Mover Tuning settings:

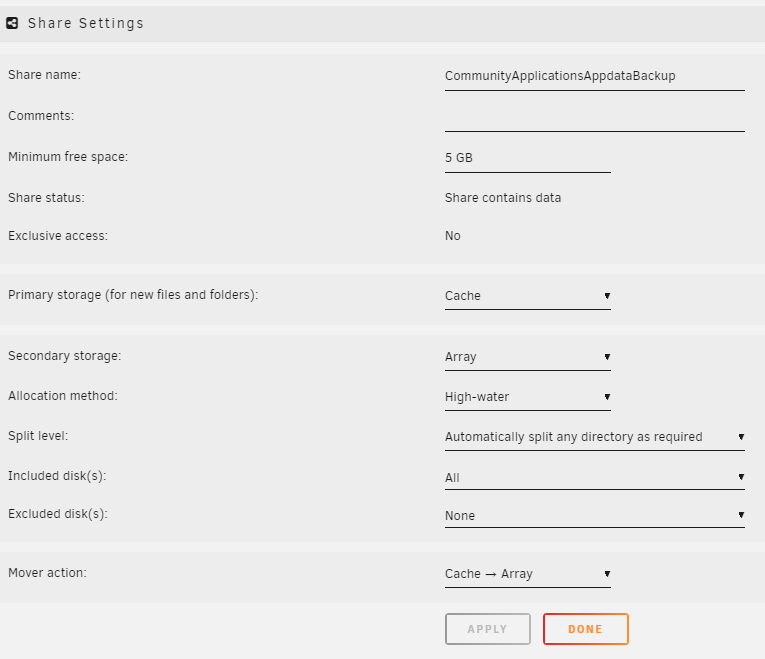

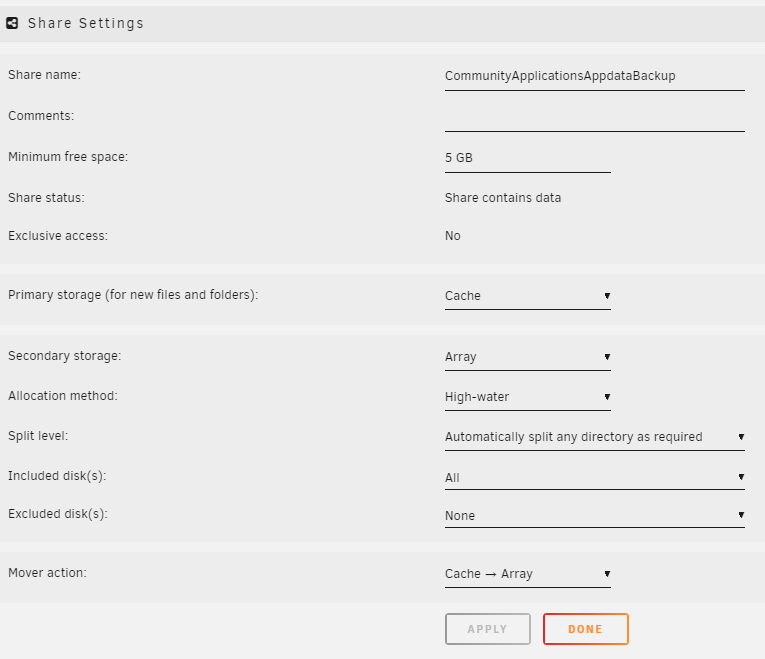

My share overview is attached as well.

If I run the dedicated "move all from this share" in a share's settings, Mover works. When I run mover via Move Now (or via the schedule), the log shows this:

Nov 22 06:43:37 Tower emhttpd: shcmd (206793): /usr/local/sbin/mover |& logger -t move &
Nov 22 06:43:37 Tower root: Starting Mover
Nov 22 06:43:37 Tower root: Forcing turbo write on
Nov 22 06:43:37 Tower root: ionice -c 2 -n 0 nice -n 0 /usr/local/emhttp/plugins/ca.mover.tuning/age_mover start 45 5 0 "/mnt/user/system/Mover_Exclude.txt" "ini" '' '' no 100 '' '' 95
Nov 22 06:43:37 Tower kernel: mdcmd (46): set md_write_method 1

I don't get the ****starting mover*** line in the log.

When I run mover manually from a share's settings page, I get a much nicer looking set of logs:

mvlogger: Log Level: 1
mvlogger: *********************************MOVER -SHARE- START*******************************
mvlogger: Wed Nov 22 05:52:42 EST 2023
mvlogger: Share supplied CommunityApplicationsAppdataBackup
mvlogger: Cache Pool Name: cache
mvlogger: Share Path: /mnt/cache/CommunityApplicationsAppdataBackup
mvlogger: Complete Mover Command: find "/mnt/cache/CommunityApplicationsAppdataBackup" -depth | /usr/local/sbin/move -d 1
file: /mnt/cache/CommunityApplicationsAppdataBackup/ab_20231120_020002-failed/my-telegraf.xml
file: /mnt/cache/CommunityApplicationsAppdataBackup/ab_20231120_020002-failed/my-homebridge.xml
...

Running a find command shows plenty of files older than the specified age that should be caught by the mover:

root@Tower:~# find /mnt/cache/Movies -mtime +45
/mnt/cache/Movies/Movies/BlackBerry (2023)/BlackBerry (2023).eng - 1080p.srt
/mnt/cache/Movies/Movies/BlackBerry (2023)/BlackBerry (2023).eng -.srt
/mnt/cache/Movies/Movies/BlackBerry (2023)/BlackBerry (2023) - 1080p.fr.srt
/mnt/cache/Movies/Movies/BlackBerry (2023)/BlackBerry (2023).eng.HI -.srt
/mnt/cache/Movies/Movies/BlackBerry (2023)/BlackBerry (2023) - 1080p.es.srt
/mnt/cache/Movies/Movies/BlackBerry (2023)/BlackBerry (2023) - 1080p.en.srt
/mnt/cache/Movies/Movies/BlackBerry (2023)/BlackBerry (2023) - 1080p.mp4
/mnt/cache/Movies/Movies/80 for Brady (2023)
/mnt/cache/Movies/Movies/80 for Brady (2023)/80 for Brady (2023) WEBDL-2160p.es.srt
/mnt/cache/Movies/Movies/Toy Story 4 (2019)/Toy Story 4 (2019).mkv
/mnt/cache/Movies/Movies/Toy Story 4 (2019)/Toy Story 4 (2019).en.srt
/mnt/cache/Movies/Movies/Toy Story 4 (2019)/Toy Story 4 (2019).en.forced.srt
/mnt/cache/Movies/Movies/Toy Story 4 (2019)/Toy Story 4 (2019).es.srt
/mnt/cache/Movies/Movies/Toy Story 4 (2019)/Toy Story 4 (2019).fr.srt

Diagnostics attached in case it helps. Thanks!
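A small sketch of the same age check, wrapped in a function so it can be pointed at any share. The name `list_older_than` is made up for illustration, and this only shows what `find` itself considers old enough; it makes no claim about how Mover Tuning selects files internally. One detail worth knowing: GNU `find` drops the fractional part of a file's age, so `-mtime +45` matches files that are at least 46 full days old.

```shell
# Hypothetical helper: list regular files under $1 last modified more than $2 days ago.
# $1 = directory to scan, $2 = age threshold in days.
list_older_than() {
    find "$1" -type f -mtime +"$2"
}

# Example, not executed here:
# list_older_than /mnt/cache/Movies 45
```

Unlike the command in the post, this version adds `-type f`, so empty directories such as a bare movie folder are not listed.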

-

11 hours ago, dlandon said:

I've added a parameter in the settings that controls the number of events displayed so you don't have to scroll through so much data to find what you want. It displays the last 'X' number of file events for each disk or share. Update to the latest version to try it out.

This is so great. Thank you!!

-

Is there a way to reduce the time scale for each disk that is listed in the plugin? When I go to the disk listing to see what's causing a disk to spin up, I have to scroll through disk activity from up to 24h ago. I only want to see activity in the last hour or so. Can I specify this? Thanks!

-

Fascinating...I wonder where these values came from. I had several shares with huge values for the minimum free space (400-700GB). It actually caused overflow from the cache to the array for several shares and answers some other questions I had.

Thanks for the fast turnaround!
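To illustrate why those values mattered, here is a deliberately simplified sketch. It assumes the rule is nothing more than "if the pool's free space is below the share's minimum free space, the write goes to the array"; the real shfs logic may consider more than this. The function name `write_target` is made up for illustration.

```shell
# Hypothetical model of the overflow decision, all values in GB.
# $1 = free space on the cache pool, $2 = the share's minimum free space setting.
# Prints "array" when free space is below the minimum, otherwise "cache".
write_target() {
    if [ "$1" -lt "$2" ]; then
        echo array
    else
        echo cache
    fi
}

# Example, not executed here: ~400GB free with a 700GB minimum, then with 0.
# write_target 400 700
# write_target 400 0
```

Under that assumption, a pool with about 400GB free looks full to any share whose minimum is set to 700GB, even though the pool-level setting is 0.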

-

Diagnostics attached.

Min free space on cache is set to 0, with warning and critical thresholds set to 95% and 98%, respectively.

-

Seeing a ton of these messages in the logs:

Nov 3 04:14:17 Tower shfs: share cache full
Nov 3 04:14:17 Tower shfs: share cache full
Nov 3 04:14:20 Tower shfs: share cache full
Nov 3 04:14:20 Tower shfs: share cache full
Nov 3 04:14:21 Tower shfs: share cache full
Nov 3 04:14:21 Tower shfs: share cache full
Nov 3 04:14:21 Tower shfs: share cache full
Nov 3 04:14:22 Tower shfs: share cache full
Nov 3 04:14:22 Tower shfs: share cache full
Nov 3 04:14:33 Tower shfs: share cache full

Cache is ZFS, sitting around 95% full with > 400GB free. Curious to know what process thinks the cache pool is full.

Mover is set to run at 95% usage via Mover Tuning, so I expect it will run soon, but would like to know if something is failing and throwing these messages.

-

Aha, I run mover on this share a couple times per week. Would this "destroy" the dataset once it empties it out?

-

I have some interesting lines in my log every day at noon:

Sep 28 12:00:01 Tower shfs: /usr/sbin/zfs unmount 'cache/CommunityApplicationsAppdataBackup'
Sep 28 12:00:01 Tower shfs: /usr/sbin/zfs destroy 'cache/CommunityApplicationsAppdataBackup'
Sep 28 12:00:01 Tower root: cannot destroy 'cache/CommunityApplicationsAppdataBackup': dataset is busy
Sep 28 12:00:01 Tower shfs: retval: 1 attempting 'destroy'
Sep 28 12:00:01 Tower shfs: /usr/sbin/zfs mount 'cache/CommunityApplicationsAppdataBackup'

This folder is the first dataset/folder alphabetically in my cache pool, which is a RaidZ1 setup. This dataset is not part of an auto-snapshot or auto-backup (I recently set up automated snapshots for my appdata and domains datasets via the SpaceinvaderOne tutorial). Here's the view from ZFS Master:

The destroy is failing (thankfully?) but I'd like to know what's trying to kill it.
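A quick way to see which datasets are being targeted is to pull the destroy attempts out of the log. This is a rough sketch that assumes the log lines look exactly like the ones quoted above; the function name `destroy_targets` is made up, and the path `/var/log/syslog` in the example is an assumption about where the log lives.

```shell
# Hypothetical helper: print each distinct dataset named in a "zfs destroy '...'"
# log line. $1 = path to a syslog-style file.
destroy_targets() {
    grep -o "zfs destroy '[^']*'" "$1" \
        | sed "s/^zfs destroy '//; s/'\$//" \
        | sort -u
}

# Example, not executed here:
# destroy_targets /var/log/syslog
```

The "cannot destroy" error line does not match the pattern, so only the attempts themselves are reported.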

-

On 9/18/2023 at 3:59 PM, Andiroo2 said:

I will kiss you if this makes the list! Purely consensual, of course...

Just installed the latest version of the plugin. Thank you thank you thank you!

-

On 9/6/2023 at 8:33 PM, Iker said:

I was wondering if you would consider a setting/button that allows the option for manual update only:

Yes, I was finally able to get some time for working on the next update, and that's one of the planned features.

I will kiss you if this makes the list! Purely consensual, of course...

-

-

-

On 10/27/2022 at 12:09 PM, hugenbdd said:

How to ignore a SINGLE file

1.) Find the path of the file you wish to ignore.

ls -ltr /mnt/cache/Download/complete/test.txt

root@Tower:/# ls -ltr /mnt/cache/Download/complete/test.txt

-rwxrwxrwx 1 root root 14 Oct 27 11:32 /mnt/cache/Download/complete/test.txt*

2.) Copy the complete path used.

/mnt/cache/Download/complete/test.txt

3.) Create a text file to hold the ignore list.

vi /mnt/user/appdata/mover-ignore/mover_ignore.txt

4.) Add a file path to the mover_ignore.txt file

While still in vi press the i button on your keyboard.

Right click mouse.

5.) Exit and Save

ESC key, then : key, then w key, then q key

6.) Verify file was saved

cat /mnt/user/appdata/mover-ignore/mover_ignore.txt

"This should print out 1 line you just entered into the mover_ignore.txt"

7.) Verify the find command results do not contain the ignored file

find "/mnt/cache/Download" -depth | grep -vFf '/mnt/user/appdata/mover-ignore/mover_ignore.txt'

/mnt/cache/Download is the share name on the cache. (Note, cache name could be different if you have multiple caches or changed the default name)

How to ignore Multiple files.

1.) Find the paths of the files you wish to ignore

ls -ltr /mnt/cache/Download/complete/test.txt

ls -ltr /mnt/cache/Download/complete/Second_File.txt

2.) Copy the complete paths used to a separate notepad or text file.

/mnt/cache/Download/complete/test.txt

/mnt/cache/Download/complete/Second_File.txt

3.) Copy the paths in your notepad to the clip board.

Select, then right click and copy

4.) Create a text file to hold the ignore list.

vi /mnt/user/appdata/mover-ignore/mover_ignore.txt

5.) Add the file paths to the mover_ignore.txt file

While still in vi press the i button on your keyboard.

Right click mouse.

You should now have two file paths in the file.

6.) Exit and Save

ESC key, then : key, then w key, then q key

7.) Verify file was saved

cat /mnt/user/appdata/mover-ignore/mover_ignore.txt

"This should print out 2 lines you just entered into the mover_ignore.txt"

8.) Verify the find command results do not contain the ignored files

find "/mnt/cache/Download" -depth | grep -vFf '/mnt/user/appdata/mover-ignore/mover_ignore.txt'

/mnt/cache/Download is the share name on the cache. (Note, cache name could be different if you have multiple caches or changed the default name)

How to Ignore a directory

Instead of a file path, use a directory path. No * or / at the end.

This may cause issues if you have other files or directories with a similar name but with extra text.

/mnt/cache/Download/complete

*Note /mnt/cache/Download/complete will also ignore /mnt/cache/Download/complete-old
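The note about `complete-old` follows from how `grep -F` works: every line of the ignore file is treated as a plain substring, so any path containing it is dropped. The sketch below shows that behaviour next to a stricter alternative that only drops an exact match or paths underneath the listed directory. Both function names are made up for illustration, and the strict variant is not something the plugin is known to support.

```shell
# Substring filter, same matching as the quoted "grep -vFf" command.
# Reads paths on stdin; $1 = ignore-list file.
filter_ignored() {
    grep -vFf "$1"
}

# Hypothetical stricter filter: drop a path only if it equals an ignore entry
# or lives underneath it (entry followed by "/").
# Reads paths on stdin; $1 = ignore-list file.
filter_ignored_strict() {
    awk -v list="$1" '
        BEGIN { while ((getline line < list) > 0) if (line != "") ign[line] = 1 }
        {
            for (p in ign)
                if ($0 == p || index($0, p "/") == 1) next
            print
        }'
}

# Example, not executed here:
# find "/mnt/cache/Download" -depth | filter_ignored_strict /mnt/user/appdata/mover-ignore/mover_ignore.txt
```

With `/mnt/cache/Download/complete` in the list, the first filter hides everything under `complete-old` as well, while the second keeps it.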

*I use vi in this example instead of creating a file in windows, as windows can add ^m characters to the end of the line, causing issues in Linux. This would not be an issue if dos2unix was included in unRAID.
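If the list was already created on Windows, the stray carriage returns can be detected and removed with standard tools instead of `dos2unix`. A minimal sketch, with made-up function names:

```shell
# Succeeds (exit status 0) if file $1 contains any carriage-return characters.
has_cr() {
    grep -q "$(printf '\r')" "$1"
}

# Print file $1 with all carriage returns removed.
strip_cr() {
    tr -d '\r' < "$1"
}

# Example, not executed here: write a cleaned copy, check it, then swap it in.
# f=/mnt/user/appdata/mover-ignore/mover_ignore.txt
# strip_cr "$f" > "$f.clean" && ! has_cr "$f.clean" && mv "$f.clean" "$f"
```

The cleaned output goes to a separate file first, because redirecting `tr` straight back onto its own input would truncate the file before it is read.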

**Basic vi commands

This post should be stickied.

-

On 9/6/2023 at 3:05 PM, KluthR said:

Exactly. And check whats wrong with your backup by checking the backup log.

No errors that I can see in the backup log (regular and debug). I created a debug log to share with you, ID 8eed7224-7ebd-4120-872c-6e3afb6c0459 in case you can see something I cannot.

-

-

Where are the logs for each backup stored?

-

Not by Unraid. I have achieved something similar a couple of different ways:

- I use the Plexcache python script to move all On Deck media to the cache for my Plex users. This is really handy.

- I have a huge cache (8TB NVMe) and I set mover tuning to keep it full (95%) before running Mover, and then only move files that are 3 months old.
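The "keep it full, then move" idea comes down to a percentage comparison. Here is a rough sketch of that check using `df`; the function names are made up, and this is only an illustration of the threshold logic, not how Mover Tuning implements it.

```shell
# Print the used percentage (digits only) of the filesystem holding path $1.
# Uses POSIX "df -P" so each filesystem is reported on a single line.
used_pct() {
    df -P "$1" | awk 'NR == 2 { sub(/%/, "", $5); print $5 }'
}

# Succeeds (exit status 0) when usage $1 has reached threshold $2, both in percent.
mover_due() {
    [ "$1" -ge "$2" ]
}

# Example, not executed here:
# if mover_due "$(used_pct /mnt/cache)" 95; then echo "cache is at or above 95%"; fi
```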

-

3 minutes ago, PsychoRS said:

I'm having the same problem (no HW transcoding with HDR Tonemapping enabled) with latest versions... can you tell us exactly what release of LSIO container are you using and what VERSION tag are you using? Thanks

-

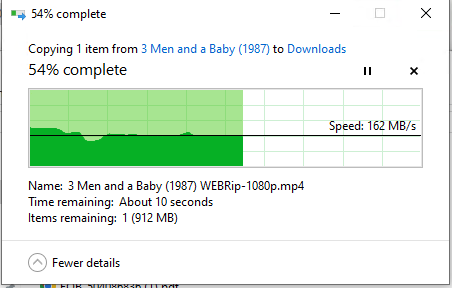

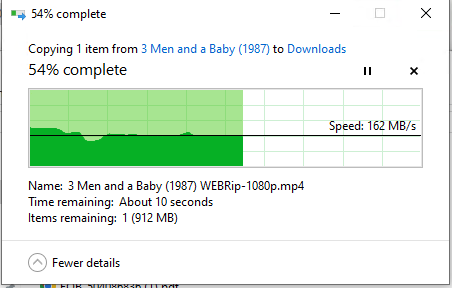

On 9/5/2023 at 10:25 AM, Andiroo2 said:

Plex docker is no longer hardware transcoding on Intel Quick Sync as of v1.32.6.7468. No changes otherwise...docker updated after backing up last night and now I see crazy CPU usage due to 4K transcodes using CPU instead of GPU.

I rolled back to a stable version and it works again…see attached.

-

GUI Crashing after MB/CPU Upgrade

in General Support

Posted

Ran Memtest overnight and had errors this AM. Pulled one stick of memory and started up again without issues in the log. Ordered a new set to arrive later today and will re-test.