Report Comments posted by BurntOC

-

-

Will do. It might be tomorrow before I can test, but I'll try to not let it slide beyond that.

-

@bonienl With another several weeks passing, and 6.12.10 released, I thought I'd circle back again. Any ideas on how to address this issue?

-

Just doing another weekly check-in, @bonienl. I reached out via support and they said you've got this. I take it at this point you guys have verified there's an error in the upgrade (docker network downgrade, I guess, I dunno anymore) process and are trying to figure out a way to address it in 6.12.9 or 6.13?

-

Good to hear. Can you recreate it, @bonienl? It would be nice to confirm before I have to do another production upgrade test and possible rollback. I'm ready to get off 6.11.5 but stopped dead on this server.

-

-

Thanks for looking at it. I do believe there is some real "bug" here - it's always worked/upgraded fine before, but as you said, I know there were some changes. Odd that my other similar one works. I'd stumbled on some discussion of deleting local-kv.db to reset the docker network stuff, but I didn't want to go near doing stuff like that if I can help it - unless y'all advise me to. We all use stuff running off this server most of the day, so it's impactful if I screw it up.

-

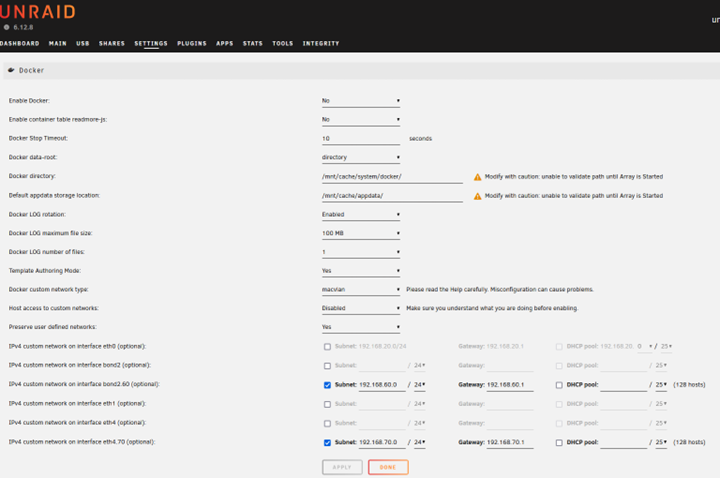

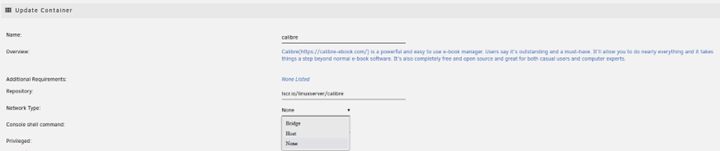

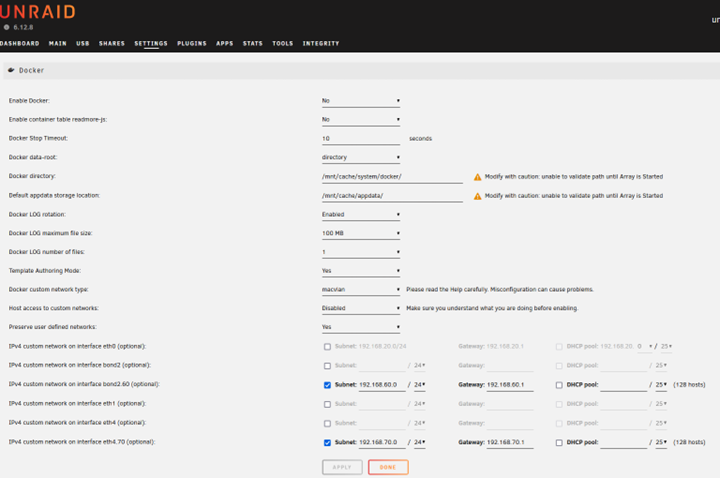

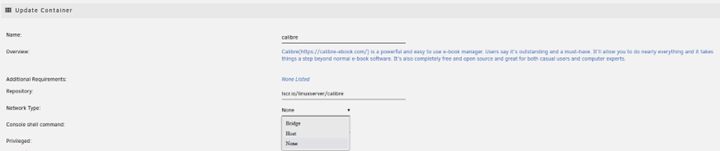

I just upgraded again and the problem remains. I downgraded to get my containers back up and forgot to run a "docker network ls", but I did take a screencap of the docker settings (I think it looks the same except for 6.12.8 up top) and a screencap of me trying to edit the network on an existing container (I confirmed that I only get those options on any new containers as well), and I did run a diagnostics. Hope this helps ID the cause.

-

Will do. It will probably be this evening to keep a few of my services up this afternoon.

-

-

-

6 hours ago, bonienl said:

Something went wrong in the creation of the docker networks.

Feb 16 10:53:04 unraid-outer root: Error response from daemon: network dm-ac543d521cd9 is already using parent interface vhost2.60
Feb 16 10:53:08 unraid-outer root: Error response from daemon: network dm-c962d0d87feb is already using parent interface vhost4.70

Can you post a screenshot of your docker settings page (advanced mode)

And post the result of this command

docker network ls

Yessir! Give me a few, I'll jump on that right now!
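
For anyone skimming their own syslog for the same symptom, here is a minimal sketch that pulls out which network is reported as already holding which parent interface. It assumes the daemon error is worded exactly as in the two lines quoted above; the function name `find_parent_conflicts` and the `sample` text are just for illustration and are not part of Unraid or Docker.

```python
import re

# Matches the daemon error wording quoted above; the syslog prefix is ignored.
# Assumption: the message format is exactly as shown in the quoted log lines.
CONFLICT = re.compile(
    r"network (?P<network>\S+) is already using parent interface (?P<parent>\S+)"
)


def find_parent_conflicts(log_text):
    """Return (network, parent_interface) pairs, one per conflict line found."""
    return [
        (m.group("network"), m.group("parent"))
        for m in CONFLICT.finditer(log_text)
    ]


# The two log lines from the quote, used here as sample input.
sample = (
    "Feb 16 10:53:04 unraid-outer root: Error response from daemon: "
    "network dm-ac543d521cd9 is already using parent interface vhost2.60\n"
    "Feb 16 10:53:08 unraid-outer root: Error response from daemon: "
    "network dm-c962d0d87feb is already using parent interface vhost4.70\n"
)

for network, parent in find_parent_conflicts(sample):
    print(f"{network} is holding {parent}")
```

The network names it reports can then be compared against the output of "docker network ls" to see which entries are left over.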

-

Okay, I was away on business for several days and just used it to step away from this for a bit. I'm back and I've really got to ask - seriously? I have 3 paid Unraid server licenses, I attached diagnostics and marked this as an Urgent issue, and in 5 days I can't get an acknowledgement or some help? Love Unraid and @limetech, but really guys, that's crap. I have a server stuck on 6.11.5, a version for which you've deprecated CA and other functionality, presumably to encourage upgrades, and no one can address an issue where the upgrade blows away multiple networks?

-

-

All right, good chat.

-

UPDATE - Nope, switching to macvlan to do the update still results in Unraid acting like that eth4.40 interface isn't there. I'm sure if I turned bridging on the interface it would show up, but I didn't have to do that before so....

-

Okay, so happy to report that after a hard power cycle I was able to get it to boot. I was surprised to see that despite it saying it was going to take me down only to 6.12.4 it did in fact restore me to 6.11.5.

The bad news is since it updated my CA plugin it now won't load it because I'm not on 6.12.x, but I was able to paste the URL in for the 9.30.2023 version to get it back.

Anyone got ideas on how to work my way out of whatever the heck happened to my networks and Docker configs during the upgrade? I just tried going to 6.12.6 first and it has the same problem. I'm guessing, as I've attempted this before, that if I leave it on macvlan and do the upgrade it would work, then maybe I can try to switch to ipvlan, but the whole thing smacks of some sort of issue with the migration script.

-

Same here.

-

Yup, seeing the same here with 6.11.5 and FF.

-

Awesome. Time to try again it seems.

-

Anyone know if 6.10-rc3 fixed this issue?

-

Thanks for reporting this - and for the workaround. Wish I had seen this before I started messing with vfio and messed up my server - triggering a reload and me screwing up lots of other stuff, LOL. In any case, this is still an issue on 6.10rc2.

-

I meant to add that I also noticed this is happening on my other Unraid server I'd upgraded from 6.9.2 to 6.10rc2 as well (though this one I re-setup from scratch). From provisioning on the Management Access page it reports cert info from Let's Encrypt, but when I look at the browser it is confirming that it, too, is using the self-signed cert.

-

Changed Status to Solved

Final update: 2 threads (this and the original support request), 6 days here with no response.

I do really, really like Unraid and I don't regret buying a license. Kinda regretting buying the 2nd one now though, but maybe I'll luck out and not run into any major issues and the point will be moot. Hope so. In any case, I want to add this update for posterity.

Current uptime is 3 days, 17+ hours, well beyond what it had been as of late. I believe the solution was that I pulled the 1 stick of RAM that didn't match the others out of the system. Even though the run of memtest had passed maybe subsequent ones would fail, but the stick has performed perfectly fine in other multifunction server installs. In the config I had here it just seems it was enough of a mismatch that it caused an error.

-

Updating 6.11.5 to 6.12.8 - container custom networks all gone on dropdowns

in Stable Releases

Posted

@bonienl I'm really sorry to hear about the other challenges - understood. @Adam-M I attempted both, and neither worked. In the first scenario, I could see that the appropriate networks were still displayed for each container in the Docker screen, but none were started and attempting to start gave an "execution error". I attempted to edit a container to select the network again, but the only one shown besides the builtin (host, IIRC) was bond0. I forgot to take diagnostics.

I downgraded, then tried the second approach. Same thing re: the containers not starting and execution error. I forgot to edit to see what they showed, but I'd expect it was similar. I did take a diagnostic, which I've attached here. I've had to downgrade back again because of the importance of these containers to a lot of our daily work.

unraid-outer-diagnostics-20240416-1758.zip