TODDLT

Members

Posts: 914

Gender: Male

Location: Charleston, SC


TODDLT's Achievements

Collaborator (7/14)

6

Reputation

-

Does anyone worry about the age of a flash drive? It occurs to me I'm still on my original, now 12 years old. There are no parts to break, but still. A USB 3 drive might load and update faster. Yes/no? Any good suggestions for a robust USB 3 flash drive? There are plenty out there, but I know they need to have a digital ID number to work with Plex.

-

Not sure how I missed this one, but.... Thanks!

-

I've downloaded both of these and have the server running, but I can't figure out how to configure the server for remote access, or how to provide the server address/name for Subsonic. Any hints?

-

Plex doesn't show up on CarPlay. I'd be fine if it were music only.

-

I've been out of town for a couple days, but will check both of these out. Thanks.

-

I know there are a number of ways to stream music from an unRaid server. I primarily use Plex for this. However, I am looking for an application that runs on unRaid and works with a client that is Apple CarPlay ready, i.e. I would like to access my home music collection via Apple CarPlay. Plex is not CarPlay friendly, likely because no one would support a driver being able to watch movies. Any advice or direction to investigate is appreciated.

-

Hoping for a little help with this. This is about the closest thing to "portable" I have found. https://smile.amazon.com/gp/product/B09WQC44B3/ref=ox_sc_act_title_7?smid=A2IQNZSH0ZS9OH&psc=1

-

TODDLT started following Advise on Compact Design (Backup)

-

I'm considering building a 2nd rig as a backup unit only.

- minimal demand, only storage
- capacity for 3 to 4 drives
- want it small enough to pick up and walk out the door

I've been considering an external RAID enclosure that holds 3-4 drives, but expanding it would mean starting over. That thought pushed me toward just making a small unRaid backup rig. My concern with building an unRaid rig is making it "portable" as a backup unit.

My current rig is 43 TB with 28 TB used. I was thinking of using two 18 TB drives, which are pretty cheap right now and would cover me for a while.

The options are to just use a RAID 0 eSATA enclosure... OR... an unRaid rig... thoughts?
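As a rough sanity check on the numbers in this post, here is a small Python sketch comparing usable capacity for the two-drive options. It assumes nominal drive sizes (no filesystem overhead) and that an unRaid parity drive holds no data; the helper name `usable_tb` is made up for illustration.

```python
def usable_tb(drive_tb: int, drive_count: int, parity_drives: int = 0) -> int:
    """Nominal usable capacity: parity drives contribute no storage."""
    return drive_tb * (drive_count - parity_drives)

USED_TB = 28  # data currently on the main rig, per the post

options = {
    "2 x 18 TB striped (RAID 0) or unRaid without parity": usable_tb(18, 2, 0),
    "2 x 18 TB unRaid with one parity drive": usable_tb(18, 2, 1),
}

for label, capacity in options.items():
    headroom = capacity - USED_TB
    print(f"{label}: {capacity} TB usable, {headroom:+d} TB vs. {USED_TB} TB used")
```

Under these assumptions, two drives only cover the 28 TB if neither is used for parity, and RAID 0 in particular loses everything if either drive fails.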

-

I have lost everything ("failed to load ldlinux.c32")

TODDLT replied to stillnocake's topic in General Support

This is SO true, a harsh reality sometimes, but it can be a real struggle to balance. Everything on my server that is truly irreplaceable and would be problematic if I lost it... is backed up, in more than one place I might add, one of which is detached. However, that makes up such a small piece of the big picture. I have ~500 GB of truly can't-lose-it kind of stuff, and another 500 GB of stuff that is compact enough that it's not worth the risk.

The rest of it comprises another 27 TB and grows regularly. It's mostly Plex media, and for most of that I have the original media, but it would take me months to re-rip it all. The DVR component is growing and would be gone for good. It wouldn't change my life, but I'd still probably cry if I lost it, or at least throw a thing or two through the TV.

This thread has me thinking I need to just bite the bullet on a couple of drives to back it up. I could probably find a couple of drives that would hold it in my desktop for $600, and as I say that it seems ridiculous that I haven't. A multidrive NAS box would be nice though, and I guess I need to look at that option too. I guess I know what I'm doing after dinner.

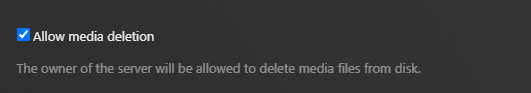

Thanks, I actually just found "docker safe new permissions" and ran it. It appears to have worked. I will post a message specific to Plex and see if anyone knows how to modify the way it sets up permissions for DVR recordings. I am clear that it is only folders created by the DVR that have this permission issue. I believe it has to do with the below setting in Plex. This allows you to delete a file from inside the Plex GUI, but from the note underneath I am guessing that it adjusts access permissions when the save-to location is created by Plex (and this only happens with the DVR). Thanks for the time.

-

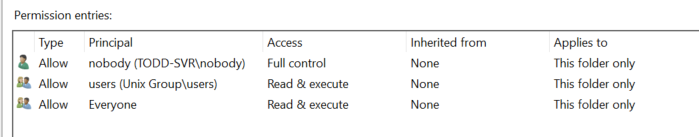

OK, but the problem is that only "nobody" has access to modify those files/folders. I don't have a user ID of "nobody" to access them with. Where it shows that only "nobody" has read/write access, I can't create or delete anything. Where it shows that "Everyone" has read/write access, I can delete, create folders, etc. How do I change the permissions?

-

My primary use of unRaid is for Plex, and one of my Plex uses is as a DVR. So whenever Plex records something new, it creates a new folder to put it in. Somewhere along the line, Plex started limiting everyone but the "owner" to read access when it creates a folder. I believe this MAY have coincided with the time I turned on the ability to delete files within Plex, but I'm not totally sure about that.

The trouble is, the "owner" for Plex is a user called "nobody", which doesn't actually exist except for Plex, so I can't manually move or delete files/folders that were created by the Plex DVR. I have included snips of those folder permissions as they appear to me for information. There is no user called "nobody" in my unRaid user settings.

So two fundamental questions.

1. How do you globally reset user permissions on a given drive, folder, or share within unRaid? All my DVR recordings go to one specific drive, so if I could push down an update of user permissions it would solve the current issue.
2. Is there a way inside Plex (on unRaid) to change the assigned username? (I will probably ask this one in a more Plex-specific forum as well.) If it used my username, for example, then this would not be a problem.

Thanks for any help.

These are permissions for a folder recently created by Plex. When I try to modify the permissions from my Windows machine, it denies access.

These are properties for a folder that has been on the server for a long time. I have no idea how "nobody" was created as a user, but control is not limited.
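For question 1, a minimal Python sketch of a recursive permission reset is below. It is only an illustration of the idea, not what unRaid's built-in tools run: the function name `open_up_permissions` and the chosen modes (read/write for everyone on files, plus traverse on folders) are assumptions, and it leaves ownership alone. On a real share it would need to run as root on the server, and the demo here only touches a throwaway temp folder.

```python
import os
import stat
import tempfile

def open_up_permissions(top: str, dir_mode: int = 0o777, file_mode: int = 0o666) -> int:
    """Recursively set directory and file modes under `top`.

    The default modes are an assumption (everyone may read and write).
    Returns the number of entries whose mode was set.
    """
    changed = 0
    for dirpath, _dirnames, filenames in os.walk(top):
        os.chmod(dirpath, dir_mode)
        changed += 1
        for name in filenames:
            path = os.path.join(dirpath, name)
            if os.path.islink(path):
                continue  # leave symlinks (and their targets) untouched
            os.chmod(path, file_mode)
            changed += 1
    return changed

# Demo on a temporary tree that mimics a restrictive DVR folder.
with tempfile.TemporaryDirectory() as top:
    recording_dir = os.path.join(top, "Recording")  # hypothetical folder name
    os.mkdir(recording_dir, 0o755)
    recording = os.path.join(recording_dir, "show.ts")  # hypothetical file name
    with open(recording, "w") as handle:
        handle.write("placeholder")
    os.chmod(recording, 0o644)

    count = open_up_permissions(top)
    file_bits = stat.S_IMODE(os.stat(recording).st_mode)
    print(f"updated {count} entries; file mode is now {oct(file_bits)}")
```

Note that `nobody` is a standard Linux system account rather than a user created through the unRaid settings page, which is why it does not appear in the user list.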

-

I have Plex installed on my unRaid server and will be without internet for some unknown period of time, until they re-string the cable line where a tree recently removed it. I was trying to change the server settings to allow local connections without authentication, so at least we can watch Plex content in the house. Since the server can't see the internet, I'm now having to do this manually, if I can.

I did read at https://www.howtogeek.com/303282/how-to-use-plex-media-server-without-internet-access/ that there is a configuration/preferences file that contains "allowedNetworks", and that adding the range "192.168.68.1/255.255.255.0" will let any local address work. Then I saw that for Plex installed on Linux, the preferences/settings are in "Preferences.xml". I found that file on unRaid, but there is nothing within it for "allowedNetworks".

So.... does anyone know how to manually change Plex on unRaid to allow local connections without authentication?
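One way to approach the manual edit is sketched below in Python. It assumes that "Preferences.xml" consists of a single `<Preferences .../>` element whose settings are stored as attributes, and that a missing `allowedNetworks` attribute can simply be added; both should be checked against the actual file. The function name and the sample attributes are made up for illustration, and the sketch works on in-memory bytes rather than a real path, since the file's location inside the container's appdata folder varies by setup.

```python
import xml.etree.ElementTree as ET

def set_allowed_networks(xml_bytes: bytes, networks: str) -> bytes:
    """Return a copy of the preferences XML with allowedNetworks set.

    Assumes the root element is <Preferences> and that settings are
    stored as attributes on it; existing attributes are preserved.
    """
    root = ET.fromstring(xml_bytes)
    if root.tag != "Preferences":
        raise ValueError(f"unexpected root element: {root.tag!r}")
    root.set("allowedNetworks", networks)
    return ET.tostring(root, encoding="utf-8", xml_declaration=True)

# Stand-in for the real file contents (attribute values are invented).
sample = (
    b'<?xml version="1.0" encoding="utf-8"?>\n'
    b'<Preferences FriendlyName="Tower" MachineIdentifier="example-id"/>'
)

# The range is the one quoted in the post.
updated = set_allowed_networks(sample, "192.168.68.1/255.255.255.0")
print(updated.decode("utf-8"))
```

If this is tried on the real file, it is safest to stop the Plex container first and keep a backup copy of "Preferences.xml", since a running server may overwrite the file with its own settings.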