Nooke

-

Posts

38 -


Posts posted by Nooke

-

-

post your xml please

do you have the fps drops as soon as you move your mouse?

if so, go to your mouse tool and change the frequency to 125Hz and check if it's better.

I had that issue when running without "hypervisor" cpu flag.

so better provide some more information about your system (VM xml, system devices, drivers etc)

cheers

-

pretty simple - I just changed the cpu topology.

I used the following

<cpu mode='host-passthrough' check='none'> <topology sockets='1' cores='6' threads='2'/> <cache mode='passthrough'/>

and now I'm using this instead

<cpu mode='host-passthrough' check='none'> <topology sockets='2' cores='3' threads='2'/> <cache mode='passthrough'/>

that made my L3 cache benchmark skyrocket.

and overall smoothness of the windows 10 vm is improved.

kinda have the most fps ever in cs:go and world of warcraft (stable!)
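Both topology lines above expose the same number of vCPUs; only the grouping into sockets and cores changes. A tiny sketch to check that arithmetic before editing the XML (the helper name `topology_vcpus` is made up for illustration, and it does not explain why the L3 numbers changed):

```shell
# Number of vCPUs a libvirt <topology> element describes:
# sockets * cores * threads. Hypothetical helper, not part of libvirt.
topology_vcpus() {
    echo $(( $1 * $2 * $3 ))
}

topology_vcpus 1 6 2   # old layout: 1 socket, 6 cores, 2 threads
topology_vcpus 2 3 2   # new layout: 2 sockets, 3 cores, 2 threads
```

The product has to match the vCPU count of the VM, otherwise libvirt rejects the definition.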

-

I had some serious fps drops and stutter in win 10 (not only in gaming).

felt sluggish since last win 10 update.

anyways I kinda fixed it.

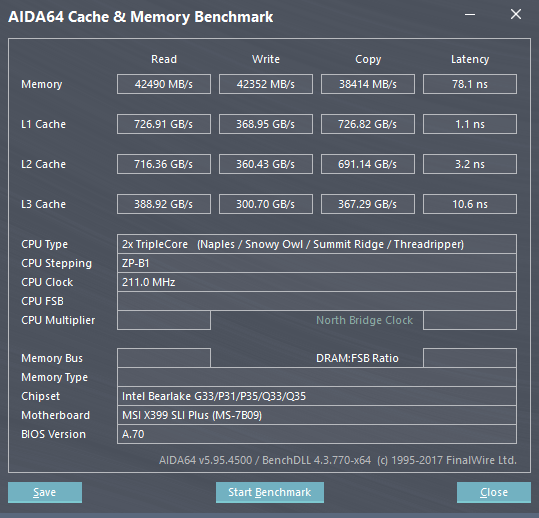

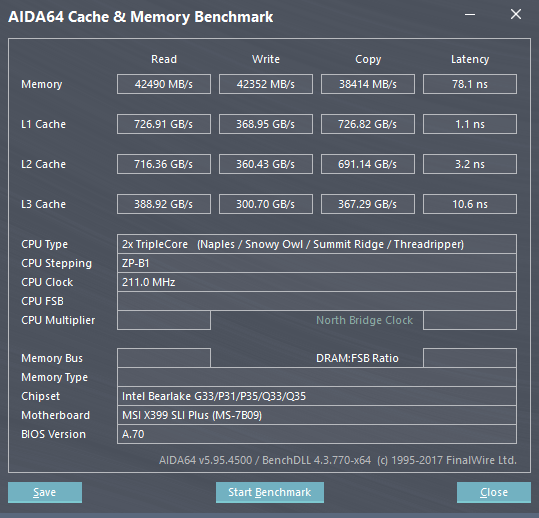

L3 cache benchmarks looking fine now:

L3 read 388GB/s

L3 write 300GB/s

L3 copy 367GB/s

L3 latency 10.6ns

(6core 12threads)

that's more like the results I would have expected at the beginning.

If anyone else has issues with this, I can share my findings.

-

I did - no difference.

-

1 hour ago, jordanmw said:

Check this out:

Thanks for sharing but I don't have a core pairing issue here.

I'm using cores only from 1 numa node (eg node1, cores 6-11,18-23).

Numa Node 1 is connected to my GPU as well as my NVMe SSD (see lstopo attached)

So my problem here is that, even with all cores from 1 node, the L3 Cache benchmarks in AIDA64 vary wildly depending on which cores are assigned to win10.

-

Hi,

so I have some serious differences in L3 cache performance for my win10 vm depending on cores being used.

there must be something fishy...

My current kernel options:

append processor.max_cstate=1 nvme_core.default_ps_max_latency_us=0 kvm_amd npt=1 nested=1 amd_iommu=on isolcpus=6-11,18-23 nohz_full=6-11,18-23 rcu_nocbs=6-11,18-23 pcie_acs_override=downstream,multifunction vfio_iommu_type1.allow_unsafe_interrupts=1 initrd=/bzroot
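The same CPU list (6-11,18-23) is repeated in isolcpus, nohz_full and rcu_nocbs above. When matching that list against the pinning in the VM xml it can help to see it expanded. A rough sketch in plain sh (the function name `expand_cpulist` is made up, and it assumes well-formed input like the list above):

```shell
# Expand a kernel-style CPU list such as "6-11,18-23" into single CPU numbers.
# Hypothetical helper; no validation of malformed input.
expand_cpulist() {
    out=""
    old_ifs=$IFS
    IFS=,
    for part in $1; do
        case "$part" in
            *-*) start=${part%-*}; end=${part#*-} ;;   # range like 6-11
            *)   start=$part; end=$part ;;             # single CPU
        esac
        i=$start
        while [ "$i" -le "$end" ]; do
            out="$out${out:+ }$i"
            i=$((i + 1))
        done
    done
    IFS=$old_ifs
    echo "$out"
}

expand_cpulist 6-11,18-23
```

Which of those numbers are thread siblings of each other depends on the board and CPU, so that part still has to be checked with lstopo.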

Here my current xml for 4core 8thread.

The only differences for 5c/10t and 6c/12t would be the core count and the emulatorpin.

Using unraid 6.8.0-rc3

latest windows 10 1903

as I'm still having issues with my system, appreciate any support

cheers

Nooke

-

Have to mention, most ppl will saturate their WAN in downstream not in upstream.

Actually you can't shape the downstream at all (except in your local environment).

QoS only works end to end and normal Internet links don't have any QoS for customers.

So I don't see the real benefit in using this pfSense solution unless you have issues with your upstream bandwidth getting used too much.

cheers

-

I made some benchmarks as well.

Not much difference between 440fx and Q35.

https://docs.google.com/spreadsheets/d/1hhP34z_fSDPy2Uu2umJ1xfq5Zd4_F5IRchqAkUyiXJw/edit?usp=sharing

-

you have the wrong machine type. this PCIe speed fix is for Q35 as 440FX has no PCIe Ports.

Switch to Q35

-

3 minutes ago, bastl said:

@Nooke I'm currently testing a lot of different settings. Main VM still runs on i440fx and i have a second template configured as Q35 with the same devices passed through as my main VM. So far it looks promising. Still trying to find the best settings for numa, pinning etc. Will report back later.

looking forward to it

thanks mate!

-

I'm on i440FX and dunno if it's worth it to switch to Q35.

I had serious issues before with Q35 and i440FX is working kinda good right now.

Switching the template to Q35 broke my system the last time and I had to start from zero.

So would like to know if there is much of a difference before starting from scratch again

Could anyone do a comparison of both machine types on RC4 and share it?

Specs in signature.

-

Can anyone share some testing or benchmark for i440FX vs Q35 with this new PCIe patch?

Would love a comparison.

-

23 minutes ago, bastl said:

@Nooke can you please link the level1techs forums entry? I can't find it.

sure,

https://forum.level1techs.com/t/increasing-vfio-vga-performance/133443/84

there you go

-

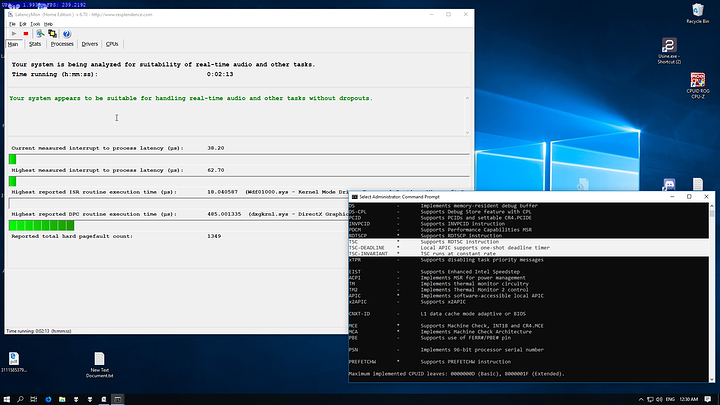

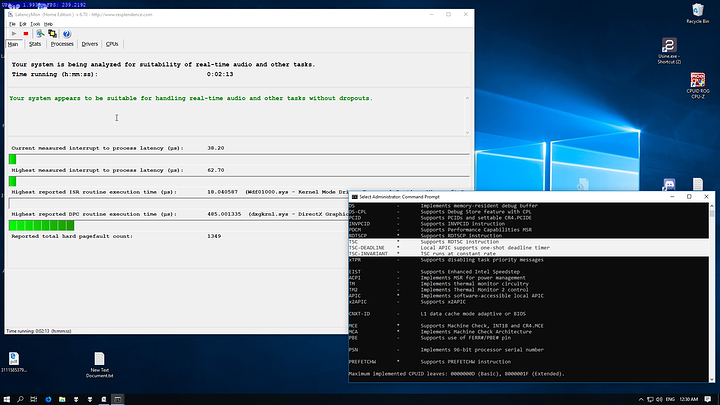

well after upgrading to 6.7-rc3 I am in the same boat as you guys.

already had latency issues before but it was manageable.

I tried to go with TSC instead of HPET/Hypervclock. Latency dropped by a lot but the whole Windows VM became sluggish/unresponsive.

As of right now I can't even get back to my 6.6 VM cause with rc3 update my flashdisk got corrupted and old VM xml is lost.

Regarding the PCIe speed fix you are talking about, it's the one from level1techs right?

If so, that one is only useful for Q35 machine type.

Actually I can't even manage to get my DDR4 latency back to 80-90ns. It's always sitting at 130-150ns even though I'm only allocating memory on the numa node my cpu cores are pinned to.

In the level1techs forum there was a guy with insanely low latency.

Even with his XML I can't reach that latency on a fresh install...sad

-

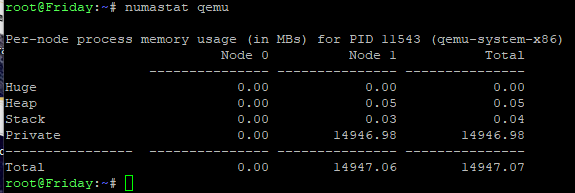

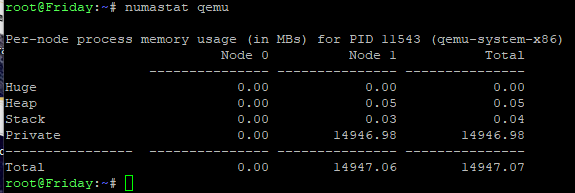

1 hour ago, bastl said:

numastat qemu

Shows current running VMs and their use of RAM. As i said earlier, you can tell the VM with the numatune option which node to use the memory from but it looks like it doesn't. There are always a couple megs used from the other node.

First VM is set to use 8GB and 4 cores from node0, second VM is set to use 16GB and 14 cores from node1.

<memory mode='strict' nodeset='1'/>

Strict should limit the VM only to a specific node. But it doesn't. "preferred" or "interleave" doesn't change anything for me.

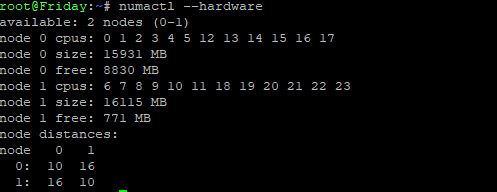

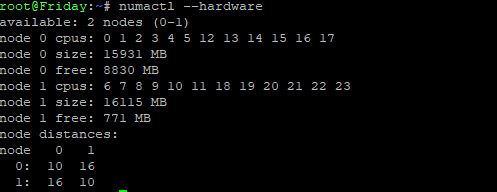

I guess you took too much RAM on your VM.

I'm running with strict memory and my Gaming VM is only using stuff from the node I set it to.

What you have to keep in mind: if you have like 32 GB overall with 4x8 GB sticks and have those in normal quad channel mode (see motherboard manual), you can't take exactly 16GB for a VM.

Because even without running any VM there is some small amount of memory already used from each node.

so just check how much memory is available before starting the VM with "numactl --hardware" and then just use that much and not more.
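A rough sketch of that check in plain sh. It reads the "node N free:" lines that "numactl --hardware" prints. The function names and the 512 MB safety margin are made up for illustration, so adjust to taste:

```shell
# Print the free memory (in MB) that `numactl --hardware` reports for one node.
# Reads the numactl output from stdin. Hypothetical helper name.
node_free_mb() {
    awk -v node="$1" '$1 == "node" && $2 == node && $3 == "free:" { print $4 }'
}

# Largest VM size (in MB) that still leaves a margin on the node.
# The 512 MB default margin is an arbitrary placeholder, not a recommendation.
max_vm_mb() {
    free_mb=$1
    margin_mb=${2:-512}
    if [ "$free_mb" -gt "$margin_mb" ]; then
        echo $((free_mb - margin_mb))
    else
        echo 0
    fi
}

# Usage on the host (node 1 is just an example):
#   free=$(numactl --hardware | node_free_mb 1)
#   max_vm_mb "$free"
```

Run it right before starting the VM, since the free value changes as soon as anything else allocates memory on that node.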

cheers

-

On 11/16/2018 at 9:05 PM, DZMM said:

I even emailed msi and they said no, although the reply was a bit short so I'm not 100% certain the person understood the question

Go into BIOS -> OC -> Advanced DRAM Configuration.

Scroll down to "Misc Item" and look for "memory interleaving". Change this from "auto" to "channel" and you are in NUMA mode.

I have the MSI X399 SLI Plus myself. You should definitely check if your GPU and additional M.2 devices are running in PCIe Gen 3 (GPU-Z etc).

Mine always fell back to PCIe Gen 1 / 2, which drastically reduced my performance.

I had a support ticket open with MSI, and after 4 different BIOS versions they were able to fix the issue.

cheers

-


If not I will return the Mobo and get another one (maybe the AsRock).

But this will be kinda expensive cause I have to change the water cooling block as well

-

Samsung 970 Evo (latest FW)

Zotac 1080 TI Blower

Both devices work in PCIe 3.0 when I did a fresh clear CMOS, so no issue on those devices at all.

Issue just pops up as soon as I change CPU Multiplier - doesn't matter if I switch it back to default

-

Thanks for sharing the info

I'm using PCIe and M.2 slots directly connected to the Die inside the VM. Anyways I tried with native Windows 10 and got the same issue.

So I suspect this is a Hardware or Software failure with the MSI X399 SLI Plus.

Hopefully the MSI support can help in this case - as of right now I've just got a new BIOS Version (BETA I guess) from them which didn't help.

cheers

-

I've seen that you have an ASRock x399 Board instead of MSI.

I guess you have a fixed CPU multiplier right?

Can you check via GPU-Z if your GPU is running at PCIe 3.0 ?

MSI provided a new BIOS update to my support request but the issue persists.

As soon as I set the CPU multiplier or BCLK from "auto" to something else, the GPU and my NVMe SSD go back to PCIe 2.0

Even changing back to "auto" or loading defaults via the BIOS menu doesn't fix that.

I have to clear CMOS to get PCIe 3.0 back.

Just want to figure out if that is a regular x399 issue or only on my specific Motherboard.

cheers

Nooke

-

Some new information from my side.

I've found an issue but can't seem to fix it.

I checked the PCIe Speed of my GPU and my NVMe Drive.

Both running in PCIe Gen 2.

GPU

    root@Friday:~# lspci -s 42:00.0 -vv | grep Lnk
    LnkCap: Port #0, Speed 5GT/s, Width x16, ASPM L0s L1, Exit Latency L0s <512ns, L1 <16us
    LnkCtl: ASPM Disabled; RCB 64 bytes Disabled- CommClk+
    LnkSta: Speed 2.5GT/s, Width x16, TrErr- Train- SlotClk+ DLActive- BWMgmt- ABWMgmt-
    LnkCtl2: Target Link Speed: 5GT/s, EnterCompliance- SpeedDis-
    LnkSta2: Current De-emphasis Level: -3.5dB, EqualizationComplete-, EqualizationPhase1-

NVMe

    root@Friday:~# lspci -s 41:00.0 -vv | grep Lnk
    LnkCap: Port #0, Speed 8GT/s, Width x4, ASPM L1, Exit Latency L1 <64us
    LnkCtl: ASPM Disabled; RCB 64 bytes Disabled- CommClk+
    LnkSta: Speed 5GT/s, Width x4, TrErr- Train- SlotClk+ DLActive- BWMgmt- ABWMgmt-
    LnkCtl2: Target Link Speed: 8GT/s, EnterCompliance- SpeedDis-
    LnkSta2: Current De-emphasis Level: -3.5dB, EqualizationComplete-, EqualizationPhase1-

I already tried fiddling around with pcie_aspm=off and using pcie_aspm.policy=performance instead.

Changing PCH State in Bios to force gen3. Seems nothing is working.
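For anyone who wants to repeat the check, here is a small sketch that compares the LnkCap speed (what the device can do) with the LnkSta speed (what was negotiated) from the lspci output. The function name `link_speed_report` is made up, and it only looks at the first LnkCap and LnkSta line it finds:

```shell
# Compare capable vs. negotiated PCIe link speed.
# Reads `lspci -vv` output for ONE device from stdin. Hypothetical helper.
link_speed_report() {
    lspci_out=$(cat)
    cap=$(printf '%s\n' "$lspci_out" |
        sed -n 's|.*LnkCap:.*Speed \([0-9.]*GT/s\).*|\1|p' | head -n 1)
    sta=$(printf '%s\n' "$lspci_out" |
        sed -n 's|.*LnkSta:.*Speed \([0-9.]*GT/s\).*|\1|p' | head -n 1)
    if [ "$cap" = "$sta" ]; then
        echo "cap=$cap sta=$sta ok"
    else
        echo "cap=$cap sta=$sta below-cap"
    fi
}

# Usage on the host (device address taken from the output above):
#   lspci -s 41:00.0 -vv | link_speed_report
```

Keep in mind that some GPUs lower their link speed while idle, so a "below-cap" result for a GPU is only meaningful when it is checked under load.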

The GPU is plugged in the first PCIe Slot.

The NVMe drive is installed right below this PCIe Slot.

Is it possible that the Link is not working as PCIe Gen 3 cause both share the same PCIe Lanes?

Couldn't find any documentation regarding the M.2 port and PCIe port

UPDATE:

seems the BIOS on the MSI x399 SLI Plus is bugged.

As soon as I choose a specific CPU multiplier the PCIe Gen switches from 3.0 to 2.0

Even changing back to Auto doesn't restore normal behaviour.

I tested all kinds of settings and could narrow it down to the CPU multiplier and BCLK frequency.

CMOS clear is necessary to get PCIe 3.0 functionality back. Restoring in BIOS to default settings didn't work for me.

cheers

-

alright will do some screenshots later on.

diagnostics attached

-

Maybe it's worth making some screenshots of the BIOS settings?

Dunno if anyone else in here has that particular Board

-

2 minutes ago, bastl said:

I still don't get these custom edits. There are a couple TR users here in the forum and i never saw these used by someone. 🙄

Another thing you could test. In my case, passing through a Samsung 960 NVME, i first had a couple issues with it where it didn't show the performance it should have. I didn't test any games back then or do any benchmarks other than read and write tests. The default driver loaded was the windows one. I had to install Samsungs Magician Tool and the driver from Samsung to fix that. The read and write speeds randomly dropped with the windows driver.

I have the Samsung driver installed

There are a lot of edits if you check SpaceinvaderOne's youtube or posts here.

Libvirt Hooks Support

in General Support

Posted

hi there,

is it possible to use libvirt hooks inside unRaid?

What I want to do is run some specific bash scripts right before the start of a VM.

And running another script as soon as the VM is stopped.

according to the libvirt documentation about hooks, "/etc/libvirt/hooks/qemu" would be the right one for my use case.

but this is already a customized php script from unRaid and if I replace it with a bash script for my needs I would break the gui VM start/stop ability as it seems.

VFIO-Tools on github has the specific qemu hook part to maintain hooks on a per vm basis.

https://github.com/PassthroughPOST/VFIO-Tools

would appreciate any support because I need that to allocate hugepages on the fly right before the VM is started.

I could do that by starting my allocation bash script inside /boot/config/go but that would only do one part of the job (pre vm boot, not post shutdown) and I can't use any array specific parts for evaluating memory usage (eg /etc/libvirt is not accessible before array startup).
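To make it clearer what I'm after, here is a rough sketch of the hook logic. libvirt calls the qemu hook with the guest name, the operation and the sub-operation as arguments, e.g. "prepare begin" before the VM starts and "release end" after it has stopped. The VM name, memory size, NUMA node and function names below are placeholders, and how to wire this into unRaid's existing php hook is exactly the open question:

```shell
#!/bin/sh
# Sketch of per-VM hook logic. libvirt invokes the hook as:
#   /etc/libvirt/hooks/qemu <guest_name> <operation> <sub-operation> <extra>
# All values below are hypothetical placeholders.
TARGET_VM="Windows 10"     # guest name the hook should react to
VM_MEMORY_MIB=16384        # guest memory to back with 2 MiB hugepages
NUMA_NODE=1                # node to allocate the hugepages on
HUGEPAGES_FILE="/sys/devices/system/node/node${NUMA_NODE}/hugepages/hugepages-2048kB/nr_hugepages"

# Number of 2 MiB pages needed for a given amount of MiB (rounded up).
pages_for_mib() {
    echo $(( ($1 + 1) / 2 ))
}

# Map the hook arguments to an action: allocate, free or none.
hook_action() {
    [ "$1" = "$TARGET_VM" ] || { echo none; return; }
    case "$2/$3" in
        prepare/begin) echo allocate ;;   # before the VM starts
        release/end)   echo free ;;       # after the VM has stopped
        *)             echo none ;;
    esac
}

# Apply the action. Writing 0 drops ALL 2 MiB hugepages on that node,
# which is only fine if no other VM uses them.
run_hook() {
    case "$(hook_action "$1" "$2" "$3")" in
        allocate) pages_for_mib "$VM_MEMORY_MIB" > "$HUGEPAGES_FILE" ;;
        free)     echo 0 > "$HUGEPAGES_FILE" ;;
    esac
}

# As a real hook the last line would be:  run_hook "$@"
```

The decision logic is kept separate from the sysfs writes so it can be tried out on the command line without touching the hugepage pool.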

cheers