tr0910

Members

Posts: 1449

tr0910's Achievements

Community Regular (8/14)

Reputation: 43

-

xfs_repair was able to find a secondary superblock on 5 of the 6 affected disks. But only about 2/3 of the files were recovered, and they were all put in lost+found. It will be a dog's breakfast to get anything good out of that, but at least we don't have a total failure. I do have another backup, which I will be pulling from far away, that should be able to recover all files. Moral of the story: your backups matter. Second moral: human error is a much bigger source of data loss than anything else.

-

Thanks. The best advice when something goes wrong is to take a deep breath and do nothing until you've thought it all through. I should have this backed up, but it will be difficult to access. I'll have to think carefully...

-

I have been doing this for over 10 years with unRaid and this is my first big user error. I was moving a bunch of full array disks between servers and, instead of recalculating parity, I made a mistake and unRaid started wiping the disks. These 5 disks are all XFS. I only let it run for less than 10 seconds, but that was enough to wipe the file system, and now unRaid thinks the disks are unmountable. I would really like to bring back the XFS file system... Does anyone have any suggestions? (while I beat myself with a wet noodle)

-

@jortan After a week, this dodgy drive had got up to 18% resilvered, when I got fed up with the process. Dropped a known good drive into the server and pulled the dodgy one. This is what it said:

    zpool status
      pool: MFS2
     state: DEGRADED
    status: One or more devices is currently being resilvered. The pool will
            continue to function, possibly in a degraded state.
    action: Wait for the resilver to complete.
      scan: resilver in progress since Mon Jul 26 18:28:07 2021
            824G scanned at 243M/s, 164G issued at 48.5M/s, 869G total
            166G resilvered, 18.91% done, 04:08:00 to go
    config:

            NAME                       STATE     READ WRITE CKSUM
            MFS2                       DEGRADED     0     0     0
              mirror-0                 DEGRADED     0     0     0
                replacing-0            DEGRADED   387 14.4K     0
                  3739555303482842933  UNAVAIL      0     0     0  was /dev/sdf1/old
                  5572663328396434018  UNAVAIL      0     0     0  was /dev/disk/by-id/ata-WDC_WD30EZRS-00J99B0_WD-WCAWZ1999111-part1
                  sdf                  ONLINE       0     0     0  (resilvering)
                sdg                    ONLINE       0     0     0

    errors: No known data errors

It passed the test. 4 hours later we have this:

    zpool status
      pool: MFS2
     state: ONLINE
    status: Some supported features are not enabled on the pool. The pool can
            still be used, but some features are unavailable.
    action: Enable all features using 'zpool upgrade'. Once this is done,
            the pool may no longer be accessible by software that does not support
            the features. See zpool-features(5) for details.
      scan: resilvered 832G in 04:09:34 with 0 errors on Tue Aug 3 07:13:24 2021
    config:

            NAME        STATE     READ WRITE CKSUM
            MFS2        ONLINE       0     0     0
              mirror-0  ONLINE       0     0     0
                sdf     ONLINE       0     0     0
                sdg     ONLINE       0     0     0

    errors: No known data errors

-

Yep, and just as it passed 8% we had a power blink from a lightning storm, and I had intentionally not plugged this server into the UPS. It failed gracefully, but the resilver restarted from zero. I have perfect drives that I will replace this one with, but why not experience all of ZFS's quirks while I have the chance. If the drive fails during resilvering I won't be surprised. If ZFS can manage resilvering without getting confused on this dingy hard drive, I will be impressed.

-

@glennv @jortan I have installed a drive that is not perfect and started the resilvering (this drive has some questionable sectors). Might as well start with the worst possible case and see what happens if resilvering fails. (grin) I have Docker and VMs running from the degraded mirror while the resilvering is going on. Hopefully this doesn't confuse the resilvering. How many days should a resilver take to complete on a 3TB drive? It's been running for over 24 hours now.

    zpool status
      pool: MFS2
     state: DEGRADED
    status: One or more devices is currently being resilvered. The pool will
            continue to function, possibly in a degraded state.
    action: Wait for the resilver to complete.
      scan: resilver in progress since Mon Jul 26 18:28:07 2021
            545G scanned at 4.30M/s, 58.6G issued at 473K/s, 869G total
            49.1G resilvered, 6.75% done, no estimated completion time
    config:

            NAME                       STATE     READ WRITE CKSUM
            MFS2                       DEGRADED     0     0     0
              mirror-0                 DEGRADED     0     0     0
                replacing-0            DEGRADED     0     0     0
                  3739555303482842933  FAULTED      0     0     0  was /dev/sdf1
                  sdi                  ONLINE       0     0     0  (resilvering)
                sdf                    ONLINE       0     0     0

    errors: No known data errors
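For a rough answer to the "how many days" question, the figures in the scan line can be turned into an estimate. This is only a sketch: it assumes the issued rate stays constant (unlikely on a drive with questionable sectors) and that the G and K suffixes are binary units. `eta_days` is a hypothetical helper written for this post, not a ZFS tool.

```shell
# eta_days TOTAL_G ISSUED_G RATE_K_PER_S
# Prints the estimated days remaining, assuming a constant issue rate.
eta_days() {
  awk -v total="$1" -v issued="$2" -v rate="$3" 'BEGIN {
    remaining_k = (total - issued) * 1024 * 1024   # GiB still to issue, in KiB
    printf "%.1f\n", remaining_k / rate / 86400    # seconds converted to days
  }'
}

# Figures from the status output above: 869G total, 58.6G issued at 473K/s
eta_days 869 58.6 473
```

With these numbers it prints 20.8, so roughly three weeks if the rate never improves.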

-

(bump) Has anyone done a zpool replace? What is the unRaid syntax for the replaced drive? zpool status is reporting strange device names above.

-

unRaid has a dedicated following, but in some areas of general data integrity and security it hasn't developed as far as it has with Docker and VM support. I would like OpenZFS baked in at some point, and I have seen some interest from the developers, but they have to get around the Oracle legal bogeyman. I have seen no discussion around SnapRAID. Check out ZFS here.

-

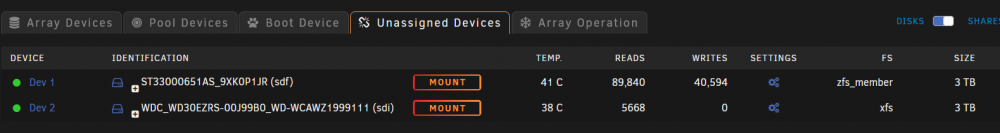

I need to do a zpool replace, but what is the syntax for use with unRaid? I'm not sure how to reference the failed disk, and I need to replace it without trashing the ZFS mirror. A 2-disk mirror has dropped one device, and Unassigned Devices no longer sees the failing drive at all. I rebooted and swapped the slots for the 2 mirrored disks, and the same problem remains: the failure follows the missing disk.

    zpool status -x
      pool: MFS2
     state: DEGRADED
    status: One or more devices could not be used because the label is missing or
            invalid. Sufficient replicas exist for the pool to continue
            functioning in a degraded state.
    action: Replace the device using 'zpool replace'.
       see: https://openzfs.github.io/openzfs-docs/msg/ZFS-8000-4J
      scan: scrub repaired 0B in 03:03:13 with 0 errors on Thu Apr 29 08:32:11 2021
    config:

            NAME                     STATE     READ WRITE CKSUM
            MFS2                     DEGRADED     0     0     0
              mirror-0               DEGRADED     0     0     0
                3739555303482842933  FAULTED      0     0     0  was /dev/sdf1
                sdf                  ONLINE       0     0     0

My ZFS pool had 2 x 3TB Seagate spinning rust for VMs and Docker. Both VMs and Docker seem to continue to work with a failed drive, but aren't working as fast. I have installed another 3TB drive that I want to replace the failed drive with. Here is Unassigned Devices showing the current ZFS disk and a replacement disk that was previously in the array.

I will do the zpool replace, but what is the syntax for use with unRaid? Is it zpool replace 3739555303482842933 sdi
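On the syntax question: `zpool replace` takes the pool name first, then the old device (the numeric GUID shown by `zpool status` can stand in when the device node is gone), then the new device. Below is a minimal dry-run sketch, assuming the replacement disk really is `/dev/sdi`. sdX letters can change between boots on Linux, so that value is an assumption to verify first. The script only prints the command rather than running it.

```shell
# Values taken from the zpool status output in this post.
POOL="MFS2"
OLD_GUID="3739555303482842933"   # the FAULTED member, referenced by GUID
NEW_DEV="/dev/sdi"               # assumption: confirm this is the new, empty disk

# Build the command and print it for review; nothing is executed here.
CMD="zpool replace $POOL $OLD_GUID $NEW_DEV"
echo "$CMD"
```

Once the printed line looks right, it can be run by hand, and `zpool status` should then show the new disk resilvering.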

-

Great $995 Intel MB/CPU/RAM Bundle on US eBay if Looking to Upgrade

tr0910 replied to Hoopster's topic in Good Deals!

Yeah, same here.

-

Passthrough of the iGPU is not often done, and is not required for most Win10 use via RDP. Mine was not passed through.

-

I don't have this combo, but a similar one. Windows 10 will load and run fine with the integrated graphics on mine. I'm using Windows RDP for most VM access. The only downside is that video performance is nowhere near bare metal. It is perfectly usable for Office applications and Internet browsers, and totally fine for programming, but weak for anything where you need quick response from keyboard and mouse, such as gaming. The upside is that RDP runs over the network, so there is no separate cabling for video or mouse. For bare metal performance a dedicated video card for each VM is required, and then you need video and keyboard / mouse cabling.

-

(SOLVED) [6.9.2] Help understanding preclear results

tr0910 replied to hoppers99's topic in General Support

Every drive has a death sentence. But just like Mark Twain, "the rumors of my demise are greatly exaggerated". It's not so much the number of reallocated sectors that is worrying, but whether the drive is stable and not adding more reallocated sectors on a regular basis. Use it with caution (maybe run a second preclear to see what happens), and if it doesn't grow any more bad sectors, put it to work. I have had 10 yr old drives continue to perform flawlessly, and I have had them die sudden and violent deaths much younger. Keep your parity valid, and also back up important data separately. Parity is not backup.

-

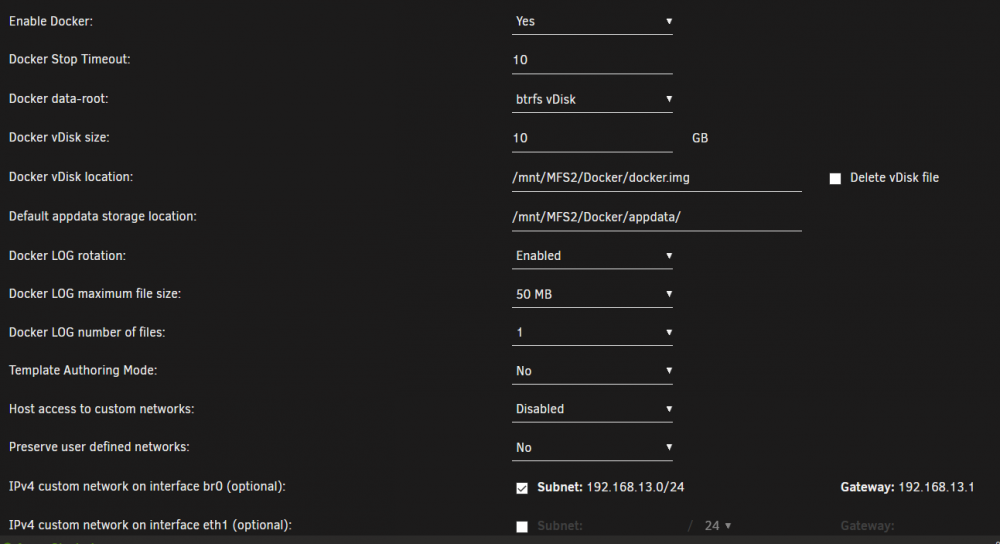

I've attempted to move the Docker image to ZFS along with appdata. VMs are working. Docker refuses to start. Do I need to adjust the BTRFS image type? Correction: VMs are not working once the old cache drive is disconnected.

-

ZFS was not responsible for the problem. I have a small cache drive, and some of the files for Docker and the VMs still come from there at startup. This drive didn't show up on boot. Powering down and making sure this drive came up resulted in VMs and Docker behaving normally. I need to get all appdata files moved to ZFS and off this drive, as I am not using it for anything else.