-

Posts

450 -

Joined

-

Last visited


Posts posted by francrouge

-

-

On 11/4/2022 at 10:29 AM, Bjur said:

I have the same problem even after manually using the permission script from bolognaise. Any thoughts?

Did you find a solution? I'm getting the same thing.

-

Hi guys

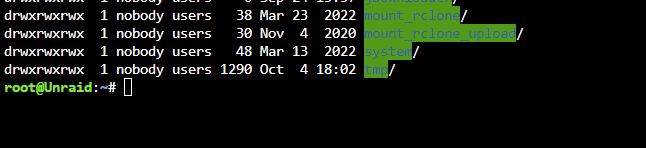

Quick question: when you choose the "move" feature, is it supposed to keep the folders in the rclone upload share?

My script uploads the files along with their folders, but it does not delete the empty folders locally afterwards.
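For context, `rclone move` only removes source directories when `--delete-empty-src-dirs` is passed, and a directory that still holds a file skipped by `--min-age` or an `--exclude` filter is not empty, so it stays behind. A minimal sketch of a separate local cleanup pass, assuming GNU `find`; the function name `prune_empty_dirs` is hypothetical and is not part of the upload script:

```shell
#!/bin/bash
# Hypothetical helper: remove empty directories below a root, keeping the root itself.
prune_empty_dirs() {
    local root="$1"
    [[ -d "$root" ]] || return 1
    # -mindepth 1 keeps the root directory.
    # -empty matches only directories with no entries.
    # -delete implies depth-first traversal, so nested empty directories collapse bottom-up.
    find "$root" -mindepth 1 -type d -empty -delete
}
```

It could be called after the upload finishes, for example `prune_empty_dirs "/mnt/user/mount_rclone_upload/gdrive_media_vfs"` (path built from the settings in the script; adjust to your own share).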

#!/bin/bash

######################
### Upload Script ####
######################
### Version 0.95.5 ###
######################

####### EDIT ONLY THESE SETTINGS #######

# INSTRUCTIONS
# 1. Edit the settings below to match your setup
# 2. NOTE: enter RcloneRemoteName WITHOUT ':'
# 3. Optional: Add additional commands or filters
# 4. Optional: Use bind mount settings for potential traffic shaping/monitoring
# 5. Optional: Use service accounts in your upload remote
# 6. Optional: Use backup directory for rclone sync jobs

# REQUIRED SETTINGS
RcloneCommand="move" # choose your rclone command e.g. move, copy, sync
RcloneRemoteName="gdrive_media_vfs" # Name of rclone remote mount WITHOUT ':'.
RcloneUploadRemoteName="gdrive_media_vfs" # If you have a second remote created for uploads put it here. Otherwise use the same remote as RcloneRemoteName.
LocalFilesShare="/mnt/user/mount_rclone_upload" # location of the local files without trailing slash you want to rclone to use
RcloneMountShare="/mnt/user/mount_rclone" # where your rclone mount is located without trailing slash e.g. /mnt/user/mount_rclone
MinimumAge="15m" # sync files suffix ms|s|m|h|d|w|M|y
ModSort="ascending" # "ascending" oldest files first, "descending" newest files first

# Note: Again - remember to NOT use ':' in your remote name above

# Bandwidth limits: specify the desired bandwidth in kBytes/s, or use a suffix b|k|M|G. Or 'off' or '0' for unlimited. The script uses --drive-stop-on-upload-limit which stops the script if the 750GB/day limit is achieved, so you no longer have to slow 'trickle' your files all day if you don't want to e.g. could just do an unlimited job overnight.
BWLimit1Time="01:00"
BWLimit1="10M"
BWLimit2Time="08:00"
BWLimit2="10M"
BWLimit3Time="16:00"
BWLimit3="10M"

# OPTIONAL SETTINGS

# Add name to upload job
JobName="_daily_upload" # Adds custom string to end of checker file. Useful if you're running multiple jobs against the same remote.

# Add extra commands or filters
Command1="--exclude downloads/**"
Command2=""
Command3=""
Command4=""
Command5=""
Command6=""
Command7=""
Command8=""

# Bind the mount to an IP address
CreateBindMount="N" # Y/N. Choose whether or not to bind traffic to a network adapter.
RCloneMountIP="192.168.1.253" # Choose IP to bind upload to.
NetworkAdapter="eth0" # choose your network adapter. eth0 recommended.
VirtualIPNumber="1" # creates eth0:x e.g. eth0:1.

# Use Service Accounts. Instructions: https://github.com/xyou365/AutoRclone
UseServiceAccountUpload="N" # Y/N. Choose whether to use Service Accounts.
ServiceAccountDirectory="/mnt/user/appdata/other/rclone/service_accounts" # Path to your Service Account's .json files.
ServiceAccountFile="sa_gdrive_upload" # Enter characters before counter in your json files e.g. for sa_gdrive_upload1.json -->sa_gdrive_upload100.json, enter "sa_gdrive_upload".
CountServiceAccounts="5" # Integer number of service accounts to use.

# Is this a backup job
BackupJob="N" # Y/N. Syncs or Copies files from LocalFilesLocation to BackupRemoteLocation, rather than moving from LocalFilesLocation/RcloneRemoteName
BackupRemoteLocation="backup" # choose location on mount for deleted sync files
BackupRemoteDeletedLocation="backup_deleted" # choose location on mount for deleted sync files
BackupRetention="90d" # How long to keep deleted sync files suffix ms|s|m|h|d|w|M|y

####### END SETTINGS #######

###############################################################################
##### DO NOT EDIT BELOW THIS LINE UNLESS YOU KNOW WHAT YOU ARE DOING #####
###############################################################################

####### Preparing mount location variables #######
if [[ $BackupJob == 'Y' ]]; then
    LocalFilesLocation="$LocalFilesShare"
    echo "$(date "+%d.%m.%Y %T") INFO: *** Backup selected. Files will be copied or synced from ${LocalFilesLocation} for ${RcloneUploadRemoteName} ***"
else
    LocalFilesLocation="$LocalFilesShare/$RcloneRemoteName"
    echo "$(date "+%d.%m.%Y %T") INFO: *** Rclone move selected. Files will be moved from ${LocalFilesLocation} for ${RcloneUploadRemoteName} ***"
fi

RcloneMountLocation="$RcloneMountShare/$RcloneRemoteName" # Location of rclone mount

####### create directory for script files #######
mkdir -p /mnt/user/appdata/other/rclone/remotes/$RcloneUploadRemoteName #for script files

####### Check if script already running ##########
echo "$(date "+%d.%m.%Y %T") INFO: *** Starting rclone_upload script for ${RcloneUploadRemoteName} ***"
if [[ -f "/mnt/user/appdata/other/rclone/remotes/$RcloneUploadRemoteName/upload_running$JobName" ]]; then
    echo "$(date "+%d.%m.%Y %T") INFO: Exiting as script already running."
    exit
else
    echo "$(date "+%d.%m.%Y %T") INFO: Script not running - proceeding."
    touch /mnt/user/appdata/other/rclone/remotes/$RcloneUploadRemoteName/upload_running$JobName
fi

####### check if rclone installed ##########
echo "$(date "+%d.%m.%Y %T") INFO: Checking if rclone installed successfully."
if [[ -f "$RcloneMountLocation/mountcheck" ]]; then
    echo "$(date "+%d.%m.%Y %T") INFO: rclone installed successfully - proceeding with upload."
else
    echo "$(date "+%d.%m.%Y %T") INFO: rclone not installed - will try again later."
    rm /mnt/user/appdata/other/rclone/remotes/$RcloneUploadRemoteName/upload_running$JobName
    exit
fi

####### Rotating serviceaccount.json file if using Service Accounts #######
if [[ $UseServiceAccountUpload == 'Y' ]]; then
    cd /mnt/user/appdata/other/rclone/remotes/$RcloneUploadRemoteName/
    CounterNumber=$(find -name 'counter*' | cut -c 11,12)
    CounterCheck="1"
    if [[ "$CounterNumber" -ge "$CounterCheck" ]];then
        echo "$(date "+%d.%m.%Y %T") INFO: Counter file found for ${RcloneUploadRemoteName}."
    else
        echo "$(date "+%d.%m.%Y %T") INFO: No counter file found for ${RcloneUploadRemoteName}. Creating counter_1."
        touch /mnt/user/appdata/other/rclone/remotes/$RcloneUploadRemoteName/counter_1
        CounterNumber="1"
    fi
    ServiceAccount="--drive-service-account-file=$ServiceAccountDirectory/$ServiceAccountFile$CounterNumber.json"
    echo "$(date "+%d.%m.%Y %T") INFO: Adjusted service_account_file for upload remote ${RcloneUploadRemoteName} to ${ServiceAccountFile}${CounterNumber}.json based on counter ${CounterNumber}."
else
    echo "$(date "+%d.%m.%Y %T") INFO: Uploading using upload remote ${RcloneUploadRemoteName}"
    ServiceAccount=""
fi

####### Upload files ##########

# Check bind option
if [[ $CreateBindMount == 'Y' ]]; then
    echo "$(date "+%d.%m.%Y %T") INFO: *** Checking if IP address ${RCloneMountIP} already created for upload to remote ${RcloneUploadRemoteName}"
    ping -q -c2 $RCloneMountIP > /dev/null # -q quiet, -c number of pings to perform
    if [ $? -eq 0 ]; then # ping returns exit status 0 if successful
        echo "$(date "+%d.%m.%Y %T") INFO: *** IP address ${RCloneMountIP} already created for upload to remote ${RcloneUploadRemoteName}"
    else
        echo "$(date "+%d.%m.%Y %T") INFO: *** Creating IP address ${RCloneMountIP} for upload to remote ${RcloneUploadRemoteName}"
        ip addr add $RCloneMountIP/24 dev $NetworkAdapter label $NetworkAdapter:$VirtualIPNumber
    fi
else
    RCloneMountIP=""
fi

# Remove --delete-empty-src-dirs if rclone sync or copy
if [[ $RcloneCommand == 'move' ]]; then
    echo "$(date "+%d.%m.%Y %T") INFO: *** Using rclone move - will add --delete-empty-src-dirs to upload."
    DeleteEmpty="--delete-empty-src-dirs "
else
    echo "$(date "+%d.%m.%Y %T") INFO: *** Not using rclone move - will remove --delete-empty-src-dirs to upload."
    DeleteEmpty=""
fi

# Check --backup-directory
if [[ $BackupJob == 'Y' ]]; then
    echo "$(date "+%d.%m.%Y %T") INFO: *** Will backup to ${BackupRemoteLocation} and use ${BackupRemoteDeletedLocation} as --backup-directory with ${BackupRetention} retention for ${RcloneUploadRemoteName}."
    LocalFilesLocation="$LocalFilesShare"
    BackupDir="--backup-dir $RcloneUploadRemoteName:$BackupRemoteDeletedLocation"
else
    BackupRemoteLocation=""
    BackupRemoteDeletedLocation=""
    BackupRetention=""
    BackupDir=""
fi

# process files
rclone $RcloneCommand $LocalFilesLocation $RcloneUploadRemoteName:$BackupRemoteLocation $ServiceAccount $BackupDir \
    --user-agent="$RcloneUploadRemoteName" \
    -vv \
    --buffer-size 512M \
    --drive-chunk-size 512M \
    --max-transfer 725G --tpslimit 3 \
    --checkers 3 \
    --transfers 3 \
    --order-by modtime,$ModSort \
    --min-age $MinimumAge \
    $Command1 $Command2 $Command3 $Command4 $Command5 $Command6 $Command7 $Command8 \
    --exclude *fuse_hidden* \
    --exclude *_HIDDEN \
    --exclude .recycle** \
    --exclude .Recycle.Bin/** \
    --exclude *.backup~* \
    --exclude *.partial~* \
    --drive-stop-on-upload-limit \
    --bwlimit "${BWLimit1Time},${BWLimit1} ${BWLimit2Time},${BWLimit2} ${BWLimit3Time},${BWLimit3}" \
    --bind=$RCloneMountIP $DeleteEmpty

# Delete old files from mount
if [[ $BackupJob == 'Y' ]]; then
    echo "$(date "+%d.%m.%Y %T") INFO: *** Removing files older than ${BackupRetention} from $BackupRemoteLocation for ${RcloneUploadRemoteName}."
    rclone delete --min-age $BackupRetention $RcloneUploadRemoteName:$BackupRemoteDeletedLocation
fi

####### Remove Control Files ##########

# update counter and remove other control files
if [[ $UseServiceAccountUpload == 'Y' ]]; then
    if [[ "$CounterNumber" == "$CountServiceAccounts" ]];then
        rm /mnt/user/appdata/other/rclone/remotes/$RcloneUploadRemoteName/counter_*
        touch /mnt/user/appdata/other/rclone/remotes/$RcloneUploadRemoteName/counter_1
        echo "$(date "+%d.%m.%Y %T") INFO: Final counter used - resetting loop and created counter_1."
    else
        rm /mnt/user/appdata/other/rclone/remotes/$RcloneUploadRemoteName/counter_*
        CounterNumber=$((CounterNumber+1))
        touch /mnt/user/appdata/other/rclone/remotes/$RcloneUploadRemoteName/counter_$CounterNumber
        echo "$(date "+%d.%m.%Y %T") INFO: Created counter_${CounterNumber} for next upload run."
    fi
else
    echo "$(date "+%d.%m.%Y %T") INFO: Not utilising service accounts."
fi

# remove dummy file
rm /mnt/user/appdata/other/rclone/remotes/$RcloneUploadRemoteName/upload_running$JobName
echo "$(date "+%d.%m.%Y %T") INFO: Script complete"

exit

-

12 hours ago, Roudy said:

Trying to circle back on what I've missed. Have you gotten it to work? You might try running the "Docker Safe New Perms" located under the "Tools" tab and see if that helps at all. You might want to give it a restart as well. If that still doesn't work, we can try to look at your SMB settings.

Hi

I did try it, but I think I need to check the SMB config.

Any hints on what I should check?

thx

-

#1 Does anyone have hints for getting files to start playing faster?

Trying to play a 1080p file at 10 Mbit/s takes about 1 minute to start.

# create rclone mount
rclone mount \
$Command1 $Command2 $Command3 $Command4 $Command5 $Command6 $Command7 $Command8 \
--allow-other \
--umask 000 \
--uid 99 \
--gid 100 \
--dir-cache-time $RcloneMountDirCacheTime \
--attr-timeout $RcloneMountDirCacheTime \
--log-level INFO \
--poll-interval 10s \
--cache-dir=$RcloneCacheShare/cache/$RcloneRemoteName \
--drive-pacer-min-sleep 10ms \
--drive-pacer-burst 1000 \
--vfs-cache-mode full \
--vfs-cache-max-size $RcloneCacheMaxSize \
--vfs-cache-max-age $RcloneCacheMaxAge \
--vfs-read-ahead 500m \
--bind=$RCloneMountIP \
$RcloneRemoteName: $RcloneMountLocation &

#2 Also, do you know if it's possible to direct play through Plex, or is it always converting? 🤔
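One way to narrow down question #1 is to measure how long the mount itself takes to deliver the start of a file, independent of Plex. The sketch below is only an illustration, assuming GNU `date` and `dd`; the function name `time_first_read` is hypothetical:

```shell
#!/bin/bash
# Hypothetical helper: time how long reading the first N MiB of a file takes, in milliseconds.
time_first_read() {
    local file="$1" mib="${2:-10}"
    local start end
    start=$(date +%s%N)   # nanoseconds since the epoch (GNU date)
    # Read only the first $mib MiB and discard the data.
    dd if="$file" of=/dev/null bs=1M count="$mib" status=none || return 1
    end=$(date +%s%N)
    echo $(( (end - start) / 1000000 ))
}
```

Running `time_first_read "$RcloneMountLocation/path/to/movie.mkv" 10` (the file path is a placeholder) on a file that is not yet in the VFS cache gives a rough idea of whether the delay comes from the rclone mount or from the player.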

-

22 hours ago, Kaizac said:

Did you update to the latest stable? They fixed something with samba again.

unraid 6.11.1

rclone: 1.60.0-beta.6481.6654b6611

-

On 10/7/2022 at 3:56 PM, Roudy said:

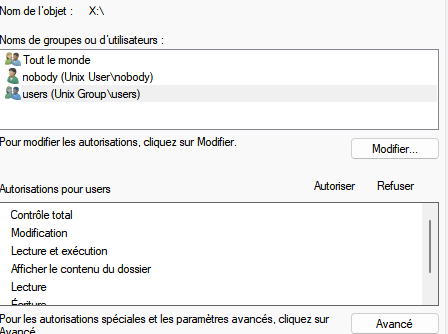

Can you temporarily make it public and see if you're able to edit the files? Want to make sure it is actually inheriting those permissions.

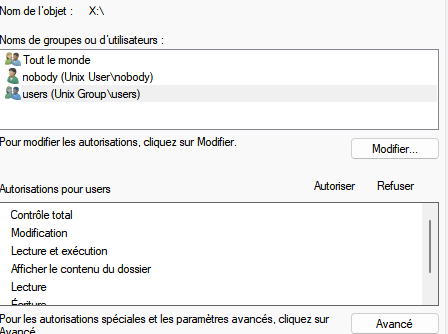

I tried it just now and it does not seem to have any effect.

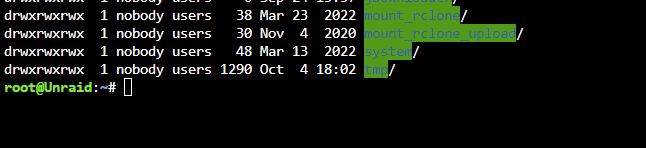

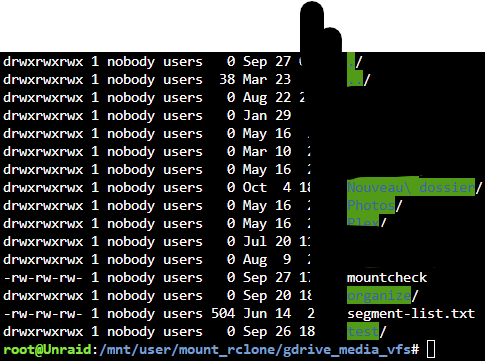

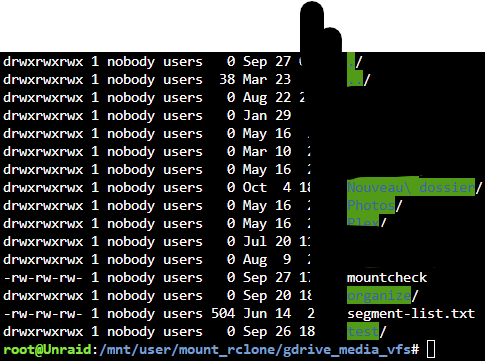

I can create files and folders, but I can't delete or rename them, and this only happens in my mount_rclone folder.

thx

-

-

Hi all, another question about the upload script.

I'm getting this now:

2022/10/06 05:21:57 DEBUG : pacer: Reducing sleep to 90.241631ms
2022/10/06 05:21:57 DEBUG : pacer: Reducing sleep to 143.701353ms
2022/10/06 05:21:57 DEBUG : pacer: Reducing sleep to 222.186098ms
2022/10/06 05:21:57 DEBUG : pacer: Reducing sleep to 305.125972ms
2022/10/06 05:21:57 DEBUG : pacer: Reducing sleep to 402.588316ms
2022/10/06 05:21:57 DEBUG : pacer: Reducing sleep to 499.64329ms
2022/10/06 05:21:57 DEBUG : pacer: Reducing sleep to 589.545348ms
2022/10/06 05:21:57 DEBUG : pacer: Reducing sleep to 676.822802ms
2022/10/06 05:21:57 DEBUG : pacer: Reducing sleep to 680.141577ms
2022/10/06 05:21:57 DEBUG : pacer: Reducing sleep to 694.895337ms
2022/10/06 05:21:58 DEBUG : pacer: Reducing sleep to 307.907209ms
2022/10/06 05:21:59 DEBUG : pacer: Reducing sleep to 0s
2022/10/06 05:21:59 DEBUG : pacer: Reducing sleep to 78.586386ms
2022/10/06 05:21:59 DEBUG : pacer: Reducing sleep to 159.649286ms
2022/10/06 05:21:59 DEBUG : pacer: Reducing sleep to 198.168036ms
2022/10/06 05:21:59 DEBUG : pacer: Reducing sleep to 245.411694ms
2022/10/06 05:21:59 DEBUG : pacer: Reducing sleep to 330.517403ms
2022/10/06 05:21:59 DEBUG : pacer: Reducing sleep to 429.05441ms
2022/10/06 05:21:59 DEBUG : pacer: Reducing sleep to 523.306138ms
2022/10/06 05:21:59 DEBUG : pacer: Reducing sleep to 609.645869ms
2022/10/06 05:21:59 DEBUG : pacer: Reducing sleep to 690.942129ms
2022/10/06 05:21:59 DEBUG : pacer: Reducing sleep to 681.587878ms
2022/10/06 05:21:59 DEBUG : pacer: Reducing sleep to 639.166177ms
2022/10/06 05:22:00 DEBUG : pacer: Reducing sleep to 66.904708ms
2022/10/06 05:22:01 DEBUG : pacer: Reducing sleep to 0s
2022/10/06 05:22:01 DEBUG : pacer: Reducing sleep to 87.721382ms
2022/10/06 05:22:01 DEBUG : pacer: Reducing sleep to 187.616721ms
2022/10/06 05:22:01 DEBUG : pacer: Reducing sleep to 186.994169ms
2022/10/06 05:22:01 DEBUG : pacer: Reducing sleep to 285.041735ms
2022/10/06 05:22:01 DEBUG : pacer: Reducing sleep to 352.336246ms
2022/10/06 05:22:01 DEBUG : pacer: Reducing sleep to 449.015128ms
2022/10/06 05:22:01 DEBUG : pacer: Reducing sleep to 547.412525ms
2022/10/06 05:22:01 INFO : Transferred: 0 B / 0 B, -, 0 B/s, ETA -
Elapsed time: 1h22m0.4s

Do I need to worry? I have been seeing this for maybe the last week.

I put my upload script in the post.

thx all
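A note on the log above: the `pacer` lines are DEBUG-level output, which the upload script enables with `-vv`, and `Transferred: 0 B / 0 B` suggests that no files matched the filters during that run. One way to check whether anything is actually waiting to upload is to count local files older than the `MinimumAge` setting. This is a rough sketch assuming GNU `find`; `count_upload_candidates` is a hypothetical name, and `-mmin` only approximates rclone's `--min-age 15m` (the script's `--exclude` filters are not applied here):

```shell
#!/bin/bash
# Hypothetical helper: count files under a directory last modified more than N minutes ago.
count_upload_candidates() {
    local dir="$1" min_age_minutes="${2:-15}"
    # -mmin +N matches files whose data was last modified more than N minutes ago.
    find "$dir" -type f -mmin +"$min_age_minutes" | wc -l
}
```

For example, `count_upload_candidates "/mnt/user/mount_rclone_upload/gdrive_media_vfs" 15` printing `0` would be consistent with a run that transfers nothing.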

-

7 hours ago, Kaizac said:

Krusader working is not surprising since it uses the root account, so it's not limited by permissions. I looked over your scripts quickly and I see nothing strange. So I think it's a Samba issue. Did you upgrade to 6.11? There were changes to Samba in there, maybe check that out? Could explain why it stopped working.

Wow, I'm amazed your script for Server A worked....

You configured your Gcrypt as : gsuite:/crypt/media. But the correct method would be gsuite:crypt/media.

Then you also had your cache-dir in your A script to /cache. Did that work? In unraid you will have to define the actual path. Seems you did that correctly in the new script.

On the A script you didn't close the mount script with a "&", in the new script this is fixed already by default.

In your new script you put in --allow-non-empty as extra command, this is very risky to do. So make sure you thought about doing that.

What I find most worrying is that your crypt doesn't actually encrypt anything. Is that by choice? If you do want to switch to an actually encrypted Crypt you will have to send all your files through the crypt to your storage. It won't automatically encrypt all the files within that mount.

Your specific questions:

1. Don't use "Run script" in User Scripts. Always use run script in the background when running the script. If you use the run script option it will just stop the script as soon as you close the popup. That might explain why your mount drops right away.

2. You have the rclone commands here: https://rclone.org/commands/. Other than that you can for example use "rclone size gcrypt:" to see how big your gcrypt mount is in the cloud.

3. You can unmount with fusermount -uz /path/to/remote. Make sure you don't have any dockers or transfers running with access to that mount though, because they will start writing in your merger folder causing problems when you mount again.
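Point 3 above can be sketched as a small guard around `fusermount -uz`. This is only an illustration, assuming a Linux system with `/proc/self/mounts`; the function names `is_mounted` and `safe_unmount` are hypothetical, and the check does not handle mount points containing spaces (those appear escaped in the mounts table):

```shell
#!/bin/bash
# Hypothetical helper: succeed if the given path is listed as a mount point.
is_mounted() {
    # /proc/self/mounts has one mount per line; field 2 is the mount point.
    awk -v p="$1" '$2 == p { found = 1 } END { exit !found }' /proc/self/mounts
}

# Hypothetical helper: lazily unmount a FUSE mount only if it is currently mounted.
safe_unmount() {
    local mount_point="$1"
    if is_mounted "$mount_point"; then
        fusermount -uz "$mount_point"
    else
        echo "INFO: $mount_point is not mounted - nothing to do."
    fi
}
```

Stopping any dockers or transfers that use the mount still has to happen first, as described in the post; the sketch does not do that.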

Yes, 6.11 and Windows 11.

I will check that, thanks a lot.

-

15 hours ago, Kaizac said:

It's a pretty common issue throughout the latest topics. It's probably because the files are owned by root. Did you check the files permissions which you try to open?

Hi, yes. In Krusader I have no problem, but from Windows over network shares it's not working anymore.

On Windows I can't edit, rename, or delete anything on the gdrive mount. My local shares are OK.

I will also add my mount and upload scripts.

Maybe I'm missing something.

Do you think I should try the new permissions feature?

thx

-

Is anyone else having issues with permissions? I can't edit any file or folder from Windows over a network share.

-

Hi guys

I'm having a problem with file permissions inside my share. Folders that were created a long time ago (years) are OK, but every new one I create at the root of my main folder is blocked. Files only get 666 permissions, and I cannot rename them, etc.

Can anyone help me, please?

Unraid 6.10.3

# create rclone mount
rclone mount \
$Command1 $Command2 $Command3 $Command4 $Command5 $Command6 $Command7 $Command8 \
--allow-other \
--umask 000 \
--dir-cache-time $RcloneMountDirCacheTime \
--attr-timeout $RcloneMountDirCacheTime \
--log-level INFO \
--poll-interval 10s \
--cache-dir=$RcloneCacheShare/cache/$RcloneRemoteName \
--drive-pacer-min-sleep 10ms \
--drive-pacer-burst 1000 \
--vfs-cache-mode full \
--vfs-cache-max-size $RcloneCacheMaxSize \
--vfs-cache-max-age $RcloneCacheMaxAge \
--vfs-read-ahead 500m \
--bind=$RCloneMountIP \
$RcloneRemoteName: $RcloneMountLocation &

thx
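Note that this mount command has no `--uid`/`--gid` flags, while another version of the mount command in these posts passes `--uid 99 --gid 100` (on Unraid, 99:100 is normally nobody:users). To see which entries are owned by someone else, something like the following could help. It is a sketch assuming GNU `find`; `list_foreign_owned` is a hypothetical name:

```shell
#!/bin/bash
# Hypothetical helper: list entries not owned by the expected uid:gid (default 99:100).
list_foreign_owned() {
    local dir="$1" uid="${2:-99}" gid="${3:-100}"
    # Prints "uid:gid mode path" for every entry whose owner or group differs.
    find "$dir" \( ! -uid "$uid" -o ! -gid "$gid" \) -printf '%U:%G %m %p\n'
}
```

For example, `list_foreign_owned /mnt/user/mount_rclone` would show entries owned by root (`0:0`) if the mount was created without those flags.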

-

I upgraded my Unraid to 6.11 this morning.

And I keep getting "array undefined".

I can't get the diagnostics tool to work either.

-

5 hours ago, DZMM said:

I agree. The only "hard" bits on other systems is installing rclone and mergerfs. But, once you've done that the scripts should work on pretty much any platform if you change the paths and can setup a cron job.

E.g. I now use a seedbox to do my nzbget and rutorrent downloads and I then move completed files to google drive using my rclone scripts, with *arr running locally sending jobs to the seedbox and then managing the completed files on gdrive i.e. I don't really have a "local" anymore as no files exist locally but the scripts can still handle this.

Hi DZMM

So, just to be sure: you transfer the files from your seedbox directly to Gdrive, with encryption?

thx a lot

-

6 hours ago, Kaizac said:

Man I had the same and been spending a week on it, but found the solution for my situation yesterday.

Turns out it will do this when you hit the api limit/download quota on your Google drive mount which you use for your Plex.

I was rebuilding libraries, so it can happen, but this was too often for me.

Turned out Plex Server had the task under Settings > Libraries for chapter previews enabled on both when adding files and during maintenance. This will make Plex go through every video and create chapter thumbnails.

Once I disabled that the problem was solved and Plex became responsive again.

Mmm, interesting.

So I set mine to never; it was all set to run as a scheduled task.

I will check to see if it helps.

But what you're telling me about the API limit/ban makes sense too.

thx

-

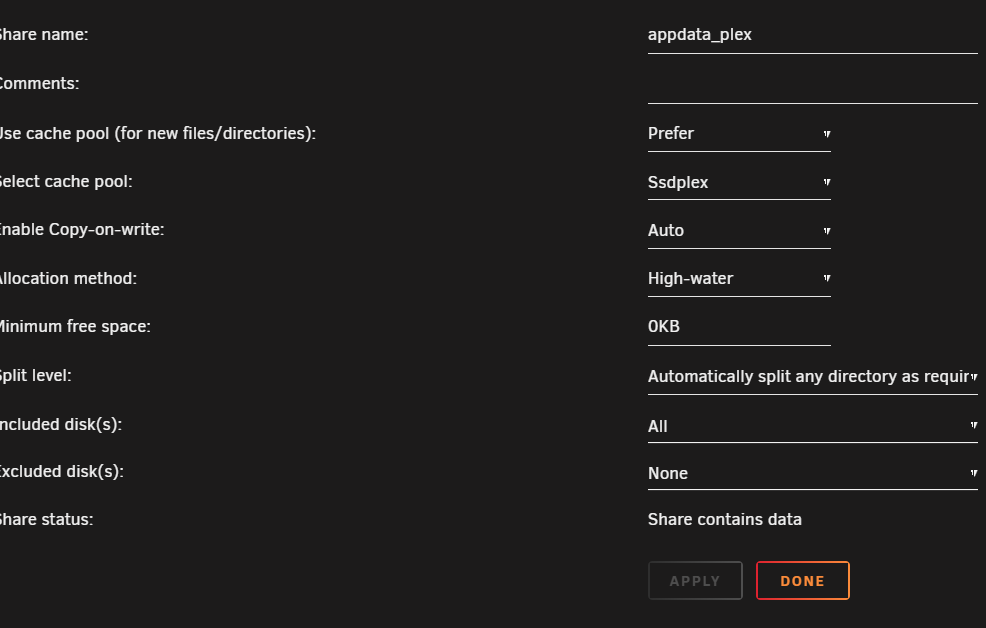

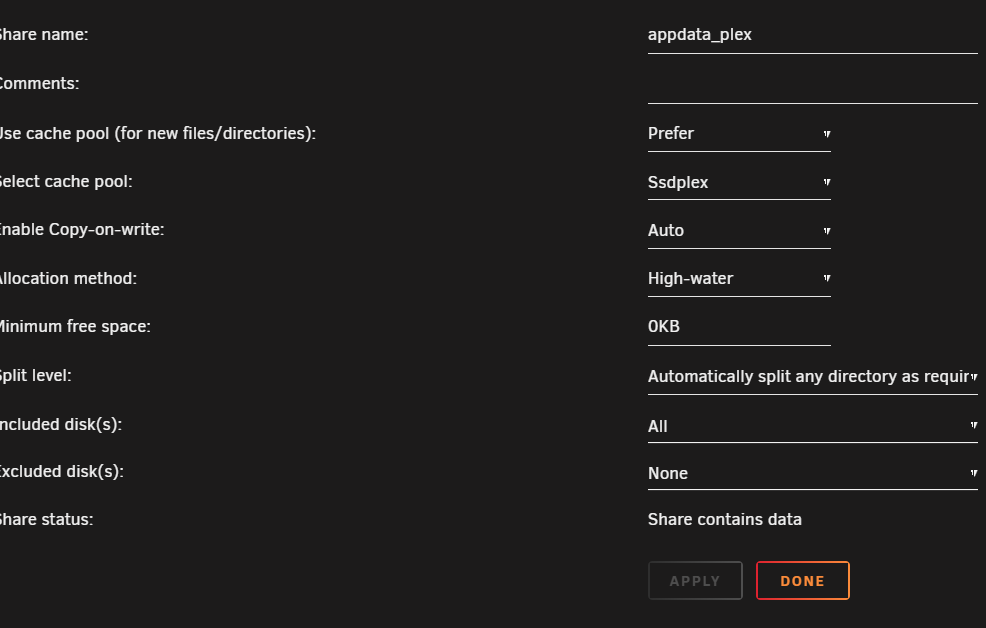

Hi guys, I need help with "Sqlite3: Sleeping for 200ms to retry busy DB".

I currently have an SSD just for appdata.

The appdata folder seems to be creating a share with the folder.

Any idea how to fix this?

I have already optimized the library, etc.

Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry busy DB.
Sqlite3: Sleeping for 200ms to retry
Critical: libusb_init failed

-

On 8/20/2022 at 4:23 AM, Kaizac said:

Still having issues? Cause for me it's working fine using your upload script. I have 80-100 rotating SA's though.

I've never been able to stop the array once I started using rclone mounts. I think the constant connections are preventing it. You could try shutting down your dockers first and make sure there are no file transfers going on. But I just reboot if I need the array down.

I do use direct mounts for certain processes, like my Nextcloud photo backups go straight into the Team Drive (I would not recommend using the personal Google drive anymore, only Team drives). I always use encrypted mounts, but depending on what you are storing you might not mind that it's unencrypted.

I use the normal mounting commands, although I currently don't use the caching ability that Rclone offers.

But for downloading dockers and such I think you need to check whether the download client is downloading in increments and uploading those or first storing them locally and then sending the whole file to the Gdrive/Team Drive. If it's storing in small increments directly on your mount I suspect it could be a problem for API hits. And I don't like the risk of corruption of files this could potentially offer.

Seeding directly from your Google Drive/Tdrive is for sure going to cause problems with your API hits. Too many small downloads will ruin your API hits. If you want to experiment with that I suggest you use a separate service account and create a mount specifically for that docker/purpose to test. I have separate rclone mounts for some dockers or combination of dockers that can create a lot of API hits and separate it from my Plex mount so they don't interfere with each other.

Do you have any documentation on service accounts? I found some, but it is not up to date. The same goes for Team Drives. Thanks.
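For reference, the upload script in these posts rotates service accounts with a counter: each run uses `$ServiceAccountFile$CounterNumber.json`, then advances the counter and wraps back to 1 after `CountServiceAccounts`. That logic can be sketched on its own as below. The function names `next_counter` and `sa_file_for` are hypothetical, and the wrap uses `>=` rather than the script's string equality, which is a small deliberate change:

```shell
#!/bin/bash
# Hypothetical helper: return the counter to use on the next run, wrapping after the last account.
next_counter() {
    local current="$1" total="$2"
    if (( current >= total )); then
        echo 1
    else
        echo $(( current + 1 ))
    fi
}

# Hypothetical helper: build the JSON key path for a given counter value.
sa_file_for() {
    local dir="$1" prefix="$2" counter="$3"
    echo "$dir/$prefix$counter.json"
}
```

With the settings from the script, `sa_file_for /mnt/user/appdata/other/rclone/service_accounts sa_gdrive_upload 3` yields the path of `sa_gdrive_upload3.json`, which is what the script passes to `--drive-service-account-file`.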

-

Hi guys

I was wondering if any of you map your download docker directly onto gdrive and seed from it.

Questions:

#1 Do you encrypt the files?

#2 Do you use hardlinks?

Is there any tutorial or additional info on how to set this up?

thx

-

-

On 6/24/2022 at 5:50 AM, NLS said:

Will actually need some help from someone...

I got that dreadful pizza floodbot trojan recently and it made me get kicked from crafty discord. Invites don't work, I am probably banned.

Since I have no way to reach crafty team, can SOMEONE page some mod in crafty discord to let me back in? (NLS #7917)

(obviously I cleared the spambot)

I just asked the question and am waiting for someone to answer. Let's see.

-

Hi guys, I'm having an issue with the docker. I've tried stopping it and rebooting; nothing works.

2022-06-21 11:50:33,058 DEBG fd 11 closed, stopped monitoring <POutputDispatcher at 23442495733232 for <Subprocess at 23442495732560 with name start-script in state RUNNING> (stdout)>
2022-06-21 11:50:33,058 DEBG fd 15 closed, stopped monitoring <POutputDispatcher at 23442495733280 for <Subprocess at 23442495732560 with name start-script in state RUNNING> (stderr)>
2022-06-21 11:50:33,058 INFO exited: start-script (exit status 1; not expected)
2022-06-21 11:50:33,058 DEBG received SIGCHLD indicating a child quit

Any ideas? The web GUI is not working.

thx

-

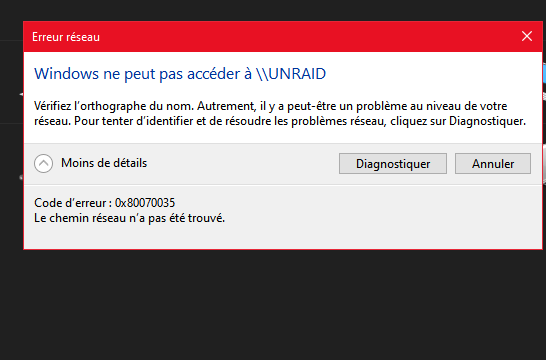

21 minutes ago, Frank1940 said:

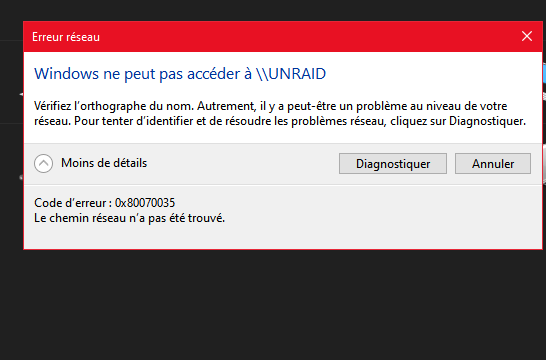

Google error 0x80070035

Do you have other computers that can access the files on your Unraid server?

There is a good chance that you need to follow the setup procedure in the link that I gave you. Microsoft has been increasing security features on Windows 10 and with the auto updating of the OS that they do, they will sometimes change the security settings or remove existing features/services in those updates.

To make the problem even worse, Windows error messages are often confusing and don't always indicate the real cause of the problem.

I will check your link. And no, no other computers are able to access it.

-

10 minutes ago, Frank1940 said:

What is the error message from Win10?

Server Diagnostics?

Have you read this post and the link to the guide thread?

I added the info, sorry.

-

I'm unable to connect to my Unraid shares from Windows 10.

I've tried \\tower and the IP address.

It has not been working for the past 3 days.

Any ideas?

need help - HDD failing or not ?

in General Support

Posted · Edited by francrouge

Hi guys

I'm getting a lot of errors from 2 HDDs: array disk 2 and parity disk 2.

Can anyone tell me if they are dying?

The SMART short self-tests are OK.

thx
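A passing short self-test does not by itself rule out a failing drive, so it can help to look at a few raw SMART attributes as well. The sketch below filters `smartctl -A` output for attributes that are commonly checked (5, 187, 197, 198 for sector problems; 199 often points at cabling rather than the disk). It assumes the usual ATA attribute table layout with the raw value in column 10; `smart_key_attributes` is a hypothetical name:

```shell
#!/bin/bash
# Hypothetical helper: read `smartctl -A` output on stdin and print "NAME RAW_VALUE"
# for a handful of attributes that are commonly watched on ageing drives.
smart_key_attributes() {
    awk '$1 ~ /^[0-9]+$/ && ($1 == 5 || $1 == 187 || $1 == 197 || $1 == 198 || $1 == 199) { print $2, $10 }'
}
```

Usage would be along the lines of `smartctl -A /dev/sdX | smart_key_attributes`, where `/dev/sdX` is a placeholder for the disk in question. Raw values that keep growing between checks are generally more worrying than a stable non-zero value.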

tower-diagnostics-20221114-0418.zip

unraid-smart-20221115-0937 (1).zip unraid-smart-20221115-0937.zip