cablecutter

Members

Posts: 55


Everything posted by cablecutter

-

Q35-3.0 vs i440fx-3.0 for Windows 10 with GPU in 2019?

cablecutter replied to cablecutter's topic in VM Engine (KVM)

For complete-ness, here is my current XML: <?xml version='1.0' encoding='UTF-8'?> <domain type='kvm' id='8'> <name>Windows 10</name> <uuid>15667ac1-2465-fe33-d840-a54b512b265b</uuid> <metadata> <vmtemplate xmlns="unraid" name="Windows 10" icon="windows.png" os="windows10"/> </metadata> <memory unit='KiB'>20971520</memory> <currentMemory unit='KiB'>20971520</currentMemory> <memoryBacking> <nosharepages/> </memoryBacking> <vcpu placement='static'>6</vcpu> <cputune> <vcpupin vcpu='0' cpuset='2'/> <vcpupin vcpu='1' cpuset='3'/> <vcpupin vcpu='2' cpuset='4'/> <vcpupin vcpu='3' cpuset='5'/> <vcpupin vcpu='4' cpuset='8'/> <vcpupin vcpu='5' cpuset='9'/> </cputune> <resource> <partition>/machine</partition> </resource> <os> <type arch='x86_64' machine='pc-i440fx-3.0'>hvm</type> <loader readonly='yes' type='pflash'>/usr/share/qemu/ovmf-x64/OVMF_CODE-pure-efi.fd</loader> <nvram>/etc/libvirt/qemu/nvram/15667ac1-2465-fe33-d840-a54b512b265b_VARS-pure-efi.fd</nvram> </os> <features> <acpi/> <apic/> <hyperv> <relaxed state='on'/> <vapic state='on'/> <spinlocks state='on' retries='8191'/> <vendor_id state='on' value='none'/> </hyperv> </features> <cpu mode='host-passthrough' check='none'> <topology sockets='1' cores='6' threads='1'/> </cpu> <clock offset='localtime'> <timer name='hypervclock' present='yes'/> <timer name='hpet' present='no'/> </clock> <on_poweroff>destroy</on_poweroff> <on_reboot>restart</on_reboot> <on_crash>restart</on_crash> <devices> <emulator>/usr/local/sbin/qemu</emulator> <disk type='file' device='disk'> <driver name='qemu' type='raw' cache='writeback'/> <source file='/mnt/user/domains/Windows 10/vdisk1.img'/> <backingStore/> <target dev='hdc' bus='virtio'/> <boot order='1'/> <alias name='virtio-disk2'/> <address type='pci' domain='0x0000' bus='0x00' slot='0x04' function='0x0'/> </disk> <disk type='file' device='cdrom'> <driver name='qemu' type='raw'/> <source file='/mnt/user/isos/Windows_10_x64.iso'/> <backingStore/> <target dev='hda' bus='ide'/> <readonly/> 
<boot order='2'/> <alias name='ide0-0-0'/> <address type='drive' controller='0' bus='0' target='0' unit='0'/> </disk> <disk type='file' device='cdrom'> <driver name='qemu' type='raw'/> <source file='/mnt/user/isos/virtio-win-0.1.141-1.iso'/> <backingStore/> <target dev='hdb' bus='ide'/> <readonly/> <alias name='ide0-0-1'/> <address type='drive' controller='0' bus='0' target='0' unit='1'/> </disk> <controller type='usb' index='0' model='qemu-xhci' ports='15'> <alias name='usb'/> <address type='pci' domain='0x0000' bus='0x00' slot='0x07' function='0x0'/> </controller> <controller type='pci' index='0' model='pci-root'> <alias name='pci.0'/> </controller> <controller type='ide' index='0'> <alias name='ide'/> <address type='pci' domain='0x0000' bus='0x00' slot='0x01' function='0x1'/> </controller> <controller type='virtio-serial' index='0'> <alias name='virtio-serial0'/> <address type='pci' domain='0x0000' bus='0x00' slot='0x03' function='0x0'/> </controller> <interface type='bridge'> <mac address='52:54:00:4e:e0:7e'/> <source bridge='br0'/> <target dev='vnet0'/> <model type='virtio'/> <alias name='net0'/> <address type='pci' domain='0x0000' bus='0x00' slot='0x02' function='0x0'/> </interface> <serial type='pty'> <source path='/dev/pts/0'/> <target type='isa-serial' port='0'> <model name='isa-serial'/> </target> <alias name='serial0'/> </serial> <console type='pty' tty='/dev/pts/0'> <source path='/dev/pts/0'/> <target type='serial' port='0'/> <alias name='serial0'/> </console> <channel type='unix'> <source mode='bind' path='/var/lib/libvirt/qemu/channel/target/domain-8-Windows 10/org.qemu.guest_agent.0'/> <target type='virtio' name='org.qemu.guest_agent.0' state='disconnected'/> <alias name='channel0'/> <address type='virtio-serial' controller='0' bus='0' port='1'/> </channel> <input type='mouse' bus='ps2'> <alias name='input0'/> </input> <input type='keyboard' bus='ps2'> <alias name='input1'/> </input> <hostdev mode='subsystem' type='pci' managed='yes'> <driver 
name='vfio'/> <source> <address domain='0x0000' bus='0x2d' slot='0x00' function='0x0'/> </source> <alias name='hostdev0'/> <rom file='/mnt/user/isos/Zotac.GTX1070Ti.PATCHED.rom'/> <address type='pci' domain='0x0000' bus='0x00' slot='0x05' function='0x0'/> </hostdev> <hostdev mode='subsystem' type='pci' managed='yes'> <driver name='vfio'/> <source> <address domain='0x0000' bus='0x2d' slot='0x00' function='0x1'/> </source> <alias name='hostdev1'/> <address type='pci' domain='0x0000' bus='0x00' slot='0x06' function='0x0'/> </hostdev> <memballoon model='none'/> </devices> <seclabel type='dynamic' model='dac' relabel='yes'> <label>+0:+100</label> <imagelabel>+0:+100</imagelabel> </seclabel> </domain> -

Q35-3.0 vs i440fx-3.0 for Windows 10 with GPU in 2019?

cablecutter posted a topic in VM Engine (KVM)

Hi All,

I've had a Windows 10 VM running on my machine for the past couple of years. The machine has a Ryzen 1700, with an NVIDIA 1070 passed through to the VM, and it has been working...ok...

Recently I've turned it into a render slave for Houdini/Redshift and have noticed some instability. Namely, it will crash (and crash unRAID) when running heavy CPU/GPU/RAM workloads. One thing I'm exercising in particular is the amount of data I'm pushing from system RAM to GPU RAM. I'm assuming this is really heavy on the PCIe lanes...which made me think.

I'm currently using the i440fx-3.0 machine type. When I originally set this up I could not get passthrough working with Q35. But from what I understand, Q35 has better support for native PCIe, whereas i440fx is doing some kind of emulation. It would seem that for rendering via GPU, unhindered PCIe would be best.

Now that we're several versions down the line, I'm wondering whether people are using Q35 for Windows 10 gaming PCs. Also, is it possible to convert a VM from one machine type to another without starting fresh?

Much thanks,
Rob
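On the conversion question, a rough way to see what would need attention is to inspect the existing domain XML before touching it. The sketch below is only an inspection helper, not a converter: the function name `machine_type_report` is made up for illustration, and the sample XML is a trimmed copy of the definition posted in this thread. It reports the machine type, any disks on the IDE bus, and the PCI root controller model. As far as I understand, a Q35 guest uses `pcie-root` rather than `pci-root` and libvirt normally expects SATA instead of IDE there, so those are the elements most likely to need changes.

```python
import xml.etree.ElementTree as ET

# Trimmed copy of the domain XML from this thread (only the parts inspected below).
DOMAIN_XML = """
<domain type='kvm'>
  <os>
    <type arch='x86_64' machine='pc-i440fx-3.0'>hvm</type>
  </os>
  <devices>
    <disk type='file' device='disk'>
      <target dev='hdc' bus='virtio'/>
    </disk>
    <disk type='file' device='cdrom'>
      <target dev='hda' bus='ide'/>
    </disk>
    <disk type='file' device='cdrom'>
      <target dev='hdb' bus='ide'/>
    </disk>
    <controller type='pci' index='0' model='pci-root'/>
    <controller type='ide' index='0'/>
  </devices>
</domain>
"""


def machine_type_report(domain_xml):
    """Summarise the parts of a libvirt domain that depend on the machine type.

    Hypothetical helper for illustration; it only reads the XML.
    """
    root = ET.fromstring(domain_xml)
    os_type = root.find("./os/type")
    machine = os_type.get("machine", "") if os_type is not None else ""

    # Disks attached to the IDE bus (likely need a different bus on Q35).
    ide_disks = [
        target.get("dev")
        for target in root.findall("./devices/disk/target")
        if target.get("bus") == "ide"
    ]

    # Model of each PCI controller (pci-root on i440fx, pcie-root on Q35).
    pci_controllers = [
        ctrl.get("model")
        for ctrl in root.findall("./devices/controller")
        if ctrl.get("type") == "pci"
    ]

    return {
        "machine": machine,
        "ide_disks": ide_disks,
        "pci_controllers": pci_controllers,
    }


report = machine_type_report(DOMAIN_XML)
print(report)
```

Taking a copy of the XML and the vdisk before experimenting is the safer route either way, since the guest may see different virtual hardware after a machine type change.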

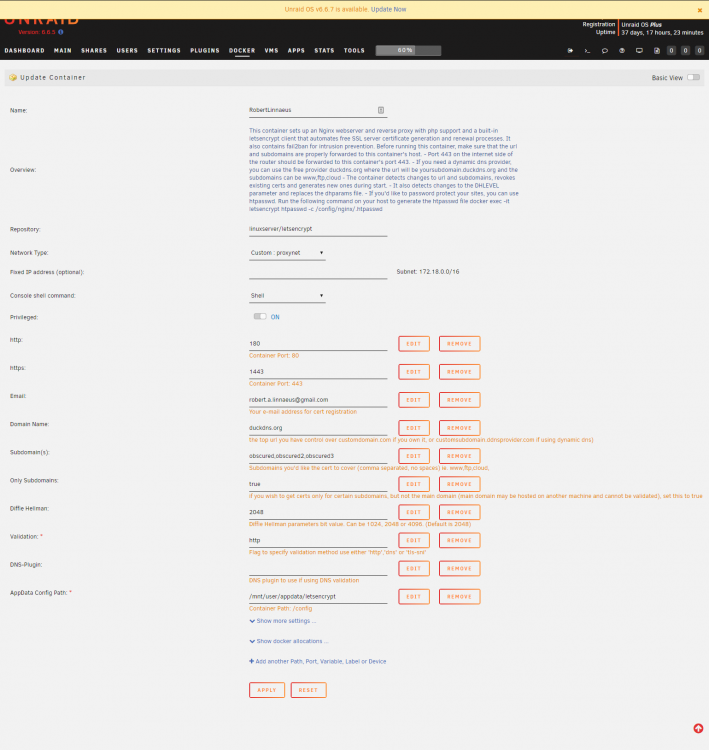

2048 bit DH parameters present
SUBDOMAINS entered, processing
SUBDOMAINS entered, processing
Only subdomains, no URL in cert
Sub-domains processed are: -d XXXXXX.duckdns.org -d XXXXXXX.duckdns.org -d XXXXXXXX.duckdns.org
E-mail address entered: [email protected]
http validation is selected
Generating new certificate
Saving debug log to /var/log/letsencrypt/letsencrypt.log
Plugins selected: Authenticator standalone, Installer None
Obtaining a new certificate
An unexpected error occurred:
There were too many requests of a given type :: Error creating new order :: too many certificates already issued for exact set of domains: linnaeus.duckdns.org,linnio.duckdns.org,lserv.duckdns.org: see https://letsencrypt.org/docs/rate-limits/
Please see the logfiles in /var/log/letsencrypt for more details.
ERROR: Cert does not exist! Please see the validation error above. The issue may be due to incorrect dns or port forwarding settings. Please fix your settings and recreate the container

-

Hi all,

Having an odd issue at container startup: "Can't open privkey.pem for reading, No such file or directory". However, the keys are saved in the folder specified and the privileges for the files should allow letsencrypt to access them (even tried 777). Any help would be appreciated.

- Congratulations! Your certificate and chain have been saved at:
/etc/letsencrypt/live/XXXXXXX.duckdns.org-0001/fullchain.pem
Your key file has been saved at:
/etc/letsencrypt/live/XXXXXXX.duckdns.org-0001/privkey.pem
Your cert will expire on 2019-06-05. To obtain a new or tweaked version of this certificate in the future, simply run certbot again. To non-interactively renew *all* of your certificates, run "certbot renew"
- If you like Certbot, please consider supporting our work by:
Donating to ISRG / Let's Encrypt: https://letsencrypt.org/donate
Donating to EFF: https://eff.org/donate-le
Can't open privkey.pem for reading, No such file or directory
22760616274792:error:02001002:system library:fopen:No such file or directory:crypto/bio/bss_file.c:72:fopen('privkey.pem','r')
22760616274792:error:2006D080:BIO routines:BIO_new_file:no such file:crypto/bio/bss_file.c:79:
unable to load private key
cat: privkey.pem: No such file or directory
cat: fullchain.pem: No such file or directory
New certificate generated; starting nginx
[cont-init.d] 50-config: exited 0.
[cont-init.d] done.
[services.d] starting services
cat: privkey.pem: No such file or directory
cat: fullchain.pem: No such file or directory
New certificate generated; starting nginx
[cont-init.d] 50-config: exited 0.
[cont-init.d] done.
[services.d] starting services
[services.d] done.
Server ready

Edit: After fiddling a bit the problem continues and now I cannot get new certs.

...
Plugins selected: Authenticator standalone, Installer None
Renewing an existing certificate
An unexpected error occurred:
There were too many requests of a given type :: Error finalizing order :: too many certificates already issued for exact set of domains: XXXXXXX.duckdns.org,linnio.duckdns.org,lserv.duckdns.org: see https://letsencrypt.org/docs/rate-limits/
Please see the logfiles in /var/log/letsencrypt for more details.
Can't open privkey.pem for reading, No such file or directory
23291569253224:error:02001002:system library:fopen:No such file or directory:crypto/bio/bss_file.c:72:fopen('privkey.pem','r')
23291569253224:error:2006D080:BIO routines:BIO_new_file:no such file:crypto/bio/bss_file.c:79:
unable to load private key
cat: privkey.pem: No such file or directory
cat: fullchain.pem: No such file or directory
New certificate generated; starting nginx
[cont-init.d] 50-config: exited 0.
[cont-init.d] done.
[services.d] starting services
nginx: [emerg] BIO_new_file("/config/keys/letsencrypt/fullchain.pem") failed (SSL: error:02001002:system library:fopen:No such file or directory:fopen('/config/keys/letsencrypt/fullchain.pem','r') error:2006D080:BIO routines:BIO_new_file:no such file)
[services.d] done.
Server ready
Server ready
nginx: [emerg] BIO_new_file("/config/keys/letsencrypt/fullchain.pem") failed (SSL: error:02001002:system library:fopen:No such file or directory:fopen('/config/keys/letsencrypt/fullchain.pem','r') error:2006D080:BIO routines:BIO_new_file:no such file)
nginx: [emerg] BIO_new_file("/config/keys/letsencrypt/fullchain.pem") failed (SSL: error:02001002:system library:fopen:No such file or directory:fopen('/config/keys/letsencrypt/fullchain.pem','r') error:2006D080:BIO routines:BIO_new_file:no such file)
nginx: [emerg] BIO_new_file("/config/keys/letsencrypt/fullchain.pem") failed (SSL: error:02001002:system library:fopen:No such file or directory:fopen('/config/keys/letsencrypt/fullchain.pem','r') error:2006D080:BIO routines:BIO_new_file:no such file)
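The logs in these posts mix two separate symptoms: a rate-limit refusal from Let's Encrypt, and key files that the container scripts cannot open. A small sketch for separating the two when skimming a long container log is shown here. The helper name `summarize_startup_log` and the regular expressions are my own assumptions based only on the message formats quoted above, and they may not match other certbot or OpenSSL versions.

```python
import re

# Patterns derived from the log lines quoted in this thread (assumed formats).
RATE_LIMIT_RE = re.compile(
    r"too many certificates already issued for exact set of domains: ([^\s:]+)"
)
MISSING_FILE_RE = re.compile(r"fopen\('([^']+)','r'\)")


def summarize_startup_log(log_text):
    """Return whether the log shows a rate limit, and which files failed to open."""
    match = RATE_LIMIT_RE.search(log_text)
    domains = match.group(1).split(",") if match else []
    missing = sorted(set(MISSING_FILE_RE.findall(log_text)))
    return {
        "rate_limited": match is not None,
        "domains": domains,
        "missing_files": missing,
    }


SAMPLE_LOG = """\
An unexpected error occurred:
There were too many requests of a given type :: Error creating new order :: too many certificates already issued for exact set of domains: linnaeus.duckdns.org,linnio.duckdns.org,lserv.duckdns.org: see https://letsencrypt.org/docs/rate-limits/
22760616274792:error:02001002:system library:fopen:No such file or directory:crypto/bio/bss_file.c:72:fopen('privkey.pem','r')
nginx: [emerg] BIO_new_file("/config/keys/letsencrypt/fullchain.pem") failed (SSL: error:02001002:system library:fopen:No such file or directory:fopen('/config/keys/letsencrypt/fullchain.pem','r') error:2006D080:BIO routines:BIO_new_file:no such file)
"""

summary = summarize_startup_log(SAMPLE_LOG)
print(summary)
```

If `rate_limited` is true, recreating the container again will most likely just repeat the refusal until the limit described at the linked rate-limits page resets, so the missing-file symptom is the one worth chasing in the meantime.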

-

[support] Spants - NodeRed, MQTT, Dashing, couchDB

cablecutter replied to spants's topic in Docker Containers

Thanks Spants - I'm kind of a newbie when it comes to Docker. All I do is click install on the community apps - haven't really dug much deeper than that. Is there any info you could provide on editing the template itself for use with unRaid? I'd also love to build a docker version of Hass.IO for unraid. I'm currently doing a very stupid work-around of running it on a linux VM on my unraid server. -

[support] Spants - NodeRed, MQTT, Dashing, couchDB

cablecutter replied to spants's topic in Docker Containers

Hey, having an issue with Node-RED: https://github.com/AYapejian/node-red-contrib-home-assistant/issues/52

It looks like the newest version of Node-RED needs Node.js v8 or newer. I just checked, and this docker appears to be packaged with v6.14.4.

Cache drive "unassigned" after unsafe shutdown (power loss)

cablecutter replied to cablecutter's topic in General Support

Well... I stopped the array and added the cache drive back in. Everything seems to be working fine. Still not sure why this happened, was quite scary. -

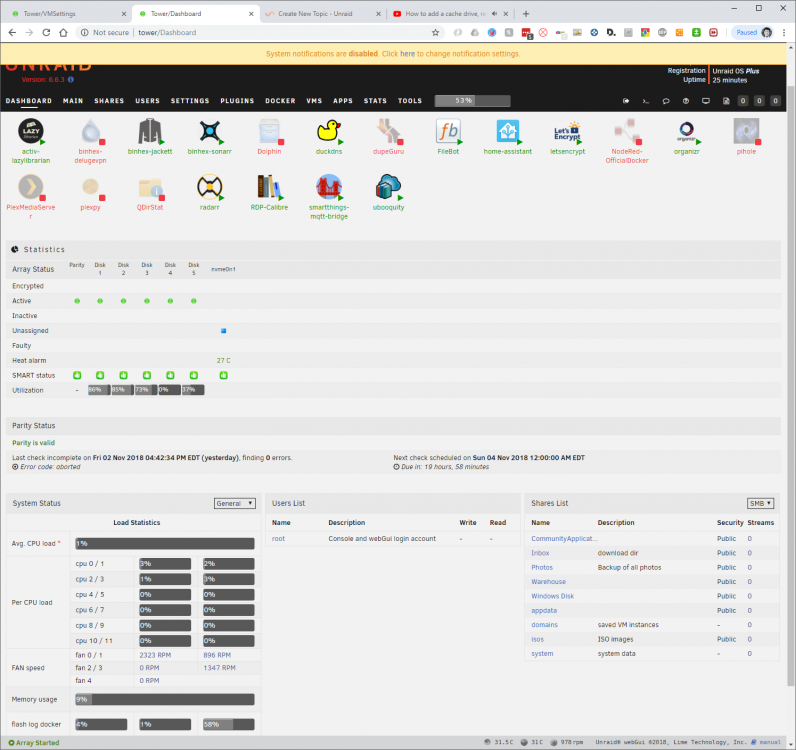

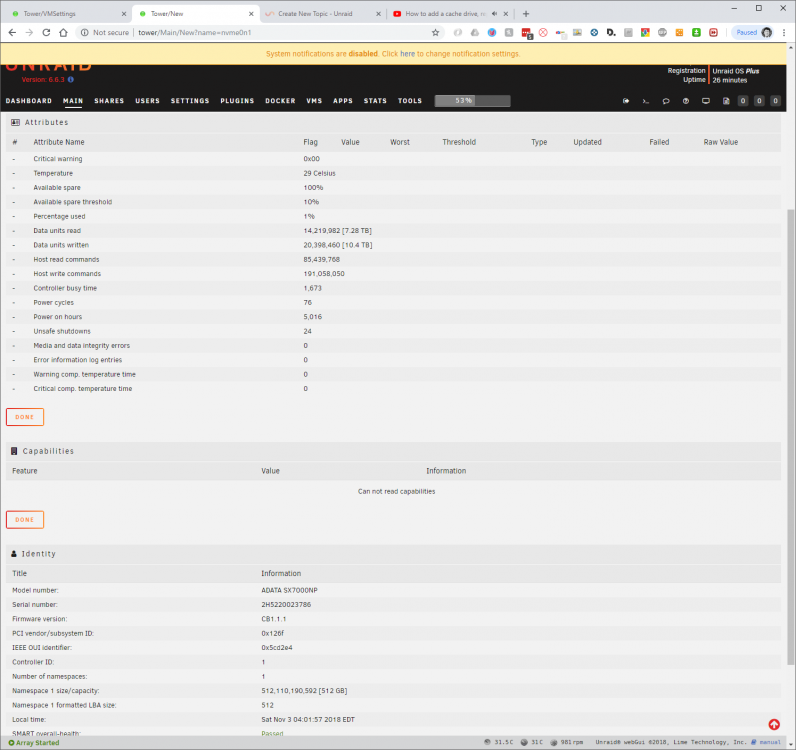

Hi all,

My server has been running for months in its current configuration. I have a cache drive that is used for shuffling files and storing VMs. Unfortunately, after an unsafe shutdown yesterday (I knocked a power strip by accident), I noticed that the VMs failed to start on boot. I was initially troubleshooting this as a KVM issue, but noticed this morning that the cache drive is "Unassigned", and therefore all of the shares on that drive are showing as "empty".

I don't want to lose my VMs, as I really don't have the time to set this all up again. How can I fix this? How can I add my cache drive back into the array without losing any data or share mappings?

Best,
Rob

tower-diagnostics-20181103-0342.zip

-

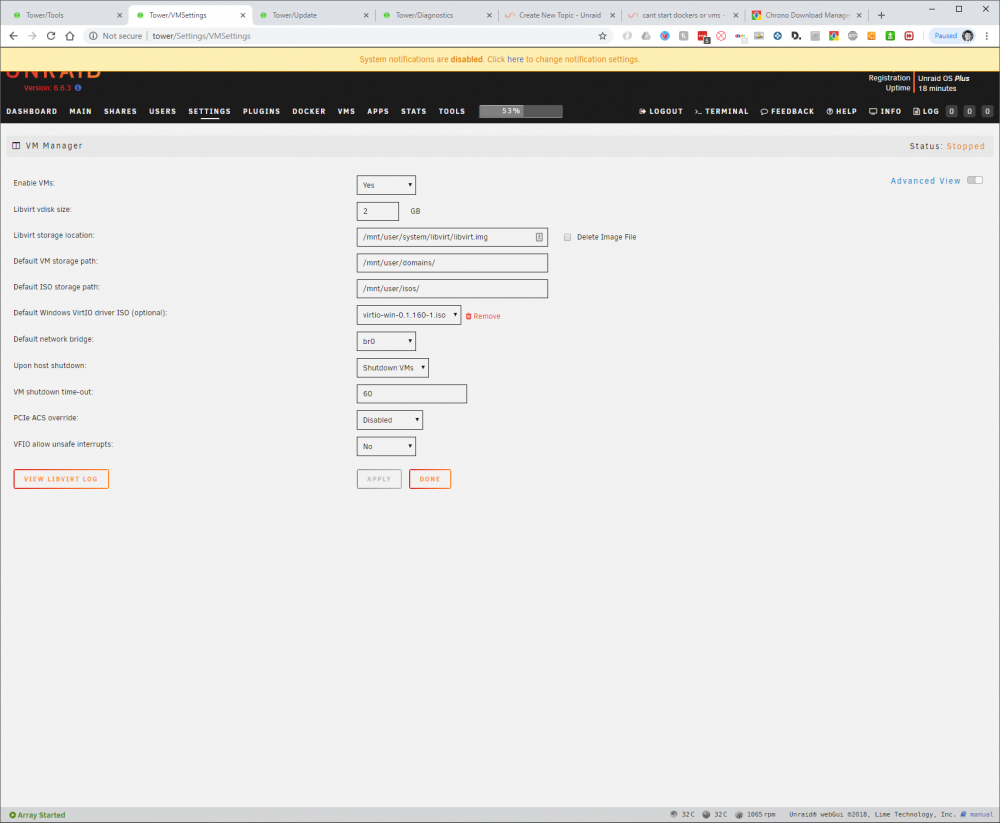

Hi All,

I recently had an unclean shutdown after tapping off the power switch by accident. After rebooting, the two VMs I have set up (Windows and Linux) were missing. When I stopped and started the VM service it failed to start, giving the error "Libvirt Service failed to start."

I am pointing to the proper ISOs folder, domains folder, and libvirt image directly. I am running 6.6.3 but was running 6.6.1 when it happened. I have tried rebooting the box. On a fresh boot it shows the VMs tab with no images. On restarting the VM service I receive the error noted above.

tower-diagnostics-20181102-1656.zip

-

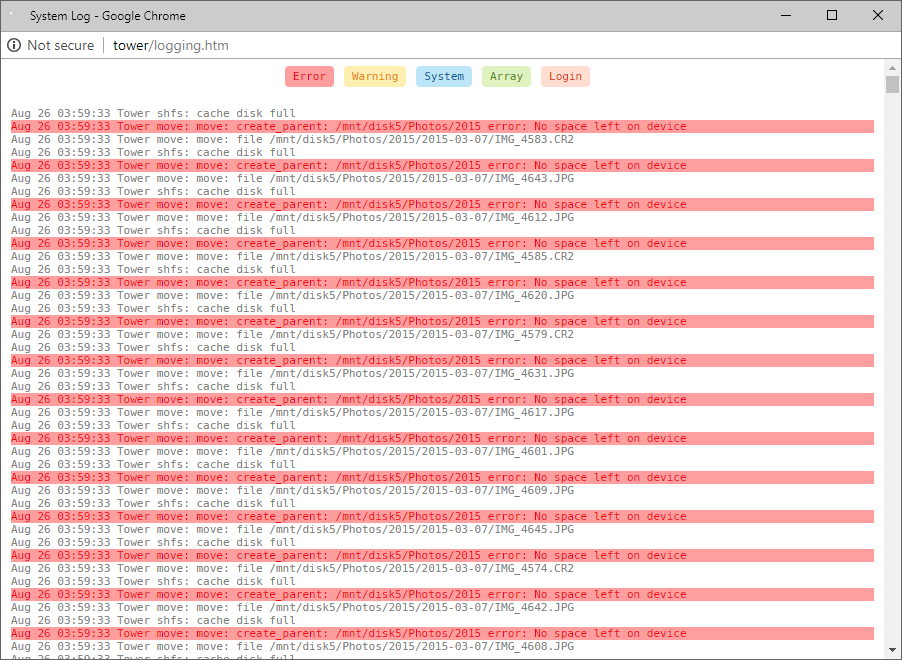

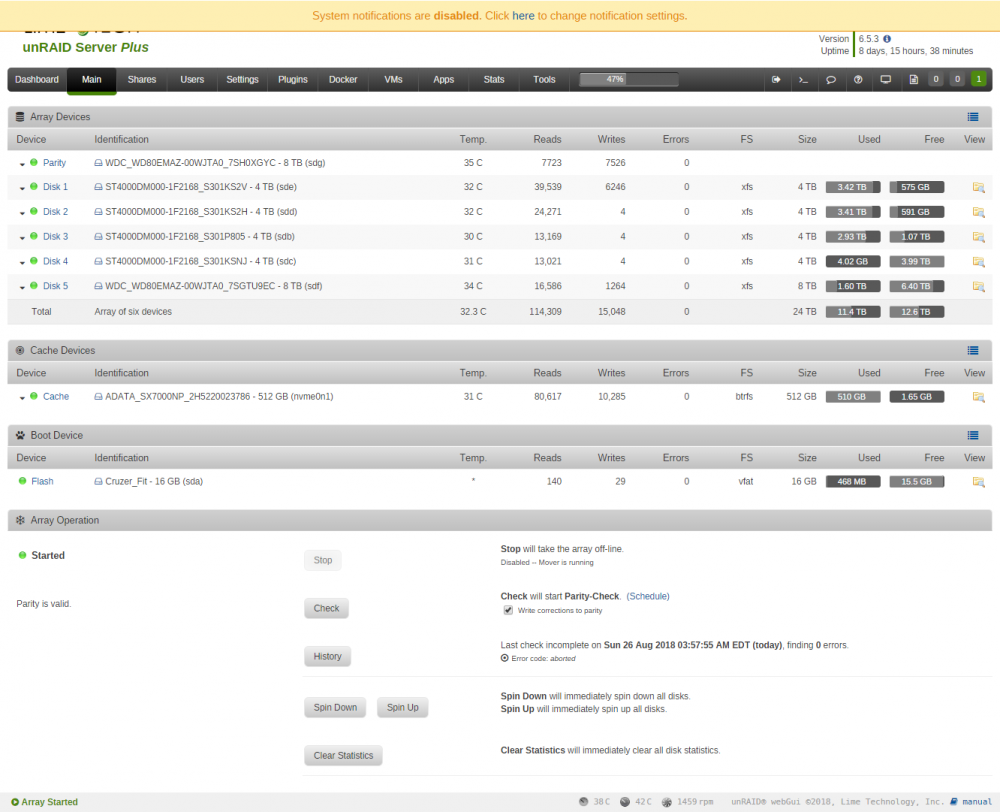

Hi All,

Having an odd error with my mover. I noticed a while back that transfers were slowing down. When I checked my cache disk I saw that it was full. I turned on logging for the mover and ran it, which produced this error. I'm not really sure how to address this. Disk5 has free space, and the photos share has 12.6 TB free. The photos share is set to use cache = "yes". The cache has 2 GB free.

Mover log:

Main Dash:

-

This is the suggestion from AMD. I have not tried it yet but am doubtful that it will resolve the issue:

"First, I request you to download and install the Windows Show or Hide updates tool and run it and check if there is any graphics driver available from windows. If yes then follow the instruction to hide it.
https://support.microsoft.com/en-us/help/3073930/how-to-temporarily-prevent-a-driver-update-from-reinstalling-in-windows-10

After preventing windows to install the driver automatically, I request you to please follow the below steps.

Step 1 – Please use DDU Utility (Display Driver Uninstaller) by launching it in Safe mode and uninstall the previous driver. It will help you completely uninstall AMD graphics card drivers and packages from your system, without leaving leftovers behind.
Step 2 – then restart your computer again and enter into the normal windows mode.
Step 3 - install all the critical and recommended updates from Windows.
Step 4 – Again restart the computer and then install the 18.3.1 drivers by clicking this below link. Please disconnect the network connection and disable antivirus before running the installer file.
https://support.amd.com/en-us/download/desktop?os=Windows%2010%20-%2064

Thanks for contacting AMD."

-

Hi All, First - I love your videos and think I've watched almost every one. There's no way I would have Windows 10 up and running without your help! GPU: AMD RX 560 4GB (Gigabyte) v2.0 Just posting to see if anyone else has had graphical glitches in games and trouble with the HDMI audio when passing through this card. I have dumped the rom via GPU-Z and added it to the XML, however this did not have any effect. I have a more comprehensive post here: Best, Rob

-

GPU Passthrough: AMD Ryzen (no on board) + AMD RX 560

cablecutter replied to cablecutter's topic in General Support

I started a cleaner and more comprehensive post here: -

Hi All,

I've dumped about 8 hours into this so far and have made almost no progress. I'm hoping to find suggestions for motherboard BIOS settings, Linux config settings, VM XML tweaks, or anything else that might help resolve this.

GPU is the Gigabyte RX 560 4GB Rev2 with AMD Bios Version 17.8.1 (have also tried v18+ with identical results). It is functional, but has intermittent issues with the HDMI Audio Driver and either fails to start games, or shows artifacts within the game.

System Software:
UnRaid (Linux) V6.4.1
Windows 10 64bit VM (GPU Passthrough) - Isolated CPU Cores / Tried both with and without specified GPU ROM (dumped from card with GPU-Z)
MSI Util - Tried both with and without MSI enabled for all processes related to the GPU
Not using the Gigabyte GPU Utility (Aorus)
I have used DDU to clean the pre-existing drivers in Windows safe mode whenever reinstalling device drivers

System Hardware of interest:
Seasonic Prime Titanium 650W PSU
Asrock x370 Taichi (Bios 4.40 - also tried 3.2 - fairly stock bios settings with the exception of enabling IOMMU groups)
Ryzen 5 1600 (stock speed, no overclock)
Ballistix 2x16GB DDR4

Downstream Display Devices:
Denon X2300W AVR (HDMI Bypass)
Samsung UN65JS8500 4k TV
All connected via 2.0b spec HDMI cables (tested and working at 4k 444 2020)

Radeon Display Settings:
Have tried both 1080P and 4k
Have tried HDR mode and non-HDR mode
Am using 444 full range RGB, have tried 420 as well

Description of Issues:
Graphical errors in games or failure to launch. I have only tried 3 games so far and all have had issues: Ori and the Blind Forest either fails to launch completely or hangs on the main screen with theme music playing. No visible graphical artifacts. System does not lock up and I am able to kill the process to return to desktop. Ballpoint Universe: 3D models have artifacts where the models stretch and distort (pointy, spiky distortions) and obscure the screen. The game otherwise runs fine. Bastion.
Game runs fine with the exception of a thin diagonal line of colorful white noise that extends from the center of the screen to the upper right hand corner. Intermittent issue with HDMI audio failing to initialize. When first installing any Radeon driver the HDMI audio will fail to initialize, recognizing no connected audio devices (red X). Occasionally, this issue resolves itself and it is not clear why. I have also managed to get HDMI audio to initialized by enabling the MSI option associated with the HDMI audio device. Windows VM XML (Tried both with and without Rom specified - same results): <domain type='kvm'> <name>Windows 10</name> <uuid>15667ac1-2465-fe33-d840-a54b512b265b</uuid> <metadata> <vmtemplate xmlns="unraid" name="Windows 10" icon="windows.png" os="windows10"/> </metadata> <memory unit='KiB'>24641536</memory> <currentMemory unit='KiB'>24641536</currentMemory> <memoryBacking> <nosharepages/> </memoryBacking> <vcpu placement='static'>8</vcpu> <cputune> <vcpupin vcpu='0' cpuset='4'/> <vcpupin vcpu='1' cpuset='5'/> <vcpupin vcpu='2' cpuset='6'/> <vcpupin vcpu='3' cpuset='7'/> <vcpupin vcpu='4' cpuset='8'/> <vcpupin vcpu='5' cpuset='9'/> <vcpupin vcpu='6' cpuset='10'/> <vcpupin vcpu='7' cpuset='11'/> <emulatorpin cpuset='0-3'/> </cputune> <os> <type arch='x86_64' machine='pc-i440fx-2.10'>hvm</type> <loader readonly='yes' type='pflash'>/usr/share/qemu/ovmf-x64/OVMF_CODE-pure-efi.fd</loader> <nvram>/etc/libvirt/qemu/nvram/15667ac1-2465-fe33-d840-a54b512b265b_VARS-pure-efi.fd</nvram> </os> <features> <acpi/> <apic/> <hyperv> <relaxed state='on'/> <vapic state='on'/> <spinlocks state='on' retries='8191'/> <vendor_id state='on' value='none'/> </hyperv> </features> <cpu mode='host-passthrough' check='none'> <topology sockets='1' cores='8' threads='1'/> </cpu> <clock offset='localtime'> <timer name='hypervclock' present='yes'/> <timer name='hpet' present='no'/> </clock> <on_poweroff>destroy</on_poweroff> <on_reboot>restart</on_reboot> 
<on_crash>restart</on_crash> <devices> <emulator>/usr/local/sbin/qemu</emulator> <disk type='file' device='disk'> <driver name='qemu' type='raw' cache='none'/> <source file='/mnt/user/domains/Windows 10/vdisk1.img'/> <target dev='hdc' bus='virtio'/> <boot order='1'/> <address type='pci' domain='0x0000' bus='0x00' slot='0x04' function='0x0'/> </disk> <disk type='file' device='cdrom'> <driver name='qemu' type='raw'/> <source file='/mnt/user/isos/Windows_10_x64.iso'/> <target dev='hda' bus='ide'/> <readonly/> <boot order='2'/> <address type='drive' controller='0' bus='0' target='0' unit='0'/> </disk> <disk type='file' device='cdrom'> <driver name='qemu' type='raw'/> <source file='/mnt/user/isos/virtio-win-0.1.141-1.iso'/> <target dev='hdb' bus='ide'/> <readonly/> <address type='drive' controller='0' bus='0' target='0' unit='1'/> </disk> <controller type='usb' index='0' model='nec-xhci'> <address type='pci' domain='0x0000' bus='0x00' slot='0x07' function='0x0'/> </controller> <controller type='pci' index='0' model='pci-root'/> <controller type='ide' index='0'> <address type='pci' domain='0x0000' bus='0x00' slot='0x01' function='0x1'/> </controller> <controller type='virtio-serial' index='0'> <address type='pci' domain='0x0000' bus='0x00' slot='0x03' function='0x0'/> </controller> <interface type='bridge'> <mac address='52:54:00:4e:e0:7e'/> <source bridge='br0'/> <model type='virtio'/> <address type='pci' domain='0x0000' bus='0x00' slot='0x02' function='0x0'/> </interface> <serial type='pty'> <target port='0'/> </serial> <console type='pty'> <target type='serial' port='0'/> </console> <channel type='unix'> <target type='virtio' name='org.qemu.guest_agent.0'/> <address type='virtio-serial' controller='0' bus='0' port='1'/> </channel> <input type='mouse' bus='ps2'/> <input type='keyboard' bus='ps2'/> <hostdev mode='subsystem' type='pci' managed='yes'> <driver name='vfio'/> <source> <address domain='0x0000' bus='0x2d' slot='0x00' function='0x0'/> </source> <rom bar='on' 
file='/mnt/user/Warehouse/roms/Polaris21uefi.rom'/> <address type='pci' domain='0x0000' bus='0x00' slot='0x05' function='0x0'/> </hostdev> <hostdev mode='subsystem' type='pci' managed='yes'> <driver name='vfio'/> <source> <address domain='0x0000' bus='0x2d' slot='0x00' function='0x1'/> </source> <address type='pci' domain='0x0000' bus='0x00' slot='0x06' function='0x0'/> </hostdev> <hostdev mode='subsystem' type='usb' managed='no'> <source> <vendor id='0x045e'/> <product id='0x0719'/> </source> <address type='usb' bus='0' port='1'/> </hostdev> <hostdev mode='subsystem' type='usb' managed='no'> <source> <vendor id='0x046d'/> <product id='0xc52b'/> </source> <address type='usb' bus='0' port='2'/> </hostdev> <hostdev mode='subsystem' type='usb' managed='no'> <source> <vendor id='0x8087'/> <product id='0x0aa7'/> </source> <address type='usb' bus='0' port='3'/> </hostdev> <memballoon model='virtio'> <address type='pci' domain='0x0000' bus='0x00' slot='0x08' function='0x0'/> </memballoon> </devices> </domain> The Asrock bios does have the following option (have not changed yet and unsure of whether it would have any effect): Launch Video OpROM Policy Select UEFI only to run those that support UEFI option ROM only. Select Legacy only to run those that support legacy option ROM only. Select Do not launch to not execute both legacy and UEFI option ROM. Attached are two DXDiag exports and the rom ripped with GPU-Z. One dxdiag with MSI disabled and HDMI audio non-functioning, the other with HDMI audio functioning and most things working (with the exception of the games described in 1). Much thanks for any help, Rob DxDiagBrokenHDMIAudio.txt DxDiag.txt Polaris21uefi.rom

-

GPU Passthrough: AMD Ryzen (no on board) + AMD RX 560

cablecutter replied to cablecutter's topic in General Support

I was able to get this working by creating a bios dump via gpu-z. The following was added to the xml: <rom bar='on' file='/mnt/user/Warehouse/roms/Polaris21uefi.rom'/> Unfortunately, this didn't solve any of my issues. I am still experiencing artifacts or crashes in games and inconsistent ability to initialize HDMI audio. I have tried the MSI Util as well without success. -

Hi all, losing hair here. I've been wrestling with an RX 560 GPU on a Windows 10 VM for days. Now I'm having trouble with my VM tab as well. After shutting down a VM I click the tab in UnRaid and nothing loads. The error log displays:

Mar 5 20:16:40 Tower nginx: 2018/03/05 20:16:40 [error] 4718#4718: *6346 upstream timed out (110: Connection timed out) while reading upstream, client: 192.168.1.212, server: , request: "GET /VMs HTTP/1.1", upstream: "fastcgi://unix:/var/run/php5-fpm.sock:", host: "tower", referrer: "http://tower/Main"

192.168.1.212 is the IP of my Windows machine, not the UnRaid server. My Linux config is:

label unRAID OS
  menu default
  kernel /bzimage
  append pcie_acs_override=downstream isolcpus=4,5,6,7,8,9,10,11 rcu-nocbs=0-11 initrd=/bzroot

Is this possibly related to the rcu-nocbs / Ryzen? I have also re-enabled C-states after a recent BIOS update, but this seems unrelated? I've also returned my VM XML to stock with the same results. Also booted with VNC only, same results.
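One thing that can be checked mechanically is whether the CPUs pinned to the VM are the same ones isolated on the kernel command line. The sketch below parses the `append` line quoted above and compares it to the vCPU pinning from the Windows 10 XML posted elsewhere in this feed (cpusets 4 to 11). The helper names are hypothetical. Keys are normalised from dashes to underscores on the assumption, taken from the kernel's parameter documentation, that the two are interchangeable in parameter names.

```python
from typing import Dict, Set


def kernel_params(append_line: str) -> Dict[str, str]:
    """Split a syslinux 'append' line into a {name: value} mapping."""
    params = {}
    for token in append_line.split():
        key, _, value = token.partition("=")
        # Assumption: '-' and '_' are equivalent in kernel parameter names.
        params[key.replace("-", "_")] = value
    return params


def parse_cpu_list(spec: str) -> Set[int]:
    """Expand a CPU list such as '4,5,6' or '0-11' into a set of CPU numbers."""
    cpus = set()
    for part in spec.split(","):
        part = part.strip()
        if not part:
            continue
        if "-" in part:
            low, high = part.split("-", 1)
            cpus.update(range(int(low), int(high) + 1))
        else:
            cpus.add(int(part))
    return cpus


APPEND = ("pcie_acs_override=downstream isolcpus=4,5,6,7,8,9,10,11 "
          "rcu-nocbs=0-11 initrd=/bzroot")

params = kernel_params(APPEND)
isolated = parse_cpu_list(params["isolcpus"])
nocbs = parse_cpu_list(params["rcu_nocbs"])

# vcpupin cpusets copied from the VM XML in the related post.
pinned = {4, 5, 6, 7, 8, 9, 10, 11}

print("isolated:", sorted(isolated))
print("pinned but not isolated:", sorted(pinned - isolated))
```

For the configuration quoted here the last line prints an empty list, so a pinning mismatch does not look like the cause of the unresponsive VMs tab.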

-

GPU Passthrough: AMD Ryzen (no on board) + AMD RX 560

cablecutter replied to cablecutter's topic in General Support

@Handl3vogn I'm having some interesting issues with HDMI audio (device is enabled, but crashes when adding an HDMI audio device). This was resolved by installing legacy HDMI audio drivers from 2016. Kodi is working, BUT some games crash.

I'd like to try the GPU BIOS approach, but have only found Nvidia instructions. Are there any recommendations that you could give? I downloaded the GPU ROM from techpowerup and edited the XML thusly.

GPU + HDMI Sound:

<hostdev mode='subsystem' type='pci' managed='yes'>
  <driver name='vfio'/>
  <source>
    <address domain='0x0000' bus='0x2d' slot='0x00' function='0x0'/>
  </source>
  <alias name='hostdev0'/>
  <rom file='/mnt/user/isos/Gigabyte.RX560.4096.170505.rom'/>
  <address type='pci' domain='0x0000' bus='0x00' slot='0x05' function='0x0'/>
</hostdev>
<hostdev mode='subsystem' type='pci' managed='yes'>
  <driver name='vfio'/>
  <source>
    <address domain='0x0000' bus='0x2d' slot='0x00' function='0x1'/>
  </source>
  <alias name='hostdev1'/>
  <address type='pci' domain='0x0000' bus='0x00' slot='0x06' function='0x0'/>
</hostdev>

The issue I'm having now is that when I try to install the driver in Windows the HOST (unRAID) crashes.

Best,
Rob

Thanks - gave that a shot for just the VIA card, but it seems to have had no effect.

default menu.c32
menu title Lime Technology, Inc.
prompt 0
timeout 50
label unRAID OS
  menu default
  kernel /bzimage
  append pcie_acs_override=id:1106:3483 initrd=/bzroot
label unRAID OS GUI Mode
  kernel /bzimage
  append initrd=/bzroot,/bzroot-gui
label unRAID OS Safe Mode (no plugins, no GUI)
  kernel /bzimage
  append initrd=/bzroot unraidsafemode
label Memtest86+
  kernel /memtest

-

I have the ASRock X370 Taichi and have issues with IOMMU groupings. First, the Bluetooth controller and Ethernet are stuck in the same group. Second, the non-GPU PCIe slot is also in this group, so passing through a PCIe card is out of the question if you don't want to pass the onboard Ethernet through as well. Very frustrating. The IOMMU ACS override is able to split off all sorts of inconsequential hardware, but still leaves this big lump.
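For anyone comparing groupings before and after changing the ACS override, the groups can be read straight from sysfs. This is a minimal sketch that assumes the usual Linux layout of `/sys/kernel/iommu_groups/<group>/devices/<pci address>`; the function names are made up for illustration. It lists each group and singles out the ones holding more than one device, since those are the groups that have to be passed through together.

```python
from pathlib import Path


def iommu_groups(root="/sys/kernel/iommu_groups"):
    """Map each IOMMU group number to the sorted PCI addresses it contains."""
    groups = {}
    base = Path(root)
    if not base.is_dir():
        # IOMMU disabled or not a Linux host: nothing to report.
        return groups
    for group_dir in base.iterdir():
        devices = group_dir / "devices"
        if group_dir.name.isdigit() and devices.is_dir():
            groups[int(group_dir.name)] = sorted(p.name for p in devices.iterdir())
    return groups


def shared_groups(groups):
    """Keep only the groups that contain more than one device."""
    return {num: devs for num, devs in groups.items() if len(devs) > 1}


if __name__ == "__main__":
    for number, devices in sorted(iommu_groups().items()):
        marker = "  <-- shared" if len(devices) > 1 else ""
        print(f"group {number}: {', '.join(devices)}{marker}")
```

Running it once with and once without the `pcie_acs_override` option and diffing the output shows which devices the override actually managed to split apart.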