pjneder

Keep certain VM's running without array started

pjneder replied to impmonkey's topic in Feature Requests

+1 vote on this. Keeping the topic alive. I have a Plus license and would happily pay more for this feature!

Up-to-date Plex docker install help needed

pjneder replied to jkBuckethead's topic in General Support

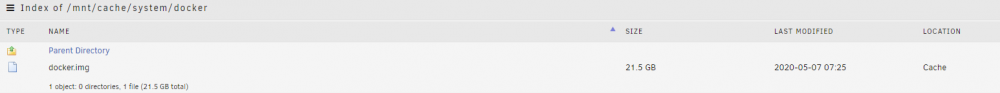

Hmmm...that is interesting. I see exactly what you mean.

I first noticed this the other day in the following way. As I loaded my .mkv files, I would see the cache fill up. Occasionally I would bump into a write space problem, so I would manually fire Mover (probably something there I need to fix). I got used to watching the Used/Free space of the Cache. It was typically <5GB steady state.

Then, while moving disks around, I hit the problem of cloned UUIDs, which caused a mounting problem and eventually caused the Cache pool to fail to mount. I wiped the still-connected disks sitting in unassigned, which cleared the UUID and that issue away. I restarted the server, and then I noticed the Cache sitting at 25.8GB Used!

I don't have all of the forensic evidence, but something changed in the reporting around the time the Cache pool did not mount correctly. Hence this thread and very informative discussion. Most likely the docker.img has been 20GB (21.5GB depending on math) this entire time, but it was not being shown as used in the Main screen Cache pool...until now. Guess all is copacetic after all. Further thoughts welcomed. Best!!!

P.S. Thanks to this I'm likely going to take the red pill and swap my server architecture around...
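On the "20GB (21.5GB depending on math)" point: the two figures agree if the image was sized as 20 GiB (binary units) and is displayed in decimal GB. A quick check in Python, assuming that is how the size was set and reported (the thread does not confirm how unRaid rounds it):

```python
GIB = 2**30  # bytes in one gibibyte (binary unit)
GB = 10**9   # bytes in one gigabyte (decimal unit)

def gib_to_gb(size_gib: float) -> float:
    """Convert a size given in GiB to decimal GB."""
    return size_gib * GIB / GB

print(f"{gib_to_gb(20):.1f} GB")  # prints "21.5 GB"
```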

Up-to-date Plex docker install help needed

pjneder replied to jkBuckethead's topic in General Support

Maybe ballooning is the wrong word. I was using that to describe the fact that the FS space consumed is listed as 21.5GB w.r.t. how the Cache FS sees the image, even though the diags say it is only consuming 515MB.
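To make the distinction concrete, here is a minimal Python sketch that reports a file's apparent size next to the space the filesystem has actually allocated for it. It is only an illustration: the path in the usage note is an assumption about where docker.img lives, and nothing in the diags confirms whether the image is sparse on this system. It also does not measure how much is used inside the image, which is where the 515MB figure comes from.

```python
import os
from typing import Tuple

def file_sizes(path: str) -> Tuple[int, int]:
    """Return (apparent_bytes, allocated_bytes) for a file.

    apparent_bytes is the length a directory listing shows;
    allocated_bytes is what the filesystem has reserved for it
    (st_blocks is counted in 512-byte units on Linux).
    """
    st = os.stat(path)
    return st.st_size, st.st_blocks * 512
```

Usage would look like `file_sizes("/mnt/cache/docker.img")`, where that path is hypothetical. If the two numbers match, the cache really is holding the full image size regardless of how little the containers use inside it.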

Up-to-date Plex docker install help needed

pjneder replied to jkBuckethead's topic in General Support

Did you see the screenshots in my previous post? The cache and FS both show the consumption. I'm trying to figure out how to properly answer the question.

Up-to-date Plex docker install help needed

pjneder replied to jkBuckethead's topic in General Support

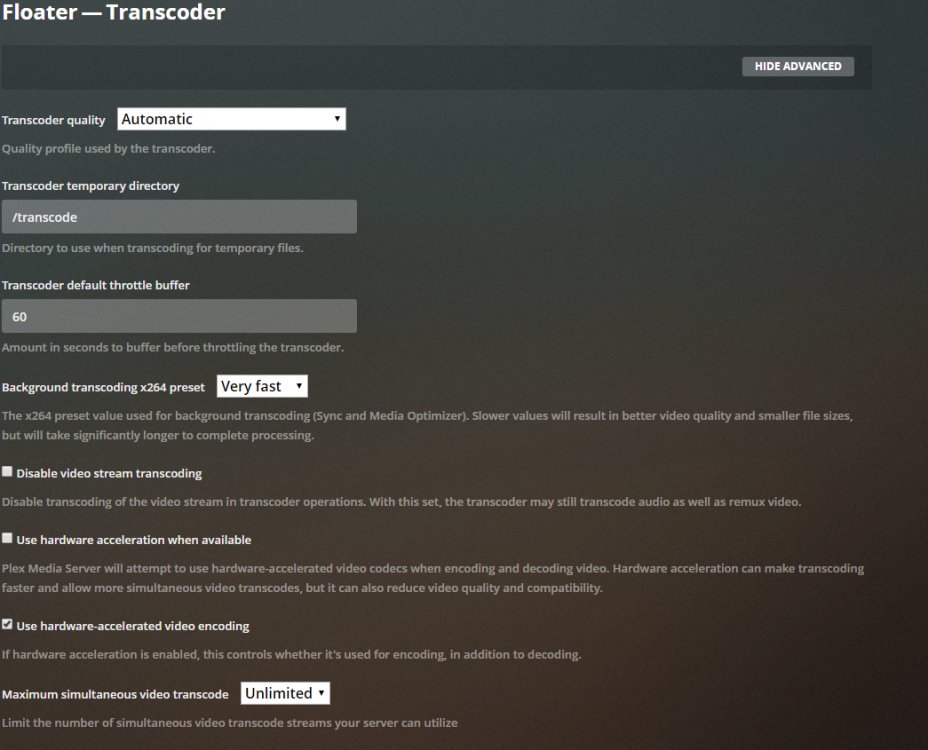

OK, fair enough. It might not be supported, but essentially it works. I started this project long before unRaid became the more beautiful thing it is now with KVM, Docker and more. I host my pfSense, Ubiquiti controller and more on there. However, unRaid would now allow me to do all of that, so I might switch over to running unRaid natively with everything else hosted on it as needed.

I expect you know the answers to these questions:

If the /tmp to /transcode mapping (i.e. RAM transcode) was not passing through as expected, AND I had engaged the transcode engine, would that explain the sudden jump in the docker.img size?

Follow up: if I map the /transcode area to an unassigned spinning HDD (i.e. my old 1T), would that A) work and B) keep the 1T spinning all of the time?

Thanks!!!

Up-to-date Plex docker install help needed

pjneder replied to jkBuckethead's topic in General Support

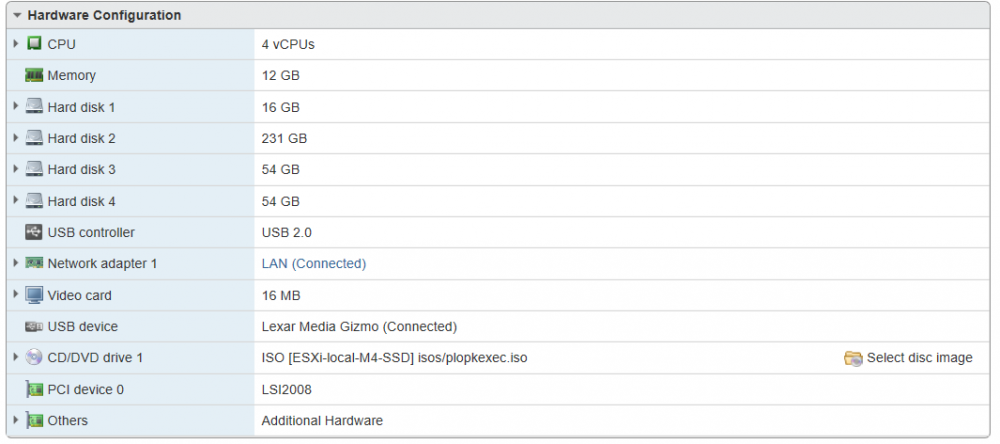

Change, yes and no. I have been changing some stuff with my array by swapping in some larger disks. I cannot remember exactly when the size ballooned up, but it was sometime in the past few days. I had been busy re-muxing my media library from .iso files to .mkv files and putting them in a totally new share just for use by Plex. The re-mux occurs on my PC, not unRaid. Then I decided it was a good time to add some storage (I wasn't really running low, but I did have some old 1T Seagate drives I wanted to pull out).

The "no" part is that I have not touched the Docker or its settings except to pull updates as they became available.

I also did some experimenting with playing Plex remotely to my phone & iPad. I suspect that this would have kicked off transcoding. I had never run Plex streams remotely before, so that would also qualify as a change.

Then I noticed my cache used was much larger. I ran Mover but it stayed quite big. Scratching my head, I started looking around and found that docker.img had grown large.

I will point out what is likely obvious in the diags: this instance of unRaid is running on ESXi as a VM, with 12GB of RAM allocated and access to all 4 vCPUs. What I do notice in my ESXi dashboard, which is new, is that ESXi thinks VMware tools are not installed. Not sure how worried I should be about that, but I will have to do some looking. I am pretty sure I had this working in the past.

Thanks!

Up-to-date Plex docker install help needed

pjneder replied to jkBuckethead's topic in General Support

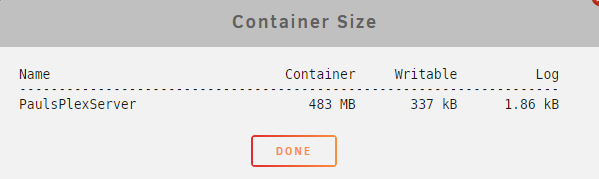

Here you go. I really appreciate the help! I had already checked the container size, because I was surprised when I saw my cache sitting at 25.8GB used. Went hunting for the cause. Found the large docker.img file, sitting at 21.5GB.

floater-diagnostics-20200507-0819.zip

Up-to-date Plex docker install help needed

pjneder replied to jkBuckethead's topic in General Support

Nope. Just double checked and nope, still have the "set up plex dvr" button. Had no intentions of that with my current setup.

Up-to-date Plex docker install help needed

pjneder replied to jkBuckethead's topic in General Support

Reading up in here along the way. Was looking so hard for Plex support that I missed the general FAQ with Plex related topics.

Up-to-date Plex docker install help needed

pjneder replied to jkBuckethead's topic in General Support

root@localhost:# /usr/local/emhttp/plugins/dynamix.docker.manager/scripts/docker run -d --name='PaulsPlexServer' --net='host' -e TZ="America/Los_Angeles" -e HOST_OS="Unraid" -e 'PLEX_CLAIM'='claim-ybLt8gdqUcLu1yTDSosP' -e 'PLEX_UID'='99' -e 'PLEX_GID'='100' -e 'VERSION'='latest' -v '/tmp':'/transcode':'rw' -v '/mnt/user/Plex':'/data':'rw' -v '/mnt/user/Plex':'/data2':'rw' -v '/mnt/user/appdata/PlexMediaServer':'/config':'rw' 'plexinc/pms-docker'

0aa1f2a0f850bdfe280d07964ba0a95f47824b5455c102aacabb6c1bed02ea88

Ignore the extra mapping to /data2. I only added that to get the run command to show up.
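Since the open question in this thread is whether something is being written inside docker.img instead of to a mapped host path, one way to read a run command like the one above is to pull out just the -v mappings. A rough Python sketch, assuming the quoting style shown above; the shortened command string is for illustration only, and `volume_mappings` is a made-up helper name, not part of Docker or unRaid:

```python
import shlex
from typing import List, Tuple

def volume_mappings(run_command: str) -> List[Tuple[str, str, str]]:
    """Extract (host_path, container_path, mode) from each -v flag."""
    tokens = shlex.split(run_command)  # also strips the shell quoting
    mappings = []
    for i, tok in enumerate(tokens):
        if tok == "-v" and i + 1 < len(tokens):
            parts = tokens[i + 1].split(":")
            if len(parts) < 2:
                continue  # no host path given, so not a host mapping
            mode = parts[2] if len(parts) > 2 else "rw"
            mappings.append((parts[0], parts[1], mode))
    return mappings

# Shortened, illustrative version of the command (env flags omitted).
cmd = (
    "docker run -d --name='PaulsPlexServer' --net='host' "
    "-v '/tmp':'/transcode':'rw' "
    "-v '/mnt/user/Plex':'/data':'rw' "
    "-v '/mnt/user/appdata/PlexMediaServer':'/config':'rw' "
    "'plexinc/pms-docker'"
)

for host, container, mode in volume_mappings(cmd):
    print(f"{container} -> {host} ({mode})")
```

Anything the container writes to a path that is not in this list stays in the container's own writable layer, which is the kind of thing that would make docker.img grow.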

Up-to-date Plex docker install help needed

pjneder replied to jkBuckethead's topic in General Support

Gosh I hate to seem an idiot, because I'm not...but here goes. Where is the run command found? It just isn't obvious to my screen-tired eyes at the moment.

Up-to-date Plex docker install help needed

pjneder replied to jkBuckethead's topic in General Support

@Hoopster I found this older thread and, as you stated, it was helpful even now in validating my Plex docker config. However, I will resurrect it because I have another question.

As I've been getting my Plex (Pass enabled) server library loaded, I've noticed an issue. My setup is basically what was mentioned above but even simpler. I map /mnt/user/Plex to /data and then organize my content within the Plex share. I use /tmp for /transcode.

The problem is that my docker.img file has now ballooned to 21.5GB, and Plex is the only docker I'm running, so it is all coming from there. I don't even have that many movies shoved into /mnt/user/Plex yet.

I found this thread because some other thread suggested that there was "probably a mount inside the docker that should be outside", so I started hunting. I don't think that is the case, but maybe I'm misunderstanding just how much appdata it creates.

Hints and ideas? Thank you!

I got this fixed. It was due to repeating UUIDs, as I had not wiped the signature from the disks and they were in unassigned but conflicted with pool disks. All sorted now. Thanks!

-

Thanks @johnnie.black!!! Running wipefs -a on sde and sdl was the trick to managing that. I will hopefully remember that in the future. I still have 1 more 1T to retire, and I will slot my other 3T into that spot. This time I should be able to avoid repeating the mistake.

Curious: if one immediately uses the FORMAT button on the unassigned device, will that fix this as well? I.e. is there any other recommended way to remove the drive from the config without physically disconnecting it? My thinking was that I was keeping it there in case something happened during the migration and I needed to swap it back in.

Thanks!
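As an aside, a check like this could be run before starting the array: group devices by filesystem UUID and flag any UUID that shows up on more than one device. This is a minimal Python sketch. The input format (one "NAME UUID" pair per line, the kind of listing `lsblk -l -n -o NAME,UUID` produces) and the sample values are assumptions for illustration, not taken from the diags. Members of the same btrfs pool are expected to share a UUID, so a hit is only a problem when one of the devices is not supposed to be in the pool.

```python
from collections import defaultdict
from typing import Dict, List

def duplicate_uuids(listing: str) -> Dict[str, List[str]]:
    """Map each UUID seen on more than one device to those device names."""
    by_uuid = defaultdict(list)
    for line in listing.splitlines():
        fields = line.split()
        if len(fields) == 2:  # skip devices that report no UUID
            name, uuid = fields
            by_uuid[uuid].append(name)
    return {u: names for u, names in by_uuid.items() if len(names) > 1}

# Hypothetical listing: sde1 still carries the pool's old signature.
sample = """\
sdb1 aaaaaaaa-0000-0000-0000-000000000001
sdc1 aaaaaaaa-0000-0000-0000-000000000001
sde1 aaaaaaaa-0000-0000-0000-000000000001
sdi1 bbbbbbbb-0000-0000-0000-000000000002
sdj
"""

for uuid, names in duplicate_uuids(sample).items():
    print(uuid, "is shared by", ", ".join(names))
```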

-

@johnnie.black I think I have hit a similar issue to the one covered in this thread. I'm reading it but am not exactly sure what to do, so I will tread carefully and await some advice. Attached is my anonymized diags output.

Basics of my sequence:

1. Plugged in 2x new 4T WD Red drives, enumerated as sdi and sdj.
2. Pre-cleared both.
3. Stopped array, unassigned sde (3T) from Parity.
4. Assigned sdi as Parity, started, rebuilt parity.
5. Stopped array, unassigned sdl (1T) and added sdj to array, started, rebuilt sdj.
6. Went to zero out the sde 3T using preclear, started having problems.
7. Did a clean restart, then the cache went unmountable and I started seeing these "cannot scan" errors.

Oh yeah, all my dockers are gone now since the Cache pool didn't start. Hoping to recover the pool and not have to start over. Any pointers would be appreciated!

Thanks!

unRaid-pjneder-diags.zip