Everything posted by death.hilarious

-

I was having a similar issue. Rebooting the server would let plugins install again, until one reboot when it didn't boot properly. It turned out to be a flash drive issue: plugins were failing when trying to write to the USB, and ultimately the USB was so corrupted that it failed to create the file system on boot. I'd make a backup of your flash drive ASAP, just in case it's failing.

-

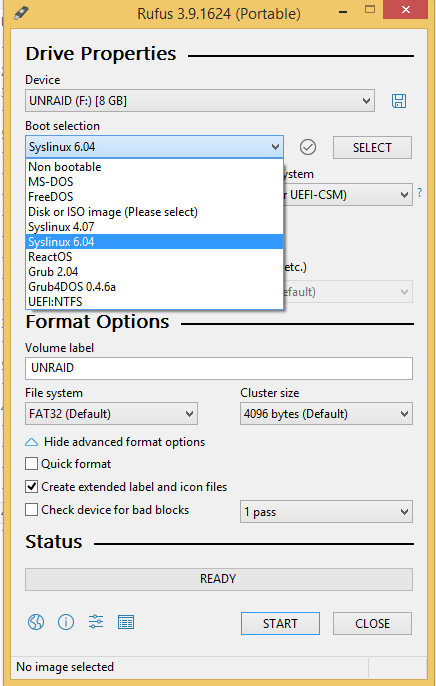

Thanks for the help @jonathanm! I used Rufus to do a proper full write format as well as a bad sector check on the USB. I then re-made the USB drive using the USB creator and copied over the config backup... and ran into the exact same problem as before. I was so sure it was going to work. I finally gave in, hooked up a video card, keyboard and monitor, and booted in safe mode (with plugins disabled), and it worked fine. Turns out that my cache drive had dropped and been un-assigned from the array, probably because of cable jiggling, and without the cache one of the plugins (unbalance? unassigned devices? swap file?) was screwing everything up during startup. Re-assigning the cache (while in safe mode), starting the array and then rebooting did the trick.

-

My Unraid server was having issues with plugins not being able to update. I thought I'd just reboot it and hope that fixed the problem. Unfortunately, after rebooting, the server wouldn't start at all--headless system, no Web GUI, no SSH. I actually needed some files from the server urgently, so I'm panicking.

After reading up in the forums, it seemed like it might just be an issue with the boot USB, and sure enough, when I pulled the USB and plugged it into another computer, it was full of FSCKxxxx.REC files in the root directory. I was able to copy the contents of the config directory over and prepared a new USB. Formatting a new USB using the USB creation tool took hours because of the bug causing it to get stuck in "syncing file system...". So I ended up trying tons of different sticks in different ports, until I stumbled on the forum solution of changing the drive name manually to "UNRAID" while it's stuck in "syncing file system". Ugh.

Unfortunately, because of this bug I was just happy that I got the USB creation to work, and I wasn't worrying about the fact that the USB stick I was using when I finally got it to work was kind of janky. I transferred the config from the old stick to the new stick and booted the server. I was overjoyed that everything seemed to be working fine. I could update plugins again. I went ahead and clicked the button to transfer the license to this new USB stick. Then I rebooted the system to bring the array online... and it didn't start. F*ck my life. The Web UI just wouldn't start. I was able to SSH in, and from what I can tell the USB thinks it's full and isn't able to create the file system. I get an insufficient space error running the diagnostics command.

So I played around some more. I made a backup of the config directory from the janky USB and re-ran the USB creation tool on it. I could get the server to boot from the freshly created USB, but when I transferred the config directory to it, it wouldn't boot and had the same insufficient space problem. Then I created a third USB with the creation tool and transferred the backup config from the second, janky USB to this new third stick... and everything works! But I have to wait a year until I can transfer the license to a new USB? I've requested a transfer to a new USB from support, but it seems like it'll take a few days at least--and I still urgently need those files.

So my questions: Does anyone have any ideas what's causing the insufficient space problem on my USB, other than the USB just being janky? I don't want the same thing to start happening to this third USB. Is there anything I should try to make the current janky USB work while I'm waiting to transfer the license to a new USB?
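For anyone wanting to check a suspect boot stick for the same symptom, here is a minimal sketch that lists FSCKxxxx.REC files in the root of a mounted drive. It assumes the "xxxx" part is four digits, and the function name `find_recovery_files` is just something I made up for illustration.

```python
import re
from pathlib import Path

# Assumption: recovery files are named FSCK + four digits + .REC
_REC_PATTERN = re.compile(r"^FSCK\d{4}\.REC$", re.IGNORECASE)


def find_recovery_files(root):
    """Return the sorted names of FSCKxxxx.REC files found directly in `root`."""
    root = Path(root)
    return sorted(
        entry.name
        for entry in root.iterdir()
        if entry.is_file() and _REC_PATTERN.match(entry.name)
    )
```

Point it at wherever the stick is mounted, e.g. `find_recovery_files("/boot")` on the server itself (assuming the flash is mounted there). A non-empty result only tells you a filesystem check has recovered fragments at some point; it doesn't say what caused the corruption.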

-

I've noticed something similar. Torrents would mysteriously stop downloading at 95%+ completion and just sit there for days. After restarting Deluge, the torrents would complete in seconds. I assume it has something to do with PIA's ongoing port forwarding issues (the port forwarding wasn't getting properly renewed?). Yesterday Deluge stopped connecting at all to my preferred Sweden server, so I was forced to switch to another server. Seems to work fine now.

-

Unbalance doesn't seem to start after upgrading to 6.8.0-rc1. The service shows as stopped and won't start. Tried uninstalling and reinstalling the plugin, but the problem persisted. Launching from command line works (/usr/local/emhttp/plugins/unbalance/unbalance -port 6237).

-

Just noticed that the docker has updated to Deluge 2.0! I'm already seeing a noticeable drop in my CPU load and RAM usage with a moderate number of torrents running. Kudos to: Arvid Norberg (libtorrent), Calum "Cas" Lind (Deluge), and of course @binhex!

-

See the FAQ under "Q1. I am having issues using a post processing script which connects to CouchPotato/Sick Beard, how do i fix this?". You need to define the "ADDITIONAL_PORTS" environment variable to allow outgoing connections from the VPN docker to another container/application.

-

If the problem is specifically connecting Deluge with CouchPotato (both running on the same host), check the FAQ under the heading "How do i connect CouchPotato to DelugeVPN". The process is a lot more complicated than for things like Radarr/Sonarr because CouchPotato connects directly to the Deluge daemon (instead of the WebUI), so you need to use the Deluge credentials from the /config/auth file. (Disclaimer: I haven't used CouchPotato in ages, so it's possible it interfaces through the Deluge WebUI now.)
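To pull the daemon credentials out of that file, something like the sketch below might help. It assumes the auth file holds one `username:password:level` entry per line and that blank lines or lines starting with `#` can be skipped; `parse_deluge_auth` is a hypothetical helper name, not part of Deluge.

```python
def parse_deluge_auth(text):
    """Parse auth-file text into a list of (username, password, level) tuples.

    Assumes one `username:password:level` entry per line.
    """
    entries = []
    for raw in text.splitlines():
        line = raw.strip()
        # Skip blank lines and (assumed) comment lines.
        if not line or line.startswith("#"):
            continue
        parts = line.split(":")
        if len(parts) < 2:
            continue  # not a usable entry
        username, password = parts[0], parts[1]
        level = parts[2] if len(parts) > 2 else ""
        entries.append((username, password, level))
    return entries
```

You'd feed it the file contents, e.g. `parse_deluge_auth(open("/config/auth").read())`, and then copy the username and password of the entry you want into the downloader settings.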

-

Glad you got it sorted. I've had good success using freshtomato (forum link) as firmware for my wireless AP.

-

I'm no expert but it sounds like a local networking issue. Check things like cables and MTU settings. Double check your ddwrt settings to make sure it's not doing anything it's not supposed to be doing (particularly things like QoS or other flow controls and anything that involves packet inspection). Make sure you're not doing things like using Wi-Fi for your torrent box or running a VPN client on your router in addition to the VPN client running on the delugeVPN box. Maybe try reverting back to the older ddwrt firmware.

-

The core has been completely rewritten to maintain compatibility with the latest libtorrent versions. The biggest difference is in terms of performance--the new Deluge v.2.0 is a lot more resource efficient, especially when seeding a large number of torrents. But there are plenty of cool new features too. For example, Deluge v.2.0 adds multiuser support in the WebUI, so you can have different login usernames and passwords and each user will only see their own torrents. The roadmap suggests that cas was aiming for a Dec. 31 release of 2.0.0, but I'm not so sure he's going to make it because of the Windows issues. Nevertheless, the project is really close to a release and it's probably a good time to start thinking about a docker for it. Plus the Christmas holidays might make a good time for people to play with an experimental docker to find bugs.

-

@binhex Any chance of getting a Deluge-2.0 VPN docker? The beta has been out for a year now and is quite stable from my (albeit limited) testing. The biggest current issues before a final 2.0 release seem to be around the Windows version not playing well with Python 3 (from the bug-tracker).

-

Oh, that sounds pretty handy. +1

-

Yes. See my post a few posts up. Preclear just inexplicably stalled about 20 hours into pre-read. Tried it again and the same thing happened.

-

I just completed my run using the binhex patched bjp script through the plugin, so I can confirm it works fine on unRAID v.6.5.3.

-

Preclearance is hell. Not sure why, but preclearance never seems to go smoothly for me. Problems:

1) Unfortunately, disks that are being pre-cleared count towards your license disk limit. I'm upgrading storage by replacing disks with higher capacity disks, so I didn't really think I needed to upgrade my license. But during pre-clearance I'm temporarily going to have extra disks plugged in--so down goes the array. Think your family doesn't like you? Try telling them the Plex server will be down for the entire weekend or more.

2) After ~20 hours preclearance stalls during pre-read. The web UI is still accessible and shows 3 (out of 4) CPUs pinned to 100%. I don't see any errors in the logs, so I let the preclearance continue running. After another 10hrs without advancing in the preclearance, I stop the preclearance. After stopping the preclearance, 3 of 4 CPUs are still pinned at 100% according to the web UI. I SSH into the system to see what's eating the CPU, but htop shows the CPUs as idle. I assume it's a visual bug with the WebUI and attempt to restart the server. Server doesn't respond to the restart command through the WebUI or a 'powerdown' command through SSH shell. I'm forced to do a hard power down using the power button (but don't mind since the array wasn't loaded because of the disk limit).

3) Another ~20 hours of preclearance confirms the bug. Same symptoms as before: preclearance stalls @ 20hr mark, 3 of 4 CPUs pinned to 100%, server unresponsive to powerdown commands.

4) So I decide to use @binhex's updated bjp fast pre-clear script. I get past the 20hr mark fine, so I figure I'm home free. But alas, I get hit with a power outage at the 40 hr mark. Apparently, preclearance doesn't exit gracefully when the UPS shutdown command is issued. Luckily I'm around when this happens, so I manually stop the preclearance, which allows the server to shut down. Unfortunately it's only after the unraid server has eaten up a huge portion of my UPS battery. Think your family hates you? Try telling them that the battery power that's supposed to keep the internet on during power outages only has half an hour of juice left because the unraid server kept running.

-

Out of curiosity, how much memory does everyone's Sonarr docker use? You can check your docker memory usage using the "docker stats" command in SSH. My Sonarr docker seems to creep up daily from ~160MB to about 1GB (of a 4GB system) after 5 days. I'm not sure if this is normal or a memory leak.
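If you'd rather log the numbers over a few days than eyeball them, here is a rough sketch that runs `docker stats` once and turns the memory column into bytes. It assumes the memory field looks like "160MiB / 3.8GiB"; the helper names (`parse_size`, `parse_stats`, `container_memory`) are made up for this example.

```python
import re
import subprocess

# Unit suffixes this sketch knows how to convert to bytes.
_UNITS = {
    "B": 1,
    "kB": 1000, "MB": 1000**2, "GB": 1000**3,
    "KiB": 1024, "MiB": 1024**2, "GiB": 1024**3, "TiB": 1024**4,
}


def parse_size(text):
    """Convert a string such as '160MiB' into a number of bytes."""
    match = re.fullmatch(r"\s*([\d.]+)\s*([A-Za-z]+)\s*", text)
    if not match or match.group(2) not in _UNITS:
        raise ValueError(f"unrecognised size: {text!r}")
    return float(match.group(1)) * _UNITS[match.group(2)]


def parse_stats(output):
    """Map container name -> used memory in bytes from tab-separated lines."""
    usage = {}
    for line in output.splitlines():
        if "\t" not in line:
            continue
        name, mem = line.split("\t", 1)
        used = mem.split("/")[0]  # assumed 'used / limit' layout
        usage[name.strip()] = parse_size(used)
    return usage


def container_memory():
    """Run `docker stats` once and return per-container memory usage."""
    result = subprocess.run(
        ["docker", "stats", "--no-stream",
         "--format", "{{.Name}}\t{{.MemUsage}}"],
        capture_output=True, text=True, check=True,
    )
    return parse_stats(result.stdout)
```

Calling `container_memory()` from a daily cron job and appending the result to a file would show whether the usage keeps climbing or levels off.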

-

I like this idea a lot! 100+ page threads are really terrible for someone trying to search for the answer to a problem, so the same questions end up getting asked again and again. Having users able to submit posts so that each issue is in its own thread would make things much easier to look up. Many authors maintain links to frequently referenced information in their signatures (which is a life saver). However, I don't think people realize that you can disable your ability to see others' signatures in your profile settings (and it's disabled by default, I think). I know I didn't realize that I had signatures disabled and spent way too much time trying to find information that was linked in a signature. Having authors able to create sticky threads in their sub-forum would be a better solution for info that is otherwise put in the signature now.

-

How to mitigate issues with Intel Management Engine

death.hilarious replied to Marcel's topic in Security

The Intel ME vulnerabilities require a BIOS fix. If your motherboard is still receiving BIOS updates, update your BIOS. If, like most people (I assume), you're running unRAID on older hardware which no longer gets BIOS updates, there are still a few things you can do.

1. The most worrying aspect of the Intel ME vulnerabilities is that you can be remotely exploited, since Intel allows direct access to the ME through NIC integration (something AMD doesn't do). Fortunately, it seems that simply using a secondary NIC (e.g. a PCI-e NIC card) mitigates this issue, since the ME is only configured to communicate through the built-in NIC.

2. It may also be possible to fully disable and/or remove Intel ME from your system using ME_Cleaner. Originally ME_Cleaner simply deleted most of the ME partition from the BIOS and left only what was necessary for booting. Recently, however, it has integrated a soft_disable functionality which simply sets a specific flag in the ME firmware to "1", which prevents ME from loading (interestingly, the NSA was behind forcing Intel to include this hidden flag so they could disable ME on their computers). I've used ME_Cleaner on a couple of computers (both with soft-disable and full removal) and never had any problems. However, it's a pretty complicated procedure requiring external flashing of the BIOS chip using something like a Raspberry Pi (you'll need to buy a SOIC clip and some short wires).

3. Assuming your unRAID box is behind a NAT firewall, you can also simply block access to ports 16992-16993 (which are the ports Intel ME listens on).

EDIT: For clarity, the Asus Intel ME update tool updates the Intel ME firmware partition independent of the rest of the BIOS. I really wouldn't try to run it through a VM. You can try something like this instead: https://www.flamingspork.com/blog/2017/11/22/updating-windows-management-engine-firmware-on-a-lenovo-without-a-windows-install/

-

Fails to install after the latest update (2018.01.18) on unRaid 6.4.0 for me.

    plugin: installing: https://raw.githubusercontent.com/docgyver/unraid-v6-plugins/master/ssh.plg
    plugin: downloading https://raw.githubusercontent.com/docgyver/unraid-v6-plugins/master/ssh.plg
    plugin: downloading: https://raw.githubusercontent.com/docgyver/unraid-v6-plugins/master/ssh.plg ... done
    +==============================================================================
    | Skipping package putty-0.64-x86_64-1rj (already installed)
    +==============================================================================
    plugin: run failed: /bin/bash retval: 1

-

Interesting. I didn't know about this board. Seems it would make a decent pfsense box too, since it supports AES-NI. Might buy a couple, next time they're on sale.

-

[Support] Linuxserver.io - Nextcloud

death.hilarious replied to linuxserver.io's topic in Docker Containers

1) Thank you for the great Nextcloud docker!

2) Can this work with a reverse proxy service like pagekite, beame, ngrok etc.? I'm not so keen on having my home IP show up on Shodan. Maybe portmap.io and an openvpn docker? If anyone is doing something like this, tips and guidance would be greatly appreciated.

-

Try IPMagnet instead. It's open-source, so you can even run it on your own server if you want. http://ipmagnet.services.cbcdn.com/

-

Persistent SSH Keys for easier SSH Login

death.hilarious replied to Rikumo1978's topic in General Support

See this thread: https://forums.lime-technology.com/topic/51160-passwordless-ssh-login/