-

Posts

810 -

Joined

-

Last visited

Content Type

Profiles

Forums

Downloads

Store

Gallery

Bug Reports

Documentation

Landing

Posts posted by casperse

-

-

-

2 hours ago, keith8496 said:

@casperse - Short answer is "yes".

I was able to pass the NVMEs thru another way and get pretty good performance. I'm not sure what this method is called. It looks like passing thru the raw partitions but it's really the root above the partitions. Using the VirtIO driver yields fast speeds at the expense of CPU cycles. Nice thing about Epyc is that we've got enough cores to afford it. (screenshot attached)

I will continue to tinker with passing the bare-metal NVMe thru. I have not been able to pass the bare-metal NVMe controller thru without severe crashes. I was trying to bind them to VFIO and pass them thru like a GPU. I feel like this is an issue with the motherboard or BIOS. Before this board, I was trying to use Supermicro's equivalent. I was able to pass the bare-metal NVMes on the SuperMicro but had to RMA three of them for dead BMCs before getting the AsRockRack ROMED8-2T.

The BIOS has a lot of options. It took me a few sessions to get the BIOS dialed in for my workload. It's definitely an enthusiast board. Having said that, I don't think Ryzen 3 requires all the tinkering/optimization that I've read about from Ryzen 1 & 2. I have two of my three GPUs passed thru. I expect the third to work just fine, I just haven't set it up yet. Sometimes I have to disconnect my USB devices to boot after a complete disconnect from power.

Thanks for the feedback!

I have a Xeon E2100G and even this setup is causing problems during passthrough of the NVidia cards 😞

I am starting to consider a setup like yours with plenty of possibilities for future expansion!

But NOT running Unraid as a bare-metal hypervisor (Like I always have done!)

But instead run Proxmox as my hypervisor and then having all the VM's running here! (Passthrough should be much easier)

And Unraid running as a virtual setup on Proxmox with HW passthrough of all the drives

That way I could re-boot Unraid and keep all my VM running + the backup of VM's and snapshots would be built into Proxmox

-

Just want to hear if you got the ROMED8-2T motherboard stable running VM's?

Are you happy with this MB & CPU? - I am asking because I am in the middle of ordering the same MB as an upgrade to my stable Xeon MB

-

-

On 4/13/2022 at 5:44 PM, ich777 said:

Nice, but just a quick reminder to keep in mind that Emby will stop playback if the buffer runns full, Plex doesn't do that...

I have made the changes and I havnet seen any memory warning for some time, but got one today (Havent found any Emby reference)

Is this just one off these things where you have to upgrade your memory 😞

Attached new Diag file

-

On 5/1/2022 at 2:04 PM, SimonF said:

This is fine as you are not on 6.10rc.

When you upgrade the entries should be removed from the qemu file and a new file created in qemu.d

I was on RC4 I am now on RC5

Do you need anymore info or additional running commands from me? :--)

-

So sorry My BAD I got a little freaked out and did a reboot - hoping all my data would reappear again and it did....

I am monitoring my new cache very closely. I promise to send a new Diag if it happens again

-

THANKS again! - I decided to change every path to get the better performance and everything worked great!

Also updated plugins to do appdata backup etc.... so everything was running just fine and I wanted to check files on my new cache driveBut this time it was EMPTY! and in my main view I had this:

(This was not present when running the new cache drive at start?)

Somehow my new cache drive was in both places?

Afraid that I had now lost all my cache files I did a reboot and everything is back again?

I upgraded to rc5 yesterday, not sure this is an error or something else?

-

14 hours ago, trurl said:

attach diagnostics to your NEXT post in this thread

Thanks trurl

I found a post saying I should reboot again, but that didnt help.

I then removed the new cache drive it was empty anyway, and then I started without the new cache and stopped and added it again and now its active!

But I have a question , very afraid to mess up my existing Appdata on my existing cache drive!If I rename my existing cache to a new name cache_appdata and then start the Docker service!

Whar will happen to all the paths that are assigned to the currunt path

On 4/24/2022 at 4:55 PM, Squid said:Those 2 paths are actually more or less identical. The first is referring to a share on the cache drive and the second is referring to a share on all drives including the cache.

You'd want to stop all the running containers, and edit them to be /mnt/cache_apps/appdata to refer to the correct pool (for anything that had /mnt/cache/appdata). And also set the appdata share to use cache_apps. No need to change /mnt/user/appdata to refer to anything else.

So its actually best and recommended to just use the default /mnt/user/appdata and set prefer Cache!

And NOT like me and others to have set it manually on each docker to use the /mnt/cache/appdata - because when adding pools and renaming you have to change the paths on all dockers! -

-

Argh no its a black screen right after the last load on screen of the nvidia loading

So somehow the login promt and the local UI resolution does not like my 4K monitor?

I could see that it changed resolution while loading on the screen 1080P -> 4K (Very small text) -> back to 1080P

and at the login promt black screen?

Is there any setting to get this working on a 4K screen?

I am now pretty sure that is the problem

Diagnostic attached below

-

On 4/22/2022 at 4:59 PM, SimonF said:

Are you running on RC4 now? can provide output of cat /etc/libvirt/hooks/qemu and

On 4/22/2022 at 4:59 PM, SimonF said:I think I am missing removal of the 6.9.2 vers updates during upgrade to 6.10 versJust checked should be removing.

Yes of course, anything to help

#!/usr/bin/env php <?php #begin USB_MANAGER if ($argv[2] == 'prepare' || $argv[2] == 'stopped'){ shell_exec("/usr/local/emhttp/plugins/usb_manager/scripts/rc.usb_manager vm_action '{$argv[1]}' {$argv[2]} {$argv[3]} {$argv[4]} >/dev/null 2>&1 & disown") ; } #end USB_MANAGER if (!isset($argv[2]) || $argv[2] != 'start') { exit(0); } $strXML = file_get_contents('php://stdin'); $doc = new DOMDocument(); $doc->loadXML($strXML); $xpath = new DOMXpath($doc); $args = $xpath->evaluate("//domain/*[name()='qemu:commandline']/*[name()='qemu:arg']/@value"); for ($i = 0; $i < $args->length; $i++){ $arg_list = explode(',', $args->item($i)->nodeValue); if ($arg_list[0] !== 'vfio-pci') { continue; } foreach ($arg_list as $arg) { $keypair = explode('=', $arg); if ($keypair[0] == 'host' && !empty($keypair[1])) { vfio_bind($keypair[1]); break; } } } exit(0); // end of script function vfio_bind($strPassthruDevice) { // Ensure we have leading 0000: $strPassthruDeviceShort = str_replace('0000:', '', $strPassthruDevice); $strPassthruDeviceLong = '0000:' . $strPassthruDeviceShort; // Determine the driver currently assigned to the device $strDriverSymlink = @readlink('/sys/bus/pci/devices/' . $strPassthruDeviceLong . '/driver'); if ($strDriverSymlink !== false) { // Device is bound to a Driver already if (strpos($strDriverSymlink, 'vfio-pci') !== false) { // Driver bound to vfio-pci already - nothing left to do for this device now regarding vfio return true; } // Driver bound to some other driver - attempt to unbind driver if (file_put_contents('/sys/bus/pci/devices/' . $strPassthruDeviceLong . '/driver/unbind', $strPassthruDeviceLong) === false) { file_put_contents('php://stderr', 'Failed to unbind device ' . $strPassthruDeviceShort . ' from current driver'); exit(1); return false; } } // Get Vendor and Device IDs for the passthru device $strVendor = file_get_contents('/sys/bus/pci/devices/' . $strPassthruDeviceLong . '/vendor'); $strDevice = file_get_contents('/sys/bus/pci/devices/' . $strPassthruDeviceLong . '/device'); // Attempt to bind driver to vfio-pci if (file_put_contents('/sys/bus/pci/drivers/vfio-pci/new_id', $strVendor . ' ' . $strDevice) === false) { file_put_contents('php://stderr', 'Failed to bind device ' . $strPassthruDeviceShort . ' to vfio-pci driver'); exit(1); return false; } return true; }

could do the list

/bin/ls: cannot access '/etc/libvirt/hooks/qemu.d/': No such file or directory? -

Thanks Squid! much appreciated!

-

Hi All

I am finally going to setup multiple cache pools! 🙂

And I am wondering how to go about it in the right way?

Current setup: One cache drive called "cache"

Future setup; Having 3 cache drives - cache_apps / cache_vms / cache_shares

- Would I have to manually go and change all my dockers from /mnt/cache/appdata/ --> /mnt/user/appdata/ before renaming my existing cache drive?

-

Would it be best to move all things back to the array (Change prefer: Cache --> Yes : Cache) and then setup all my cache drives?

- (The system files and the Docker image files and the Appdate would be keept on the existing cache drive!)

I have all my Media dockers on my current cache drive and moving all these small files would take days!

Would love to just rename the current cache drive to cache_apps and move the rest to the other cache drives?

I might me overthinking this LOL

Did see the video about multiple pools but in that they where all empty

-

On 4/15/2022 at 9:57 PM, trurl said:

I couldn't see that much detail so didn't know if it was a duplicate or not. The file on cache would have precedence so that one on disk7 wasn't in use, and if it were, you couldn't delete it.

Thanks I have removed them now!

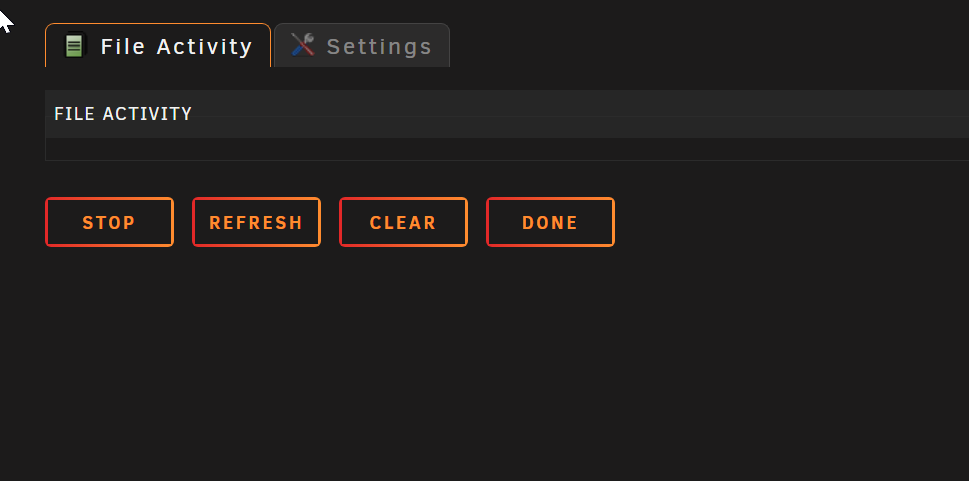

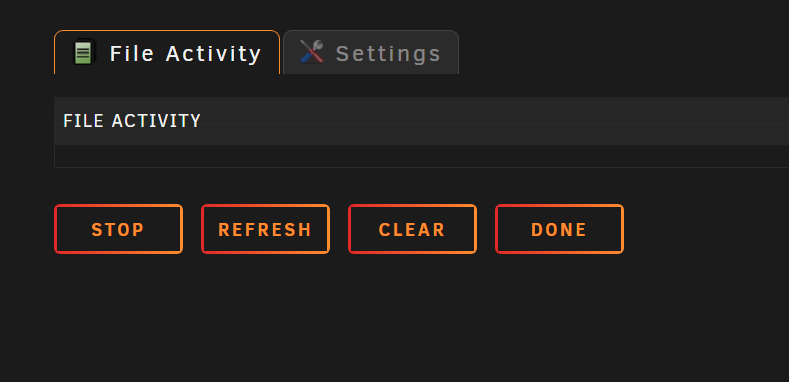

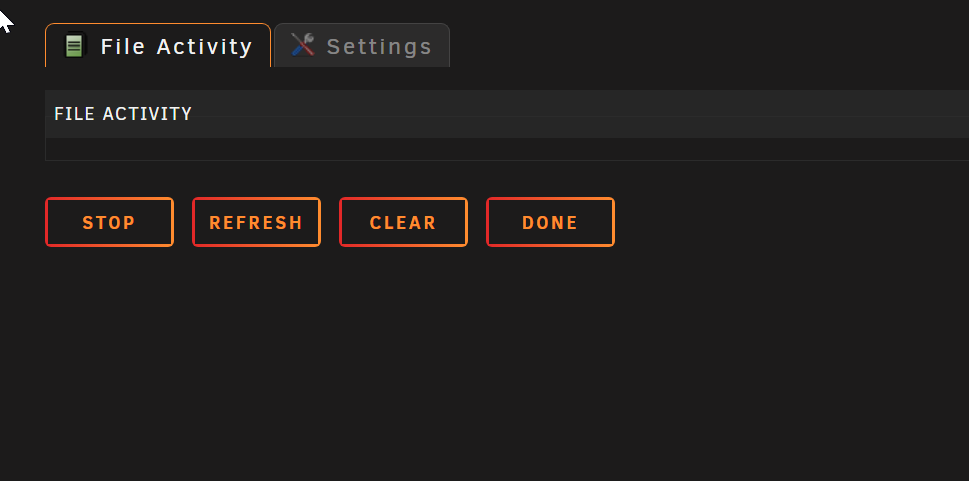

I cant get the File activity working in RC4?

-

Hi All

I am using the OZNU Homebridge docker.

And it works for everything except the Sonos ZP plugin?

I have by troubleshooting with the auther found that it is upnp that causes these problems?

What network setting are you running with?

Mine is:

And in the UI:

But I keep getting periodic UPnP announcements for .6 - the HOST! ?

[4/19/2022, 9:00:21 AM] [Sonos] upnp: RINCON_542A1BE254D901400 is alive at 192.168.0.6

Any input on how to resolve this?

-

-

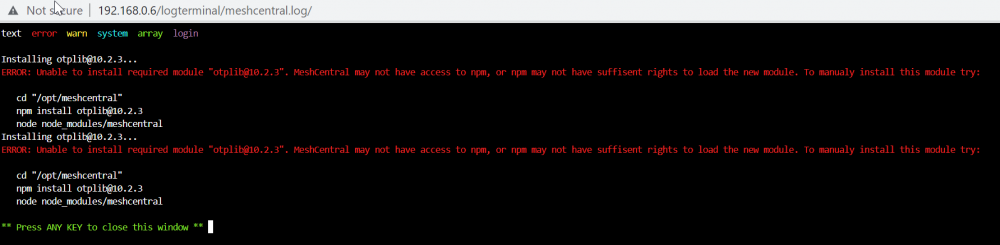

I just updated to 6.10.rc4 and installed the File activity plugin (My drives keep spinning up)

But no matter what I do I dont get any activity in the plugin?

Log:

But its already over the default size?

I then updated it to:

I dont get the warning anymore but still no activity listed?

Diag file attached below

-

I know this is an old post but every one is talking about the SC847 and the 36 bays!

And it has been on my list for a very long time (Hitting the max supported drives on Unraid)

But isn't it a big problem that all cards have to fit in the low-profile expansion slot? (Or is it full?)

-

Yes thanks!

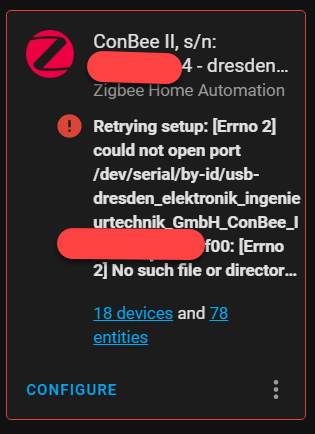

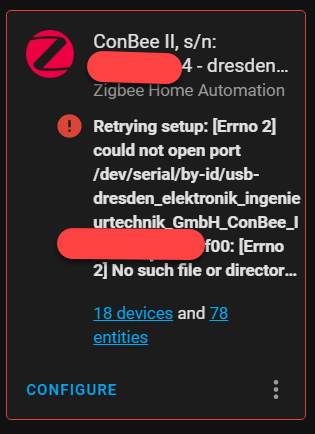

I had to do the XML changes to get the serial USB device added in the VM - and then the HA process above to change the Zigbee HA path and keeping the DB for all my existing devices 🙂 - so I am really happy to now be on 6.10.rc4

-

If anyone come here to get Home assistant working in a VM running UNraid 6.10,RC4 and you don't want to start over configuring your ZIGBEE HA then do this:

https://community.home-assistant.io/t/conbee2-stick-dev-path-configuration-problem/411424

-

1

1

-

1

1

-

-

I did the insert this in the XML for Home assistant if using the Conbee2 stick!

</memballoon> placed under this line: <serial type='dev'> <source path='/dev/serial/by-id/usb-0000_0000-if00'/> <target type='usb-serial' port='1'> <model name='usb-serial'/> </target> <alias name='serial1'/> <address type='usb' bus='0' port='4'/> </serial>

Get USB ID by console:

ls /dev/serial/by-id/

But even if I got the VM started then Home assistant cant access the Conbee stick?

-

1

1

-

-

On 4/11/2022 at 7:17 AM, ich777 said:

Why are you doing this? I can't see any benefit from this?

Please remove this script, also remove the parameter that it only has 2GB of memory (this is only the maximum amount of memory the application can consume) and add this to your extra parameters:

--mount type=tmpfs,destination=/tmp,tmpfs-size=8589934592

This will basically create a tmpfs on the Docker start in /tmp (yes, that's perfectly fine and should used like that since then there is no chmod or anything else needed) and change the transcoding path in Emby to /tmp

I got the script from mgutt and I keept them because its practually the same as writing it in the docker:

Have one for Emby and another for Plex) both with at RAM folder

But I guess its simpler to have just tmp, so I will delete the scripts and add the parameter to them both

But using your extra parameter in docker for Emby I now have:

--runtime=nvidia --log-opt max-size=50m --log-opt max-file=1 --restart unless-stopped --mount type=tmpfs,destination=/tmp,tmpfs-size=8589934592

And for Plex:

--runtime=nvidia --no-healthcheck --log-opt max-size=50m --log-opt max-file=1 --restart unless-stopped --mount type=tmpfs,destination=/tmp,tmpfs-size=8589934592

So long time since I set this up, but it has been running pretty stable

-

1

1

-

-

Seems to be a known problem back to 6.10.rc2?

Seem strange that it works in 6.9....

[REQUEST] Meshcentral

in Docker Engine

Posted

Seem to be some access problem?