Posts: 1355

Days Won: 1

Fireball3 last won the day on August 2 2017

Fireball3 had the most liked content!

Gender: Undisclosed

Location: Good old Germany

Personal Text: >> Engage <<

Fireball3's Achievements: Community Regular (8/14)

Reputation: 48

-

LSI Controller FW updates IR/IT modes

Fireball3 replied to madburg's topic in Storage Devices and Controllers

Please check if the Mini-SAS connector is properly seated. Sometimes it looks seated but it isn't! Unplug it and plug it in again, just to rule that one out.

-

Thanks for the heads up! Obviously I missed some improvements, since I didn't have to jump on every new release as my servers are doing well as they are now. In the meantime I'm increasingly following the principle: "never change a running system". That said, pausing the parity check is a much anticipated feature that would fit my needs.

-

Of course you will, and that's why the check is done. But the expectation is that these are only read errors on the drives. That was the reason why I joined unraid in the first place, after I found out that my drives were throwing read errors while lying on the shelf. I remember having had this discussion in this forum some years ago already. If this is a frequent and serious issue, shouldn't there be a better way to deal with sync errors?

1. There should be no automatic correction.
2. There should be an easy way to inspect the potentially affected data.
3. The resync should be limited to the affected area, without the need to run another 24h parity check.

Parity checks put the most stress on the system - at least on mine.
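To illustrate point 3, here is a minimal sketch of a position-limited resync, assuming single XOR parity. The function names and the toy block values are hypothetical, and this is not how unRAID implements its parity check. It only shows that a sync error is tied to a specific position, so in principle only that position needs to be recomputed.

```python
from functools import reduce


def xor_parity(blocks):
    """XOR all blocks at one position together (single-parity scheme)."""
    return reduce(lambda a, b: a ^ b, blocks, 0)


def find_sync_errors(data_disks, parity_disk):
    """Return the positions where the stored parity does not match the data."""
    return [
        pos
        for pos, stored in enumerate(parity_disk)
        if xor_parity(disk[pos] for disk in data_disks) != stored
    ]


def resync_positions(data_disks, parity_disk, positions):
    """Recompute parity only at the given positions, leaving the rest as-is."""
    fixed = list(parity_disk)
    for pos in positions:
        fixed[pos] = xor_parity(disk[pos] for disk in data_disks)
    return fixed


# Toy example: two data disks with three blocks each, parity damaged at position 1.
data = [[1, 2, 3], [4, 5, 6]]
parity = [5, 0, 5]
bad = find_sync_errors(data, parity)
repaired = resync_positions(data, parity, bad)
```

Note that a mismatch only says that data and parity disagree at that position. It does not say which disk holds the wrong value, which is why inspecting the affected data first (point 2) matters before correcting anything.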

-

Assuming the system has been properly commissioned and tested before being taken into service, it's not reasonable to tear it apart every time a sync error pops up. At some point one should be able to rely on what it shows: a sync error. That's why the correcting check is on by default, and from my understanding there is still no commonly agreed recommendation that this should be done in another way.

-

Hi, sorry to hijack this topic, but is this the commonly agreed procedure? If so, what is the recommended workflow to get everything straightened out if a sync error is detected (and not corrected)?

-


ControlR (Android/iOS app for unRAID)

Fireball3 replied to jbrodriguez's topic in User Customizations

This should answer your question: https://forums.unraid.net/topic/47914-controlr-androidios-app-for-unraid/?do=findComment&comment=1014190

LSI Controller FW updates IR/IT modes

Fireball3 replied to madburg's topic in Storage Devices and Controllers

Have you tried taping the contacts? There is a detailed description somewhere in the forum. Please use the search function, I'm on Tapatalk at the moment. Maybe you have to use another board for the flash procedure.

GUI not available due to rootfs run out of space

Fireball3 replied to Fireball3's topic in General Support

OK, the weekend is approaching and I have to get the media server up and running again... 😓 My plan is to set up a clean unRAID on the USB stick and copy the settings, without the docker, from the old install. Maybe I should skip the plugins as well, since they are giving errors in the syslog? So, what are the essential parts that I need to copy to the fresh install? super.dat, license file, ... ?

GUI not available due to rootfs run out of space

Fireball3 replied to Fireball3's topic in General Support

I pulled the syslog. Maybe this will encourage you to give me some advice. 😉

syslog_18.01.2021.txt

I wonder why the rootfs is only 684M. Shouldn't it be the size of the installed RAM? 🤔

Hi folks, I ran into this issue after installing the MiniDLNA docker. I probably didn't pay enough attention to the docker's settings (I took the presets). Now the server is running out of space on the rootfs when booting. The GUI is not available, SMB is not running and the array is not mounted. Luckily I can ssh in and see what's going on. When trying to grab diagnostics I get this:

    root@Tower:~# diagnostics
    Starting diagnostics collection...
    Warning: file_put_contents(): Only 0 of 5 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 80
    Warning: file_put_contents(): Only 0 of 975 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 85
    Warning: file_put_contents(): Only 0 of 78 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 101
    Warning: file_put_contents(): Only 0 of 2 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 120
    Warning: file_put_contents(): Only 0 of 34 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 122
    Warning: file_put_contents(): Only 0 of 2 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 120
    Warning: file_put_contents(): Only 0 of 34 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 122
    echo: write error: No space left on device
    echo: write error: No space left on device
    echo: write error: No space left on device
    echo: write error: No space left on device
    echo: write error: No space left on device
    echo: write error: No space left on device
    echo: write error: No space left on device
    echo: write error: No space left on device
    echo: write error: No space left on device
    Warning: file_put_contents(): Only 0 of 29 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 160
    Warning: file_put_contents(): Only 0 of 29 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 160
    Warning: file_put_contents(): Only 0 of 29 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 160
    Warning: file_put_contents(): Only 0 of 29 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 160
    Warning: file_put_contents(): Only 0 of 29 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 160
    Warning: file_put_contents(): Only 0 of 29 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 160
    Warning: file_put_contents(): Only 0 of 29 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 160
    Warning: file_put_contents(): Only 0 of 29 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 160
    Warning: file_put_contents(): Only 0 of 65 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 166
    Warning: file_put_contents(): Only 0 of 535 bytes written, possibly out of free disk space in /usr/local/emhttp/plugins/dynamix/scripts/diagnostics on line 210
    done.
    ZIP file '/boot/logs/tower-diagnostics-20210117-0922.zip' created.

The diagnostic file contains only empty files.

Checking for space:

    root@Tower:~# df -h
    Filesystem      Size  Used Avail Use% Mounted on
    rootfs          684M  684M     0 100% /
    tmpfs            32M  368K   32M   2% /run
    devtmpfs        684M     0  684M   0% /dev
    tmpfs           747M     0  747M   0% /dev/shm
    cgroup_root     8.0M     0  8.0M   0% /sys/fs/cgroup
    tmpfs           128M  140K  128M   1% /var/log
    /dev/sda       1003M  246M  758M  25% /boot
    /dev/loop0      9.2M  9.2M     0 100% /lib/modules
    /dev/loop1      7.3M  7.3M     0 100% /lib/firmware

Is my assumption correct that the docker is filling up the rootfs? That was the last thing I set up before this problem arose. How can I free up the space used by the docker?

I checked the stick on a Windows machine for filesystem issues, but all is fine. I only have TeamViewer access to the server. Safe boot and such things are not an option. I can ask the remote user to plug the stick into a Windows machine and modify its content, or I can do it via the console. I would prefer not to set up a new config, but rather remove the docker to free up space.
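As a rough aid for reading `df -h` output like the one above, the following sketch lists the filesystems that are completely full. The function name `full_filesystems` and the rule of skipping `/dev/loop*` entries are assumptions made for this illustration. The loop mounts here are read-only images that always report 100%, so they are treated as noise.

```python
def full_filesystems(df_output, threshold=100):
    """Return (mount point, filesystem, use%) for entries at or above threshold."""
    hits = []
    # Skip the header line, then split each row into df's six columns.
    for line in df_output.strip().splitlines()[1:]:
        parts = line.split(None, 5)
        if len(parts) != 6:
            continue
        filesystem, _size, _used, _avail, use, mount = parts
        try:
            percent = int(use.rstrip("%"))
        except ValueError:
            continue  # e.g. "-" for pseudo filesystems
        # Assumption: loop devices are read-only images and always show 100%.
        if percent >= threshold and not filesystem.startswith("/dev/loop"):
            hits.append((mount, filesystem, percent))
    return hits


SAMPLE = """\
Filesystem Size Used Avail Use% Mounted on
rootfs 684M 684M 0 100% /
tmpfs 128M 140K 128M 1% /var/log
/dev/sda 1003M 246M 758M 25% /boot
/dev/loop0 9.2M 9.2M 0 100% /lib/modules
"""

result = full_filesystems(SAMPLE)
```

With the sample taken from the post, only the rootfs mounted on `/` is reported. Finding out what actually occupies that space would be a separate step, for example running `du -xh --max-depth=1 /` over ssh to see which top-level directory holds the data.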

-

DiskSpeed, hdd/ssd benchmarking (unRAID 6+), version 2.10.8

Fireball3 replied to jbartlett's topic in Docker Containers

Is it true that solid state drives are always filled up as shown in the graphs posted? Will empty space always be shifted to the right side of the graph/drive, or will it look more like a sawtooth pattern on a well run-in drive where data may have been deleted in random areas of the drive?

DiskSpeed, hdd/ssd benchmarking (unRAID 6+), version 2.10.8

Fireball3 replied to jbartlett's topic in Docker Containers

Just to make sure I understand it right: is the flat line, which basically indicates the max. interface throughput, the trimmed (empty) space on the SSD?

Those are the features I personally need most. 🤤 Thanks for finally bringing this to life.

-

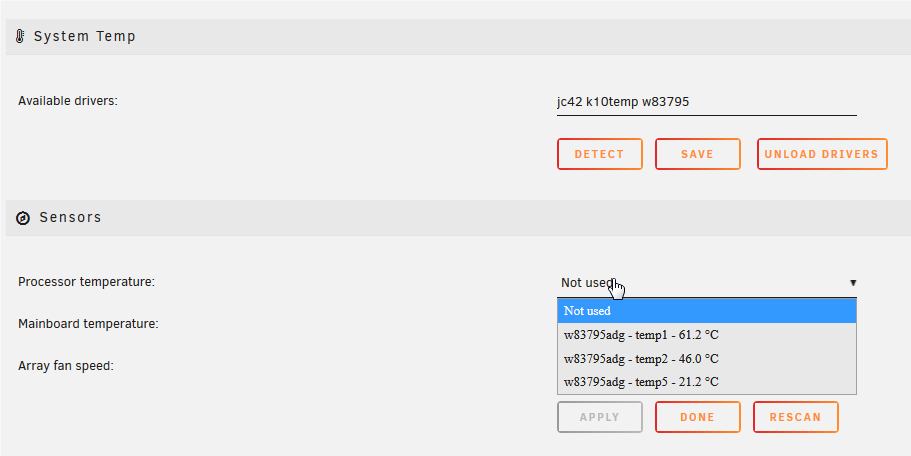

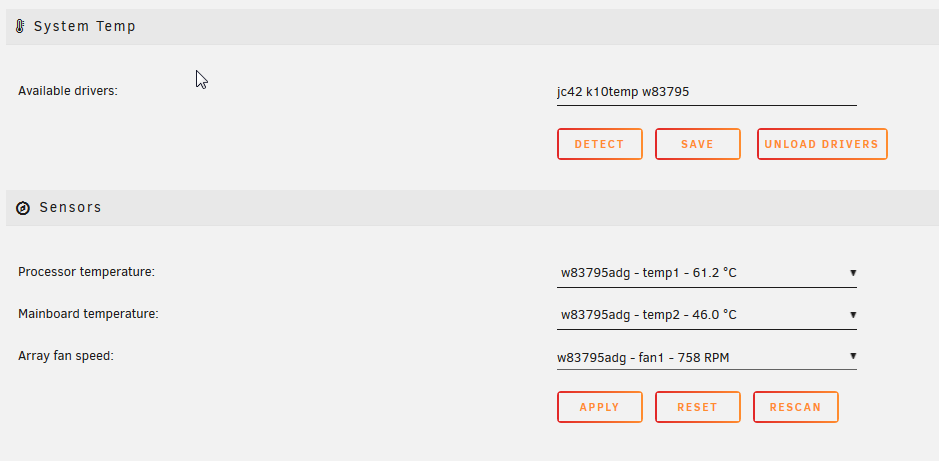

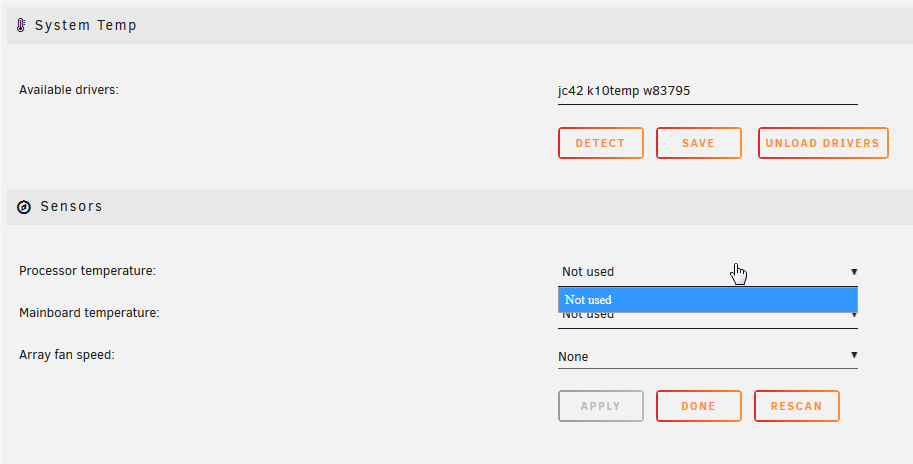

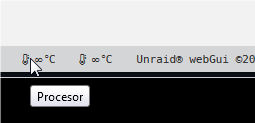

I can second this find. I'm playing with an HP NL54 microserver. After installing the plugin it detects the correct sensors.

Assignment of sensors

Pressing "APPLY"

Of course, no sensor data is displayed. After deleting /etc/sensors.d/sensors.conf I can repeat the procedure. If the file is not deleted or modified as shown below, there is no chance to get the selection back.

Initial sensors.conf:

    root@Tower:~# cat /etc/sensors.d/sensors.conf
    # sensors
    chip "k10temp-pci-00c3"
    label "temp1" "CPU Temp"
    chip "w83795adg-i2c-1-2f"
    label "temp2" "MB Temp"
    chip "w83795adg-i2c-1-2f"
    label "fan1" "Array Fan"

    root@Tower:/etc/sensors.d# sensors
    Error: File /etc/sensors.d/sensors.conf, line 4: Undeclared bus id referenced
    Error: File /etc/sensors.d/sensors.conf, line 6: Undeclared bus id referenced
    sensors_init: Can't parse bus name

I deleted the sensors.conf and rescanned. The sensors were back for selection in the GUI. Note that the K10 sensor disappeared... I pressed APPLY again and they were gone.

I changed the sensors.conf like this:

    root@Tower:/etc/sensors.d# cat /etc/sensors.d/sensors.conf
    # sensors
    chip "w83795adg-i2c-12f"
    label "temp1" "CPU Temp"
    chip "w83795adg-i2c-12f"
    label "temp2" "MB Temp"
    chip "w83795adg-i2c-12f"
    label "fan1" "Array Fan"

    root@Tower:/etc/sensors.d# sensors
    Error: File /etc/sensors.d/sensors.conf, line 2: Parse error in chip name
    Error: File /etc/sensors.d/sensors.conf, line 4: Parse error in chip name
    Error: File /etc/sensors.d/sensors.conf, line 6: Parse error in chip name

But the sensors reappear in the GUI. Of course there is no further benefit in having them back for selection as long as they can't be saved and the temps are not displayed as intended.

@bonienl Please let me know how I can contribute further to help debug this issue.

I also noticed this little spelling error in the mouseover.
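The "Undeclared bus id referenced" errors point at the two chip lines that name a numbered I2C bus (`i2c-1`). As far as I understand sensors.conf(5), a chip name with a concrete bus number needs a matching `bus` statement, while a wildcard such as `i2c-*` does not. The sketch below is only a hypothetical pre-check for a config file along those lines. The function name and the regular expressions are assumptions for this example and do not reproduce the libsensors parser or the plugin's behavior.

```python
import re

# bus "i2c-1" "adapter description"
BUS_RE = re.compile(r'^\s*bus\s+"(i2c-\d+)"')
# chip "name" ["name" ...]
CHIP_RE = re.compile(r'^\s*chip\s+(.*)$')
QUOTED_RE = re.compile(r'"([^"]+)"')
# A chip name ending in -i2c-<number>-<hex address>; wildcards do not match.
NUMBERED_I2C_RE = re.compile(r'-(i2c-\d+)-[0-9a-fA-F]+$')


def undeclared_buses(conf_text):
    """Return (line number, chip name) for chips using an undeclared I2C bus."""
    lines = conf_text.splitlines()
    # First pass: collect every bus that has a bus statement.
    declared = {m.group(1) for m in map(BUS_RE.match, lines) if m}
    problems = []
    # Second pass: check every chip name against the declared buses.
    for lineno, line in enumerate(lines, start=1):
        chip = CHIP_RE.match(line)
        if not chip:
            continue
        for name in QUOTED_RE.findall(chip.group(1)):
            bus = NUMBERED_I2C_RE.search(name)
            if bus and bus.group(1) not in declared:
                problems.append((lineno, name))
    return problems


INITIAL_CONF = """\
# sensors
chip "k10temp-pci-00c3"
label "temp1" "CPU Temp"
chip "w83795adg-i2c-1-2f"
label "temp2" "MB Temp"
chip "w83795adg-i2c-1-2f"
label "fan1" "Array Fan"
"""

found = undeclared_buses(INITIAL_CONF)
```

For the initial sensors.conf from the post this flags lines 4 and 6, the same lines the `sensors` command complains about. A chip name written with a wildcard bus, for example `w83795adg-i2c-*-2f`, would not be flagged by this check.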

-

LSI Controller FW updates IR/IT modes

Fireball3 replied to madburg's topic in Storage Devices and Controllers

Is the PCB itself genuine LSI or a rebrand? For genuine LSI cards you shouldn't need the workaround that the rebranded cards require. Have you tried the toolset I used for the Dell adapters? It should be linked in my signature. I'm posting this from my mobile, so I can't provide a link right now.