Everything posted by Jorgen

-

I'm seeing this too, have reported it to Binhex on GitHub and will patiently wait for a new image build. Could you post a new supervisord.log from the older version? Don't forget to redact user names and passwords first.

-

If you are using a proxy in the app settings of radarr/sonarr you can’t check from the container console whether they are using the VPN or not. The console works on an OS level in the container, and the OS is unaware of the proxy that the apps are using. I don’t know how to check that the app itself is actually using the VPN via the proxy, maybe someone else has a solution for that. Sent from my iPhone using Tapatalk

-

Glad you worked it out! It can be confusing with the two different methods of using the VPN tunnel, each one with its own quirks on how to set it up.

-

Wireguard is supported and has been for a while, but maybe I’m misunderstanding your question?

-

I ended up adding all of the download apps in the delugeVPN network, including NzbGet (the non-VPN version from binhex). I am seeing slower download speeds for nzbget this way, but at least it’s working and all apps can talk to each other again. Binhex is working on a secure solution to allow apps inside the VPN network to talk to apps outside it, so it might be possible to run NzbGet on the outside again in the future.

Your only other option is to configure sonarr/radarr to talk to NzbGet on the internal docker IP, e.g. 172.x.x.x, but beware that the actual IP is dynamic for each container and may change on restarts.

Edit: yeah jackett is of no use for NZBs, you need to set them up as separate indexers in radarr/sonarr. Which shouldn’t be a problem, both apps should talk to the internet freely over the VPN tunnel.

Edit2: sorry ignore the first bit of my reply, you’re using privoxy, not network binding. Sorry for the confusion.

-

I see, learn something new every day. This container is based on arch which seems to support firewalld, but that’s obviously up to binhex what to use. From all the effort he’s put into the iptables I’d hazard a guess that he’s not keen to change it anytime soon.

-

Just out of curiosity, what do you think it should use instead of iptables? They seem well suited to the task at hand of stopping any data leaking outside the VPN tunnel?

-

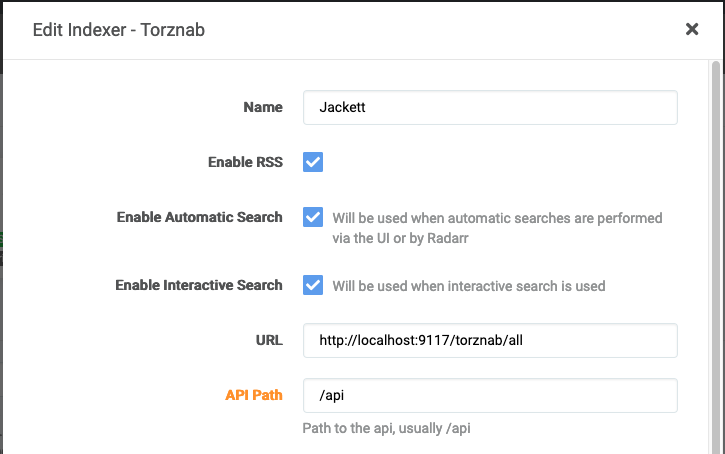

This doesn't answer your question directly, but in Sonarr/Radarr you can also set them up to use all your configured indexers in Jackett, see example from Radarr below. That way you only have to set up one indexer, once, in sonarr/radarr. If you add new indexers to jackett, they will be automatically included by the other apps. Doesn't work if you need different indexers for the different apps though...

-

I have the same problem, where everything works as expected (after adding ADDITIONAL PORTS and adjusting application settings to use localhost) except being able to connect to bridge containers from any of the containers with network binding to delugeVPN.

- Jackett, Radarr and Sonarr are all bound to the DelugeVPN network.
- Proxy/Privoxy is not used by any application.
- NzbGet is using the normal bridge network.
- I can access all application UIs and the VPN tunnel is up.
- Each application can communicate with the internet.

In Sonarr and Radarr:

- I can connect to all configured indexers, both Jackett (localhost) and nzbgeek directly (public dns name)
- I can connect to delugeVPN as a download client (using localhost)
- I CAN NOT connect to NzbGet as a download client using <unraidIP>:6790. Connection times out.
- I CAN connect to NzbGet using its docker bridge IP (172.x.x.x:6790)

It's my understanding that the docker bridge IP is dynamic and may change on container restart, so I don't really want to use that.

@binhex it seems like the new iptables tightening is preventing delugeVPN (and other containers sharing its network) from communicating with containers running on bridge network on the same host?

Here's a curl output from the DelugeVPN console to the same NzbGet container using unraid host IP (192.168.11.111) and docker network IP (172.17.0.3)

    sh-5.1# curl -v 192.168.11.111:6789
    * Trying 192.168.11.111:6789...
    * connect to 192.168.11.111 port 6789 failed: Connection timed out
    * Failed to connect to 192.168.11.111 port 6789: Connection timed out
    * Closing connection 0
    curl: (28) Failed to connect to 192.168.11.111 port 6789: Connection timed out
    sh-5.1# curl -v 172.17.0.3:6789
    * Trying 172.17.0.3:6789...
    * Connected to 172.17.0.3 (172.17.0.3) port 6789 (#0)
    > GET / HTTP/1.1
    > Host: 172.17.0.3:6789
    > User-Agent: curl/7.75.0
    > Accept: */*
    >
    * Mark bundle as not supporting multiuse
    < HTTP/1.1 401 Unauthorized
    < WWW-Authenticate: Basic realm="NZBGet"
    < Connection: close
    < Content-Type: text/plain
    < Content-Length: 0
    < Server: nzbget-21.0
    <
    * Closing connection 0
    sh-5.1#

Edit: retested after resetting nzbget port numbers back to defaults.

Raised issue on github: https://github.com/binhex/arch-delugevpn/issues/258
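If you want to repeat the same reachability test without curl, here is a minimal Python sketch of a plain TCP connect check. The function name `can_connect` is just something I made up for illustration, and the 5 second timeout is an arbitrary assumption. It only tells you whether the port accepts a connection, nothing about authentication.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout.

    Hypothetical helper for illustration; it mirrors what the curl test
    checks at the TCP level (connect succeeds vs. times out or is refused).
    """
    try:
        # create_connection raises OSError (including timeouts) on failure
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

Run from the DelugeVPN container console, `can_connect("192.168.11.111", 6789)` and `can_connect("172.17.0.3", 6789)` would correspond to the two curl attempts above.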

-

No. You can use privoxy from any other docker or computer on your network by simply configuring the proxy settings of the application/computer to point to the privoxy address:port. For example, you would do this under settings in the Radarr Web UI. Or in the Firefox proxy settings on your normal PC.

But for dockers running on your unraid server, like radarr/sonarr/lidarr, there is an alternative way. You can make the other docker use the same network as deluge, by adding the net=container... into the extra parameters. It has some benefits in that you are guaranteed that all docker application traffic goes via the VPN. When using privoxy, only http traffic is routed via the VPN, and only if the application itself has implemented the proxy function properly.

But doing it the net=container way, you shouldn't also use the proxy function in the application itself. So one or the other, depending on your use case and needs, but not both.
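To illustrate what "pointing an application at the privoxy address:port" means in practice, here is a rough Python sketch that builds one HTTP client that goes through the proxy and one that goes direct, so you can compare the public IP each one reports. All names here are my own for illustration, and the IP echo URL is something you would have to supply yourself (any service that returns your public IP as plain text). Treat it as a sketch, not a tested tool.

```python
import urllib.request


def make_proxy_opener(proxy_host: str, proxy_port: int) -> urllib.request.OpenerDirector:
    """Build an opener that sends http/https requests via the given proxy."""
    proxy_url = f"http://{proxy_host}:{proxy_port}"
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


def make_direct_opener() -> urllib.request.OpenerDirector:
    """Build an opener that ignores any proxy set in the environment."""
    return urllib.request.build_opener(urllib.request.ProxyHandler({}))


def fetch_public_ip(opener: urllib.request.OpenerDirector, echo_url: str,
                    timeout: float = 10.0) -> str:
    """Ask an IP echo service (URL supplied by the caller) for the public IP."""
    with opener.open(echo_url, timeout=timeout) as response:
        return response.read().decode().strip()


def goes_via_different_ip(direct_ip: str, proxied_ip: str) -> bool:
    """True if the proxied request left from a different public IP."""
    return direct_ip != proxied_ip
```

You would call `fetch_public_ip` once with each opener and compare the results. If the two IPs differ, the proxied request is most likely leaving via the VPN tunnel. This only proves something about this script, not about whether Radarr or Sonarr honour their own proxy setting.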

-

I'm not familiar with PrivateVPN, so not sure how much help I can offer on this. Have you considered using PIA instead? Either way, I think we need to see more detailed logs at this point, can you follow this guide and post the results please? Remember to redact your user name and password from the logs before posting! https://github.com/binhex/documentation/blob/master/docker/faq/help.md

-

This is almost certainly the main problem. If surfshark doesn't support port forwarding your speeds will be slow, sorry. Maybe someone else is using surfshark and can confirm if they have managed to get good speeds despite this?

Strict_port_forward is only used with port forwarding so you might as well leave it at "no".

It has definitely helped others, so it's worth a shot. But you really are fighting an uphill battle without port forwarding.

So this is worth pursuing for sure, due to the error you're seeing. The defaults include PIA name servers, which I think have been deprecated by now. There are also other considerations, i.e. don't use google, see Notes at the bottom of this page: https://github.com/binhex/arch-delugevpn

Try replacing the name servers with this: 84.200.69.80,37.235.1.174,1.1.1.1,37.235.1.177,84.200.70.40,1.0.0.1

If the error persists, it's likely something wrong with your DNS settings for unraid itself.

No idea about this one, sorry
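A stray space or typo in that comma-separated list is easy to miss, so here is a small Python sketch that splits the value and checks that every entry is a valid IP address. The function name is made up for illustration, and I'm assuming the value is pasted exactly as it appears in the container's name server setting.

```python
import ipaddress
from typing import List


def parse_name_servers(value: str) -> List[str]:
    """Split a comma-separated name server list and validate each entry.

    Hypothetical helper: raises ValueError on the first entry that is not
    a valid IPv4/IPv6 address, otherwise returns the cleaned list.
    """
    servers = []
    for item in value.split(","):
        item = item.strip()
        if not item:
            continue  # tolerate a trailing comma
        ipaddress.ip_address(item)  # raises ValueError if malformed
        servers.append(item)
    return servers
```

Calling it with the list above should give back six addresses. Anything malformed raises a `ValueError` naming the bad entry.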

-

If you haven't done so already, work through all the suggestions under Q6 here: https://github.com/binhex/documentation/blob/master/docker/faq/vpn.md

-

Ok, I'm really guessing here, @binhex will need to chime in with the real answer, but I think you need to:

1. Remove the ipv6 reference
2. Remove the DNS entry (maybe, it might also be ignored already. Either way it would be better to move it to the DNS settings of the docker)
3. Add the wireguardup and down scripts
4. Ensure the endpoint address is correct. "pvdata.host" does not resolve to a public IP for me and I'm pretty sure it needs to for wireguard to be able to connect to the endpoint and the tunnel to be established.
5. Try removing the /16 suffix from the Address line

So apart from #4, try the below as wg0.conf. Although I'm pretty sure it will fail still because of #4.

    [Interface]
    PrivateKey = fffff
    Address = 10.34.0.134
    PostUp = '/root/wireguardup.sh'
    PostDown = '/root/wireguarddown.sh'

    [Peer]
    PublicKey = fffff
    AllowedIPs = 0.0.0.0/0
    Endpoint = pvdata.host:3389
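For the endpoint point above, this is roughly how you could check whether an Endpoint value resolves to a public IP. It's a Python sketch with function names I invented for illustration. It assumes a plain host:port endpoint (no bracketed IPv6 literal) and uses whatever DNS the machine you run it on is configured with, which may differ from what the container sees.

```python
import ipaddress
import socket
from typing import List, Tuple


def split_endpoint(endpoint: str) -> Tuple[str, int]:
    """Split 'host:port' into its parts (assumes no bracketed IPv6 literal)."""
    host, _, port = endpoint.rpartition(":")
    if not host or not port.isdigit():
        raise ValueError(f"expected host:port, got {endpoint!r}")
    return host, int(port)


def is_public_ip(address: str) -> bool:
    """True if the address is globally routable (not private/reserved)."""
    return ipaddress.ip_address(address).is_global


def resolve_public_ips(endpoint: str) -> List[str]:
    """Resolve the endpoint host and keep only globally routable addresses.

    Returns an empty list if the name does not resolve at all.
    """
    host, port = split_endpoint(endpoint)
    try:
        infos = socket.getaddrinfo(host, port, type=socket.SOCK_DGRAM)
    except socket.gaierror:
        return []
    addresses = {info[4][0] for info in infos}
    return sorted(a for a in addresses if is_public_ip(a))
```

An empty result from `resolve_public_ips("pvdata.host:3389")` would match what I'm seeing, i.e. no public IP for wireguard to connect to.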

-

@Nimrad this is what the wg0.conf file looks like when using PIA. I assume it needs to have the same info when using other VPN providers as well. The error in your log specifically states that the Endpoint line is missing from your .conf file, but I don't know what it needs to be set to for your provider/endpoint.

    [Interface]
    Address = 10.5.218.196
    PrivateKey = <redacted>
    PostUp = '/root/wireguardup.sh'
    PostDown = '/root/wireguarddown.sh'

    [Peer]
    PublicKey = <redacted>
    AllowedIPs = 0.0.0.0/0
    Endpoint = au-sydney.privacy.network:1337
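If you want to sanity check your own file against the PIA layout, here is a small Python sketch that reports which keys are missing. The list of "required" keys is my assumption based on the PIA example, not something taken from the container's scripts. It also assumes a single [Peer] section, since Python's configparser rejects duplicate section names by default.

```python
import configparser
from typing import List

# Assumption: keys present in the PIA-generated file are the ones needed.
REQUIRED_KEYS = {
    "Interface": ("Address", "PrivateKey"),
    "Peer": ("PublicKey", "AllowedIPs", "Endpoint"),
}


def missing_wireguard_keys(conf_text: str) -> List[str]:
    """Return 'Section.Key' entries absent from the given wg0.conf text."""
    parser = configparser.ConfigParser(interpolation=None, delimiters=("=",))
    parser.read_string(conf_text)
    missing = []
    for section, keys in REQUIRED_KEYS.items():
        for key in keys:
            # has_option is case-insensitive for keys and returns False
            # when the section itself does not exist
            if not parser.has_option(section, key):
                missing.append(f"{section}.{key}")
    return missing
```

A file without the Endpoint line would come back as `["Peer.Endpoint"]`, which lines up with the error in the log.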

-

Can you post your wg0.conf file (but redact any sensitive info)? Which VPN provider are you using?

-

[Support] Linuxserver.io - Unifi-Controller

Jorgen replied to linuxserver.io's topic in Docker Containers

It should be Bridge network, and you have already found the correct application setting to fix AP auto-adoption when running inside a Docker container (in Bridge mode). Set Controller Hostname/IP to the IP of your Unraid server, then tick the box next to "Override inform host with controller hostname/IP". See https://github.com/linuxserver/docker-unifi-controller/#application-setup for more details, especially this:

-

[Support] SpaceinvaderOne - Macinabox

Jorgen replied to SpaceInvaderOne's topic in Docker Containers

Thanks for this, just confirming that method 2 pulls down the correct installer for me as well.

-

[Support] SpaceinvaderOne - Macinabox

Jorgen replied to SpaceInvaderOne's topic in Docker Containers

@SpaceInvaderOne I just pulled down your latest container, and when trying to install Big Sur it actually runs the Catalina installer. I've deleted everything and started over a few times (with unraid reboot in between for good measure) with the same result, is there a bug in the latest image? The installer is correctly named BigSur-install.img but when you run it from the new VM it is definitely trying to install Catalina:

-

Possibly, not sure how you would find out though. Can you ask the tracker?

-

I don’t think you can, sorry. The port is randomly given out by PIA from its pool for the particular server you are connecting to. The container uses a script that asks for a new (random) port on each reconnection to the VPN server.

-

Using iCloud is the way to go in my experience. Using the same library from two different macs is not safe. Apple recommends against it and there are many reports of corruption from users doing it. An alternative would be to share the library from within Photos on the VM. Other macs can then access it in read-only mode on the same network.

-

For backups yes, if you have enough space on your Mac to store the full library in the first place (I don’t). There’s also a live database in the folder structure so syncing that without corruption will need consideration. Probably easier to use TimeMachine instead of a custom script as Apple have already worked it out for you.

-

[Support] SpaceinvaderOne - Macinabox

Jorgen replied to SpaceInvaderOne's topic in Docker Containers

Should cut down on the support requests in this thread... Just out of interest, how did you manage to do this? I thought this was a limitation in unraid’s VM implementation?

-

My MediaCover directory is in appdata on my cache disk, not inside the docker.img. As far as I know I haven't done anything special to put it there, wasn't even aware of it until I saw your post. It should be inside /config from the container perspective, which should be mapped to your appdata share.