-

Posts

40 -

Everything posted by prongATO

-

How is a server grade motherboard better?

prongATO replied to FlyingTexan's topic in Motherboards and CPUs

Integrated lights-out management (IPMI), server-level support, and the ability to run Xeon or Core processors. -

I had some of the exact same errors when trying to use the recommended Unassigned Devices preclear plugin. My recommendation is to use the Binhex preclear Docker instead. Just know that the listed CLI examples aren't correct: when you launch the http interface for the preclear Docker, the script is preclear_binhex.sh, NOT preclear_disk.sh. For example:

preclear_binhex.sh -l (to list the eligible drives)

preclear_binhex.sh -A /dev/sdX (with X being the corresponding letter you see in your list)

Also, the old-school way isn't terrible. SSH to your server, start a screen session, run the old, rock-solid preclear_disk.sh script, and leave the screen session running. I hope this helps as we transition away from the preclear plug-in.
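The old-school screen routine could be sketched roughly like this. It is a sketch under assumptions, not a tested procedure: it assumes screen is installed and preclear_disk.sh is on the PATH, and the session name and the run and preclear_in_screen helpers are made up for illustration. It defaults to a dry run that only prints the command.

```shell
#!/bin/bash
# Sketch only: wraps the "screen + preclear_disk.sh" routine from the post.
# DRY_RUN=1 (the default here) prints the command instead of running it.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# preclear_in_screen DISK SESSION
# SESSION is a hypothetical label; any name works.
preclear_in_screen() {
    local disk="$1" session="$2"
    case "$disk" in
        /dev/sd?*) ;;   # crude sanity check on the device path
        *) echo "refusing: '$disk' does not look like /dev/sdX" >&2; return 1 ;;
    esac
    # Start a named screen session with the script running inside it.
    run screen -S "$session" preclear_disk.sh "$disk"
}

# Example (dry run): preclear_in_screen /dev/sdX preclear_sdX
# Detach with Ctrl-a d; reattach later with: screen -r preclear_sdX
```

Set DRY_RUN=0 only after double-checking the device letter against your drive list, since a preclear wipes the disk.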

-

6.9.2 RC2 "Read SMART" spins up the same 2 disks throughout the day

prongATO commented on cholzer's report in Prereleases

I actually think I tracked down my problem, and it wasn't an UnRaid issue. After I posted, I started looking at every device on my network linked to my server. After I re-enabled mover logging, I saw my router was writing information quite frequently. I just updated my router with the latest Advanced Tomato firmware, and I had previously configured it to write IP data usage over CIFS to my backups share on the server. Lo and behold, the interval was 1 hour. Now that data usage tracking is working properly in Advanced Tomato, I don't need to do it anyway. It doesn't explain why the entire array spun up every hour (because backups is limited to one specific disk in the array), but now it's only happening on a few drives and, in my best estimation, it's functioning properly now. My tip to anyone else having similar issues is to double-check every device that might read/write to a share on the server and be as detailed as possible. I also just configured a separate share for Time Machine backups from the Mac on my network, so I expect to see, in my case, Disk 9 spun up quite a bit. mrpunrfsx1-diagnostics-20220211-0206.zip -

6.9.2 RC2 "Read SMART" spins up the same 2 disks throughout the day

prongATO commented on cholzer's report in Prereleases

I'm also having a similar issue. I have my spin-down set to 15 minutes, and every hour all 15 disks spin up to read SMART values. My logs show that over and over all day. I'm looking into things on my side to track down anything that could be contributing to the issue, but it's a new-ish thing. I just had to add another drive to the array because I was almost out of space, so I've been looking a little more closely. If there's another place I should be posting, let me know and I'll provide logs, etc. -

Someone may want to post a sticky somewhere telling people to update NerdPack and/or python if their speedtest-cli is broken after updating to unRAID 6.7. It's a simple fix, and it's always good practice to update/disable plugins when upgrading the OS, but I figure there will be a lot of people asking when they figure out their scheduled speedtest is broken.

-

It takes a bit to start building up numbers. Did you ever have to change permissions on vnstat.pid so that the running user/group can write to it? I want to make sure that the developer has as much feedback as possible for troubleshooting.

-

Let's see if it works for MMW first. I just have a hunch because I gave the user/group that runs the plugin write access to the .pid file and the next time I installed the plugin it worked perfectly after previously giving the same error.

-

Just a thought: by default, at least on my system, the file vnstat.pid that is reported as unavailable is owned by root/root. If the process is trying to replace that file, then it would need elevated permissions. Try chmod +w vnstat.pid in /var/run/
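A small sketch of that check, in case it helps: it shows the owner and mode of a file, adds write permission, and shows the result. The show_perms and grant_write helper names are made up for illustration, and it assumes GNU stat as found on Linux. One deliberate difference from the command above: a bare chmod +w is filtered by the umask, so the sketch names user and group explicitly. Whether the plugin's user is actually in the file's group is not something this checks.

```shell
#!/bin/bash
# Sketch only: show who owns a pidfile and add write permission for user and group.
# Assumes GNU stat (stat -c), as found on Linux systems.

show_perms() {
    stat -c '%U:%G %a %n' "$1"
}

# grant_write FILE
grant_write() {
    local f="$1"
    [ -e "$f" ] || { echo "no such file: $f" >&2; return 1; }
    show_perms "$f"        # before
    # "chmod +w" with no target is filtered by the umask, so name user and group explicitly.
    chmod ug+w "$f"
    show_perms "$f"        # after
}

# Example: grant_write /var/run/vnstat.pid
```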

-

Thus it may need the write permission I wrote about.

-

I know the version didn't work but I listed the steps that worked for me. If they don't work following each step then I have no idea. It worked for me in that specific order. I just did them less than 45 minutes ago.

-

Sure. Uninstall the .plg file via the web interface under PLUGINS. Reinstall it via the INSTALL PLUGIN tab under PLUGINS (via direct http link) from here: "https://raw.githubusercontent.com/dorgan/Unraid-networkstats/master/networkstats.plg" Check the PLUGINS tab again and it will show there's an update for the app; go ahead and update it. If that doesn't work, I did one more thing you can try: give the group/user that runs this app write access to the vnstat.pid file in /var/run

-

I initially installed it via the Apps tab option. I then uninstalled it and reinstalled using one of the direct links posted here. While I was reading this thread, I went back to the Plugins tab and it showed an update, so I updated it, and it's now working flawlessly. If the remove and reinstall doesn't work, I can give you an exact step-by-step on how I got it working.

-

I installed via the http link to the .plg instead of through Apps, then updated the plugin via the menu system. I can verify it's now working for me also. Previously it was throwing the following error: Error: pidfile "/var/run/vnstat.pid" lock failed (Resource temporarily unavailable), exiting.
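One possible reading of that error, offered as a guess rather than a diagnosis: "Resource temporarily unavailable" on a lock usually means another process already holds it, so it may be worth checking whether the PID recorded in the pidfile is still alive. The sketch below assumes a Linux /proc filesystem and that the pidfile contains a plain PID; the helper name is made up for illustration.

```shell
#!/bin/bash
# Sketch only: report whether the PID stored in a pidfile is still running.
# Assumes Linux (/proc) and a pidfile that contains just a process ID.

# pidfile_owner_alive FILE
# returns 0 if the PID is running, 1 if not, 2 if the file is unusable
pidfile_owner_alive() {
    local pidfile="$1" pid
    [ -r "$pidfile" ] || { echo "cannot read $pidfile" >&2; return 2; }
    pid="$(tr -dc '0-9' < "$pidfile")"     # keep digits only
    [ -n "$pid" ] || { echo "no PID in $pidfile" >&2; return 2; }
    if [ -d "/proc/$pid" ]; then
        echo "PID $pid is still running"
        return 0
    fi
    echo "PID $pid is not running (stale pidfile?)"
    return 1
}

# Example: pidfile_owner_alive /var/run/vnstat.pid
```

If the recorded PID is still running, a second copy of the daemon would be expected to fail on the lock regardless of file permissions.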

-

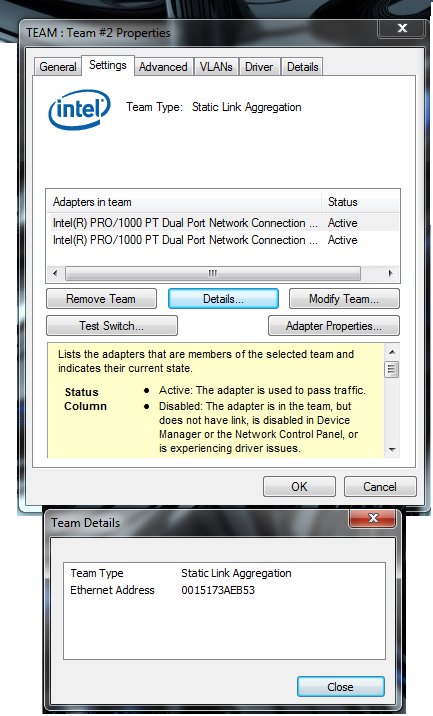

How to setup link aggregation?

prongATO replied to JimPhreak's topic in General Support (V5 and Older)

I realize this is an old thread, but in response to the last statement: http://www.intel.com/support/network/sb/cs-009747.htm The Windows driver I'm using has an option for static link aggregation and another for dynamic link aggregation. I'm having the same problems with Dynamix also: spotty connections, and I can ping the server but the web interface won't load (thank God I got a Supermicro board with OOB IPMI). Did anyone ever come up with a solution for the unraid side of RR? -

How many community plugins do you use with your unRAID array?

prongATO replied to harmser's topic in Unraid Polls

Dynamix Webgui, Active streams, Cache Dirs, Disk health, Email notify, System info, System stats, System temp, Plugin control, Unplugged, Sabnzbd, Sickbeard, Couchpotato, Headphones, Apcupsd, Powerdown, Screen -

"SimpleFeatures" Plugin - Version 1.0.11

prongATO replied to speeding_ant's topic in User Customizations

Just for sh/!s and giggles I tried this with my Gigabyte board and it worked perfectly. The temperatures seem to be spot on. MB reads 25 C and CPU reads 41 C. Drives vary between 27 C and 32 C.

Btw, the new WD Red drives seem to run consistently 3 to 4 degrees cooler than Caviar Green drives in the same cages; definitely worth the small price increase to step up to a quasi-enterprise drive product. I'm in the process of switching out all my old 2TB Greens for 3TB Reds. WD has been STELLAR with their customer support and replacement of defective drives. I've only had two failures in 2 years (both Greens) and they were replaced no problem (my server runs 24/7). Please refer to my signature for details of my setup. In addition, I've been invited to and qualified for WD beta testing of their new WD Black hybrid 3TB drives (SSD/platter). Should be getting my first test drive soon.

In summation, thank you very much for your detailed instructions to get the temperature sensors working with the new SimpleFeatures release. I really appreciate your sharing your expertise and your willingness to help others get their sensors working. Now if we can just get this pissing contest between preclear and SimpleFeatures figured out, things will be SO much more user friendly. No more having to ssh to the unraid server, invoke a screen instance, start the preclear, and keep the antiquated unmenu around to check progress of the preclear running in the screen instance. -

"SimpleFeatures" Plugin - Version 1.0.11

prongATO replied to speeding_ant's topic in User Customizations

Keep up the good work on SimpleFeatures. It has been an amazing transformation in user-experience from the old unmenu interface. Plugins are simple and easy to use, you can stop the array without having to stop every running process (SAB, SB, CP) and things are just cleaner and more streamlined all the way around. I'm VERY sad to see the issues with the preclear script not being included in the latest release. It all seems pretty petty to me. Hope you can all work out your differences, however small they may be. -

"SimpleFeatures" Plugin - Version 1.0.11

prongATO replied to speeding_ant's topic in User Customizations

Thank you -

"SimpleFeatures" Plugin - Version 1.0.11

prongATO replied to speeding_ant's topic in User Customizations

Could someone please explain the feature "Enable background polling for spun-down disks"? I've searched the forums with no luck. What impact does it have on the drives? What information does it provide? Does it just run periodic SMART tests when the disks are spun down? Thanks in advance -

[SOLVED] Replacing smaller drive with a larger drive

prongATO replied to prongATO's topic in General Support (V5 and Older)

I went ahead and hit the rebuild button. For some reason I was thinking when you started the array that it would rebuild it automatically. Now it says data rebuild in progress and there are a lot of writes to the new drive. Thank you! I know this probably sounded like a rookie question but I just wanted to be safe. Again, thank you! -

[SOLVED] Replacing smaller drive with a larger drive

prongATO replied to prongATO's topic in General Support (V5 and Older)

I think I was mistaken and read about a "restore" button not a rebuild button. Since I have the old drive with all the data on it, I feel like I am a little safer. -

[SOLVED] Replacing smaller drive with a larger drive

prongATO replied to prongATO's topic in General Support (V5 and Older)

I see a rebuild button, but if I understand what I have read correctly, that will re-write parity and destroy the parity information for the data that was housed on the 1.5 TB drive. -

[SOLVED] Replacing smaller drive with a larger drive

prongATO replied to prongATO's topic in General Support (V5 and Older)

I'm running 5.0-rc8a btw. -

I replaced a 1.5TB drive with a 3TB drive in my array (disk5). I think I made a terrible mistake by warm swapping the drives instead of shutting the server down. I have istarUSA cages, so I figured I could warm swap. I had previously pre-cleared the 3TB drive. I stopped the array, removed disk5 (1.5TB), and replaced it with the 3TB drive. I assigned the new drive under the configuration panel and hit "start array". I expected unraid to go ahead and rebuild the data on the new drive, but instead the drive has very few writes (~7000) after 8 hours and had actually spun down. It's listed on the status page with an orange/yellow ball, and the status says that the data is invalid. Is there any way I can re-do this procedure to get it to rebuild all the data to the new drive? I still have the old drive with all the data intact. I've had to replace a failed disk before and followed this procedure with success, but that time I actually shut down the server. I'm not sure if that is what caused these problems or not. Any help would be GREATLY appreciated.