
Everything posted by Mihle

-

If I currently have a Basic licence, will I be able to upgrade to Plus after the change has happened, or would I be forced to stay on Basic or upgrade all the way to Pro?

-

[Guide] Fixing Nextcloud Uploads Filling up Docker Image

Mihle replied to Progeny42's topic in Docker Containers

So I did this with Nextcloud (Linuxserver) /tmp (not SWAG) a while ago by remapping it to a folder inside Nextcloud's own appdata folder. It worked fine at the time. But after a container/Nextcloud (v28) update, I started having issues with the Photos app: viewing photos in Nextcloud made Nextcloud stop responding, with few logs, until I restarted my NAS, and then the Docker service had to be turned on again manually. After a lot of testing, I figured out that removing the path remapping fixed the freezing. But now I am back to /tmp being in the Docker image. Has anyone heard about this before and knows how to fix it or work around it? What about remapping a folder could cause problems with Nextcloud?

-

So, a question: I am on Unraid 6.12 and haven't updated to the new one yet because I didn't get around to it, but I am having problems and want to roll back appdata. Will the old version still work, even if it is not supported anymore?

-

I am no longer getting that warning, but Nextcloud stops responding every time the Photos app in Nextcloud tries to generate previews of photos. Nothing helps except restarting the whole NAS. I have tried to find logs about it everywhere (nextcloud.log, docker.log, other logs, the Unraid syslog), but there are no logs that tell me anything about what is going on. At this point I think my only option is to try to roll back to an earlier appdata version.

-

Lately I have gotten this error now and then: Allowed memory size of 536870912 bytes exhausted (tried to allocate 608580088 bytes) at /app/www/public/lib/private/Files/Storage/Local.php#327. How do I fix that? How do I increase that limit to something higher? How do I find the file at the path the error mentions? I have tried looking for it in appdata but haven't found it yet. Is it somewhere else?
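For reference, 536870912 bytes is 512 × 1024 × 1024, i.e. exactly 512 MiB, so the error means a single request for about 580 MiB (608580088 bytes) was refused by a 512 MiB PHP memory_limit. The path /app/www/public/... is a path inside the container, not a path in appdata. A minimal sketch for raising the limit, assuming the Linuxserver Nextcloud image reads PHP overrides from /config/php/php-local.ini (that file location, its host path and the 1024M value are all assumptions to check against your setup):

```shell
#!/bin/bash
# Sketch: set or replace memory_limit in a PHP override ini file.
# Assumption: the Linuxserver Nextcloud image reads /config/php/php-local.ini,
# which would live under the container's appdata folder on the host.

# set_memory_limit FILE VALUE
# Replaces an existing (uncommented) memory_limit line, or appends one.
set_memory_limit() {
  local file="$1" value="$2"
  touch "$file"
  if grep -q '^[[:space:]]*memory_limit[[:space:]]*=' "$file"; then
    sed -i "s|^[[:space:]]*memory_limit[[:space:]]*=.*|memory_limit = ${value}|" "$file"
  else
    printf 'memory_limit = %s\n' "$value" >> "$file"
  fi
}
```

Usage would be something like set_memory_limit /mnt/user/appdata/nextcloud/php/php-local.ini 1024M (hypothetical host path) followed by a restart of the Nextcloud container so PHP picks up the new value.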

-

After another update, it has indeed fixed itself.

-

I just had an issue where Jellyfin on a custom network would not start, with an error that the port was already in use. After a lot of trying different things, I figured out that it was conflicting with itself. This started after some update. In the configuration, it had both a field named "Webui - HTTPS:" and one named "WebUI (HTTPS) [optional]:", and both were set to 8920. I changed the latter to something else, and it then started up fine. Is this an issue with it being an older Docker container that has been updated, since the two fields have basically the same name, or is it an issue with the Docker container itself? EDIT: I just noticed my Docker container is not the Linuxserver one, oops.

-

I just updated (27.0.1, the latest Docker image I think), and I am getting the same errors. Should I delete them manually, do something else, or just wait?

-

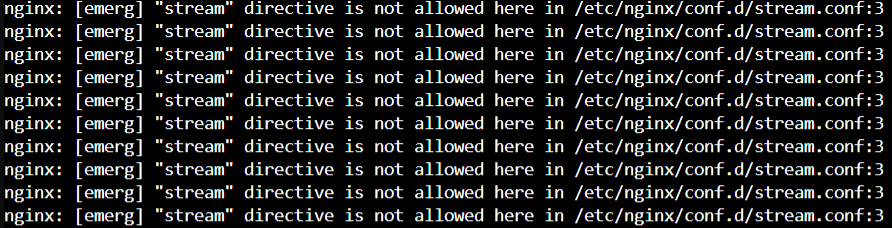

Same, only required to do ot with nginx.conf tho, not ssl.conf. For reference for other people, is is how the warning looks like in Swag log: I shut swag down and replaced the .sample with .conf and its working again
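The swap described above can be sketched as two shell functions: one that only does the renaming (keep the old nginx.conf as nginx.conf.old, then rename nginx.conf.sample to nginx.conf) and one that wraps it in stopping and starting the container. The container name swag and the host path /mnt/user/appdata/swag/nginx are assumptions; adjust them to your setup.

```shell
#!/bin/bash
# Sketch of the SWAG nginx.conf fix.
# Assumptions: container named "swag", appdata at /mnt/user/appdata/swag.

# swap_nginx_conf DIR: keep nginx.conf as nginx.conf.old, promote the .sample.
swap_nginx_conf() {
  local dir="$1"
  if [[ ! -f "$dir/nginx.conf.sample" ]]; then
    echo "No nginx.conf.sample in $dir, nothing to do" >&2
    return 1
  fi
  # An existing nginx.conf.old would be overwritten here.
  mv "$dir/nginx.conf" "$dir/nginx.conf.old" &&
    mv "$dir/nginx.conf.sample" "$dir/nginx.conf"
}

# fix_swag [CONTAINER] [DIR]: stop the container, swap the files, start it again.
fix_swag() {
  local container="${1:-swag}"
  local dir="${2:-/mnt/user/appdata/swag/nginx}"
  docker stop "$container" || return 1
  swap_nginx_conf "$dir"
  local rc=$?
  # Start SWAG again even if the swap failed, so the proxy is not left down.
  docker start "$container"
  return "$rc"
}
```

Sourcing the file and running fix_swag (or fix_swag swag /mnt/user/appdata/swag/nginx to be explicit) would do the stop, rename and start in one go; the old file stays available as nginx.conf.old in case anything custom needs to be copied back.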

-

Swag update broke reverse proxy and publishing of nextcloud. :(

Mihle replied to PilotReelMedia's topic in Docker Engine

I only did the nginx.conf and did not touch ssl.conf, and it worked. Thanks!

-

I figured it out: it's not an issue with MariaDB at all. The issue is with SWAG.

1. Stop the SWAG container (I don't know if this is required, but it seems like good practice).
2. Go into appdata/swag/nginx.
3. Rename nginx.conf, for example by adding .old (so you still have it, just in case).
4. Rename nginx.conf.sample to just nginx.conf.
5. Start the SWAG container.
6. Profit.

EDIT: Found this thread after the fact just now:

-

My mistake, the command is what you say there; it was an error when copying it over. But yes, it is encrypted. $DEVICE does work for unmount, though, so it's weird that it doesn't for spindown while using sde works. When using sde, it says /dev/sde and not /dev/mapper/mountpoint, even though it's supposed to be an encrypted disk? EDIT: $LUKS works for spindown. Why is it better to use the disk alias?

-

I have a problem. In the device script I have this:

    'REMOVE' )
      # Spinning down disk
      logger Spinning disk down -t$PROG_NAME
      /usr/local/sbin/rc.unassigned spindown $DEVICE

But the disk does not spin down. It does send a notification I have after that part, and the system log says:

    Dec 23 21:45:36 NAS OfflineBackup: Spinning disk down

But if I replace "$DEVICE" with "sde", it does spin down, and the system log says:

    Dec 23 21:50:03 NAS OfflineBackup: Spinning disk down
    Dec 23 21:50:03 NAS emhttpd: spinning down /dev/sde

Why doesn't "$DEVICE" work? It's probably not ideal to hard-code "sde" in that script?
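Based on what this thread found (for an encrypted disk, $DEVICE points at the /dev/mapper/... device, while $LUKS points at the underlying disk device and works for spindown), a device script can prefer $LUKS when it is set and fall back to $DEVICE otherwise. This is a minimal sketch under that assumption, not the plugin's official script; the helper name spindown_target is made up for illustration.

```shell
#!/bin/bash
# Sketch of an Unassigned Devices device script that spins the disk down on REMOVE.
# Assumption (from this thread): for encrypted disks $DEVICE is the /dev/mapper/...
# device and $LUKS is the underlying /dev/sdX device, so $LUKS is preferred.

# spindown_target (hypothetical helper): print the device to pass to spindown.
spindown_target() {
  if [[ -n "${LUKS:-}" ]]; then
    printf '%s\n' "$LUKS"
  else
    printf '%s\n' "$DEVICE"
  fi
}

case "${ACTION:-}" in
  'REMOVE' )
    # Spinning down disk
    logger -t "$PROG_NAME" "Spinning disk down"
    /usr/local/sbin/rc.unassigned spindown "$(spindown_target)"
    ;;
esac
```

The same script then works for both encrypted and unencrypted disks without hard-coding sde, which can change between boots or when other drives are plugged in.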

-

Sorry for the necro, but I have a question: I have the case with stock fans, but I don't yet know if it's worth changing them to lower HDD temperatures. The question is: do you HAVE to remove the dust filter when you change the front fan, or is that just something you choose to do?

-

Unassigned disks don't have any user shares anyway.

-

The standard config in ddclient doesn't have use=web in the Cloudflare section. After struggling to figure it out (the ddclient log only said something about a missing IP), I came across this, and adding that seemed to make it work. I had an easier time setting it up for Namecheap, which I used before, than for Cloudflare.

-

Trying to make a script I can run that backs stuff up to an Unassigned Devices HDD:

    #!/bin/bash
    #Destination of the backup:
    destination="/mnt/disks/OfflineBackup"
    #Name of Backup disk
    drive=dev1
    ########################################################################
    #Backup with docker containers stopped:
    #Stop Docker Containers
    echo 'Stopping Docker Containers'
    docker stop $(docker ps -a -q)
    #Backup Docker Appdata via rsync
    echo 'Making Backup of Appdata'
    rsync -avr --delete "/mnt/user/appdata" $destination
    #Backup Flash drive backup share via rsync
    echo 'Making Backup of Flash drive'
    rsync -avr --delete "/mnt/user/NAS Flash drive backup" $destination
    #Backup Nextcloud share via rsync
    echo 'Making Backup of Nextcloud'
    rsync -avr --delete "/mnt/user/NCloud" $destination
    #Start Docker Containers
    echo 'Starting up Docker Containers'
    /etc/rc.d/rc.docker start
    ########################################################################
    #Backup of the other stuff:
    #Backup Game Backup share via rsync
    echo 'Making Backup Game Backup share'
    rsync -avr --delete "/mnt/user/Game Backups" $destination
    #Backup Photos and Videos share via rsync
    echo 'Making Backup of Photos and Videos share'
    rsync -avr --delete "/mnt/user/Photos and Videos" $destination
    ########################################################################
    #Umount destination
    echo 'Unmounting and spinning down Disk....'
    /usr/local/sbin/rc.unassigned umount $drive
    /usr/local/sbin/rc.unassigned spindown $drive
    #Notify about backup complete
    /usr/local/emhttp/webGui/scripts/notify -e "Unraid Server Notice" -s "Backup to Unassigned Disk" -d "A copy of important data has been backed up" -i "normal"
    echo 'Backup Complete'

I am not sure whether I should use echo or logger. Also, in a test script I didn't manage to get the 2>&1 >> $LOGFILE that I see some people put at the end of rsync commands to do anything. What does it do, and should I try to get it to work?
Some do >> var/log/[something].log (together with some date command). What do they do? For reference, I set the drive to automount; then I want to start the script manually, but when it's done running I want to be able to just unplug the drive without having to press anything more (and then take it to another location until it's time to update it), i.e. an offline backup.
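The "date plus >> /var/log/..." pattern is usually a small logging helper. A minimal sketch: echo only prints to the script's output, logger sends a line to the system log, and appending to a file keeps a history across runs. The log file path /var/log/offline-backup.log and the tag offline-backup are hypothetical names.

```shell
#!/bin/bash
# Sketch of a timestamped log helper for the backup script.
# The log file path and syslog tag below are hypothetical.
LOGFILE="${LOGFILE:-/var/log/offline-backup.log}"

# log MESSAGE...: print a timestamped line, append it to $LOGFILE,
# and also send the message to the system log with logger.
log() {
  local line
  line="$(date '+%Y-%m-%d %H:%M:%S') $*"
  printf '%s\n' "$line" | tee -a "$LOGFILE"
  # Errors from logger are ignored so the helper still works without syslog.
  logger -t offline-backup -- "$*" 2>/dev/null || true
}
```

In the backup script, log 'Making Backup of Appdata' would replace the plain echo, and rsync -avr --delete "/mnt/user/appdata" "$destination" >> "$LOGFILE" 2>&1 would send rsync's own output and errors to the same file.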

-

This is my current script; I haven't tested the whole script at once yet:

    #!/bin/bash
    #Destination of the backup:
    destination="/mnt/disks/OfflineBackup"
    #Name of Backup disk
    drive=dev1
    ########################################################################
    #Backup with docker containers stopped:
    #Stop Docker Containers
    echo 'Stopping Docker Containers'
    docker stop $(docker ps -a -q)
    #Backup Docker Appdata via rsync
    echo 'Making Backup of Appdata'
    rsync -avr --delete "/mnt/user/appdata" $destination
    #Backup Flash drive backup share via rsync
    echo 'Making Backup of Flash drive'
    rsync -avr --delete "/mnt/user/NAS Flash drive backup" $destination
    #Backup Nextcloud share via rsync
    echo 'Making Backup of Nextcloud'
    rsync -avr --delete "/mnt/user/NCloud" $destination
    #Start Docker Containers
    echo 'Starting up Docker Containers'
    /etc/rc.d/rc.docker start
    ########################################################################
    #Backup of the other stuff:
    #Backup Game Backup share via rsync
    echo 'Making Backup Game Backup share'
    rsync -avr --delete "/mnt/user/Game Backups" $destination
    #Backup Photos and Videos share via rsync
    echo 'Making Backup of Photos and Videos share'
    rsync -avr --delete "/mnt/user/Photos and Videos" $destination
    ########################################################################
    #Umount destination
    echo 'Unmounting and spinning down Disk....'
    /usr/local/sbin/rc.unassigned umount $drive
    /usr/local/sbin/rc.unassigned spindown $drive
    #Notify about backup complete
    /usr/local/emhttp/webGui/scripts/notify -e "Unraid Server Notice" -s "Backup to Unassigned Disk" -d "A copy of important data has been backed up" -i "normal"
    echo 'Backup Complete'

I am not sure whether I should use echo or logger. Also, in a test script I didn't manage to get the 2>&1 >> $LOGFILE that I see some people put at the end of rsync commands to do anything. What does it do, and should I try to get it to work?
For reference, I set the drive to automount; then I want to start the script manually, but when it's done running I want to be able to just unplug the drive without having to press anything more (and then take it to another location until it's time to update it), i.e. an offline backup.
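Since the script runs rsync with --delete into /mnt/disks/OfflineBackup, one safeguard worth considering is to refuse to run unless that path really is a mounted disk; otherwise rsync would copy into a directory that is not on the backup drive. A minimal sketch using mountpoint from util-linux; the function name require_mounted is hypothetical.

```shell
#!/bin/bash
# Sketch: only continue if the backup destination is actually mounted.
# If the Unassigned Devices disk is not mounted, the destination path is
# not on the backup disk, and rsync --delete would write to the wrong place.

# require_mounted DIR (hypothetical helper): succeed only if DIR is a mount point.
require_mounted() {
  local dir="$1"
  if ! mountpoint -q "$dir"; then
    echo "ERROR: $dir is not a mount point, aborting backup" >&2
    return 1
  fi
}
```

Placed right after the destination= line, require_mounted "$destination" || exit 1 would stop the script before any containers are stopped or any files are copied.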

-

I have seen some scripts use "echo" and "logger", and behind some commands they write 2>&1 >> [some address to a log file], plus a date command. What do they do? Is there any website I haven't found yet that explains it? (I might be searching for the wrong things.)
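In bash, redirections are applied left to right, so the order of 2>&1 and >> file matters: 2>&1 points stderr at wherever stdout points at that moment. The sketch below shows both orders; the function names are made up for the demonstration, and stamp shows the kind of date line people add to separate runs in a log.

```shell
#!/bin/bash
# Demonstration of redirection order; all function names are hypothetical.

# noisy: writes one line to stdout and one line to stderr.
noisy() {
  echo "to stdout"
  echo "to stderr" >&2
}

# "2>&1 >> file": stderr is pointed at the *current* stdout (e.g. the terminal)
# first, then only stdout is redirected to the file.
log_wrong() { noisy 2>&1 >> "$1"; }

# ">> file 2>&1": stdout goes to the file, then stderr follows it there.
log_right() { noisy >> "$1" 2>&1; }

# stamp: a timestamp line built with the date command.
stamp() { echo "=== $(date '+%Y-%m-%d %H:%M:%S') ==="; }
```

With log_wrong only "to stdout" ends up in the file, which is probably why 2>&1 >> $LOGFILE seemed to do nothing for rsync's error messages; log_right captures both lines. A typical pattern is stamp >> "$LOGFILE" before each run, followed by commands ending in >> "$LOGFILE" 2>&1.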

-

So, if I used:

    rsync -avr --delete /mnt/cache/Ncloud

it would only copy the data on the cache; if I used:

    rsync -avr --delete /mnt/disk1/Ncloud

it would only copy the data on that array disk; but if I use:

    rsync -avr --delete /mnt/user/Ncloud

it will copy everything, no matter where it's located? (Same with appdata.)
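On Unraid, /mnt/user/<share> is the merged view of a share across the cache pool and the array disks, while /mnt/cache/<share> and /mnt/diskN/<share> each show only one device's part, which matches the reading above. A small sketch to list which devices currently hold part of a share, assuming the standard /mnt/cache and /mnt/diskN layout (the root is a parameter so the function can be tried on a test directory; the function name is hypothetical):

```shell
#!/bin/bash
# share_locations SHARE [ROOT] (hypothetical helper): print every device
# directory under ROOT that holds part of SHARE.
# Assumption: standard Unraid layout with /mnt/cache and /mnt/disk1, /mnt/disk2, ...
share_locations() {
  local share="$1" root="${2:-/mnt}" dev
  # disk[0-9]* matches disk1, disk2, ... but not /mnt/disks (Unassigned Devices).
  for dev in "$root"/cache "$root"/disk[0-9]*; do
    if [[ -d "$dev/$share" ]]; then
      printf '%s\n' "$dev/$share"
    fi
  done
}
```

Running share_locations Ncloud on the server would show whether files currently sit on the cache, the array, or both; rsync from /mnt/user/Ncloud picks them all up either way.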

-

Would it work fine if you just back up the Nextcloud share and the appdata share for Nextcloud? Do you need to turn off Nextcloud while it runs?

-

How would I go about backing up the Nextcloud database in a script like this, where part of the data could be on the cache? Would just selecting the whole share work? Would Nextcloud have to be stopped?
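One common approach is to put Nextcloud into maintenance mode, dump the database to a file, copy the data and appdata through /mnt/user (so files on both cache and array are included), and then turn maintenance mode off again. This is an untested sketch where everything specific is an assumption: the container names nextcloud and mariadb, the database name, user and password, an occ wrapper being available in the Nextcloud container, and mariadb-dump being available in the MariaDB container.

```shell
#!/bin/bash
# Sketch: back up Nextcloud and its database with Nextcloud in maintenance mode.
# Every name below is an assumption (container names, DB name/user/password,
# "occ" inside the Nextcloud container, mariadb-dump inside the MariaDB container).
DOCKER="${DOCKER:-docker}"
NC_CONTAINER="${NC_CONTAINER:-nextcloud}"
DB_CONTAINER="${DB_CONTAINER:-mariadb}"
DB_NAME="${DB_NAME:-nextcloud}"
DB_USER="${DB_USER:-nextcloud}"
DB_PASS="${DB_PASS:-changeme}"   # hypothetical placeholder, not a real password

# maintenance on|off: toggle Nextcloud maintenance mode via occ.
maintenance() {
  "$DOCKER" exec "$NC_CONTAINER" occ maintenance:mode "--$1"
}

# dump_db FILE: write an SQL dump of the Nextcloud database to FILE on the host.
dump_db() {
  "$DOCKER" exec "$DB_CONTAINER" \
    mariadb-dump --single-transaction -u "$DB_USER" -p"$DB_PASS" "$DB_NAME" > "$1"
}

# backup_nextcloud DEST: maintenance on, dump the DB, copy files, maintenance off.
# Sources go through /mnt/user so cache and array files are both included
# (the appdata/nextcloud path is an assumption).
backup_nextcloud() {
  local dest="$1" rc=0
  maintenance on || return 1
  dump_db "$dest/nextcloud-db.sql" || rc=1
  rsync -a --delete /mnt/user/NCloud /mnt/user/appdata/nextcloud "$dest" || rc=1
  # Always leave maintenance mode, even if a step above failed.
  maintenance off || rc=1
  return "$rc"
}
```

The alternative used in the earlier script, stopping all containers before copying, also gives a consistent copy of the MariaDB appdata folder, but then the database cannot be dumped with docker exec; maintenance mode keeps the database container running so a dump file can be produced.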